Simple and effective interaction between humans and four-legged robots paves the way toward capable, intelligent assistant robots, pointing to a future where technology improves our lives in ways beyond our imagination. For such human-robot interaction systems, the key is giving the quadruped robot the ability to respond to natural language commands.

Large language models (LLMs) have developed rapidly and have shown the potential to perform high-level planning. However, it is still difficult for an LLM to understand low-level commands, such as joint angle targets or motor torques, especially for legged robots, which are inherently unstable and require high-frequency control signals. As a result, most existing work assumes that the LLM is provided with a high-level API that determines the robot's behavior, which fundamentally limits the system's expressiveness.

In the CoRL 2023 paper "SayTap: Language to Quadrupedal Locomotion", Google DeepMind and the University of Tokyo proposed a new method that uses foot contact patterns as a bridge between human natural language instructions and a motion controller that outputs low-level commands.

- Paper address: https://arxiv.org/abs/2306.07580

- Project website: https://saytap.github.io/

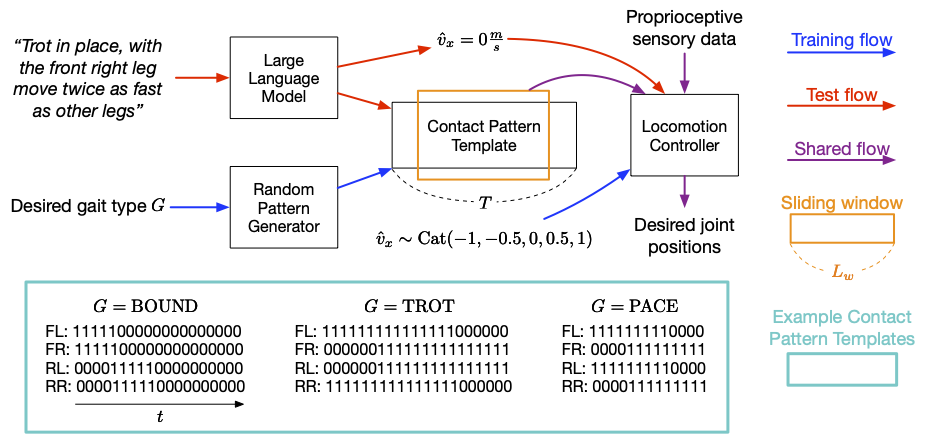

Foot contact patterns are the sequence and manner in which a quadrupedal agent places its feet on the ground as it moves. Building on this idea, the researchers developed an interactive quadruped robot system that lets users flexibly craft different locomotion behaviors; for example, users can use simple language to command the robot to walk, run, jump, or perform other actions. Their contributions include an LLM prompt design, a reward function, and a method that lets the SayTap controller train on a feasible distribution of contact patterns. The study shows that the SayTap controller can realize multiple locomotion modes and that these capabilities transfer to real robot hardware.

The SayTap method uses a contact pattern template: a 4 × T matrix of 0s and 1s, where 0 means a foot is in the air and 1 means it is on the ground. From top to bottom, the rows of the matrix give the foot contact patterns of the front-left (FL), front-right (FR), rear-left (RL), and rear-right (RR) feet. SayTap's control frequency is 50 Hz, so each 0 or 1 lasts 0.02 seconds. The study defines the desired foot contact pattern as a cyclic sliding window of size L_w, that is, of shape 4 × L_w. The sliding window extracts the four feet's ground-contact flags from the contact pattern template, indicating whether each foot should be on the ground or in the air between time steps t + 1 and t + L_w. The figure below gives an overview of the SayTap method.
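To make the template and sliding-window idea concrete, here is a minimal sketch, not the authors' code. It assumes the template is stored as a NumPy `int8` array with rows ordered FL, FR, RL, RR, and that template column k corresponds to control step k modulo T; the function names `make_template`, `cycle_seconds`, and `desired_window` are hypothetical.

```python
import numpy as np

CONTROL_HZ = 50                   # 50 Hz control: each 0/1 entry lasts 1/50 = 0.02 s
FEET = ("FL", "FR", "RL", "RR")   # row order of the template, top to bottom


def make_template(rows: dict[str, str]) -> np.ndarray:
    """Build a 4 x T contact template: 1 = foot on the ground, 0 = foot in the air."""
    lengths = {len(rows[foot]) for foot in FEET}
    if len(lengths) != 1:
        raise ValueError("all four rows must have the same length T")
    return np.array([[int(c) for c in rows[foot]] for foot in FEET], dtype=np.int8)


def cycle_seconds(template: np.ndarray) -> float:
    """Duration of one pattern cycle (T steps at CONTROL_HZ)."""
    return template.shape[1] / CONTROL_HZ


def desired_window(template: np.ndarray, t: int, window: int) -> np.ndarray:
    """Cyclic sliding window of shape (4, L_w) covering steps t+1 .. t+L_w.

    Assumes template column k corresponds to time step k modulo T.
    """
    period = template.shape[1]
    cols = (t + 1 + np.arange(window)) % period  # wrap around the cycle
    return template[:, cols]
```

For example, a trot-like template with T = 10 (FL and RR on the ground for the first half of the cycle, FR and RL for the second half) lasts 0.2 s per cycle, and a window taken near the end of the cycle wraps back to its start. The modulo indexing is what makes the window "cyclic".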

## SayTap introduction

The desired foot contact patterns serve as a new interface between natural language user commands and the motion controller. The motion controller completes the main task (such as following a specified velocity) while placing the robot's feet on the ground at the specified times, so that the achieved foot contact pattern is as close as possible to the desired one.
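The controller's objective, tracking a velocity while matching the desired contacts, can be illustrated with a hypothetical per-step score. This is not SayTap's reward function (the article does not give its terms); the exponential velocity term, the weight `w_contact`, and the names `contact_match` and `step_reward` are all assumptions chosen only to show how the two goals can be combined.

```python
import numpy as np


def contact_match(desired: np.ndarray, achieved: np.ndarray) -> float:
    """Fraction of entries where the achieved contact state equals the desired one."""
    desired = np.asarray(desired, dtype=bool)
    achieved = np.asarray(achieved, dtype=bool)
    if desired.shape != achieved.shape:
        raise ValueError("desired and achieved patterns must have the same shape")
    return float(np.mean(desired == achieved))


def step_reward(v_cmd, v_actual, desired, achieved, w_contact: float = 0.5) -> float:
    """Hypothetical per-step reward: velocity tracking plus weighted contact matching.

    Not SayTap's actual reward; the weights and functional form are illustrative.
    """
    vel_err = float(np.sum((np.asarray(v_cmd, float) - np.asarray(v_actual, float)) ** 2))
    tracking = float(np.exp(-vel_err))  # equals 1.0 when the velocity is tracked exactly
    return tracking + w_contact * contact_match(desired, achieved)
```

With perfect velocity tracking and all four feet matching, this sketch returns 1.0 + 0.5 = 1.5; any mismatch in either term lowers the score.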

At each time step, the motion controller takes as input the desired foot contact pattern, proprioceptive data (such as joint positions and velocities), and task-related inputs (such as user-specified velocity commands). DeepMind trained the motion controller with reinforcement learning and represented it as a deep neural network. During training, the researchers used a random generator to sample desired foot contact patterns and then optimized the policy to output low-level robot actions that achieve those patterns. At test time, an LLM translates user commands into foot contact patterns.
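The random pattern generator used during training can be sketched as follows. Per the paper, templates vary in pattern length T and in the within-cycle foot-ground contact ratio for a given gait type G; everything else here is an assumption: the gait set, the per-foot phase offsets in `GAIT_PHASES`, the sampling ranges, and the names `gait_template` and `sample_template` are illustrative, not the authors' generator.

```python
import numpy as np

# Hypothetical phase offsets (fraction of one cycle) for FL, FR, RL, RR.
GAIT_PHASES = {
    "trot":  (0.0, 0.5, 0.5, 0.0),   # diagonal pairs move together
    "pace":  (0.0, 0.5, 0.0, 0.5),   # lateral pairs move together
    "bound": (0.0, 0.0, 0.5, 0.5),   # front pair, then rear pair
    "pronk": (0.0, 0.0, 0.0, 0.0),   # all four feet together (jumping)
}


def gait_template(gait: str, period: int, contact_ratio: float) -> np.ndarray:
    """4 x T template in which each foot is on the ground for contact_ratio of the cycle."""
    stance = min(max(int(round(contact_ratio * period)), 0), period)
    template = np.zeros((4, period), dtype=np.int8)
    for foot, phase in enumerate(GAIT_PHASES[gait]):
        start = int(round(phase * period))
        cols = (start + np.arange(stance)) % period  # stance steps, wrapping cyclically
        template[foot, cols] = 1
    return template


def sample_template(rng: np.random.Generator, period_range=(10, 30), ratio_range=(0.3, 0.7)):
    """Randomly pick a gait type G, a pattern length T, and a contact ratio."""
    gait = str(rng.choice(sorted(GAIT_PHASES)))
    period = int(rng.integers(period_range[0], period_range[1] + 1))  # inclusive upper bound
    ratio = float(rng.uniform(*ratio_range))
    return gait, gait_template(gait, period, ratio)
```

Sampling a fresh template per training episode, e.g. `sample_template(np.random.default_rng(0))`, exposes the policy to many gaits, cycle lengths, and duty ratios rather than a single fixed gait.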

SayTap uses foot contact patterns as a bridge between natural language user commands and low-level control commands. It supports both simple, direct instructions (such as "Slowly jog forward") and vague user commands (such as "Good news, we are going to have a picnic this weekend!"). Through the reinforcement learning-based motion controller, the quadruped robot reacts according to the commands.


The research shows that, with a properly designed prompt, the LLM can accurately map user commands into foot contact pattern templates in the specified format, even when the commands are unstructured or ambiguous. During training, the researchers used a random pattern generator to produce contact pattern templates that vary in pattern length T and in the foot-ground contact ratio within a cycle for a given gait type G. This lets the motion controller learn on a wide distribution of locomotion patterns and generalize better. Please refer to the paper for more details.

Using a simple prompt containing only three in-context examples of common foot contact patterns, the LLM can accurately translate a variety of human commands into contact patterns, and can even generalize to cases where the command does not explicitly specify how the robot should behave. The SayTap prompt is concise and compact, with four components: (1) a general description of the task the LLM should complete; (2) a gait definition, which reminds the LLM of basic knowledge about quadrupedal gaits and how they relate to emotions; (3) an output format definition; and (4) demonstration examples that let the LLM learn in context. The researchers also specified five velocities so that the robot can move forward or backward, fast or slow, or stay still.

## Follow simple and direct commands

The animation below shows examples of SayTap successfully executing direct, clear commands. Although some of these commands are not covered by the three in-context examples, the LLM can still be guided to express the internal knowledge it acquired during pre-training. This relies on the gait definition module, the second of the four prompt components described above.
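The four-part prompt described above, and the step of turning the LLM's reply into a contact template, can be sketched as follows. The article does not reproduce the prompt text or the exact output format, so this sketch assumes the reply contains one line per foot such as `FL: 1111100000`; the `Input:`/`Output:` framing, the regex, and the names `build_prompt` and `parse_pattern` are hypothetical.

```python
import re

import numpy as np

FEET = ("FL", "FR", "RL", "RR")
# Assumed output format: one line per foot, e.g. "FL: 1111100000".
LINE = re.compile(r"^\s*(FL|FR|RL|RR)\s*:\s*([01]+)\s*$")


def build_prompt(general: str, gait_defs: str, output_format: str,
                 examples: list[tuple[str, str]], command: str) -> str:
    """Join the four prompt components (description, gaits, format, examples), then the command."""
    shots = "\n\n".join(f"Input: {cmd}\nOutput:\n{pattern}" for cmd, pattern in examples)
    return "\n\n".join([general, gait_defs, output_format, shots, f"Input: {command}\nOutput:"])


def parse_pattern(response: str) -> np.ndarray:
    """Extract a 4 x T contact template from the LLM reply, ignoring any other lines."""
    rows = {}
    for line in response.splitlines():
        match = LINE.match(line)
        if match:
            rows[match.group(1)] = [int(c) for c in match.group(2)]
    missing = [foot for foot in FEET if foot not in rows]
    if missing:
        raise ValueError(f"missing rows for {missing}")
    if len({len(rows[foot]) for foot in FEET}) != 1:
        raise ValueError("all four rows must have the same length T")
    return np.array([rows[foot] for foot in FEET], dtype=np.int8)
```

Validating the reply before handing it to the controller matters because the LLM output is free text: a missing foot or rows of unequal length should be rejected rather than executed.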

## Follow unstructured or vague commands

Even more interesting is SayTap's ability to handle unstructured and ambiguous instructions. With only a small hint in the prompt linking certain gaits to general emotional impressions, the robot jumps up and down after hearing something exciting (such as "Let's go on a picnic!"). It can also represent scenes accurately: for example, when told that the ground is very hot, the robot moves quickly so that its feet touch the ground as little as possible.

## Conclusion

SayTap is an interactive system for quadruped robots that lets users flexibly craft different locomotion behaviors. It introduces desired foot contact patterns as an interface between natural language and the low-level controller. This interface is both straightforward and flexible, allowing the robot to follow direct instructions as well as commands that do not explicitly state how it should behave.

The DeepMind researchers said that one major future research direction is to test whether commands that imply specific feelings can lead the LLM to output the desired gait. In the gait definition module used for the results above, the researchers provided a single sentence linking a happy mood to a jumping gait. Providing more information might strengthen the LLM's ability to interpret commands, for example by decoding implicit feelings. In the experimental evaluation, this link between a happy mood and a jumping gait let the robot behave energetically while following vague human instructions. Another interesting direction is introducing multimodal inputs such as video and audio. In principle, foot contact patterns translated from these signals would also fit the proposed workflow and could open up more interesting use cases.

Original link: https://blog.research.google/2023/08/saytap-language-to-quadrupedal.html
