Object navigation is one of the basic tasks of intelligent robots. In this task, a robot actively explores an unknown environment to find an object of a category specified by a human. The task is motivated by the needs of future home service robots: when a person asks the robot to fetch a glass of water, for example, the robot must first find the cup and move to its location before it can bring the cup back.

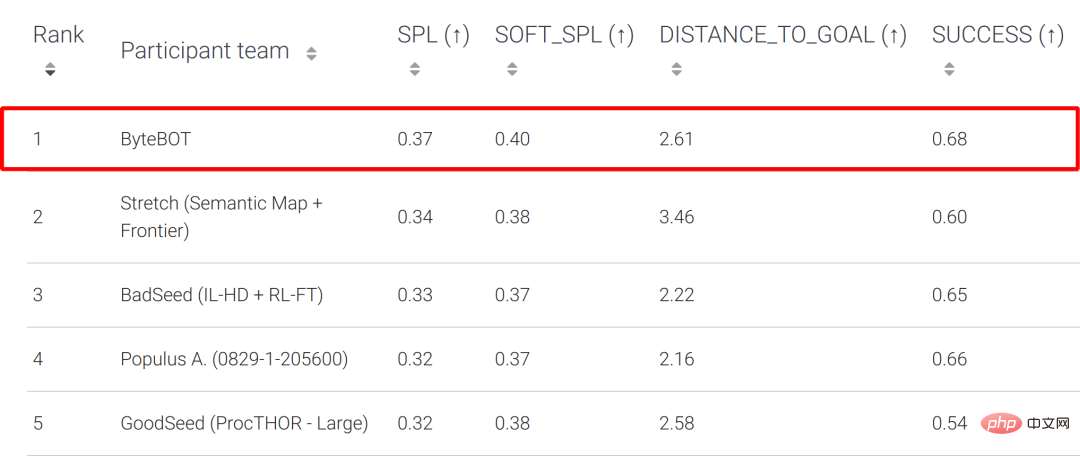

The Habitat Challenge, jointly organized by Meta AI and other institutions, is one of the best-known competitions in the field of object navigation. As of 2022, it had been held for four consecutive years. A total of 54 teams participated in this competition. In the competition, researchers from the ByteDance AI Lab-Research team proposed a new object navigation framework that addresses shortcomings of existing methods. The framework combines imitation learning with traditional methods and won the championship, significantly outperforming the second-place and other participating teams on the key metric, SPL (Success weighted by Path Length). Historically, the champion teams of this event have generally been well-known research institutions such as CMU, UC Berkeley, and Facebook.

Test-Standard List

Test-Challenge List

Habitat Challenge official website: https://aihabitat.org/challenge/2022/

Habitat Challenge leaderboard: https://eval.ai/web/challenges/challenge-page/1615/leaderboard

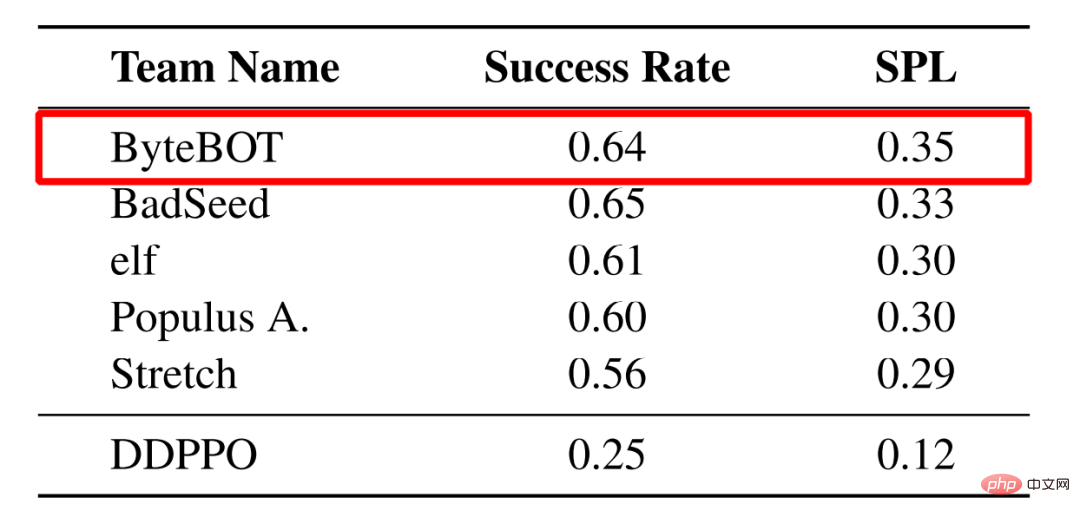

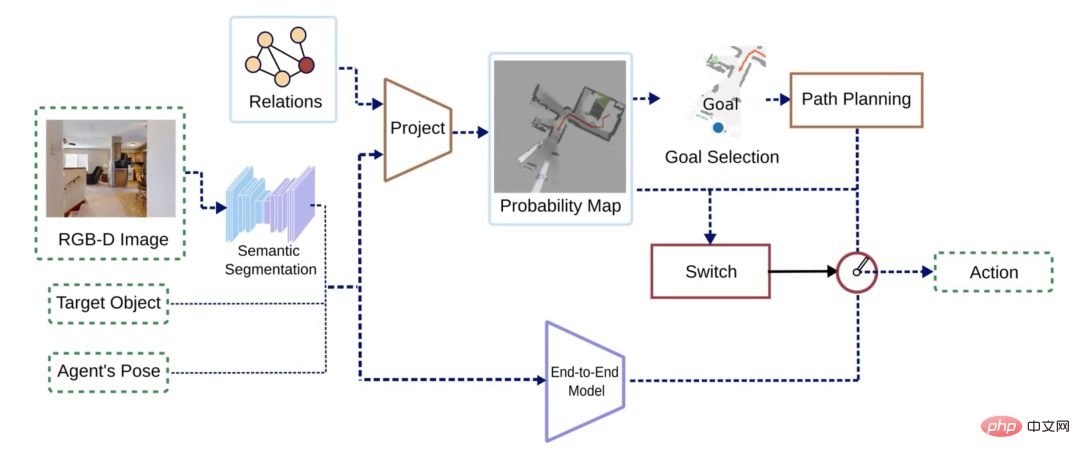

Current object navigation methods can be roughly divided into two categories: end-to-end methods and map-based methods. End-to-end methods extract features from the input sensor data and feed them into a deep learning model that outputs the action; they are generally trained with reinforcement learning or imitation learning (Figure 1, map-less methods). Map-based methods generally build an explicit or implicit map, select a target point on the map through reinforcement learning or other methods, and finally plan a path and derive the action (Figure 1, map-based methods).

Figure 1 Flow chart of end-to-end method (top) and map-based method (bottom)

After extensive experiments comparing the two types of methods, the researchers found that each has its own advantages and disadvantages. End-to-end methods do not need to build a map of the environment, so they are simpler and generalize better across scenes. However, because the network has to learn to encode the spatial information of the environment, they rely on a large amount of training data, and they struggle to learn some simple behaviors, such as stopping near the target object. Map-based methods store features or semantics in a grid and therefore carry explicit spatial information, which makes such behaviors easier to learn. However, they rely heavily on accurate localization, and in some environments, such as stairs, the perception and path planning strategies must be designed by hand.

Based on these findings, researchers from the ByteDance AI Lab-Research team set out to combine the advantages of the two types of methods. Their pipelines are very different, however, which makes them hard to merge directly, and it is also difficult to design a strategy that fuses their outputs. The researchers therefore designed a simple but effective strategy in which the two types of methods alternate between active exploration and object search according to the state of the robot, so that each is used where it is strongest.

The algorithm mainly consists of two branches: a probability map-based branch and an end-to-end branch. Its inputs are the first-person RGB-D image, the robot pose, and the category of the target object to be found; its output is the next action. The RGB image first goes through instance segmentation, and the result is passed to both branches together with the other raw inputs. Each branch outputs its own action, and a switching strategy decides which one becomes the final output.

Figure 2 Schematic diagram of algorithm flow

Probability map-based branch

The probability map-based branch draws on the idea of semantic linking maps [2] and simplifies the method from the authors' earlier paper published at IROS [3]. On the one hand, this branch builds a 2D semantic map from the instance segmentation results, the depth map, and the robot pose; on the other hand, it updates a probability map based on pre-learned association probabilities between objects.
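To make the map-building step more concrete, here is a minimal sketch of projecting labelled depth pixels onto a top-down grid. It is not the team's implementation: the pinhole camera model, the planar `(x, y, yaw)` pose, the function name `project_to_grid`, and all parameters are assumptions chosen for illustration, and the height of each point is ignored.

```python
import math


def project_to_grid(depth, labels, pose, fx, cx, cell_size, grid_size):
    """Project labelled depth pixels onto a top-down semantic grid.

    depth, labels: 2D lists of the same shape (depth in metres, class id or None).
    pose: (x, y, yaw) of the robot in the world frame, yaw in radians.
    fx, cx: assumed pinhole intrinsics (focal length and principal point, pixels).
    Returns a dict mapping (row, col) cells to the set of class ids seen there.
    """
    x_r, y_r, yaw = pose
    semantic_map = {}
    for v, row in enumerate(depth):
        for u, d in enumerate(row):
            label = labels[v][u]
            if label is None or d <= 0:
                continue  # skip unlabelled pixels and invalid depth readings
            # Back-project: offset to the right of the optical axis, distance ahead.
            lateral = (u - cx) * d / fx
            forward = d
            # Rotate into the world frame; the camera looks along the robot heading.
            wx = x_r + forward * math.cos(yaw) + lateral * math.sin(yaw)
            wy = y_r + forward * math.sin(yaw) - lateral * math.cos(yaw)
            # Bin into grid cells, with the world origin at the grid centre.
            col = int(wx // cell_size) + grid_size // 2
            r = int(wy // cell_size) + grid_size // 2
            if 0 <= r < grid_size and 0 <= col < grid_size:
                semantic_map.setdefault((r, col), set()).add(label)
    return semantic_map
```

A real system would also filter points by height and fuse observations over time; the sketch only shows how segmentation, depth, and pose together determine which cell a detected object lands in.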

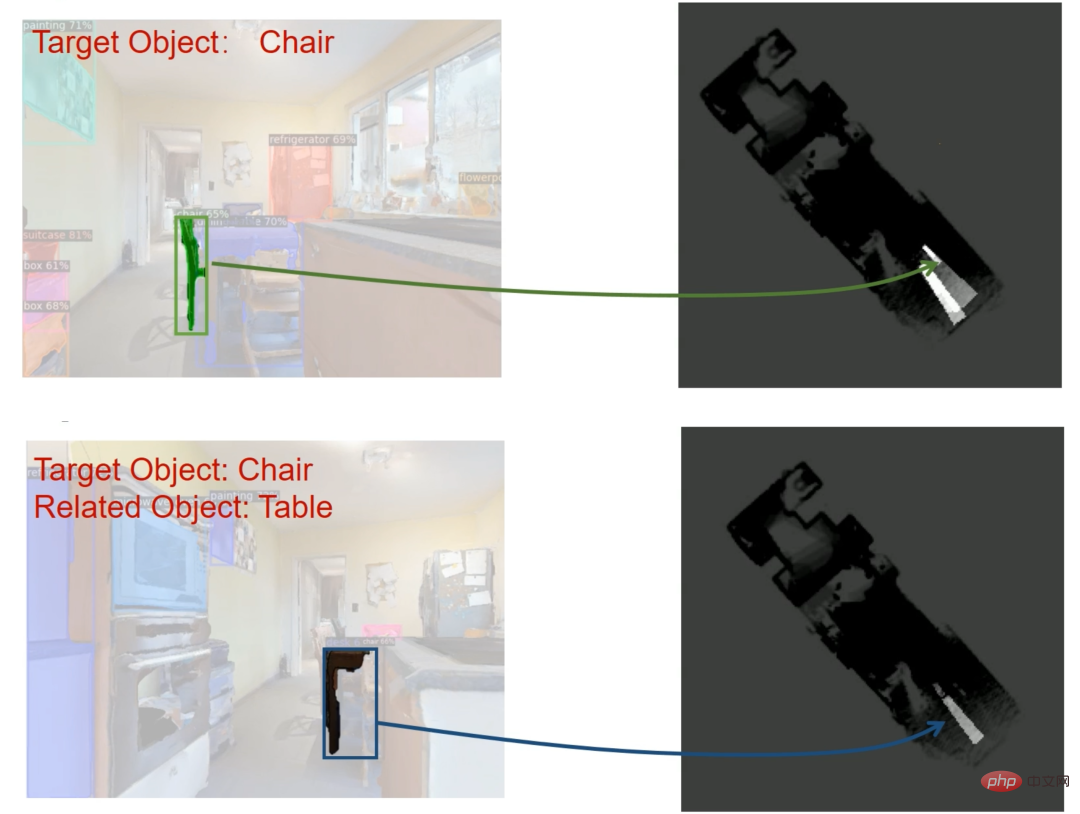

The probability map is updated in the following ways. When the target object is detected but with insufficient confidence (the confidence score is below the threshold), the robot should move closer and keep observing, so the probability value of the corresponding area on the map is increased (Figure 3, top). Similarly, if objects related to the target object are detected (for example, tables and chairs are relatively likely to be placed together), the probability value of the corresponding area also increases (Figure 3, bottom). By selecting the area with the highest probability as the target point, the algorithm encourages the robot to approach potential target objects and related objects for further observation, until it finds a target object whose confidence is higher than the threshold.

Figure 3 Schematic diagram of probability map update method
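The update rules described above can be sketched in a few lines. This is only an illustration under stated assumptions: the additive update, the `boost` factor, the threshold value, and the names `update_probability_map`, `select_goal`, and `co_occurrence` are hypothetical and do not come from the team's code.

```python
def update_probability_map(prob_map, detections, target, co_occurrence,
                           conf_threshold=0.8, boost=0.3):
    """Raise cell probabilities for uncertain target sightings and related objects.

    prob_map: dict mapping (row, col) -> probability in [0, 1], updated in place.
    detections: list of (cell, category, confidence) tuples.
    co_occurrence: dict mapping category -> assumed prior of appearing near the target.
    Returns the cell of a confident target detection, or None.
    """
    for cell, category, confidence in detections:
        if category == target:
            if confidence >= conf_threshold:
                return cell  # confident detection: the robot can go there and stop
            gain = boost * confidence  # uncertain sighting: worth a closer look
        else:
            gain = boost * co_occurrence.get(category, 0.0)  # related object nearby
        prob_map[cell] = min(1.0, prob_map.get(cell, 0.0) + gain)
    return None


def select_goal(prob_map):
    """Pick the cell with the highest probability as the next target point."""
    if not prob_map:
        return None
    return max(prob_map, key=prob_map.get)


# Example: a low-confidence chair and a table that often appears near chairs.
prob_map = {}
found = update_probability_map(
    prob_map,
    detections=[((1, 1), "chair", 0.5), ((2, 2), "table", 0.9)],
    target="chair",
    co_occurrence={"table": 0.8},
)
goal = select_goal(prob_map)
```

Here the table cell ends up with the higher probability because the assumed chair-table association (0.8) outweighs the weak chair detection (0.5), so the robot would head toward the table first.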

End-to-end branch

The inputs of the end-to-end branch are the RGB-D image, the instance segmentation result, the robot pose, and the target object category; it outputs an action directly. The main role of this branch is to guide the robot to search for objects the way a human would, so it adopts the model and training procedure of Habitat-Web [4]. That method is based on imitation learning: the network is trained on collected demonstrations of humans looking for objects in the training set.

Switching strategy

The switching strategy uses the state of the probability map and the result of path planning to select one of the two actions, from the probability map branch or the end-to-end branch, as the final output. When no grid cell in the probability map has a probability above the threshold, the robot needs to explore the environment; when no feasible path can be planned on the map, the robot may be in a special environment (such as stairs). In both cases the end-to-end branch is used, which gives the robot sufficient adaptability to the environment. In all other cases the probability map branch is selected, to make full use of its strength in finding target objects.
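As a rough illustration of this decision rule, the sketch below picks between the two candidate actions. The function name `choose_action`, the `plan_path` callable, the threshold value, and the action strings are hypothetical placeholders; the article does not specify the actual planner or thresholds.

```python
def choose_action(prob_map, plan_path, map_action, e2e_action, prob_threshold=0.5):
    """Select which branch's action to execute at this step.

    prob_map: dict mapping grid cell -> probability.
    plan_path: callable taking a goal cell and returning a path (list) or None.
    map_action, e2e_action: the actions proposed by the two branches.
    Returns a (branch_name, action) tuple.
    """
    candidates = {c: p for c, p in prob_map.items() if p > prob_threshold}
    if not candidates:
        # No cell looks promising: let the end-to-end branch explore.
        return "end_to_end", e2e_action
    goal = max(candidates, key=candidates.get)
    if plan_path(goal) is None:
        # No feasible path on the map (for example on stairs): fall back.
        return "end_to_end", e2e_action
    return "probability_map", map_action


# Example with a trivial planner that always finds a path.
branch, action = choose_action(
    {(4, 2): 0.9}, plan_path=lambda goal: [goal],
    map_action="MOVE_FORWARD", e2e_action="TURN_LEFT",
)
```

Keeping the rule this simple means neither branch has to know about the other: each proposes an action every step, and only the selector looks at the map state and the planner result.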

The effect of this switching strategy is shown in the video. The robot generally uses the end-to-end branch to explore the environment efficiently. Once a possible target object or a related object is discovered, it switches to the probability map branch for closer observation. If the confidence of the target object is greater than the threshold, the robot stops at the target object; otherwise, the probability value in that area keeps decreasing until no grid cell has a probability greater than the threshold, and the robot switches back to the end-to-end branch to continue exploring.

As can be seen from the video, this method combines the advantages of the end-to-end approach and the map-based approach, with each branch playing its own role. The end-to-end branch is mainly responsible for exploring the environment; the probability map branch is responsible for observing areas of interest up close. As a result, the method can explore complex scenes (such as stairs) while also reducing the training requirements of the end-to-end branch.

For the object navigation task, the ByteDance AI Lab-Research team proposed a framework that combines classic probability maps with modern imitation learning. The framework is a successful attempt to combine traditional methods with an end-to-end approach. In the Habitat competition, the method significantly outperformed the second-place and other participating teams, demonstrating the strength of the algorithm. Introducing traditional methods into the end-to-end methods that are currently mainstream in Embodied AI can make up for some of their shortcomings, helping intelligent robots go further on the road to helping and serving people.

Recently, research by the ByteDance AI Lab-Research team in the field of robotics has also been accepted at top robotics conferences such as CoRL, IROS, and ICRA, covering core robot tasks including object pose estimation, object grasping, target navigation, automatic assembly, and human-computer interaction.

[CoRL 2022] Generative Category-Level Shape and Pose Estimation with Semantic Primitives

[IROS 2022] 3D Part Assembly Generation with Instance Encoded Transformer

[IROS 2022] Navigating to Objects in Unseen Environments by Distance Prediction

[EMNLP 2022] Towards Unifying Reference Expression Generation and Comprehension

[ICRA 2022] Learning Design and Construction with Varying-Sized Materials via Prioritized Memory Resets

[IROS 2021] Simultaneous Semantic and Collision Learning for 6-DoF Grasp Pose Estimation

[IROS 2021] Learning to Design and Construct Bridge without Blueprint

[1] Yadav, Karmesh, et al. "Habitat-Matterport 3D Semantics Dataset." arXiv preprint arXiv:2210.05633 (2022).

[2] Zeng, Zhen, Adrian Röfer, and Odest Chadwicke Jenkins. "Semantic linking maps for active visual object search." 2020 IEEE International Conference on Robotics and Automation (ICRA). IEEE, 2020.

[3] Zhu, Minzhao, Binglei Zhao, and Tao Kong. "Navigating to Objects in Unseen Environments by Distance Prediction." arXiv preprint arXiv:2202.03735 (2022).

[4] Ramrakhya, Ram, et al. "Habitat-Web: Learning Embodied Object-Search Strategies from Human Demonstrations at Scale." Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition. 2022.

ByteDance AI Lab NLP&Research focuses on cutting-edge research in artificial intelligence, covering technical fields such as natural language processing and robotics. It is also committed to putting research results into practice, providing core technical support and services for the company's existing products and businesses. The team's technical capabilities are being opened to the outside world through Volcano Engine, empowering AI innovation.

