You have probably heard of the usual ways to speed up large file uploads. They come down to making each transfer smaller, either by compressing the file or by splitting it into chunks before uploading.

This article covers only the chunked approach. It uses a Vue 3 + Vite front end and a Node.js (Koa 2) server to implement a simple chunked upload of large files.

Working out the approach

Question 1: Who is responsible for resource chunking? Who is responsible for resource integration?

This one is simple: the front end does the chunking and the server does the merging.

Question 2: How does the front end divide resources into chunks?

First, the user selects a file, which gives you a File object, and File.prototype.slice can cut it into pieces. Some people call it Blob.prototype.slice instead. Both are correct: File inherits from Blob, so Blob.prototype.slice === File.prototype.slice.
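To make the slicing concrete, here is a minimal sketch that cuts any Blob, and therefore any File, into fixed-size pieces. The helper name `sliceIntoChunks` is hypothetical. The sketch runs in browsers and in Node.js 18+, where Blob is a global.

```javascript
// Hypothetical helper: split a Blob (or File) into fixed-size chunks.
// Works in browsers and in Node.js 18+, where Blob is a global.
function sliceIntoChunks(blob, chunkSize) {
  if (!(chunkSize > 0)) throw new RangeError('chunkSize must be positive');
  const chunks = [];
  for (let start = 0; start < blob.size; start += chunkSize) {
    // slice(start, end) is end-exclusive and clamps end to blob.size,
    // so the last chunk is simply shorter
    chunks.push(blob.slice(start, start + chunkSize));
  }
  return chunks;
}

const demo = new Blob(['a'.repeat(12)]);
console.log(sliceIntoChunks(demo, 5).map((c) => c.size)); // [ 5, 5, 2 ]
```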

Question 3: How does the server know when to merge the chunks, and how does it keep them in the right order?

The front end splits the file and sends each chunk in its own request. A file that used to need one upload request now needs n of them. The front end can wrap these requests in Promise.all. Once they have all completed, it sends a merge request telling the server to combine the chunks.
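This "upload everything, then merge" ordering can be sketched without any HTTP library. The functions `uploadChunk` and `requestMerge` are hypothetical, Promise-returning stand-ins for the real requests. Because Promise.all rejects on the first failed upload, the merge request is only sent when every chunk succeeded.

```javascript
// Sketch: one request per chunk, then a single merge request.
// uploadChunk(chunk, index) and requestMerge(count) are hypothetical
// functions that return Promises (e.g. wrappers around axios calls).
async function uploadAllThenMerge(chunks, uploadChunk, requestMerge) {
  // Promise.all rejects as soon as any chunk upload rejects,
  // so requestMerge is reached only when all chunks are on the server
  await Promise.all(chunks.map((chunk, index) => uploadChunk(chunk, index)));
  return requestMerge(chunks.length);
}
```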

For the merge, Node.js read and write streams (readStream/writeStream) can pipe each chunk's data into the stream of the final file.

When sending the chunks, the front end assigns each one a sequence number. It sends the chunk together with its sequence number, the file hash, and other information. When merging, the server orders the chunks by sequence number and combines them one by one.
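Ordering by sequence number needs a numeric sort. A directory listing is not guaranteed to come back in index order, and a plain string sort would put "h-10" before "h-2". The sketch below assumes chunk files are named `${fileHash}-${index}`, which is the naming the server code later in this article uses. The helper name `sortChunkNames` is hypothetical.

```javascript
// Hypothetical helper: order chunk file names `${fileHash}-${index}`
// by their numeric index rather than lexicographically.
function sortChunkNames(names) {
  const indexOf = (name) => Number(name.slice(name.lastIndexOf('-') + 1));
  // copy first so the caller's array is not mutated
  return [...names].sort((a, b) => indexOf(a) - indexOf(b));
}

console.log(sortChunkNames(['h-10', 'h-2', 'h-1'])); // [ 'h-1', 'h-2', 'h-10' ]
```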

Question 4: What if the upload request for a chunk fails?

If a chunk upload fails on the server, the server returns information about the failed chunk, such as the file name, file hash, and chunk sequence number. With that information the front end can retransmit the chunk later. At that point it is worth considering Promise.allSettled instead of Promise.all, because it reports the outcome of every request rather than rejecting on the first failure.
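Here is a minimal sketch of the Promise.allSettled idea. It uploads all chunks, keeps the indices whose requests were rejected, and retries only those. `uploadChunk(index)` is a hypothetical Promise-returning function. The retry limit `maxRetries` is an assumption, not something the article specifies.

```javascript
// Sketch: upload with Promise.allSettled and retry only the failed chunks.
// uploadChunk(index) is hypothetical; maxRetries is an assumed limit.
async function uploadWithRetry(indices, uploadChunk, maxRetries = 3) {
  let pending = indices;
  // one initial round plus up to maxRetries retry rounds
  for (let attempt = 0; attempt <= maxRetries && pending.length > 0; attempt++) {
    const results = await Promise.allSettled(pending.map((i) => uploadChunk(i)));
    // keep only the indices whose upload was rejected
    pending = pending.filter((_, k) => results[k].status === 'rejected');
  }
  return pending; // indices that still failed after all retries
}
```

An empty result means every chunk made it, and the merge request can be sent.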

Front-end part

Create project

Create the project with pnpm create vite; the resulting directory structure is as follows.

Request module

src/request.js

This file is a thin wrapper around axios:

```javascript
import axios from "axios";

const baseURL = 'http://localhost:3001';

export const uploadFile = (url, formData, onUploadProgress = () => { }) => {
  return axios({
    method: 'post',
    url,
    baseURL,
    headers: {
      'Content-Type': 'multipart/form-data'
    },
    data: formData,
    onUploadProgress
  });
}

export const mergeChunks = (url, data) => {
  return axios({
    method: 'post',
    url,
    baseURL,
    headers: {
      'Content-Type': 'application/json'
    },
    data
  });
}
```

File resource chunking

The file is split into chunks of DefualtChunkSize = 5 * 1024 * 1024 bytes (5 MB), and spark-md5[1] computes a hash from the file's contents. The hash enables further optimizations. For example, if a file's hash has not changed, the server does not need to read and write that file again.
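spark-md5 hashes the file incrementally, one chunk at a time, so the whole file never has to be in memory at once. The sketch below shows the same idea with Node.js's built-in crypto module. That module is swapped in only so the example runs without dependencies; the browser code uses spark-md5. Feeding the chunks one by one gives the same digest as hashing the whole buffer.

```javascript
const crypto = require('crypto');

// Incremental MD5: update() once per chunk, digest() at the end.
// This mirrors spark.append(chunk) ... spark.end() in the browser code.
function md5OfChunks(chunks) {
  const hash = crypto.createHash('md5');
  for (const chunk of chunks) hash.update(chunk);
  return hash.digest('hex');
}

const data = Buffer.from('hello chunked world');
const chunks = [data.subarray(0, 5), data.subarray(5, 12), data.subarray(12)];
console.log(md5OfChunks(chunks) === crypto.createHash('md5').update(data).digest('hex')); // true
```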

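```javascript
import { ref } from 'vue';
import SparkMD5 from 'spark-md5';

const DefualtChunkSize = 5 * 1024 * 1024; // 5 MB

// Component state used by the chunking, upload, and progress code
const currFile = ref(null);     // the File selected by the user
const fileChunkList = ref([]);  // [{ chunk, size, name, percentage }]

// Split the file into chunks and compute its MD5 hash incrementally
const getFileChunk = (file, chunkSize = DefualtChunkSize) => {
  return new Promise((resolve, reject) => {
    const blobSlice = File.prototype.slice || File.prototype.mozSlice || File.prototype.webkitSlice;
    const chunks = Math.ceil(file.size / chunkSize);
    const spark = new SparkMD5.ArrayBuffer();
    const fileReader = new FileReader();
    let currentChunk = 0;

    fileChunkList.value = []; // drop chunks left over from a previous file

    fileReader.onload = function (e) {
      console.log('read chunk nr', currentChunk + 1, 'of', chunks);
      spark.append(e.target.result); // feed this chunk's bytes into the hash
      currentChunk++;
      if (currentChunk < chunks) {
        loadNext();
      } else {
        const fileHash = spark.end();
        console.info('finished computed hash', fileHash);
        resolve({ fileHash });
      }
    };

    fileReader.onerror = function () {
      console.warn('oops, something went wrong.');
      reject(fileReader.error);
    };

    function loadNext() {
      const start = currentChunk * chunkSize;
      const end = Math.min(start + chunkSize, file.size);
      const chunk = blobSlice.call(file, start, end);
      // percentage starts at 0 so the total progress is never NaN
      fileChunkList.value.push({ chunk, size: chunk.size, name: file.name, percentage: 0 });
      fileReader.readAsArrayBuffer(chunk);
    }

    loadNext();
  });
};
```

Send upload requests and merge requests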

Wrap all the chunk upload requests in Promise.all. Once every chunk has been uploaded, send the merge request.

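```javascript
import { uploadFile, mergeChunks } from './request';

// Upload request: send every chunk, then ask the server to merge them
const uploadChunks = async (fileHash) => {
  const requests = fileChunkList.value.map((item, index) => {
    const formData = new FormData();
    // The field name encodes filename-fileHash-index; the server splits it back apart
    formData.append(`${currFile.value.name}-${fileHash}-${index}`, item.chunk);
    formData.append("filename", currFile.value.name);
    formData.append("hash", `${fileHash}-${index}`);
    formData.append("fileHash", fileHash);
    return uploadFile('/upload', formData, onUploadProgress(item));
  });

  // Promise.all resolves only after every chunk upload has succeeded
  await Promise.all(requests);
  return mergeChunks('/mergeChunks', { size: DefualtChunkSize, filename: currFile.value.name });
};
```

Progress bar data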

Per-chunk progress comes from axios's onUploadProgress option. A computed property derives the file's total progress from the per-chunk values, so it updates automatically as they change.
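The total is a size-weighted average of the chunk percentages. The framework-free sketch below isolates that weighting so it can be checked by hand; the function name `totalPercent` is hypothetical. Chunks that have not reported progress yet count as 0%.

```javascript
// Size-weighted overall progress: each chunk contributes size * percentage
// (percentage in 0-100), divided by the total file size.
function totalPercent(chunks, fileSize) {
  if (!chunks.length || !fileSize) return 0;
  const loaded = chunks.reduce((sum, c) => sum + c.size * (c.percentage || 0), 0);
  return Math.floor(loaded / fileSize);
}

// (5*100 + 5*40 + 2*0) / 12 = 58.33...
console.log(totalPercent([{ size: 5, percentage: 100 }, { size: 5, percentage: 40 }, { size: 2 }], 12)); // 58
```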

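```javascript
import { computed } from 'vue';

// Total progress: each chunk's percentage (0-100) weighted by its size
const totalPercentage = computed(() => {
  if (!fileChunkList.value.length || !currFile.value) return 0;
  const loaded = fileChunkList.value
    .map(item => item.size * (item.percentage || 0))
    .reduce((curr, next) => curr + next, 0);
  return Math.floor(loaded / currFile.value.size);
});

// Per-chunk progress: returns an axios onUploadProgress handler bound to one chunk
const onUploadProgress = (item) => (e) => {
  if (!e.total) return; // total can be unavailable for some requests
  item.percentage = Math.floor((e.loaded / e.total) * 100);
};
```

Server part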

Setting up the server

Use koa2 to build a simple service listening on port 3001.

Use koa-body to parse the 'Content-Type': 'multipart/form-data' data sent by the front end.

Use koa-router to register the server's routes.

Use koa2-cors to handle cross-origin (CORS) requests.

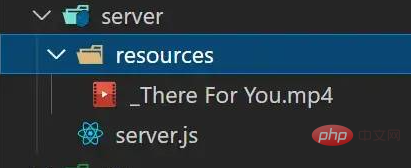

Directory layout

server/server.js

This file contains the server implementation, which receives the chunks and merges them.

server/resources

This directory stores the chunks of each file, as well as the merged result:

While a file's chunks have not yet been merged, a subdirectory named after the file holds all of its chunks.

When the chunks are merged, every chunk in that file's subdirectory is read and combined back into the original file.

Once the merge is complete, the subdirectory is deleted and only the merged file is kept. The merged file's name has an extra _ prefix compared with the original; for example, "test file.txt" becomes "_test file.txt".

Receiving chunks

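The onFileBegin function in koa-body's formidable options handles the file resource in the FormData sent by the front end. The front end names each chunk field in the format filename-fileHash-index, so splitting the field name recovers this information.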
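One catch is that a plain split('-') breaks when the original filename itself contains a dash. The sketch below splits from the right instead and also builds the chunk's storage path, following the server/resources layout described above. Both helper names are hypothetical, and `outputPath` stands for the resources directory.

```javascript
const path = require('path');

// Hypothetical helper: parse `${filename}-${fileHash}-${index}`.
// Taking index and hash from the right keeps dashes inside the filename.
function parseChunkFieldName(name) {
  const parts = name.split('-');
  if (parts.length < 3) throw new Error(`Unexpected chunk field name: ${name}`);
  const index = Number(parts.pop());
  const fileHash = parts.pop();
  const filename = parts.join('-');
  return { filename, fileHash, index };
}

// Hypothetical helper: <outputPath>/<filename>/<fileHash>-<index>
function chunkStoragePath(outputPath, { filename, fileHash, index }) {
  return path.join(outputPath, filename, `${fileHash}-${index}`);
}

const info = parseChunkFieldName('my-report.pdf-9e107d9d-3');
console.log(info); // { filename: 'my-report.pdf', fileHash: '9e107d9d', index: 3 }
console.log(chunkStoragePath('resources', info));
```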

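```javascript
const fs = require('fs');
const path = require('path');
const koaBody = require('koa-body'); // koa-body 4.x/5.x export; 6.x exports { koaBody }

// router is the koa-router instance; outputPath is the server/resources directory.
// Info about the most recent chunk, returned if parsing fails. It is module-level,
// so with concurrent uploads it may not be the chunk that actually failed.
let currChunk = {};

// Upload request
router.post(
  '/upload',
  // Parse the multipart/form-data body
  koaBody({
    multipart: true,
    formidable: {
      uploadDir: outputPath,
      onFileBegin: (name, file) => {
        // Field name is `${filename}-${fileHash}-${index}`; split from the right
        // so that dashes inside the original filename survive
        const parts = name.split('-');
        const index = parts.pop();
        const fileHash = parts.pop();
        const filename = parts.join('-');
        const dir = path.join(outputPath, filename);

        // Remember the current chunk so it can be returned on error
        currChunk = { filename, fileHash, index };

        // Create the chunk directory if needed; recursive avoids EEXIST when
        // several chunks of the same file arrive at once
        fs.mkdirSync(dir, { recursive: true });

        // Override where the chunk is saved
        // (formidable v1 / koa-body 4 uses file.path; formidable v2+ uses file.filepath)
        file.path = path.join(dir, `${fileHash}-${index}`);
      },
    },
    // koa-body's own error hook: a top-level option with (error, ctx), not a formidable one
    onError: (error, ctx) => {
      ctx.status = 400;
      ctx.body = { code: 400, msg: "Upload failed", data: currChunk };
    },
  }),
  // Send the response
  async (ctx) => {
    // If onError already produced a 400 response, keep it
    if (ctx.status === 400) return;
    ctx.set("Content-Type", "application/json");
    ctx.body = JSON.stringify({
      code: 2000,
      message: 'upload successfully!'
    });
  });
```

Merging chunks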

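First, find the chunk directory by file name and use fs.readdirSync(chunkDir) to get the names of all the chunks in it. Then create writable/readable streams with fs.createWriteStream/fs.createReadStream and pipe them into the same file. When the merge is done, delete the chunk directory with fs.rmdirSync(chunkDir).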
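A possible alternative is to append the chunks one after another through a single write stream, instead of opening one positioned write stream per chunk. This needs no chunk size and cannot overwrite another chunk's bytes. The trade-off is that chunks are written sequentially rather than concurrently. Here is a minimal sketch using only Node's fs module. It assumes the chunk paths are already sorted by index, and the function names are hypothetical.

```javascript
const fs = require('fs');

// Pipe one chunk into the shared write stream without ending it.
function appendChunk(chunkPath, writeStream) {
  return new Promise((resolve, reject) => {
    const readStream = fs.createReadStream(chunkPath);
    const onError = (err) => {
      writeStream.off('error', onError);
      reject(err);
    };
    readStream.once('error', onError);
    readStream.once('end', () => {
      writeStream.off('error', onError);
      resolve();
    });
    writeStream.once('error', onError);
    // end: false keeps the target open for the next chunk
    readStream.pipe(writeStream, { end: false });
  });
}

// Merge chunks one after another, in the given order, into targetPath.
async function mergeSequentially(sortedChunkPaths, targetPath) {
  const writeStream = fs.createWriteStream(targetPath);
  const finished = new Promise((resolve, reject) => {
    writeStream.on('finish', resolve);
    writeStream.on('error', reject);
  });
  finished.catch(() => {}); // avoid an unhandled rejection if we exit early
  for (const chunkPath of sortedChunkPaths) {
    await appendChunk(chunkPath, writeStream);
  }
  writeStream.end(); // flush and close once every chunk has been piped
  await finished;
}
```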

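```javascript
// Merge request
// ctx.request.body requires a JSON body parser (e.g. koa-body) for this route or globally
router.post('/mergeChunks', async (ctx) => {
  const { filename, size } = ctx.request.body;
  // Merge the chunks into resources/_<filename>
  await mergeFileChunk(path.join(outputPath, '_' + filename), filename, size);
  // Send the response
  ctx.set("Content-Type", "application/json");
  ctx.body = JSON.stringify({
    data: {
      code: 2000,
      filename,
      size
    },
    message: 'merge chunks successful!'
  });
});

// Pipe one chunk into a write stream; delete the chunk once it has been written
const pipeStream = (chunkPath, writeStream) => {
  return new Promise((resolve, reject) => {
    const readStream = fs.createReadStream(chunkPath);
    readStream.on("error", reject);
    writeStream.on("error", reject);
    writeStream.on("finish", () => {
      fs.unlinkSync(chunkPath);
      resolve();
    });
    readStream.pipe(writeStream);
  });
};

// Merge the chunks
const mergeFileChunk = async (filePath, filename, size) => {
  const chunkDir = path.join(outputPath, filename);
  const chunkPaths = fs.readdirSync(chunkDir);
  if (!chunkPaths.length) return;
  // Sort by chunk index; the order returned by readdir may be scrambled
  chunkPaths.sort((a, b) => a.split("-")[1] - b.split("-")[1]);
  console.log("chunkPaths = ", chunkPaths);
  // Create (or truncate) the target once, so the streams below can open it
  // with 'r+' and write in place instead of each one truncating the file
  fs.writeFileSync(filePath, '');
  await Promise.all(
    chunkPaths.map((chunkPath, index) =>
      pipeStream(
        path.resolve(chunkDir, chunkPath),
        // Write stream that starts at this chunk's byte offset
        fs.createWriteStream(filePath, { flags: 'r+', start: index * size })
      )
    )
  );
  // Remove the (now empty) chunk directory
  fs.rmdirSync(chunkDir);
};
```

Front-end and server interaction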

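Front-end chunked upload

Test file info:

The selected file is 19.8 MB and the default chunk size set above is 5 MB, so it should be split into 4 chunks, i.e. 4 upload requests.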
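The chunk count is the same Math.ceil(file.size / chunkSize) computed in getFileChunk:

```latex
n = \left\lceil \frac{19.8\ \text{MB}}{5\ \text{MB}} \right\rceil = \lceil 3.96 \rceil = 4
```

The first three chunks are 5 MB each, and the last one holds the remaining 19.8 - 3 × 5 = 4.8 MB.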

Server receives the chunks

Front end sends the merge request

Server merges the chunks

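Extensions: resumable upload and instant upload

With the core logic above in place, resumable upload and instant upload are just extensions of it. No full implementation is given here; only the ideas are outlined.

Resumable upload

Resumable upload means making requests interruptible and later continuing from where they stopped. To do this, keep a reference to each request so that it can be cancelled later. Then save the cancelled requests, or record where in the original chunk list the cancellation happened, so that they can be re-sent later.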
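The resume step reduces to a set difference: out of all chunk indices, re-send only those not yet uploaded. The sketch below assumes the uploaded indices are known, either from the server or from local bookkeeping. The article leaves that tracking open, and the helper name is hypothetical.

```javascript
// Hypothetical helper for resuming: which chunk indices still need uploading?
function remainingChunkIndices(totalChunks, uploadedIndices) {
  const done = new Set(uploadedIndices);
  const remaining = [];
  for (let i = 0; i < totalChunks; i++) {
    if (!done.has(i)) remaining.push(i);
  }
  return remaining;
}

console.log(remainingChunkIndices(4, [0, 2])); // [ 1, 3 ]
```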

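Ways to cancel a request:

With native XHR, call abort() on the XMLHttpRequest instance that sent the request (xhr.abort()).

With axios, use a cancel token (new CancelToken(function (cancel) {})). This API is deprecated since axios v0.22 in favour of passing an AbortController's signal.

With fetch, create an AbortController, pass controller.signal to fetch, and call controller.abort() to cancel.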
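The AbortController pattern can be sketched without a network. `fakeUpload` below is a hypothetical stand-in for a chunk request. It honours an AbortSignal the way fetch(url, { signal }) and axios({ signal }) do, so keeping one controller per chunk lets each request be cancelled on its own. It assumes Node.js 17+ or a browser, where DOMException is a global.

```javascript
// Hypothetical stand-in for a chunk upload that honours an AbortSignal.
function fakeUpload(ms, signal) {
  return new Promise((resolve, reject) => {
    if (signal.aborted) return reject(new DOMException('Aborted', 'AbortError'));
    const timer = setTimeout(() => resolve('uploaded'), ms);
    signal.addEventListener('abort', () => {
      clearTimeout(timer); // stop the pending "upload"
      reject(new DOMException('Aborted', 'AbortError'));
    }, { once: true });
  });
}

// One controller per chunk request, kept so it can be cancelled later
const controller = new AbortController();
fakeUpload(1000, controller.signal).catch((err) => console.log(err.name)); // AbortError
controller.abort();
```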

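Instant upload

Don't be misled by the name: an "instant upload" is really no upload at all. Before sending the actual upload requests, the front end first sends a check request carrying the file hash. The server looks for an existing file with exactly the same hash. If one exists, that file is simply reused, and the front end does not need to send any upload requests.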
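On the server, the check is a lookup by content hash. In the sketch below, a Map from hash to stored path stands in for whatever index the server actually keeps. That is an assumption, since the article leaves storage open, and the function names are hypothetical. Node's crypto MD5 plays the role of the hash the front end computes with spark-md5.

```javascript
const crypto = require('crypto');

// Assumed in-memory index: content hash -> stored file path
const storedByHash = new Map();

function md5Hex(buffer) {
  return crypto.createHash('md5').update(buffer).digest('hex');
}

// Called after a normal upload + merge to register the stored file
function registerFile(buffer, storedPath) {
  storedByHash.set(md5Hex(buffer), storedPath);
}

// Called by the check request: if the hash is known, the upload can be skipped
function checkFile(fileHash) {
  return storedByHash.has(fileHash)
    ? { exists: true, path: storedByHash.get(fileHash) }
    : { exists: false };
}

registerFile(Buffer.from('same content'), 'resources/_a.txt');
console.log(checkFile(md5Hex(Buffer.from('same content')))); // { exists: true, path: 'resources/_a.txt' }
```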

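Final thoughts

In theory, chunked uploading from the front end is easy to understand. Implementing it yourself still involves a few pitfalls, however. One example is not being able to get the file's binary data when the server parses formData-formatted data.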
