Found 3 related articles

Heavily modified RNN challenges the Transformer: RWKV introduces two new architectures

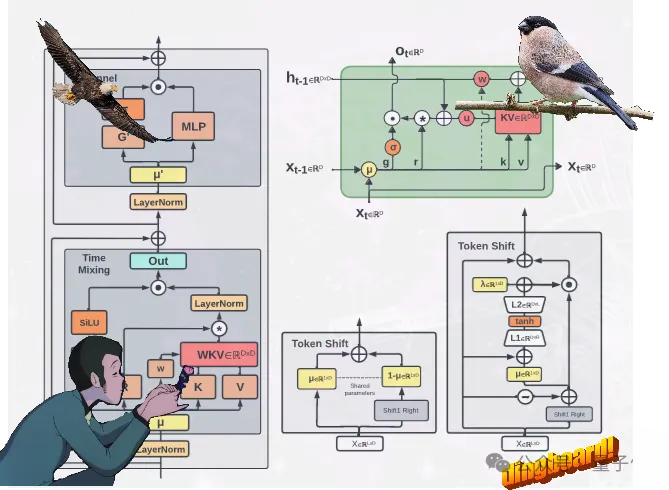

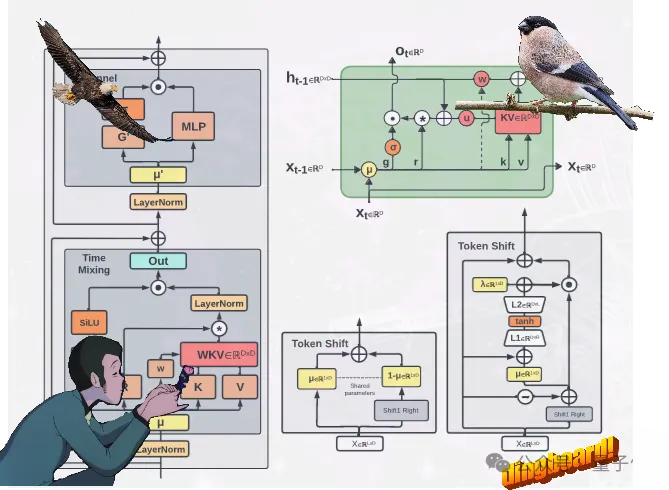

Article Introduction: Rather than following the usual Transformer path, the domestically developed RNN-based architecture RWKV has made new progress: two new RWKV architectures have been proposed, Eagle (RWKV-5) and Finch (RWKV-6). Both sequence models improve on the RWKV-4 architecture. Design advances in the new architectures include multi-headed matrix-valued states and a dynamic recurrence mechanism. These changes increase the expressive power of RWKV models while retaining the inference efficiency of RNNs. The work also introduces a new multilingual corpus, including

2024-04-15

Attention-free large model Eagle 7B: built on RWKV, inference cost reduced by 10-100 times

Article Introduction: Small models have recently attracted considerable attention in AI, in contrast to models with hundreds of billions of parameters. For example, the Mistral-7B model released by the French AI startup outperformed Llama 2 13B on every benchmark and outperformed Llama 1 34B on code, mathematics, and reasoning. Compared with large models, small models have several advantages, such as lower compute requirements and the ability to run on-device. Recently a new language model has emerged: Eagle 7B, with 7.52B parameters, from the open-source non-profit organization RWKV. It has the following characteristics: It is built on the RWKV-v5 architecture.

2024-02-01

Introducing RWKV: The rise of linear Transformers and exploring alternatives

Article Introduction: Here is a summary of some of my thoughts from the RWKV podcast: https://www.latent.space/p/rwkv#details Why do alternatives matter so much? With the artificial intelligence revolution of 2023, the Transformer architecture is currently at its peak. However, in the rush to adopt the successful Transformer architecture, it is easy to overlook alternatives that we could learn from. As engineers, we should not take a one-size-fits-all approach and apply the same solution to every problem. We should weigh the pros and cons in every situation; otherwise we will be trapped within the limitations of a particular platform while feeling "satisfied" in not knowing there are alternatives, which can lead to

2023-09-27
