Found a total of 636 related articles

Detailed explanation of gradient descent algorithm in Python

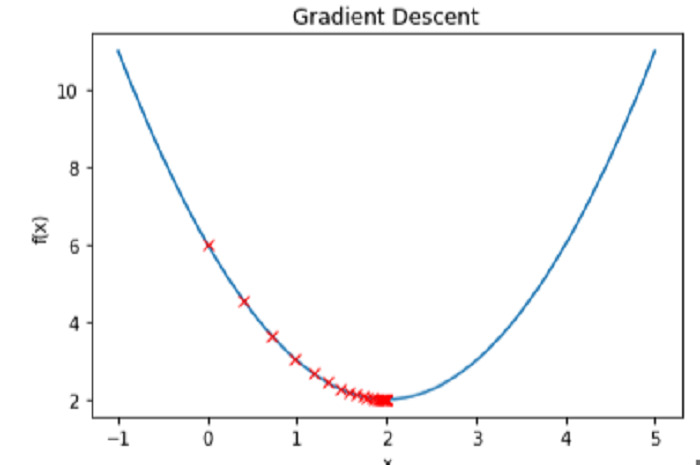

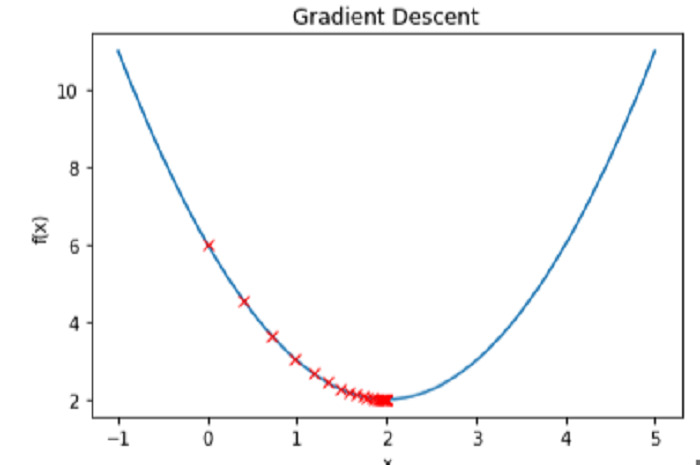

Article Introduction: Gradient descent is a commonly used optimization algorithm that is widely applied in machine learning. Python is a popular language for data science, and many ready-made libraries implement gradient descent. This article introduces the gradient descent algorithm in Python in detail, covering both concepts and implementation. 1. Definition of Gradient Descent: Gradient descent is an iterative algorithm used to optimize the parameters of a function. In machine learning, we usually use gradient descent to minimize the loss function. Therefore, gradient descent can

2023-06-10

comment 0

1796

Detailed explanation of stochastic gradient descent algorithm in Python

Article Introduction: Stochastic gradient descent is one of the most commonly used optimization algorithms in machine learning. It is a variant of gradient descent that estimates the gradient from randomly chosen samples, which makes each update cheaper and often speeds up convergence, although reaching the global optimum is not guaranteed in general. This article introduces the stochastic gradient descent algorithm in Python in detail, including its principles, application scenarios and code examples. 1. Principle of the Stochastic Gradient Descent Algorithm. Gradient descent: Before introducing stochastic gradient descent, let's briefly review gradient descent. Its idea is to follow the negative gradient of the loss function.

2023-06-10

comment 0

1178

What is the gradient descent algorithm in Python?

Article Introduction: The gradient descent algorithm is a commonly used mathematical optimization technique for finding the minimum value of a function. It iteratively updates the function's parameter values, moving them toward a local minimum. In Python, gradient descent is widely used in fields such as machine learning, deep learning, data science, and numerical optimization. Principle of the Gradient Descent Algorithm: The basic principle is to update the parameters along the negative gradient direction of the objective function. On a two-dimensional plane, the objective function can be

2023-06-04

comment 0

565

Examples of practical applications of gradient descent

Article Introduction: Gradient descent is a commonly used optimization algorithm, mainly used in machine learning and deep learning to find the best model parameters or weights. Its goal is to minimize a cost function that measures the difference between the model's predicted output and the actual output. The algorithm iteratively adjusts the model parameters in the direction of steepest descent of the cost function until it reaches a minimum. The gradient is computed by taking the partial derivative of the cost function with respect to each parameter. In each iteration, the step size is set by the learning rate, and the algorithm takes one step in the direction in which the cost function decreases most steeply. The choice of learning rate is very important because it determines the step size of each iteration and must be tuned carefully so that the algorithm converges to the optimal solution. Practical Use Cases of Gradient Descent: Gradient Descent

2024-01-23

comment 0

542
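The update rule summarized in the entry above (step against the gradient, scaled by the learning rate) can be illustrated with a minimal sketch. It assumes a hypothetical one-parameter cost function J(w) = (w - 3)^2; the function names, starting point, and hyperparameter values are illustrative and do not come from the article.

```python
def cost_gradient(w):
    """Derivative of the hypothetical cost J(w) = (w - 3)**2."""
    return 2.0 * (w - 3.0)


def gradient_descent(grad, w0, learning_rate=0.1, n_iters=200):
    """Repeatedly step against the gradient, scaled by the learning rate."""
    w = w0
    for _ in range(n_iters):
        w = w - learning_rate * grad(w)
    return w


# With a moderate learning rate the iterate approaches the minimizer w = 3.
w_min = gradient_descent(cost_gradient, w0=0.0)

# With too large a learning rate each step overshoots and the iterate diverges.
w_diverged = gradient_descent(cost_gradient, w0=0.0, learning_rate=1.1, n_iters=50)

print(w_min, w_diverged)
```

For this particular quadratic, any learning rate above 1.0 makes the error grow at every step, which is one concrete way to see why the learning rate has to be tuned.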

How to implement gradient descent algorithm using Python?

Article Introduction: The gradient descent algorithm is a commonly used optimization algorithm that is widely used in machine learning and deep learning. The basic idea is to find the minimum point of a function through iteration, that is, to find the parameter values that minimize the function's error. In this article, we will learn how to implement the gradient descent algorithm in Python and give specific code examples. The core idea of gradient descent is to iterate in the direction opposite to the function's gradient, thereby gradually approaching the minimum point of the function. In reality

2023-09-19

comment 0

971

What is the stochastic gradient descent algorithm in Python?

Article Introduction: The stochastic gradient descent algorithm is a common algorithm for optimizing machine learning models. Its purpose is to minimize the loss function. The algorithm is called "stochastic" because each update uses a randomly selected sample (or small batch) instead of the full data set; the resulting noise can also help it avoid getting stuck in a local optimum during training. In this article, we introduce how stochastic gradient descent works and how to implement it in Python. Gradient descent is an iterative algorithm for minimizing a loss function. In each iteration, it adds the current parameters to the loss

2023-06-05

comment 0

985

Evaluation of time complexity of gradient descent algorithm

Article Introduction: The gradient descent algorithm is an iterative optimization algorithm used to find the minimum value of the loss function. In each iteration, the algorithm calculates the gradient at the current position and updates the parameters against the direction of the gradient to gradually reduce the value of the loss function. Evaluating the time complexity of gradient descent helps us understand and improve the algorithm's performance and efficiency. By analyzing the time complexity, we can predict the running time and select appropriate parameters and optimization strategies to improve efficiency and convergence speed. In addition, time complexity analysis helps to compare different algorithms and select the optimizer best suited to a specific problem. The time complexity of the gradient descent algorithm is mainly determined by the size of the data set. At each iteration, the entire number needs to be calculated

2024-01-23

comment 0

499

This article will help you understand what gradient descent is

Article Introduction: Gradient descent is the source of power for machine learning. With the groundwork laid in the previous two sections, we can now turn to it. Gradient descent is not a very complicated mathematical tool, and it dates back to the nineteenth century. However, people may not have expected that such a relatively simple tool would become the basis of many machine learning algorithms and that, together with neural networks, it would ignite the deep learning revolution. 1. What is a gradient? Take the partial derivative of a multivariate function with respect to each parameter, then write the resulting partial derivatives as a vector; that vector is the gradient. Specifically, the function f(x1, x2) of two independent variables corresponds to the two features in the machine learning data set. If the partial derivatives are obtained for x1 and x2 respectively, then the

2023-05-17

comment 0

2076

In-depth exploration of Python's underlying technology: how to implement the gradient descent algorithm

Article Introduction: The gradient descent algorithm is a commonly used optimization algorithm that is widely used in machine learning and deep learning. This article delves into the underlying technology of Python, introduces the principle and implementation of gradient descent in detail, and provides specific code examples. 1. Introduction to the Gradient Descent Algorithm: Gradient descent is an optimization algorithm whose core idea is to gradually approach the minimum of the loss function by iteratively updating the parameters. In particular,

2023-11-08

comment 0

927

How to implement gradient descent algorithm in Python to find local minima?

Article Introduction: Gradient descent is an important optimization method in machine learning, used to minimize the loss function of a model. In layman's terms, it repeatedly changes the model's parameters until it finds values that minimize the loss function. The method works by taking small steps in the direction of the negative gradient of the loss function, that is, along the path of steepest descent. The learning rate is a hyperparameter that sets the step size and therefore governs the trade-off between the algorithm's speed and accuracy. Many machine learning methods, including linear regression, logistic regression, and neural networks, employ gradient descent. Its main application is model training, where the goal is to minimize the difference between the predicted and actual values of the target variable. In this post we will look at implementing gradients in Python

2023-09-06

comment 0

382

How to implement gradient descent to solve logistic regression in python

Article Introduction: Linear regression. 1. Definition of the linear regression function. 2. Likelihood function: a function of the (unknown) parameters given the joint sample values; log-likelihood. 3. Linear regression objective function (an expression of the error; the goal is to minimize the error between the true and predicted values; setting the derivative to 0 gives the extremum and hence the parameters). Logistic regression. Logistic regression applies a sigmoid function to the output of linear regression. 1. Logistic regression function. 2. Logistic regression likelihood function, assuming the data follow a Bernoulli distribution; log-likelihood. Turning this into a gradient descent task gives the logistic regression objective function. Gradient descent method: my understanding of the solution is to compute the derivative and update the parameters until a stopping condition is reached.

2023-05-12

comment 0

989

Code logic for implementing mini-batch gradient descent algorithm using Python

Article Introduction: Let theta = model parameters and max_iters = number of epochs. For itr = 1, 2, 3, ..., max_iters: for each mini_batch (X_mini, y_mini): Forward pass on the batch X_mini: 1. Predict the mini-batch. 2. Calculate the prediction error J(theta). Backward pass: calculate gradient(theta), the partial derivative of J(theta) with respect to theta. Update the parameters: theta = theta - learning_rate * gradient(theta). Python code flow to implement the gradient descent algorithm. Step 1: Import dependencies, as Linear regression generates data,

2024-01-22

comment

944
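The mini-batch loop outlined in the entry above (forward pass, backward pass, parameter update) can be sketched as follows. This is an illustrative sketch only: it assumes a one-feature linear model with a mean-squared-error loss, uses the standard library instead of whatever dependencies the article imports, and the function name, data, and hyperparameters are hypothetical.

```python
import random


def minibatch_gradient_descent(X, y, learning_rate=0.05, max_iters=500,
                               batch_size=4, seed=0):
    """Fit y ~ theta0 + theta1 * x with mini-batch gradient descent."""
    rng = random.Random(seed)
    theta0, theta1 = 0.0, 0.0
    indices = list(range(len(X)))
    for _ in range(max_iters):                  # one epoch per iteration
        rng.shuffle(indices)                    # new random batches each epoch
        for start in range(0, len(indices), batch_size):
            batch = indices[start:start + batch_size]
            # Forward pass: prediction errors for this mini-batch
            errors = [theta0 + theta1 * X[i] - y[i] for i in batch]
            # Backward pass: gradient of the mean squared error J(theta)
            grad0 = 2.0 * sum(errors) / len(batch)
            grad1 = 2.0 * sum(e * X[i] for e, i in zip(errors, batch)) / len(batch)
            # Update: theta = theta - learning_rate * gradient(theta)
            theta0 -= learning_rate * grad0
            theta1 -= learning_rate * grad1
    return theta0, theta1


# Synthetic, noise-free data from the hypothetical line y = 1 + 2x
X = [i / 10.0 for i in range(20)]
y = [1.0 + 2.0 * x for x in X]
theta0, theta1 = minibatch_gradient_descent(X, y)
print(theta0, theta1)
```

Setting `batch_size=1` turns the same loop into plain stochastic gradient descent, and setting it to `len(X)` gives full-batch gradient descent.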

Gradient descent optimization method for logistic regression model

Article Introduction: Logistic regression is a commonly used binary classification model whose purpose is to predict the probability of an event. Its optimization problem can be expressed as estimating the model parameters w and b by maximizing the log-likelihood function, where x is the input feature vector and y is the corresponding binary label. Specifically, with labels coded as -1 and +1, minimizing the sum of log(1+exp(-y(w·x+b))) over all samples is equivalent to maximizing the log-likelihood and yields the optimal parameter values, so that the model fits the data well. Such problems are often solved with gradient descent, for example when estimating the logistic regression parameters that maximize the log-likelihood. The following are the steps of the gradient descent algorithm for the logistic regression model: 1. Initialization parameters: Choose an initial value, and pass

2024-01-23

comment

270
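As a hedged illustration of the logistic regression entry above, the sketch below fits w and b by batch gradient descent on the mean negative log-likelihood. It assumes labels coded as 0 or 1 and the usual cross-entropy form of the loss; the function names, toy data, learning rate, and iteration count are all hypothetical choices, not taken from the article.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def fit_logistic_regression(X, y, learning_rate=0.5, n_iters=2000):
    """Batch gradient descent on the mean negative log-likelihood.

    X is a list of feature vectors; y holds labels coded as 0 or 1.
    """
    n = len(X)
    n_features = len(X[0])
    w = [0.0] * n_features          # initialize the parameters
    b = 0.0
    for _ in range(n_iters):
        grad_w = [0.0] * n_features
        grad_b = 0.0
        for xi, yi in zip(X, y):
            p = sigmoid(sum(wj * xj for wj, xj in zip(w, xi)) + b)
            diff = p - yi           # derivative of the loss w.r.t. w.x + b
            for j in range(n_features):
                grad_w[j] += diff * xi[j] / n
            grad_b += diff / n
        # Step against the gradient
        w = [wj - learning_rate * gj for wj, gj in zip(w, grad_w)]
        b -= learning_rate * grad_b
    return w, b


def predict_proba(w, b, x):
    return sigmoid(sum(wj * xj for wj, xj in zip(w, x)) + b)


# Tiny hypothetical data set; the classes overlap, so the weights stay bounded
X = [[0.0], [1.0], [2.0], [3.0], [4.0], [5.0]]
y = [0, 0, 1, 0, 1, 1]
w, b = fit_logistic_regression(X, y)
print(predict_proba(w, b, [0.0]), predict_proba(w, b, [5.0]))
```

The toy data is deliberately not linearly separable: on separable data the maximum-likelihood weights grow without bound, so a real implementation would usually add regularization or a stopping criterion.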

Gradient Boosted Trees and Gradient Boosted Machines

Article Introduction: Gradient boosting models mainly come in two forms: gradient boosted trees and gradient boosting machines. A gradient boosted tree model trains a series of decision trees, each iteration reducing the residual error, and finally obtains a prediction model. The gradient boosting machine extends this idea to other learners, such as linear regression and support vector machines, to improve the performance of the model. Combining these learners can better capture complex relationships in the data, thereby improving the accuracy and stability of predictions. The concept and principle of gradient boosted trees: A gradient boosted tree model is an ensemble learning method that reduces residual errors by iteratively training decision trees to obtain the final prediction model. Its principle is as follows: Initialize the model: use the average value of the target variable as the initial predicted value. Iterative training: pass

2024-01-23

comment

249
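The two steps named in the entry above (initialize with the mean of the target, then iteratively fit trees to the residuals) can be sketched with depth-one trees ("stumps") on a single feature. This is a simplified illustration under stated assumptions: squared-error loss, one numeric feature with at least two distinct values, and hypothetical function names, data, and hyperparameters that are not from the article.

```python
def fit_stump(x, residuals):
    """Find the single threshold split that best fits the residuals."""
    best = None
    for threshold in sorted(set(x))[:-1]:       # keep both sides non-empty
        left = [r for xi, r in zip(x, residuals) if xi <= threshold]
        right = [r for xi, r in zip(x, residuals) if xi > threshold]
        left_mean = sum(left) / len(left)
        right_mean = sum(right) / len(right)
        sse = (sum((r - left_mean) ** 2 for r in left)
               + sum((r - right_mean) ** 2 for r in right))
        if best is None or sse < best[0]:
            best = (sse, threshold, left_mean, right_mean)
    return best[1:]                             # (threshold, left, right)


def stump_predict(stump, xi):
    threshold, left_mean, right_mean = stump
    return left_mean if xi <= threshold else right_mean


def fit_gradient_boosting(x, y, n_rounds=50, learning_rate=0.3):
    base = sum(y) / len(y)                      # initialize with the mean
    preds = [base] * len(y)
    stumps = []
    for _ in range(n_rounds):
        # For squared error, the negative gradient is the residual
        residuals = [yi - pi for yi, pi in zip(y, preds)]
        stump = fit_stump(x, residuals)
        stumps.append(stump)
        preds = [pi + learning_rate * stump_predict(stump, xi)
                 for pi, xi in zip(preds, x)]
    return base, learning_rate, stumps


def boosted_predict(model, xi):
    base, learning_rate, stumps = model
    return base + learning_rate * sum(stump_predict(s, xi) for s in stumps)


def mean_squared_error(y, preds):
    return sum((yi - pi) ** 2 for yi, pi in zip(y, preds)) / len(y)


# Hypothetical step-like data
x = [1, 2, 3, 4, 5, 6, 7, 8]
y = [1.0, 1.2, 0.9, 3.0, 3.1, 2.9, 5.0, 5.2]
model = fit_gradient_boosting(x, y)
baseline_mse = mean_squared_error(y, [sum(y) / len(y)] * len(y))
boosted_mse = mean_squared_error(y, [boosted_predict(model, xi) for xi in x])
print(baseline_mse, boosted_mse)
```

Comparing `boosted_mse` with `baseline_mse` shows how much the sequence of stumps improves on the initial mean prediction for the training data.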

How do deep residual networks overcome the vanishing gradient problem?

Article Introduction: The residual network is a popular deep learning model that addresses the vanishing gradient problem by introducing residual blocks. This article starts from the essential cause of the vanishing gradient problem and explains in detail how residual networks solve it. 1. The essential cause of the vanishing gradient problem. In deep neural networks, the output of each layer is computed by multiplying the output of the previous layer by the weight matrix and applying the activation function. As the number of layers increases, the output of each layer is affected by the outputs of all the layers before it. This means that even small changes in the weight matrices and activation functions affect the output of the entire network. In the backpropagation algorithm, gradients are used to update the weights of the network. Computing the gradient requires passing the gradient of the next layer back to the previous layer through the chain rule. Therefore, the gradient of each layer depends on the gradients of the layers after it.

2024-01-22

comment 0

804

Reinforcement learning policy gradient algorithm

Article Introduction: The policy gradient algorithm is an important reinforcement learning algorithm. Its core idea is to search for the best policy by directly optimizing the policy function. Compared with methods that optimize the policy indirectly through a value function, the policy gradient algorithm often has better convergence and stability and can handle continuous action spaces, so it is widely used. Its advantage is that it learns the policy parameters directly, without needing to estimate a value function. This enables it to cope with complex problems that have high-dimensional state spaces and continuous action spaces. In addition, the policy gradient algorithm can approximate the gradient through sampling, thereby improving computational efficiency. In short, the policy gradient algorithm is a powerful and flexible method. In the policy gradient algorithm, we need to define a policy function π(a|s), which gives

2024-01-22

comment 0

800

How to use Python to perform gradient calculation on images

Article Introduction: Gradient calculation is one of the commonly used techniques in image processing. Calculating the gradient value of each pixel in an image helps us find its edge information and supports further processing. This article introduces how to use Python to perform gradient calculation on images and includes code examples. 1. Principle of gradient calculation: Gradient calculation measures the edge information of an image based on changes in brightness. In digital images, pixel values are grayscale levels from 0 to 255

2023-08-25

comment 0

1196
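The per-pixel gradient described in the entry above can be approximated with finite differences. The sketch below is a minimal standard-library illustration, assuming the image is a 2-D list of grayscale values from 0 to 255 and using central differences with indices clamped at the borders; the function name and the test image are hypothetical, and the article's own code may use an image library instead.

```python
import math


def image_gradient(img):
    """Return the per-pixel gradient magnitude using central differences.

    img is a 2-D list of grayscale values (0 to 255). Neighbor indices are
    clamped to the image edge, so border pixels use a one-sided difference
    that is still divided by 2.
    """
    h, w = len(img), len(img[0])
    mag = [[0.0] * w for _ in range(h)]
    for r in range(h):
        for c in range(w):
            # Horizontal and vertical brightness changes around the pixel
            gx = (img[r][min(c + 1, w - 1)] - img[r][max(c - 1, 0)]) / 2.0
            gy = (img[min(r + 1, h - 1)][c] - img[max(r - 1, 0)][c]) / 2.0
            mag[r][c] = math.hypot(gx, gy)
    return mag


# Hypothetical 4x4 image with a vertical edge between columns 1 and 2
img = [[0, 0, 255, 255] for _ in range(4)]
magnitude = image_gradient(img)
for row in magnitude:
    print(row)
```

The magnitude is largest in the two columns next to the edge and zero in the flat regions, which is the property edge detectors rely on.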

How to perform gradient filtering on images using Python

Article Introduction: Gradient filtering is a technique commonly used in digital image processing to detect edges and contours in images. In Python, we can use the OpenCV library to implement gradient filtering. This article introduces how to perform gradient filtering on images with Python and includes code examples for reference. Gradient filtering locates edges by calculating the differences between the values of neighboring pixels. Generally speaking, edges in an image appear as sharp changes in the gray value.

2023-08-22

comment 0

509

Step-by-step visualization of the decision-making process of the gradient boosting algorithm

Article Introduction: The gradient boosting algorithm is one of the most commonly used ensemble machine learning techniques; it uses a sequence of weak decision trees to build a strong learner. It is also the theoretical basis of the XGBoost and LightGBM models, so in this article we build a gradient boosting model from scratch and visualize it. Introduction to the Gradient Boosting Algorithm: Gradient boosting is an ensemble learning algorithm that improves the prediction accuracy of the model by constructing multiple weak classifiers and combining them into a strong classifier. The principle of the algorithm can be divided into the following steps: Initialize the model: Generally speaking, we can use a simple model (such as a decision tree) as the initial classifier. Calculate the loss function

2023-04-13

comment 0

863

Gradient boosting (GBM) algorithm example in Python

Article Introduction: Gradient boosting (GBM) is a machine learning method that gradually reduces the loss function by iteratively training models. It works well on both regression and classification problems and is a powerful ensemble learning algorithm. This article uses Python to show how to model a regression problem with the GBM algorithm. First we need to import some commonly used Python libraries, as shown below: import pandas as pd impo

2023-06-10

comment 0

1356