Node.js has become a standard part of the toolbox for building high-concurrency network services. Why has it become so popular? This article starts with the basic concepts of processes, threads, coroutines, and I/O models, and then gives an overview of Node.js and its concurrency model.

We generally call a running instance of a program a process. It is the basic unit of resource allocation and scheduling by the operating system, and generally includes the following parts:

The operating system keeps a process table in which each process occupies one entry (also called a process control block). The entry contains the program counter, stack pointer, memory allocation, status of open files, scheduling information, and other important process state, so that after a process is suspended the operating system can correctly resume it. A process has the following characteristics:

Note that if a program is run twice, even if the operating system lets the two runs share code (that is, only one copy of the code is in memory), this does not change the fact that the two running instances are two different processes.
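One way to observe this from Node.js is to start a second instance of the same executable while the first is still running and compare process IDs. This is only a sketch: it assumes the `node` binary running the script (`process.execPath`) can be spawned as a child.

```javascript
const { spawnSync } = require('node:child_process');

// Start a second instance of the same executable (node) while this one is still running.
const child = spawnSync(process.execPath, ['-e', 'console.log(process.pid)'], {
  encoding: 'utf8',
});

const parentPid = process.pid;
const childPid = Number(child.stdout.trim());

// Both instances run the same program, yet the OS tracks them as two processes.
console.log({ parentPid, childPid, different: parentPid !== childPid });
```

Each instance gets its own process table entry, which is why the two PIDs differ.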

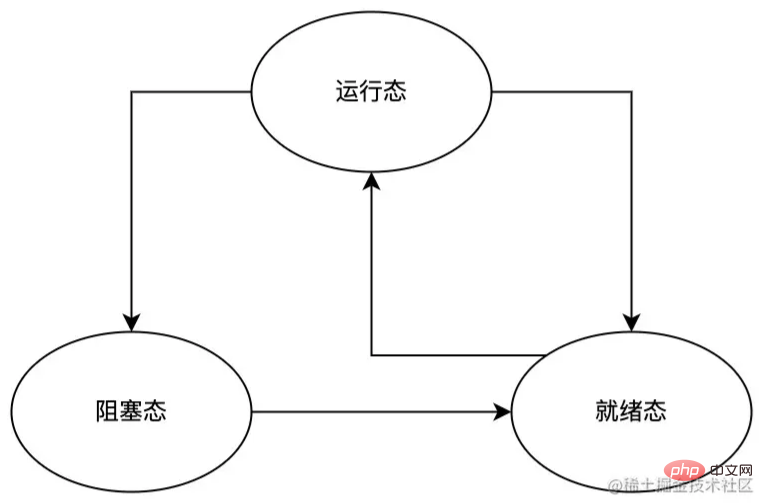

While a process is executing, interrupts, CPU scheduling, and other causes make it switch between the following states:

As the process state transition diagram above shows, a process can switch from the running state to the ready state or the blocked state, but only a process in the ready state can switch directly to the running state. This is because:

Sometimes, we need to use threads to solve the following problems:

Regarding threads, we need to know the following points:

Now that we understand the basic characteristics of threads, let’s talk about some common thread types.

A kernel-mode thread is a thread directly supported by the operating system. Its main features are as follows:

A user-mode thread is a thread built entirely in user space. Its main characteristics are as follows:

A lightweight process (LWP) is a user thread built on and supported by the kernel. Its main features are as follows:

User space can only use kernel threads through lightweight processes (LWPs), which can be regarded as a bridge between user-mode threads and kernel threads. Therefore, lightweight processes can exist only if kernel threads are supported first;

Most operations on a lightweight process require a system call from user space, and this system call is relatively expensive (it requires switching between user mode and kernel mode);

Each lightweight process needs to be associated with a specific kernel thread. Therefore:

Lightweight processes can access all of the shared address space and system resources of the process to which they belong.

The sections above briefly introduced the common thread types (kernel-mode threads, user-mode threads, and lightweight processes). Each has its own scope of application, and in practice they can be combined as needed, for example in the common one-to-one, many-to-one, and many-to-many models. Due to space limitations, this article does not go into those models, and interested readers can explore them on their own.
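To make the idea of threads sharing the resources of their process a little more concrete, here is a minimal sketch using Node.js's `worker_threads` module. It is not tied to any one of the thread models above. The helper name `incrementInWorker` and the inline worker source are assumptions made for the example.

```javascript
const { Worker } = require('node:worker_threads');

// Start a worker thread that increments a counter stored in shared memory.
function incrementInWorker(shared) {
  return new Promise((resolve, reject) => {
    const worker = new Worker(
      `const { workerData, parentPort, threadId } = require('node:worker_threads');
       const counter = new Int32Array(workerData);
       Atomics.add(counter, 0, 1); // write to memory owned by the same process
       parentPort.postMessage({ pid: process.pid, threadId });`,
      { eval: true, workerData: shared }
    );
    worker.once('message', resolve);
    worker.once('error', reject);
  });
}

const shared = new SharedArrayBuffer(4); // visible to both threads, not copied
const result = incrementInWorker(shared).then((info) => ({
  ...info,
  counter: new Int32Array(shared)[0], // the main thread sees the worker's write
  samePid: info.pid === process.pid,  // same process, different thread
}));

result.then((r) => console.log(r));
```

The worker reports the same process ID as the main thread but a different thread ID, and its write to the shared buffer is visible from the main thread.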

A coroutine, also called a fiber, is an execution mechanism built on top of threads in which the developer manages execution scheduling, state maintenance, and similar behavior. Its main features are:

In JavaScript, the async/await syntax we often use is one implementation of coroutines, as in the following example:

    // Synchronous version: each call runs to completion before the next line executes.
    function updateUserName(id, name) {
      const user = getUserById(id);
      user.updateName(name);
      return true;
    }

    // Asynchronous version: execution is suspended at each await and resumed later.
    async function updateUserNameAsync(id, name) {
      const user = await getUserById(id);
      await user.updateName(name);
      return true;
    }

In the example above, the logical execution order inside updateUserName and updateUserNameAsync is the same:

1. Call getUserById and assign its return value to the variable user;
2. Call the updateName method of user;
3. Return true to the caller.

The main difference between the two lies in how state is controlled at run time:

- In updateUserName, the steps above are simply executed in order;
- In updateUserNameAsync, the steps are also executed in order, except that when an await is encountered, updateUserNameAsync is suspended and the current program state is saved at the suspension point. Only when the operation following await returns is updateUserNameAsync woken up, its saved state restored, and execution continued with the next statement.

From this analysis we can reasonably guess that what coroutines solve is not the concurrency problem that processes and threads address, but the problems encountered when handling asynchronous tasks (such as file operations and network requests). Before async/await, we could only handle asynchronous tasks through callback functions, which easily led to callback hell and tangled code that is hard to maintain. Coroutines let us write asynchronous code in a synchronous style.
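The suspension at `await` can be observed by recording the order in which statements run. The following is a minimal sketch; the function name `suspendAndResume` and the `log` array are made up for the illustration, and `await null` stands in for a real asynchronous operation.

```javascript
const log = [];

async function suspendAndResume() {
  log.push('async: before await');
  await null; // suspension point: state is saved and control returns to the caller
  log.push('async: resumed after await');
  return true;
}

const pending = suspendAndResume();
log.push('caller: keeps running while the async function is suspended');

pending.then(() => console.log(log));
```

The caller's line is recorded between the two lines of the async function, which shows that the function really was paused at `await` and resumed later with its state intact.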

The point to keep in mind is that the core capability of a coroutine is to suspend a piece of code, preserve its state at the suspension point, and at some later time resume from that point and continue with the code that follows.
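JavaScript generators expose this suspend-and-resume capability directly, so they make a convenient illustration. The sketch below is not how async/await is specified internally. The generator name `counter` is an assumption for the example.

```javascript
// A generator is suspended at each `yield` and keeps its local variables until resumed.
function* counter() {
  let total = 0;
  while (true) {
    const increment = yield total; // pause here; resume when next(value) is called
    total += increment;
  }
}

const co = counter();
const a = co.next();   // runs to the first yield
const b = co.next(5);  // resumes with increment = 5
const c = co.next(10); // resumes again; total was preserved across suspensions

console.log(a.value, b.value, c.value); // 0 5 15
```

The local variable `total` survives every suspension, which is exactly the state preservation described above.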

A complete I/O operation needs to go through the following stages:

1. The user process (thread) sends an I/O operation request to the kernel through a system call;
2. The kernel processes the I/O operation request (divided into a preparation phase and an actual execution phase) and returns the result to the user process (thread).

We can roughly divide I/O operations into four types: blocking I/O, non-blocking I/O, synchronous I/O, and asynchronous I/O. Before discussing these types, let us first get familiar with the following two pairs of concepts (assuming here that service A calls service B):

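Blocking/non-blocking:

- If A returns only after receiving B's response, the call is blocking;
- If A returns immediately after calling B, without waiting for B to finish, the call is non-blocking.

Synchronous/asynchronous:

- If B notifies A only after it has finished executing, then service B is synchronous;
- If B acknowledges A's request right away and delivers the result to A later, after execution, then service B is asynchronous.

It is easy to confuse blocking/non-blocking with synchronous/asynchronous, so special attention is required: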

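- Blocking/non-blocking is defined from the point of view of the caller of the service;
- Synchronous/asynchronous is defined from the point of view of the callee.

Now that we understand blocking/non-blocking and synchronous/asynchronous, let's look at the specific I/O models.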
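As a rough illustration of the caller/callee distinction, the sketch below models service B in two ways. The names `serviceBSync` and `serviceBAsync` and the use of `setImmediate` to stand in for B's later completion are assumptions made for the example.

```javascript
const events = [];

// Synchronous callee: B hands back the result only once it has finished.
function serviceBSync(x) {
  return x * 2;
}

// Asynchronous callee: B acknowledges at once and delivers the result later via a callback.
function serviceBAsync(x, callback) {
  setImmediate(() => callback(x * 2));
  return 'accepted';
}

// Caller A: this call occupies A until B is done.
events.push(`sync result: ${serviceBSync(21)}`);

// Caller A: this call returns right away, so A can carry on with other work.
const ack = serviceBAsync(21, (result) => events.push(`async result: ${result}`));
events.push(`ack: ${ack}`);
events.push('A continues');

setTimeout(() => console.log(events), 20);
```

In the second call, A records its own progress before B's result arrives, which is the observable difference between the two styles.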

With blocking I/O, after the user process (thread) initiates an I/O system call, it is immediately blocked until the entire I/O operation has been processed and the result has been returned. Only then is the user process (thread) unblocked and able to continue with subsequent operations.
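In Node.js, the `*Sync` functions of the `fs` module behave this way from the caller's perspective. The sketch below assumes a writable temporary directory. The file name is made up for the example.

```javascript
const fs = require('node:fs');
const os = require('node:os');
const path = require('node:path');

const file = path.join(os.tmpdir(), `blocking-io-demo-${process.pid}.txt`);
fs.writeFileSync(file, 'hello');

const order = [];
// Queued work: it cannot run until the current synchronous code, including the read, is done.
setImmediate(() => order.push('queued callback'));

order.push('before read');
const text = fs.readFileSync(file, 'utf8'); // the calling thread waits here for the data
order.push(`after read: ${text}`);

fs.unlinkSync(file);
setImmediate(() => console.log(order));
```

While `readFileSync` is waiting, the thread does nothing else, so the queued callback only runs after the read has returned.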

Blocking I/O has the following characteristics:

- During an I/O operation, the user process (thread) cannot perform any other work;
- A single I/O request blocks a process (thread), so to respond to I/O requests promptly, a process (thread) must be allocated to each request. This consumes a large amount of resources. For long-lived connections, the process (thread) cannot be released for a long time, so new requests arriving later run into a serious performance bottleneck.

With non-blocking I/O, after the user process (thread) initiates an I/O system call, if the I/O operation is not ready, the call returns an error and the user process (thread) does not have to wait. Instead, it polls to check whether the I/O operation is ready. Once the operation is ready, the actual I/O operation blocks the user process (thread) until the result is returned.

Non-blocking I/O has the following characteristics:

- The user process (thread) must keep polling the readiness of the I/O operation (typically in a while loop), so this model occupies the CPU and consumes CPU resources;
- Until the I/O operation is ready, the user process (thread) is not blocked; once it is ready, the subsequent actual I/O operation does block the user process (thread).

After the user process (thread) initiates an I/O system call, if the call causes the user process (thread) to block, it is synchronous I/O; otherwise it is asynchronous I/O.
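The polling loop used by non-blocking I/O can be sketched with a simulated device. Nothing here talks to the kernel: `FakeDevice`, `tryRead`, and `pollUntilReady` are hypothetical names, and the `'EAGAIN'` string merely mimics the "not ready yet" error that a real non-blocking call would report.

```javascript
// Hypothetical stand-in for a device: reports "not ready" for the first few polls.
class FakeDevice {
  constructor(pollsUntilReady, data) {
    this.remaining = pollsUntilReady;
    this.data = data;
  }

  // Mimics a non-blocking call: returns immediately with either an error or the data.
  tryRead() {
    if (this.remaining > 0) {
      this.remaining -= 1;
      return { error: 'EAGAIN' };
    }
    return { data: this.data };
  }
}

// Keep asking until the device is ready, counting how many calls were needed.
function pollUntilReady(device) {
  let polls = 0;
  while (true) {
    polls += 1;
    const result = device.tryRead();
    if (!result.error) return { data: result.data, polls };
    // A real program could do other work here; a bare loop just burns CPU.
  }
}

const outcome = pollUntilReady(new FakeDevice(3, 'payload'));
console.log(outcome); // { data: 'payload', polls: 4 }
```

Three of the four calls return without useful data, which is the CPU cost the model pays for never being blocked while waiting.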

Whether an I/O operation is synchronous or asynchronous describes the communication mechanism between the user process (thread) and the I/O operation, where:

- In the synchronous case, the interaction between the user process (thread) and I/O goes through a kernel buffer: the kernel writes the result of the I/O operation to the buffer, and the data in the buffer is then copied to the user process (thread). This blocks the user process (thread) until the I/O operation completes;
- In the asynchronous case, the interaction between the user process (thread) and I/O is handled directly by the kernel: the kernel copies the result of the I/O operation directly to the user process (thread). This does not block the user process (thread).

Node.js uses a single-threaded, event-driven, asynchronous I/O model. I personally believe the reasons for choosing this model are:

- Network applications are mostly I/O-intensive, and managing multi-threaded resources reasonably and efficiently while ensuring high concurrency is more complicated than managing single-threaded resources.

In short, Node.js adopts a single-threaded, event-driven, asynchronous I/O model and implements it with the event loop on the main thread plus auxiliary worker threads.
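A small sketch of how this looks from application code: an asynchronous file read returns immediately, and the callback is run later by the event loop once the read has finished. To the best of my understanding, the read itself is handed off to a background thread in this case. The temporary file name is an assumption made for the example.

```javascript
const fs = require('node:fs');
const os = require('node:os');
const path = require('node:path');

const file = path.join(os.tmpdir(), `async-io-demo-${process.pid}.txt`);
fs.writeFileSync(file, 'hello');

const order = [];

// readFile returns immediately; the main thread is not blocked while the read is in progress.
const finished = new Promise((resolve, reject) => {
  fs.readFile(file, 'utf8', (err, text) => {
    if (err) return reject(err);
    order.push(`callback: ${text}`); // invoked later by the event loop
    fs.unlinkSync(file);
    resolve(order);
  });
});

order.push('main thread continues'); // recorded before the callback runs

finished.then((result) => console.log(result));
```

The main thread records its own progress first and only later receives the file contents, so a single thread can keep many such operations in flight at once.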

This article started with processes, threads, coroutines, and I/O models, and finished with a brief introduction to the Node.js concurrency model. Although not much space was devoted to the Node.js concurrency model itself, the fundamentals stay the same however the details change. Once you have mastered the relevant basics and then dig into the design and implementation of Node.js, you will get twice the result with half the effort.
