This article gives you a detailed look at the stream module in Node.js, introducing the concept of streams and how to use them. I hope it will be helpful to everyone!

The stream module is one of the core modules in Node.js. Other modules, such as fs and http, are built on top of it: their streaming objects are instances of the stream module's classes.
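You can check this relationship by inspecting the prototype chains of a few built-in classes. The sketch below is written as CommonJS (`require`) so it runs as a plain `.js` script. It only checks inheritance and opens no files or sockets.

```javascript
const { EventEmitter } = require('node:events');
const fs = require('node:fs');
const http = require('node:http');
const { Readable, Writable } = require('node:stream');

// Each entry checks whether a built-in class's prototype chain includes a stream base class.
const checks = {
  'fs.ReadStream is a Readable': fs.ReadStream.prototype instanceof Readable,
  'fs.WriteStream is a Writable': fs.WriteStream.prototype instanceof Writable,
  'http.IncomingMessage is a Readable': http.IncomingMessage.prototype instanceof Readable,
  'Readable is an EventEmitter': Readable.prototype instanceof EventEmitter,
};

for (const [description, result] of Object.entries(checks)) {
  console.log(`${description}: ${result}`);
}
```

Every check prints `true`, so a file read stream or an incoming HTTP request can be handled with the same stream API described in the rest of this article.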

Most front-end beginners who are just getting started with Node do not yet have a clear picture of what streams are or how to use them, because front-end work rarely involves handling streams directly.

The word "stream" itself brings to mind flowing water and other kinds of flow.

Official definition: a stream is an abstract interface for working with streaming data in Node.js.

From the official definition, we can see:

More precisely, a stream can be understood as a data flow, that is, a means of transmitting data. In an application, a stream is an ordered flow of data with a starting point and an end point.

The main reason streams are hard to grasp is that they are an abstract concept.

To make the stream module concrete, let us first look at the scenarios where it is used in practice.

In Node, streams are mainly used when large amounts of data have to be processed, for example reading and writing large files with fs, handling HTTP requests and responses, compressing files, and encrypting or decrypting data.
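The following sketch illustrates the file-compression case. It gzips a string and decompresses it again using `zlib.createGzip()`, `zlib.createGunzip()`, and `pipeline()` from `node:stream/promises`. Everything stays in memory. The `collector()` helper and the sample text are illustrative assumptions that stand in for real files or sockets.

```javascript
const { Readable, Writable } = require('node:stream');
const { pipeline } = require('node:stream/promises');
const zlib = require('node:zlib');

// Hypothetical sink: collects every chunk written to it into the given array.
function collector(chunks) {
  return new Writable({
    write(chunk, _encoding, callback) {
      chunks.push(chunk);
      callback(); // chunk handled, ready for the next one
    },
  });
}

// Compresses `text` as a stream, then decompresses the result as another stream.
async function gzipRoundTrip(text) {
  const compressed = [];
  await pipeline(Readable.from([Buffer.from(text)]), zlib.createGzip(), collector(compressed));

  const restored = [];
  await pipeline(Readable.from(compressed), zlib.createGunzip(), collector(restored));

  return {
    compressed: Buffer.concat(compressed),
    restored: Buffer.concat(restored).toString('utf8'),
  };
}

gzipRoundTrip('stream '.repeat(10000)).then(({ compressed, restored }) => {
  console.log(`original: ${restored.length} chars, gzipped: ${compressed.length} bytes`);
});
```

Each stage handles one chunk at a time. If the in-memory source and target are replaced with `fs.createReadStream()` and `fs.createWriteStream()`, the same pipeline shape should work for files much larger than available memory.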

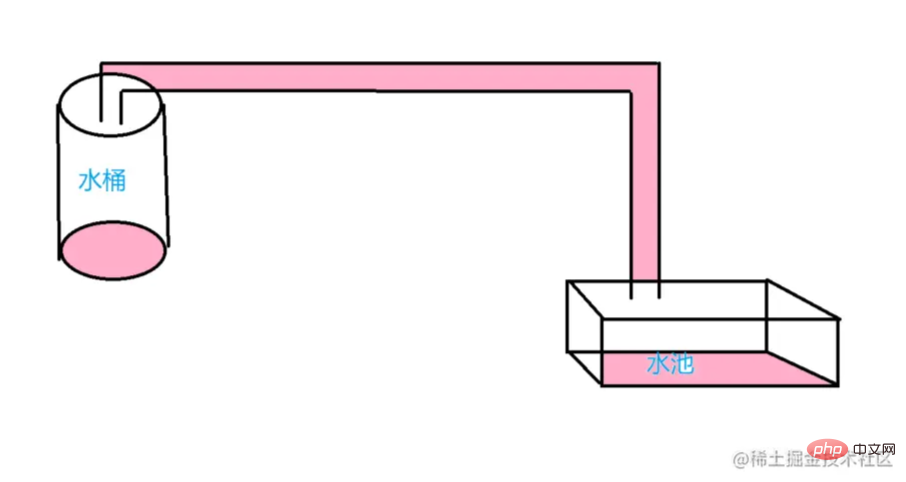

The picture above illustrates how streams are used. The bucket is the data source, the pool is the data target, and the connecting pipe is the data flow. Data travels through this pipe from the data source to the data target.
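The bucket, pipe, and pool map directly onto code. This minimal sketch assumes a small in-memory source built with `Readable.from()`. A custom `Writable` plays the role of the pool, and `pipe()` plays the role of the pipe. The chunk contents are made up for illustration.

```javascript
const { Readable, Writable } = require('node:stream');

// Data source (the "bucket"): a readable stream producing three chunks.
const source = Readable.from(['first chunk\n', 'second chunk\n', 'third chunk\n']);

// Data target (the "pool"): a writable stream that stores what it receives.
const received = [];
const target = new Writable({
  decodeStrings: false, // keep string chunks as strings instead of converting them to Buffer
  write(chunk, _encoding, callback) {
    received.push(chunk);
    callback(); // signal that this chunk has been handled
  },
});

// 'finish' fires once pipe() has ended the target after the source ran dry.
const done = new Promise((resolve, reject) => {
  target.on('finish', () => resolve(received));
  target.on('error', reject);
});

// The "pipe": data flows from source to target chunk by chunk.
source.pipe(target);

done.then((chunks) => console.log(chunks.join('')));
```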

In Node, streams fall into four categories: readable, writable, duplex, and transform streams.

- `Writable`: streams to which data can be written.
- `Readable`: streams from which data can be read.
- `Duplex`: streams that are both `Readable` and `Writable`.
- `Transform`: `Duplex` streams that can modify or transform the data as it is written and read.

All streams are instances of `EventEmitter`, so we can observe changes in the data flow through the event mechanism.

## 4. Data mode and buffer

Before learning how to use the four types of streams, we need to understand two concepts: data mode and buffering. Both will make the rest of this article easier to follow.

### 4.1 Data mode

All streams created by Node.js APIs operate only on strings and `Buffer` (or `Uint8Array`) objects. Stream implementations can also work with other JavaScript values (except `null`). Such streams are said to operate in object mode.

### 4.2 Buffer

Both `Writable` and `Readable` streams store data in an internal buffer. How much data can be buffered depends on the `highWaterMark` option passed to the stream's constructor. For ordinary streams, `highWaterMark` specifies a total number of bytes. For streams operating in object mode, it specifies a total number of objects. `highWaterMark` is a threshold, not a limit: it dictates how much data the stream buffers before it stops requesting more data.

Data is buffered in a `Readable` stream when the implementation calls `stream.push(chunk)`. If the consumer of the stream does not call `stream.read()`, the data stays in the internal queue until it is consumed. Once the total size of the internal read buffer reaches the threshold set by `highWaterMark`, the stream temporarily stops reading from the underlying resource until the currently buffered data can be consumed.

Data is buffered in a `Writable` stream when the `writable.write(chunk)` method is called repeatedly. While the total size of the internal write buffer is below `highWaterMark`, `write()` returns `true`. Once it reaches or exceeds the threshold, `write()` returns `false`.
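You can observe the threshold through the return value of `write()`. This sketch uses a hypothetical `createStuckWritable()` helper whose `write()` implementation never signals completion, so every chunk stays buffered. It compares byte mode (`highWaterMark: 4` bytes) with object mode (`highWaterMark: 2` objects).

```javascript
const { Writable } = require('node:stream');

// Hypothetical slow sink: write() never invokes its callback, so every chunk stays
// in the internal buffer and the highWaterMark threshold can be observed.
function createStuckWritable(options) {
  return new Writable({
    ...options,
    write(_chunk, _encoding, _callback) {
      // Deliberately never calling _callback() simulates a consumer that never finishes.
    },
  });
}

// Byte mode: highWaterMark is measured in bytes.
const byteSink = createStuckWritable({ highWaterMark: 4 });
const byteResults = [
  byteSink.write('abc'), // 3 bytes buffered, below 4: true
  byteSink.write('de'), // 5 bytes buffered, at or above 4: false
];

// Object mode: highWaterMark is measured in objects.
const objectSink = createStuckWritable({ objectMode: true, highWaterMark: 2 });
const objectResults = [
  objectSink.write({ id: 1 }), // 1 object buffered: true
  objectSink.write({ id: 2 }), // 2 objects buffered: false
  objectSink.write({ id: 3 }), // still accepted and buffered, but returns false
];

console.log(byteResults, byteSink.writableLength);
console.log(objectResults, objectSink.writableLength);
```

A `false` return value does not drop the chunk. In a real application it is the signal to stop writing and wait for the `'drain'` event.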

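## 5. Readable stream

### 5.1 Flowing and paused modes of readable streams

`Readable` streams effectively operate in one of two modes: flowing and paused. Every `Readable` starts in paused mode and switches to flowing mode in any of the following ways:

- adding a `'data'` event handler;
- calling the `stream.resume()` method;
- calling the `stream.pipe()` method to send the data to a `Writable`.

It switches back to paused mode:

- if there are no pipe destinations, by calling the `stream.pause()` method;
- if there are pipe destinations, by removing all of them (for example with `stream.unpipe()`).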
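You can watch these mode switches through the `readableFlowing` property, which stays `null` until a consumer is attached. This sketch assumes small in-memory streams created with `Readable.from()`. It consumes one stream in flowing mode through `'data'` and another in paused mode by calling `read()` inside a `'readable'` handler.

```javascript
const { Readable } = require('node:stream');

// Flowing mode: data is pushed to the 'data' handler automatically.
const flowing = Readable.from(['a', 'b', 'c']);
const states = [flowing.readableFlowing]; // null: no consumer yet

const seen = [];
flowing.on('data', (chunk) => seen.push(chunk)); // attaching 'data' switches to flowing mode
states.push(flowing.readableFlowing); // true

flowing.pause(); // back to paused mode; data stays in the internal buffer
states.push(flowing.readableFlowing); // false

flowing.resume(); // flowing again
states.push(flowing.readableFlowing); // true

const flowingDone = new Promise((resolve) => flowing.on('end', () => resolve(seen)));

// Paused mode: the consumer pulls chunks explicitly with read().
const paused = Readable.from(['x', 'y', 'z']);
const pulled = [];
paused.on('readable', () => {
  let chunk;
  // read() returns null once the internal buffer is empty.
  while ((chunk = paused.read()) !== null) pulled.push(chunk);
});
const pulledDone = new Promise((resolve) => paused.on('end', () => resolve(pulled)));

console.log(states);
Promise.all([flowingDone, pulledDone]).then(([s, p]) => console.log(s, p));
```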

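### 5.2 Common examples of readable streams

The following example reads a file with `fs.createReadStream()` and logs each readable-stream event:

```js
import path from 'path';
import fs from 'fs';

const filePath = path.join(path.resolve(), 'files', 'text.txt');
const copyPath = path.join(path.resolve(), 'files', 'copy.txt');
const readable = fs.createReadStream(filePath);
// Writable destination used by readable.pipe() below
const destWritable = fs.createWriteStream(copyPath);

// If a default encoding is set with readable.setEncoding(), chunks are passed to the
// 'data' listener as strings; otherwise they are passed as Buffer objects.
readable.setEncoding('utf8');

let str = '';

readable.on('open', (fd) => {
  console.log('Started reading the file');
});

// 'data' is emitted whenever the stream hands ownership of a chunk to the consumer.
readable.on('data', (data) => {
  str += data;
  console.log('Data chunk received');
});

// 'pause' is emitted when stream.pause() is called and readableFlowing is not false.
// It is emitted synchronously, so the listener must be registered before pause() is called.
readable.on('pause', () => {
  console.log('Reading paused');
});

// 'resume' is emitted when stream.resume() is called and readableFlowing is not true.
readable.on('resume', () => {
  console.log('Flowing again');
});

// 'end' is emitted when there is no more data to consume from the stream.
readable.on('end', () => {
  console.log('Finished reading the file');
});

// 'close' is emitted when the stream and any underlying resources
// (for example the file descriptor) have been closed.
readable.on('close', () => {
  console.log('File stream closed');
});

// 'error' may be emitted if the underlying stream cannot produce data because of an
// internal failure, or when the implementation tries to push an invalid chunk.
readable.on('error', (err) => {
  console.log(err);
  console.log('An error occurred while reading the file');
});

// pause() makes a flowing stream stop emitting 'data' and switch to paused mode;
// any available data stays in the internal buffer.
readable.pause();

// resume() makes an explicitly paused Readable emit 'data' again (flowing mode).
readable.resume();

// pipe() attaches destWritable to readable, switching it to flowing mode and pushing all
// of its data to the attached Writable; the data flow is managed automatically.
readable.pipe(destWritable);
```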
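Besides event listeners, a `Readable` is also an async iterable, so you can consume it with `for await...of`. The sketch below pairs that with a hand-written `Readable` whose `read()` method calls `push()`, the same call that fills the internal buffer. `createCounterStream()` and `readAll()` are hypothetical helpers for illustration.

```javascript
const { Readable } = require('node:stream');

// Hypothetical source: produces the numbers 1..limit as text chunks.
// read() is called whenever the stream wants more data; push(null) signals the end.
function createCounterStream(limit) {
  let current = 1;
  return new Readable({
    read() {
      if (current > limit) {
        this.push(null); // no more data: 'end' follows once the buffer is drained
      } else {
        this.push(`${current++}\n`);
      }
    },
  });
}

// Consumes a stream with for await...of instead of 'data'/'end' listeners.
async function readAll(readable) {
  readable.setEncoding('utf8'); // chunks arrive as strings instead of Buffers
  let text = '';
  for await (const chunk of readable) {
    text += chunk;
  }
  return text;
}

readAll(createCounterStream(5)).then((text) => console.log(text));
```

The loop pulls one chunk at a time and awaits between chunks. The stream therefore reads only as fast as the loop body consumes, without any explicit `pause()`/`resume()` calls.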

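## 6. Writable stream

### 6.1 Flow control with writable streams

The following example copies a file. It reads the file with a readable stream and writes each chunk to a writable stream, pausing the reader whenever the writer's buffer is full:

```js
import path from 'path';
import fs from 'fs';

const filePath = path.join(path.resolve(), 'files', 'text.txt');
const copyFile = path.join(path.resolve(), 'files', 'copy.txt');
let str = '';

// Create the readable stream
const readable = fs.createReadStream(filePath);
// With a default encoding set, chunks are delivered as strings
readable.setEncoding('utf8');

// Create the writable stream
const writable = fs.createWriteStream(copyFile);
// Default encoding for string chunks written to the stream
writable.setDefaultEncoding('utf8');

readable.on('open', (fd) => {
  console.log('Started reading the file');
});

// 'data' is emitted whenever the stream hands ownership of a chunk to the consumer
readable.on('data', (data) => {
  str += data;
  console.log('Data chunk received');
  // write() returns false once the internal buffer reaches highWaterMark
  if (!writable.write(data, 'utf8')) {
    // Data is produced faster than it can be written: stop reading for now
    readable.pause();
  }
});

writable.on('open', () => {
  console.log('Started writing data');
});

// If a call to stream.write(chunk) returned false, 'drain' is emitted when it is
// appropriate to resume writing. When data is produced faster than it is written and
// the buffer fills up, the producer stops reading from the underlying resource; once
// the writable buffer has been flushed, 'drain' lets the producer continue.
writable.on('drain', () => {
  console.log('Resuming writes');
  readable.resume();
});

// 'finish' is emitted after stream.end() has been called and all data has been
// flushed to the underlying system.
writable.on('finish', () => {
  console.log('Finished writing data');
});

readable.on('end', () => {
  // All data has been read: tell the writable stream that no more data is coming
  writable.end();
});

// 'pipe' is emitted when stream.pipe() is called on a readable stream, adding this
// writable to its set of destinations. It does not fire here, because the chunks are
// written manually instead of calling readable.pipe(writable).
writable.on('pipe', () => {
  console.log('Pipe created');
});

writable.on('error', (err) => {
  console.log(err);
  console.log('An error occurred while writing data');
});
```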
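Instead of wiring `'data'`, `'drain'`, and `'end'` by hand, you can delegate the copy to `pipeline()` from `node:stream/promises`. It applies backpressure, forwards errors, and destroys every stream on failure. The sketch below also shows the fourth stream type, a `Transform`, which upper-cases the text while the file is copied. The temporary paths and the upper-case transformation are assumptions for illustration.

```javascript
const fs = require('node:fs');
const os = require('node:os');
const path = require('node:path');
const { Transform } = require('node:stream');
const { pipeline } = require('node:stream/promises');

// A Transform is a Duplex whose output is computed from its input.
// Hypothetical transformation: upper-case each string chunk.
function createUpperCaseTransform() {
  return new Transform({
    decodeStrings: false, // receive the source's string chunks as strings
    transform(chunk, _encoding, callback) {
      callback(null, chunk.toUpperCase());
    },
  });
}

// Copies src to dest through the transform; pipeline() handles backpressure and errors.
async function copyUpperCased(src, dest) {
  const source = fs.createReadStream(src);
  source.setEncoding('utf8'); // emit strings, decoded safely across chunk boundaries
  await pipeline(source, createUpperCaseTransform(), fs.createWriteStream(dest));
}

// Usage with hypothetical temporary files under the OS temp directory.
const dir = fs.mkdtempSync(path.join(os.tmpdir(), 'stream-demo-'));
const inputPath = path.join(dir, 'text.txt');
const outputPath = path.join(dir, 'copy.txt');
fs.writeFileSync(inputPath, 'hello streams\n');

const copied = copyUpperCased(inputPath, outputPath)
  .then(() => fs.readFileSync(outputPath, 'utf8'))
  .finally(() => fs.rmSync(dir, { recursive: true, force: true })); // clean up temp files

copied.then((text) => console.log(text));
```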