We have already learned the basic concepts of OpenKruise and several commonly used enhanced controllers. Next, let’s continue to learn about other advanced functions.

SidecarSet uses an admission webhook to automatically inject sidecar containers into matching Pods created in the cluster. Besides injecting at Pod creation time, SidecarSet can also upgrade the image of an already injected sidecar container in place. SidecarSet decouples the definition and lifecycle of sidecar containers from those of the business containers. It is mainly used to manage stateless sidecar containers, such as monitoring and logging agents.

For example, we define a SidecarSet resource object as shown below:

    # sidecarset-demo.yaml
    apiVersion: apps.kruise.io/v1alpha1
    kind: SidecarSet
    metadata:
      name: scs-demo
    spec:
      selector:
        matchLabels: # very important: matches Pods labeled app=nginx
          app: nginx
      updateStrategy:
        type: RollingUpdate
        maxUnavailable: 1
      containers:
        - name: sidecar1
          image: busybox
          command: ["sleep", "999d"]
          volumeMounts:
            - name: log-volume
              mountPath: /var/log
      volumes: # merged into pod.spec.volumes
        - name: log-volume
          emptyDir: {}

Create this resource object directly:

    ➜ kubectl get sidecarset
    NAME       MATCHED   UPDATED   READY   AGE
    scs-demo   0         0         0       6s

Note that the SidecarSet defined above has one very important attribute: the label selector. It matches Pods labeled app=nginx and injects the sidecar1 container defined below it into them. For example, the Pod defined below carries the label app=nginx, so it matches the SidecarSet above:

    apiVersion: v1
    kind: Pod
    metadata:
      labels:
        app: nginx # matches the label specified in the SidecarSet
      name: test-pod
    spec:
      containers:
        - name: app
          image: nginx:1.7.9

Create the resource object above directly:

    ➜ kubectl get pod test-pod
    NAME       READY   STATUS    RESTARTS   AGE
    test-pod   2/2     Running   0          22s

You can see that the Pod has 2 containers: the sidecar1 container defined above was injected automatically:

    ➜ kubectl get pod test-pod -o yaml
    apiVersion: v1
    kind: Pod
    metadata:
      labels:
        app: nginx
      name: test-pod
      namespace: default
    spec:
      containers:
      - command:
        - sleep
        - 999d
        env:
        - name: IS_INJECTED
          value: "true"
        image: busybox
        imagePullPolicy: Always
        name: sidecar1
        resources: {}
        volumeMounts:
        - mountPath: /var/log
          name: log-volume
      - image: nginx:1.7.9
        imagePullPolicy: IfNotPresent
        name: app
        # ......
      volumes:
      - emptyDir: {}
        name: log-volume
      # ......

Now let's update the sidecar container image in the SidecarSet to busybox:1.35.0:

    ➜ kubectl patch sidecarset scs-demo --type='json' -p='[{"op": "replace", "path": "/spec/containers/0/image", "value": "busybox:1.35.0"}]'
    sidecarset.apps.kruise.io/scs-demo patched

After updating, check the sidecar container in the Pod:

    ➜ kubectl get pod test-pod
    NAME       READY   STATUS    RESTARTS      AGE
    test-pod   2/2     Running   1 (67s ago)   28m
    ➜ kubectl describe pod test-pod
    ......
    Events:
      Type    Reason   Age                From     Message
      ----    ------   ----               ----     -------
      # ......
      Normal  Created  10m                kubelet  Created container app
      Normal  Started  10m                kubelet  Started container app
      Normal  Killing  114s               kubelet  Container sidecar1 definition changed, will be restarted
      Normal  Pulling  84s                kubelet  Pulling image "busybox:1.35.0"
      Normal  Created  77s (x2 over 11m)  kubelet  Created container sidecar1
      Normal  Started  77s (x2 over 11m)  kubelet  Started container sidecar1
      Normal  Pulled   77s                kubelet  Successfully pulled image "busybox:1.35.0" in 6.901558972s (6.901575894s including waiting)
    ➜ kubectl get pod test-pod -o yaml | grep busybox
        kruise.io/sidecarset-inplace-update-state: '{"scs-demo":{"revision":"f78z4854d9855xd6478fzx9c84645z2548v24z26455db46bdfzw44v49v98f2cw","updateTimestamp":"2023-04-04T08:05:18Z","lastContainerStatuses":{"sidecar1":{"imageID":"docker.io/library/busybox@sha256:b5d6fe0712636ceb7430189de28819e195e8966372edfc2d9409d79402a0dc16"}}}}'
        image: busybox:1.35.0
        image: docker.io/library/busybox:1.35.0
        imageID: docker.io/library/busybox@sha256:223ae047b1065bd069aac01ae3ac8088b3ca4a527827e283b85112f29385fb1b

You can see that the sidecar container image in the Pod has been upgraded in place to busybox:1.35.0, without any impact on the main container.

Note that sidecar injection happens only during Pod creation, and only the Pod spec is updated; the template of the workload that owns the Pod is not affected. In addition to the default K8s container fields, spec.containers supports the following extended fields to facilitate injection:

    apiVersion: apps.kruise.io/v1alpha1
    kind: SidecarSet
    metadata:
      name: sidecarset
    spec:
      selector:
        matchLabels:
          app: sample
      containers:
        # default K8s container fields
        - name: nginx
          image: nginx:alpine
          volumeMounts:
            - mountPath: /nginx/conf
              name: nginxconf
          # extended sidecar container fields
          podInjectPolicy: BeforeAppContainer
          shareVolumePolicy: # volume sharing
            type: disabled # disabled | enabled
          transferEnv: # environment variable sharing
            - sourceContainerName: main # injects the PROXY_IP environment variable of the main container into this sidecar container
              envName: PROXY_IP
      volumes:
        - name: nginxconf
          hostPath:
            path: /data/nginx/conf
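To make the injection behaviour concrete, here is a minimal Python sketch of what the webhook does to a Pod spec, based only on the output shown earlier (sidecar listed first, an IS_INJECTED env var, volumes merged). The function name inject_sidecars is hypothetical, and two points are assumptions rather than documented behaviour: that BeforeAppContainer is the default policy, and that a volume already defined on the Pod wins over a SidecarSet volume of the same name.

```python
import copy


def inject_sidecars(pod_spec, sidecar_containers, sidecar_volumes):
    """Return a new Pod spec with sidecar containers and volumes merged in."""
    spec = copy.deepcopy(pod_spec)
    before, after = [], []
    for sidecar in sidecar_containers:
        container = copy.deepcopy(sidecar)
        # Assumption: BeforeAppContainer is the default injection policy.
        policy = container.pop("podInjectPolicy", "BeforeAppContainer")
        # The injected container in the earlier output carries IS_INJECTED=true.
        container.setdefault("env", []).append(
            {"name": "IS_INJECTED", "value": "true"}
        )
        (before if policy == "BeforeAppContainer" else after).append(container)
    spec["containers"] = before + spec.get("containers", []) + after

    # Merge volumes by name. Assumption: volumes already on the Pod win.
    existing = {v["name"] for v in spec.get("volumes", [])}
    spec["volumes"] = spec.get("volumes", []) + [
        copy.deepcopy(v) for v in sidecar_volumes if v["name"] not in existing
    ]
    return spec


pod = {"containers": [{"name": "app", "image": "nginx:1.7.9"}]}
sidecars = [{"name": "sidecar1", "image": "busybox"}]
volumes = [{"name": "log-volume", "emptyDir": {}}]
injected = inject_sidecars(pod, sidecars, volumes)
print([c["name"] for c in injected["containers"]])
```

The sketch works on copies, which mirrors the point made above: only the Pod being created is changed, not the workload template or the SidecarSet itself.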

SidecarSet not only supports in-place upgrades of sidecar containers but also provides a rich set of upgrade and canary (grayscale) strategies. Under the updateStrategy attribute of the SidecarSet object, partition defines the number or percentage of Pods that stay on the old version (default 0), and maxUnavailable defines the maximum number of unavailable Pods during the release.

You can also set paused: true to pause the release. While paused, newly created or scaled-out Pods are still injected, Pods that have already been updated keep the updated version, and Pods that have not been updated yet stay on the old version.

    apiVersion: apps.kruise.io/v1alpha1
    kind: SidecarSet
    metadata:
      name: sidecarset
    spec:
      # ...
      updateStrategy:
        type: RollingUpdate
        maxUnavailable: 20%
        partition: 10
        paused: true
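The interaction between partition, maxUnavailable, and paused can be illustrated with a small Python sketch. It is not Kruise's actual controller code: the function names are hypothetical, and rounding percentages up is an assumption made only for this example.

```python
def resolve(value, total):
    """Resolve an int, or a percentage string such as "20%", against total."""
    if isinstance(value, str) and value.endswith("%"):
        percent = int(value[:-1])
        # Assumption: percentages are rounded up to a whole Pod.
        return (total * percent + 99) // 100
    return int(value)


def pods_to_update_now(total, updated, unavailable,
                       partition=0, max_unavailable=1, paused=False):
    """How many more Pods may be upgraded in this step."""
    if paused:
        return 0
    # partition Pods stay on the old version, the rest should be updated.
    target_updated = max(total - resolve(partition, total), 0)
    remaining = max(target_updated - updated, 0)
    # maxUnavailable caps how many Pods may be unavailable at once.
    budget = max(resolve(max_unavailable, total) - unavailable, 0)
    return min(remaining, budget)


# 20 matched Pods with the strategy above, but not paused.
print(pods_to_update_now(20, updated=0, unavailable=0,
                         partition=10, max_unavailable="20%"))
```

With 20 matched Pods, partition: 10 leaves 10 Pods eligible for the new version, and maxUnavailable: 20% limits each step to 4 of them.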

For workloads that need a canary release, use a selector. Mark the Pods that should go first in the canary release with a fixed canary.release=true label, then select them through selector.matchLabels under updateStrategy.

For example, suppose we now have a Deployment with 3 replicas whose Pods also carry the label app=nginx, as shown below:

    apiVersion: apps/v1
    kind: Deployment
    metadata:
      name: nginx
      namespace: default
    spec:
      replicas: 3
      revisionHistoryLimit: 3
      selector:
        matchLabels:
          app: nginx
      template:
        metadata:
          labels:
            app: nginx
        spec:
          containers:
            - name: ngx
              image: nginx:1.7.9
              ports:
                - containerPort: 80

After it is created, there are 4 Pods with the app=nginx label. Since they all match the SidecarSet object created above, each one is injected with a sidecar1 container whose image is busybox:

    ➜ kubectl get pods -l app=nginx
    NAME                    READY   STATUS    RESTARTS   AGE
    nginx-6457955f7-7hnjw   2/2     Running   0          51s
    nginx-6457955f7-prkgz   2/2     Running   0          51s
    nginx-6457955f7-tbtxk   2/2     Running   0          51s
    test-pod                2/2     Running   0          4m2s

Now, if we want to apply a canary strategy to test-pod and update its sidecar container image to busybox:1.35.0, we can add a selector.matchLabels attribute with canary.release: "true" under updateStrategy:

    apiVersion: apps.kruise.io/v1alpha1
    kind: SidecarSet
    metadata:
      name: test-sidecarset
    spec:
      updateStrategy:
        type: RollingUpdate
        selector:
          matchLabels:
            canary.release: "true"
      containers:
        - name: sidecar1
          image: busybox:1.35.0
      # ......

Then we also need to add the canary.release=true label to test-pod:

    apiVersion: v1
    kind: Pod
    metadata:
      labels:
        app: nginx
        canary.release: "true"
      name: test-pod
    spec:
      containers:
        - name: app
          image: nginx:1.7.9

After the update, the sidecar image of test-pod is updated while the other Pods remain unchanged, which gives us a canary release of the sidecar:

    ➜ kubectl describe pod test-pod
    Events:
      Type    Reason   Age                    From     Message
      ----    ------   ----                   ----     -------
      Normal  Killing  7m53s                  kubelet  Container sidecar1 definition changed, will be restarted
      Normal  Created  7m23s (x2 over 8m17s)  kubelet  Created container sidecar1
      Normal  Started  7m23s (x2 over 8m17s)  kubelet  Started container sidecar1
      Normal  Pulling  7m23s                  kubelet  Pulling image "busybox"
      Normal  Pulled   7m23s                  kubelet  Successfully pulled image "busybox" in 603.928658ms
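The two selectors play different roles: spec.selector decides which Pods are managed at all, while updateStrategy.selector narrows which of those receive the new sidecar version. The following Python sketch models that with plain matchLabels equality; the helper names and the result strings are hypothetical and exist only for illustration.

```python
def matches(match_labels, pod_labels):
    """True if every key/value in match_labels is present on the Pod."""
    return all(pod_labels.get(k) == v for k, v in match_labels.items())


def classify(pods, inject_selector, update_selector=None):
    """Map each Pod name to how the SidecarSet treats it during a release."""
    result = {}
    for name, labels in pods.items():
        if not matches(inject_selector, labels):
            result[name] = "ignored"
        elif update_selector is None or matches(update_selector, labels):
            result[name] = "upgrade"
        else:
            result[name] = "keep current sidecar"
    return result


pods = {
    "nginx-6457955f7-7hnjw": {"app": "nginx"},
    "nginx-6457955f7-prkgz": {"app": "nginx"},
    "nginx-6457955f7-tbtxk": {"app": "nginx"},
    "test-pod": {"app": "nginx", "canary.release": "true"},
}
for name, action in classify(
    pods, {"app": "nginx"}, {"canary.release": "true"}
).items():
    print(name, "->", action)
```

Only test-pod is classified for upgrade here, which matches the behaviour observed in the events above.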

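A SidecarSet in-place upgrade first stops the old container and then creates the new one. This approach suits sidecar containers that do not affect the availability of the Pod's service, such as a log collection agent.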

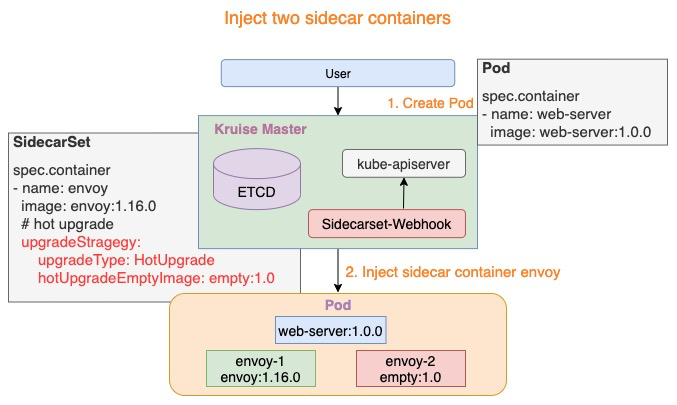

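But for many proxy or runtime sidecar containers, such as Istio Envoy, this upgrade approach is problematic. Envoy is a proxy container in the Pod that carries all of its traffic, so restarting it directly affects the availability of the Pod's service. Upgrading the envoy sidecar on its own would require complex graceful termination and coordination mechanisms, so OpenKruise provides a new solution for upgrading this kind of sidecar container: hot upgrade.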

    # hotupgrade-sidecarset.yaml
    apiVersion: apps.kruise.io/v1alpha1
    kind: SidecarSet
    metadata:
      name: hotupgrade-sidecarset
    spec:
      selector:
        matchLabels:
          app: hotupgrade
      containers:
        - name: sidecar
          image: openkruise/hotupgrade-sample:sidecarv1
          imagePullPolicy: Always
          lifecycle:
            postStart:
              exec:
                command:
                  - /bin/sh
                  - /migrate.sh
          upgradeStrategy:
            upgradeType: HotUpgrade
            hotUpgradeEmptyImage: openkruise/hotupgrade-sample:empty

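Overall, the hot upgrade feature consists of two processes: injecting the hot upgrade containers, and the hot upgrade flow itself.

When the Pod is created, the SidecarSet webhook injects two containers:

- {sidecarContainer.name}-1 (sidecar-1 in this example), which runs the actual working sidecar image, here openkruise/hotupgrade-sample:sidecarv1
- {sidecarContainer.name}-2 (sidecar-2 in this example), which runs the hotUpgradeEmptyImage placeholder, here openkruise/hotupgrade-sample:empty, and handles no real logic

Injecting the hot upgrade containers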

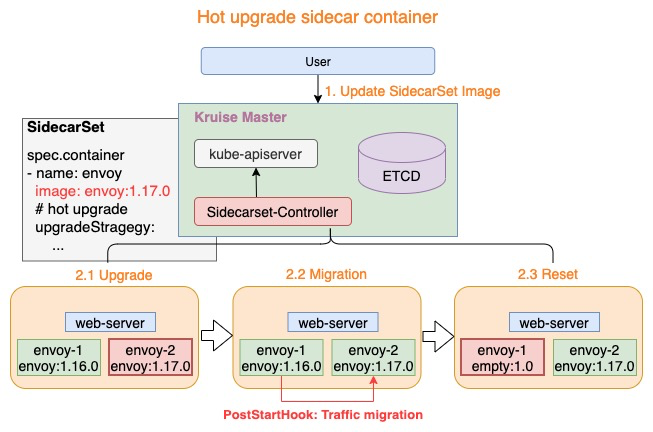

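The hot upgrade flow consists of three main steps:

1. Upgrade: the empty container is upgraded to the new version of the sidecar image.
2. Migration: the lifecycle.postStart hook (/migrate.sh in this example) migrates the sidecar's work from the old container to the new one.
3. Reset: the old sidecar container is reset to the empty image.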

These three steps make up the entire hot upgrade flow. When a Pod is hot-upgraded multiple times, the three steps are simply repeated.
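The way the two injected containers swap roles on each hot upgrade can be sketched in a few lines of Python. This is only a toy model of the flow, with a hypothetical hot_upgrade function and image names shortened for readability; it does not reproduce what the controller or the migration script actually execute.

```python
def hot_upgrade(slots, new_image, empty_image):
    """Simulate one hot upgrade.

    slots maps container name -> image, and exactly one slot is expected
    to hold empty_image. Returns the new slots and a list of step notes.
    """
    empty = next(n for n, img in slots.items() if img == empty_image)
    working = next(n for n, img in slots.items() if img != empty_image)
    slots = dict(slots)
    steps = []

    # Step 1, upgrade: the empty container gets the new sidecar image.
    slots[empty] = new_image
    steps.append(f"upgrade: {empty} now runs {new_image}")

    # Step 2, migration: the postStart hook of the new container takes over.
    steps.append(f"migration: {empty} takes over from {working}")

    # Step 3, reset: the old working container is reset to the empty image.
    slots[working] = empty_image
    steps.append(f"reset: {working} now runs {empty_image}")
    return slots, steps


state = {"sidecar-1": "sidecarv1", "sidecar-2": "empty"}
state, notes = hot_upgrade(state, "sidecarv2", "empty")
for note in notes:
    print(note)
```

Running the function a second time with another image moves the working sidecar back to sidecar-1, which is why the same three steps can be repeated indefinitely.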

Hot upgrade flow

Here we use the official OpenKruise example to illustrate. First create the hotupgrade-sidecarset SidecarSet above, then create a CloneSet object as shown below:

    # hotupgrade-cloneset.yaml
    apiVersion: apps.kruise.io/v1alpha1
    kind: CloneSet
    metadata:
      name: busybox
      labels:
        app: hotupgrade
    spec:
      replicas: 1
      selector:
        matchLabels:
          app: hotupgrade
      template:
        metadata:
          labels:
            app: hotupgrade
        spec:
          containers:
            - name: busybox
              image: openkruise/hotupgrade-sample:busybox

Once created, the Pod managed by the CloneSet has the two containers sidecar-1 and sidecar-2 injected:

    ➜ kubectl get sidecarset hotupgrade-sidecarset
    NAME                    MATCHED   UPDATED   READY   AGE
    hotupgrade-sidecarset   1         1         0       58s
    ➜ kubectl get pods -l app=hotupgrade
    NAME            READY   STATUS    RESTARTS   AGE
    busybox-nd5bp   3/3     Running   0          31s
    ➜ kubectl describe pod busybox-nd5bp
    Name:           busybox-nd5bp
    Namespace:      default
    Node:           node2/10.206.16.10
    # ......
    Controlled By:  CloneSet/busybox
    Containers:
      sidecar-1:
        Container ID:  containerd://511aa4b60d36483177e92805653c1b618495e47d8d5de331008259f78b3be89e
        Image:         openkruise/hotupgrade-sample:sidecarv1
        Image ID:      docker.io/openkruise/hotupgrade-sample@sha256:3d677ca19712b67d2c264374736d71089d21e100eff341f6b4bb0f5288ec6f34
        Environment:
          IS_INJECTED:             true
          SIDECARSET_VERSION:       (v1:metadata.annotations['version.sidecarset.kruise.io/sidecar-1'])
          SIDECARSET_VERSION_ALT:   (v1:metadata.annotations['versionalt.sidecarset.kruise.io/sidecar-1'])
        # ......
      sidecar-2:
        Container ID:  containerd://6b0678695ccb977695248e41108606b409ad0c7e3e4fe1ba9b48e839e3c235ef
        Image:         openkruise/hotupgrade-sample:empty
        Image ID:      docker.io/openkruise/hotupgrade-sample@sha256:606be602967c9f91c47e4149af4336c053e26225b717a1b5453ac8fa9a401cc5
        Environment:
          IS_INJECTED:             true
          SIDECARSET_VERSION:       (v1:metadata.annotations['version.sidecarset.kruise.io/sidecar-2'])
          SIDECARSET_VERSION_ALT:   (v1:metadata.annotations['versionalt.sidecarset.kruise.io/sidecar-2'])
        # ......
      busybox:
        Container ID:  containerd://da7eebb0161bab37f7de75635e68c5284a973a21f6d3f095bb5e8212ac8ce908
        Image:         openkruise/hotupgrade-sample:busybox
        Image ID:      docker.io/openkruise/hotupgrade-sample@sha256:08f9ede05850686e1200240e5e376fc76245dd2eb56299060120b8c3dba46dc9
        # ......
    # ......
    Events:
      Type    Reason     Age   From               Message
      ----    ------     ----  ----               -------
      Normal  Scheduled  50s   default-scheduler  Successfully assigned default/busybox-nd5bp to node2
      Normal  Pulling    49s   kubelet            Pulling image "openkruise/hotupgrade-sample:sidecarv1"
      Normal  Pulled     41s   kubelet            Successfully pulled image "openkruise/hotupgrade-sample:sidecarv1" in 7.929984849s (7.929998445s including waiting)
      Normal  Created    41s   kubelet            Created container sidecar-1
      Normal  Started    41s   kubelet            Started container sidecar-1
      Normal  Pulling    36s   kubelet            Pulling image "openkruise/hotupgrade-sample:empty"
      Normal  Pulled     29s   kubelet            Successfully pulled image "openkruise/hotupgrade-sample:empty" in 7.434180553s (7.434189239s including waiting)
      Normal  Created    29s   kubelet            Created container sidecar-2
      Normal  Started    29s   kubelet            Started container sidecar-2
      Normal  Pulling    29s   kubelet            Pulling image "openkruise/hotupgrade-sample:busybox"
      Normal  Pulled     22s   kubelet            Successfully pulled image "openkruise/hotupgrade-sample:busybox" in 6.583450981s (6.583456512s including waiting)
      Normal  Created    22s   kubelet            Created container busybox
      Normal  Started    22s   kubelet            Started container busybox
    ......

The busybox main container requests the sidecar (version=v1) service every 100 milliseconds:

    ➜ kubectl logs -f busybox-nd5bp -c busybox
    I0404 09:12:26.513128       1 main.go:39] request sidecar server success, and response(body=This is version(v1) sidecar)
    I0404 09:12:26.623496       1 main.go:39] request sidecar server success, and response(body=This is version(v1) sidecar)
    I0404 09:12:26.733958       1 main.go:39] request sidecar server success, and response(body=This is version(v1) sidecar)
    ......

Now let's upgrade the sidecar container by changing the image to openkruise/hotupgrade-sample:sidecarv2:

    ➜ kubectl patch sidecarset hotupgrade-sidecarset --type='json' -p='[{"op": "replace", "path": "/spec/containers/0/image", "value": "openkruise/hotupgrade-sample:sidecarv2"}]'

After the update, check the Pod status again. You can see that the sidecar-2 image has been updated normally:

    ➜ kubectl get pods -l app=hotupgrade
    NAME            READY   STATUS    RESTARTS      AGE
    busybox-nd5bp   3/3     Running   2 (45s ago)   23m
    ➜ kubectl describe pods busybox-nd5bp
    ......
    Events:
      ......
      Normal  Pulling                33s                kubelet                Pulling image "openkruise/hotupgrade-sample:sidecarv2"
      Normal  Killing                33s                kubelet                Container sidecar-2 definition changed, will be restarted
      Normal  Started                25s (x2 over 22m)  kubelet                Started container sidecar-2
      Normal  Created                25s (x2 over 22m)  kubelet                Created container sidecar-2
      Normal  Pulled                 25s                kubelet                Successfully pulled image "openkruise/hotupgrade-sample:sidecarv2" in 8.169453753s (8.16946743s including waiting)
      Normal  Killing                14s                kubelet                Container sidecar-1 definition changed, will be restarted
      Normal  ResetContainerSucceed  14s                sidecarset-controller  reset sidecar container image empty successfully
      Normal  Pulling                14s                kubelet                Pulling image "openkruise/hotupgrade-sample:empty"
      Normal  Created                12s (x2 over 22m)  kubelet                Created container sidecar-1
      Normal  Started                12s (x2 over 22m)  kubelet                Started container sidecar-1
      Normal  Pulled                 12s                kubelet                Successfully pulled image "openkruise/hotupgrade-sample:empty" in 1.766097364s (1.766109087s including waiting)

While the update is in progress, the busybox container keeps requesting the sidecar service, and no request fails:

    ➜ kubectl logs -f busybox-nd5bp -c busybox
    I0306 11:08:47.587727       1 main.go:39] request sidecar server success, and response(body=This is version(v1) sidecar)
    I0404 09:14:28.588973       1 main.go:39] request sidecar server success, and response(body=This is version(v2) sidecar)
    # ......

The complete hot upgrade example code is available in the repository: https://github.com/openkruise/samples/tree/master/hotupgrade.

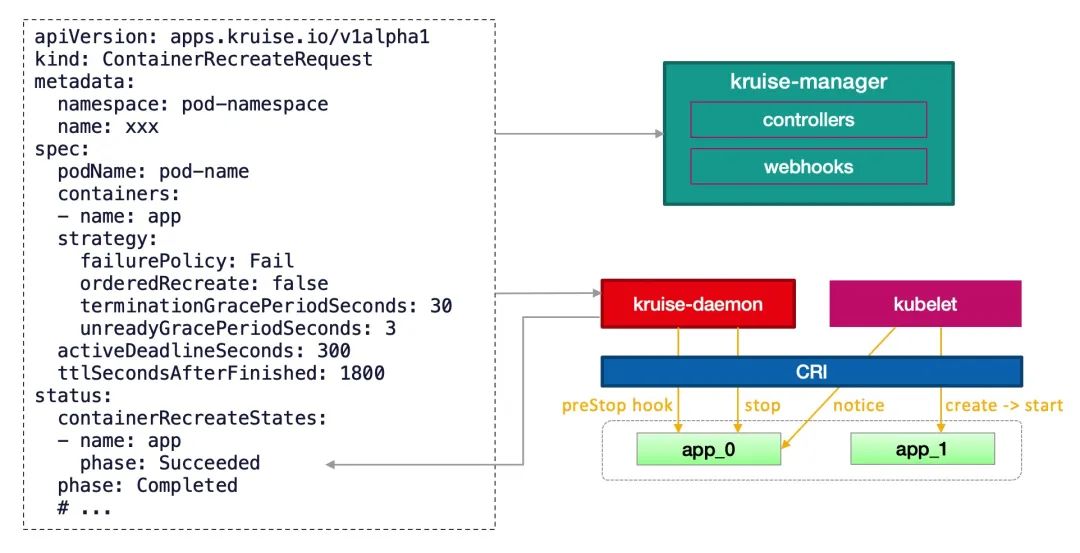

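The ContainerRecreateRequest controller helps users restart/recreate one or more containers in an existing Pod. Similar to the in-place upgrade provided by Kruise, while one container is being recreated, the other containers in the Pod keep running normally. After the recreation completes, nothing in the Pod changes except that the container's restartCount increases.

Note, however, that files temporarily written to the old container's rootfs are lost, while data in volume mounts is preserved. This feature relies on the kruise-daemon component to stop the Pod's containers.

Submit a ContainerRecreateRequest custom resource (CRR for short) for the Pod whose containers should be recreated: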

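    # crr-demo.yaml
    apiVersion: apps.kruise.io/v1alpha1
    kind: ContainerRecreateRequest
    metadata:
      name: crr-dmo
    spec:
      podName: pod-name
      containers: # names of the containers to recreate, at least 1
        - name: app
        - name: sidecar
      strategy:
        failurePolicy: Fail # 'Fail' or 'Ignore'; 'Fail' ends the CRR immediately once any container fails to stop or recreate
        orderedRecreate: false # 'true' waits for the previous container to finish recreating before starting the next
        terminationGracePeriodSeconds: 30 # time to wait for graceful container exit; defaults to the value defined in the Pod
        unreadyGracePeriodSeconds: 3 # set the Pod to not ready before recreating, then wait this long before starting
        minStartedSeconds: 10 # the new container must keep running at least this long to count as recreated successfully
      activeDeadlineSeconds: 300 # if the CRR runs longer than this, it is marked as finished (unfinished containers are marked failed)
      ttlSecondsAfterFinished: 1800 # the CRR is deleted automatically this long after it finishes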

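In general, the containers in the list are stopped one by one, but they may be recreated and started concurrently unless orderedRecreate is set to true. The unreadyGracePeriodSeconds feature depends on the KruisePodReadinessGate feature gate, which injects a readinessGate into every Pod at creation time. Without it, a readinessGate is injected only into Pods created by Kruise workloads, so only those Pods can use unreadyGracePeriodSeconds during a CRR recreation.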

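When a user creates a CRR, the Kruise webhook records the container's containerID/restartCount at that moment in spec.containers[x].statusContext. While kruise-daemon is executing, if it finds that the container's current containerID differs from statusContext or that restartCount has increased, it considers the container already recreated (for example, an in-place upgrade may have happened).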
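The statusContext comparison described above amounts to a simple predicate. The Python sketch below expresses it with plain dictionaries; the function name already_recreated is hypothetical, and the dictionary keys simply reuse the two field names mentioned in the text.

```python
def already_recreated(status_context, current):
    """Decide whether a container was recreated since the CRR was created.

    status_context: containerID/restartCount recorded by the webhook.
    current: containerID/restartCount observed on the running Pod.
    """
    id_changed = current["containerID"] != status_context["containerID"]
    restarted = current["restartCount"] > status_context["restartCount"]
    return id_changed or restarted


recorded = {"containerID": "containerd://aaa", "restartCount": 0}
print(already_recreated(recorded, {"containerID": "containerd://aaa", "restartCount": 0}))
print(already_recreated(recorded, {"containerID": "containerd://bbb", "restartCount": 1}))
```

Recording the snapshot at creation time is what lets kruise-daemon avoid stopping a container that something else, such as an in-place upgrade, has already replaced.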

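Container recreate request

Normally, kruise-daemon runs the preStop hook and then stops the container. The kubelet notices that the container has exited, creates a new container, and starts it. Finally, once kruise-daemon sees that the new container has been running for longer than minStartedSeconds, it reports the container's phase as Succeeded.

If a container recreation and an in-place upgrade are triggered at the same time:

- If the kubelet has already stopped or recreated the container because of the in-place upgrade, kruise-daemon considers the container recreated.
- If kruise-daemon stops the container first, the kubelet goes on to upgrade the container in place to the new image.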

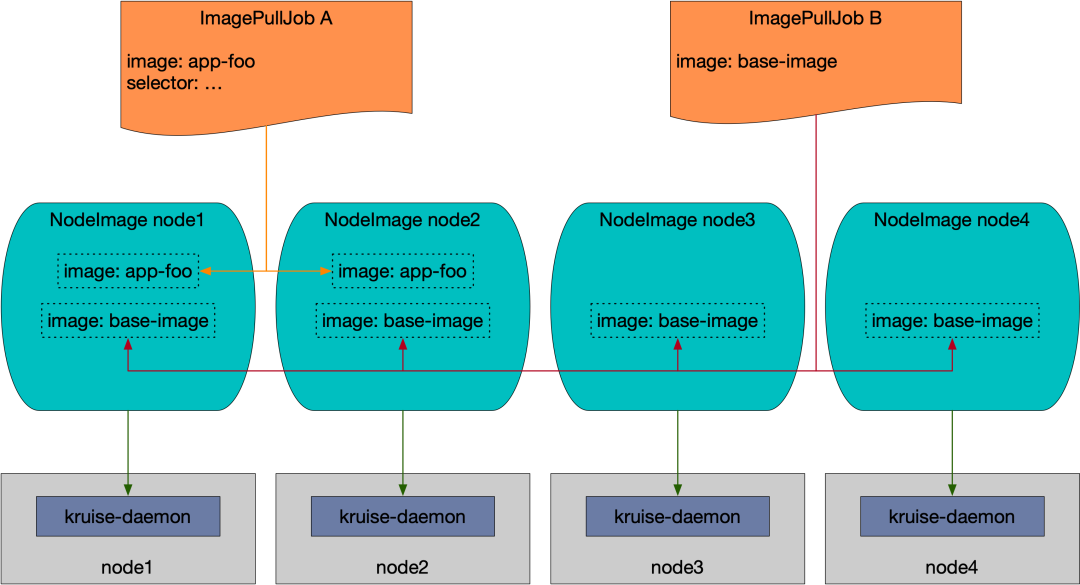

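NodeImage and ImagePullJob are CRDs available since Kruise v0.8.0. Kruise automatically creates a NodeImage for every node, which records the images that need to be pre-pulled on that node. For example, with 3 nodes here, 3 NodeImage objects are created automatically: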

    ➜ kubectl get nodeimage
    NAME      DESIRED   PULLING   SUCCEED   FAILED   AGE
    master1   0         0         0         0        5d
    node1     0         0         0         0        5d
    node2     0         0         0         0        5d

For example, let's look at the NodeImage object of node1:

    ➜ kubectl get nodeimage node1 -o yaml
    apiVersion: apps.kruise.io/v1alpha1
    kind: NodeImage
    metadata:
      name: node1
      # ......
    spec: {}
    status:
      desired: 0
      failed: 0
      pulling: 0
      succeeded: 0

For example, to pull the ubuntu:latest image on this node, modify spec as shown below:

    ......
    spec:
      images:
        ubuntu: # image name
          tags:
            - tag: latest # image tag
              pullPolicy:
                ttlSecondsAfterFinished: 300 # [required] clear this task from the NodeImage 300s after the pull finishes (success or failure)
                timeoutSeconds: 600 # [optional] timeout of each pull attempt, defaults to 600
                backoffLimit: 3 # [optional] number of pull retries, defaults to 3
                activeDeadlineSeconds: 1200 # [optional] timeout of the whole task, no default

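After the update, the pull progress and result are visible in status, and the task is cleared 300s after the pull completes, as set by ttlSecondsAfterFinished.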

    ➜ kubectl describe nodeimage node1
    Name:         node1
    Namespace:
    # ......
    Spec:
      Images:
        Ubuntu:
          Tags:
            Created At:  2023-04-04T09:29:18Z
            Pull Policy:
              Active Deadline Seconds:     1200
              Backoff Limit:               3
              Timeout Seconds:             600
              Ttl Seconds After Finished:  300
            Tag:                           latest
    Status:
      Desired:  1
      Failed:   0
      Image Statuses:
        Ubuntu:
          Tags:
            Completion Time:  2023-04-04T09:29:28Z
            Phase:            Succeeded
            Progress:         100
            Start Time:       2023-04-04T09:29:18Z
            Tag:              latest
      Pulling:    0
      Succeeded:  1
    Events:
      Type    Reason            Age   From                       Message
      ----    ------            ----  ----                       -------
      Normal  PullImageSucceed  11s   kruise-daemon-imagepuller  Image ubuntu:latest, ecalpsedTime 10.066193581s

On node1 we can see that the image has been pulled:

    ubuntu@node1:~$ sudo ctr -n k8s.io i ls | grep ubuntu
    docker.io/library/ubuntu:latest  application/vnd.oci.image.index.v1+json  sha256:67211c14fa74f070d27cc59d69a7fa9aeff8e28ea118ef3babc295a0428a6d21  28.2 MiB  linux/amd64,linux/arm/v7,linux/arm64/v8,linux/ppc64le,linux/s390x  io.cri-containerd.image=managed
    docker.io/library/ubuntu@sha256:67211c14fa74f070d27cc59d69a7fa9aeff8e28ea118ef3babc295a0428a6d21  application/vnd.oci.image.index.v1+json  sha256:67211c14fa74f070d27cc59d69a7fa9aeff8e28ea118ef3babc295a0428a6d21  28.2 MiB  linux/amd64,linux/arm/v7,linux/arm64/v8,linux/ppc64le,linux/s390x  io.cri-containerd.image=managed

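In addition, users can create an ImagePullJob object to specify which nodes an image should be pre-pulled on.

For example, create an ImagePullJob resource object as shown below: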

    apiVersion: apps.kruise.io/v1alpha1
    kind: ImagePullJob
    metadata:
      name: job-with-always
    spec:
      image: nginx:1.9.1 # [required] full image name in name:tag form
      parallelism: 10 # [optional] maximum number of nodes pulling concurrently, defaults to 1
      selector: # [optional] node name list or label selector (only one of the two may be set)
        names:
          - node1
          - node2
        # matchLabels: # alternative to names
        #   node-type: xxx
      # podSelector: # [optional] Pod label selector; pulls the image on the nodes where these Pods run. Cannot be set together with selector.
      #   pod-label: xxx
      completionPolicy:
        type: Always # [optional] defaults to Always
        activeDeadlineSeconds: 1200 # [optional] no default, only takes effect for the Always type
        ttlSecondsAfterFinished: 300 # [optional] no default, only takes effect for the Always type
      pullPolicy: # [optional] defaults to backoffLimit=3, timeoutSeconds=600
        backoffLimit: 3
        timeoutSeconds: 300
      pullSecrets:
        - secret-name1
        - secret-name2

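In the selector field you can specify either a list of node names or a label selector (only one of the two). If selector is not set, all nodes are selected for pre-pulling. Alternatively, configure podSelector to pull the image on the nodes where the matching Pods run; podSelector and selector cannot be set at the same time.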
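The node selection rules just described can be summarized in a short Python sketch. It only models the names/matchLabels part of selector (podSelector is left out), and the function name select_nodes and the error message are hypothetical.

```python
def select_nodes(nodes, names=None, match_labels=None):
    """Pick the nodes an image should be pre-pulled on.

    nodes maps node name -> labels. names and match_labels are
    mutually exclusive; with neither set, every node is selected.
    """
    if names and match_labels:
        raise ValueError("set either names or matchLabels, not both")
    if names:
        wanted = set(names)
        return [n for n in nodes if n in wanted]
    if match_labels:
        return [
            n for n, labels in nodes.items()
            if all(labels.get(k) == v for k, v in match_labels.items())
        ]
    return list(nodes)


cluster = {
    "master1": {"node-type": "control"},
    "node1": {"node-type": "worker"},
    "node2": {"node-type": "worker"},
}
print(select_nodes(cluster, names=["node1", "node2"]))
print(select_nodes(cluster))
```

Rejecting the combined form early mirrors the "only one of the two" rule, so a misconfigured job fails fast instead of silently pulling on an unexpected set of nodes.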

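ImagePullJob has two completionPolicy types:

- Always: a one-off pre-pull. The job ends once it has run, whether it succeeds or fails. activeDeadlineSeconds and ttlSecondsAfterFinished apply only to this type.
- Never: a long-running job that never completes and keeps pre-pulling the image on the matching nodes.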

If the image to pre-pull comes from a private registry, use pullSecrets to specify the registry's Secret information.

    # ...
    spec:
      pullSecrets:
        - secret-name1
        - secret-name2

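Because ImagePullJob is a namespace-scoped resource, these Secrets must exist in the same namespace as the ImagePullJob. Then simply list the Secret names in the pullSecrets field.

Container Launch Priority provides a way to control the start order of the containers in a Pod. Normally, the start and exit order of Pod containers is managed by the kubelet. Kubernetes once had a KEP that planned to add a type field to containers to mark the start/stop priority of different container types, but sig-node rejected the proposal because it would require too many changes to the existing code architecture.

This feature acts on Pod objects regardless of the owner type, so it works with Deployment, CloneSet, and other workloads.

For example, to start containers in the order they are declared, simply define the apps.kruise.io/container-launch-priority annotation on the Pod: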

    apiVersion: v1
    kind: Pod
    metadata:
      annotations:
        apps.kruise.io/container-launch-priority: Ordered
    spec:
      containers:
        - name: sidecar
          # ...
        - name: main
          # ...

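Kruise guarantees that the earlier container (sidecar) starts before the later container (main).

We can also start containers in a custom order, which requires adding the KRUISE_CONTAINER_PRIORITY environment variable to the Pod's containers: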

    apiVersion: v1
    kind: Pod
    spec:
      containers:
        - name: main
          # ...
        - name: sidecar
          env:
            - name: KRUISE_CONTAINER_PRIORITY
              value: "1"
          # ...

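The value of this environment variable ranges over [-2147483647, 2147483647] and defaults to 0 when omitted. Containers with a higher priority are guaranteed to start before containers with a lower priority, but the start order of containers with the same priority is not guaranteed.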
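The ordering rule can be sketched in Python as a sort over container definitions. This models only the resulting order, not how Kruise actually delays container startup, and the function name launch_order is hypothetical. Note that the sketch keeps declaration order for equal priorities purely because Python's sort is stable; per the text above, real clusters give no such guarantee.

```python
def launch_order(containers, ordered=False):
    """Return container names in the order they are expected to start."""
    if ordered:
        # Annotation value "Ordered": follow the declaration order.
        return [c["name"] for c in containers]

    def priority(container):
        for env in container.get("env", []):
            if env["name"] == "KRUISE_CONTAINER_PRIORITY":
                return int(env["value"])
        return 0  # default when the variable is not set

    # Higher priority starts first.
    return [c["name"] for c in sorted(containers, key=priority, reverse=True)]


pod_containers = [
    {"name": "main"},
    {"name": "sidecar",
     "env": [{"name": "KRUISE_CONTAINER_PRIORITY", "value": "1"}]},
]
print(launch_order(pod_containers))
```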

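When namespaced resources such as Secrets and ConfigMaps need to be distributed and synchronized across namespaces, native Kubernetes currently only supports manual distribution and synchronization by the user, which is very inconvenient.

For these scenarios that require distributing resources across namespaces and synchronizing them repeatedly, OpenKruise designed a new CRD, ResourceDistribution, which distributes and synchronizes these resources automatically and more conveniently.

ResourceDistribution currently supports distributing and synchronizing two resource types: Secret and ConfigMap.

ResourceDistribution is a cluster-scoped CRD. It mainly consists of two fields, resource and targets: resource describes the resource to distribute, and targets describes the namespaces to distribute it to.

    apiVersion: apps.kruise.io/v1alpha1
    kind: ResourceDistribution
    metadata:
      name: sample
    spec:
      resource:
        ... ...
      targets:
        ... ...

The resource field must be a complete and correct resource description, as shown below: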

    apiVersion: apps.kruise.io/v1alpha1
    kind: ResourceDistribution
    metadata:
      name: sample
    spec:
      resource:
        apiVersion: v1
        kind: ConfigMap
        metadata:
          name: game-demo
        data:
          game.properties: |
            enemy.types=aliens,monsters
            player.maximum-lives=5
          player_initial_lives: "3"
          ui_properties_file_name: user-interface.properties
          user-interface.properties: |
            color.good=purple
            color.bad=yellow
            allow.textmode=true
      targets:
        ... ...

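Users can first create the resource in a local namespace and test it, then copy it over once the configuration is confirmed to be correct.

The targets field currently supports four rules to describe the target namespaces:

- allNamespaces: when set to true, every namespace is a target
- includedNamespaces: a list of namespace names to include
- namespaceLabelSelector: a label selector; namespaces whose labels match are included
- excludedNamespaces: a list of namespace names to exclude

allNamespaces, includedNamespaces, and namespaceLabelSelector are combined with **OR**, while excludedNamespaces, once configured, explicitly excludes the listed namespaces. In addition, targets automatically ignores the kube-system and kube-public namespaces.

A correctly configured targets field looks like this: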

    apiVersion: apps.kruise.io/v1alpha1
    kind: ResourceDistribution
    metadata:
      name: sample
    spec:
      resource:
        ... ...
      targets:
        includedNamespaces:
          list:
            - name: ns-1
            - name: ns-4
        namespaceLabelSelector:
          matchLabels:
            group: test
        excludedNamespaces:
          list:
            - name: ns-3

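This configuration means the target namespaces of this ResourceDistribution always include ns-1 and ns-4, and namespaces whose labels match namespaceLabelSelector are included as well. However, ns-3 is not included even if it matches namespaceLabelSelector, because it is explicitly excluded in excludedNamespaces.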
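The way the four rules combine can be written as a set computation. The Python sketch below is a minimal model under the rules stated above (OR between the three inclusion rules, explicit exclusion, kube-system and kube-public always ignored); the function name target_namespaces and the sample namespaces ns-2 and ns-5 are hypothetical.

```python
IGNORED = {"kube-system", "kube-public"}


def target_namespaces(namespaces, all_namespaces=False, included=(),
                      label_selector=None, excluded=()):
    """Resolve the namespaces a resource is distributed to.

    namespaces maps namespace name -> labels.
    """
    selected = set()
    for name, labels in namespaces.items():
        by_label = label_selector is not None and all(
            labels.get(k) == v for k, v in label_selector.items()
        )
        # The three inclusion rules are OR-ed together.
        if all_namespaces or name in included or by_label:
            selected.add(name)
    # Exclusions and the always-ignored namespaces are removed last.
    return sorted(selected - set(excluded) - IGNORED)


cluster_namespaces = {
    "ns-1": {},
    "ns-2": {"group": "test"},
    "ns-3": {"group": "test"},
    "ns-4": {},
    "ns-5": {},
    "kube-system": {"group": "test"},
}
print(target_namespaces(
    cluster_namespaces,
    included=["ns-1", "ns-4"],
    label_selector={"group": "test"},
    excluded=["ns-3"],
))
```

Applying the exclusion after the union is what makes ns-3 drop out even though it matches the label selector.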

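If the synchronized resource needs to change, update the resource field; the resources in all target namespaces are then updated automatically. On every update, ResourceDistribution computes the hash of the new resource version and records it in the resource's annotations. The resource is updated only when ResourceDistribution finds that the hash of the new version differs from the hash of the current resource.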

    apiVersion: v1
    kind: ConfigMap
    metadata:
      name: demo
      annotations:
        kruise.io/resourcedistribution.resource.from: sample
        kruise.io/resourcedistribution.resource.distributed.timestamp: 2021-09-06 08:44:52.7861421 +0000 UTC m=+12896.810364601
        kruise.io/resourcedistribution.resource.hashcode: 0821a13321b2c76b5bd63341a0d97fb46bfdbb2f914e2ad6b613d10632fa4b63
    ... ...
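The hash-based change detection can be illustrated with a short Python sketch. The annotation key is taken from the example above, and the 64-character hex value there is consistent with SHA-256, but how Kruise serializes the resource before hashing is not stated here. Hashing canonical JSON is therefore an assumption of this sketch, and its output will not match the hashes Kruise writes.

```python
import hashlib
import json

HASH_KEY = "kruise.io/resourcedistribution.resource.hashcode"


def resource_hash(resource):
    """SHA-256 over a canonical JSON form of the resource (an assumption)."""
    payload = json.dumps(resource, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def needs_update(desired, existing):
    """True if the distributed copy is missing or has a different hash."""
    annotations = (existing or {}).get("metadata", {}).get("annotations", {})
    return annotations.get(HASH_KEY) != resource_hash(desired)


config_map = {
    "apiVersion": "v1",
    "kind": "ConfigMap",
    "metadata": {"name": "game-demo"},
    "data": {"player_initial_lives": "3"},
}
distributed = {"metadata": {"annotations": {HASH_KEY: resource_hash(config_map)}}}
print(needs_update(config_map, distributed))
```

Comparing hashes instead of full objects keeps each sync cheap, and it also explains why manual edits to a distributed copy go unnoticed until the source resource changes.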

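Users are strongly discouraged from bypassing ResourceDistribution and modifying the resources directly, unless they know exactly what they are doing.

Beyond these enhanced controllers, OpenKruise has many more advanced features. Visit the official website https://openkruise.io to learn more.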
