Guide to using correct startup commands for Kafka cluster deployment

Kafka is a distributed stream processing platform that can handle large amounts of real-time data. It can be used to build a variety of applications such as real-time data analysis, machine learning, and fraud detection.

To deploy a Kafka cluster, you need to install Kafka software on each server. Then you need to configure each server so that they can communicate with each other. Finally, you need to start the cluster.

Install Kafka software

Download the Kafka binary release from the Apache Kafka website (https://kafka.apache.org/downloads). Once the download is complete, extract the archive into a directory on each server.

Configuring Kafka Server

To configure a Kafka server, edit its config/server.properties file. The key settings are:

- broker.id: A unique integer ID for each server.

- listeners: The address and port the server listens on.

- log.dirs: The directory where Kafka stores its data (the partition log files, not the application logs).

- zookeeper.connect: The address of the ZooKeeper cluster, as a comma-separated list of host:port pairs.
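The four settings above can be sketched as a small shell helper that prints a per-broker configuration. This is only an illustration: `print_broker_config` is a hypothetical function, and the host names, port 9092, and the data directory are assumptions to replace with your own values.

```shell
#!/bin/sh
# Sketch: print a minimal server.properties for one broker.
# Host names, the port, and the data directory are illustrative assumptions.
set -eu

# Usage: print_broker_config BROKER_ID HOST
print_broker_config() {
  cat <<EOF
# Unique integer ID; must differ on every server
broker.id=$1
# Address and port this broker listens on
listeners=PLAINTEXT://$2:9092
# Directory for the partition data files
log.dirs=/var/lib/kafka/data
# Comma-separated host:port list of the ZooKeeper cluster
zookeeper.connect=zk1.example.com:2181,zk2.example.com:2181,zk3.example.com:2181
EOF
}

# Print the config for broker 1; redirect the output to
# config/server.properties on that server to use it.
print_broker_config 1 kafka1.example.com
```

Only broker.id and the listener host change from server to server here; the ZooKeeper address is the same on every broker so that they all join the same cluster.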

Start Kafka Cluster

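To start the Kafka cluster, make sure ZooKeeper is already running, then run the following command from the Kafka installation directory on each server:

bin/kafka-server-start.sh config/server.properties

This starts the Kafka server in the foreground. To verify that a server is running, you can query it from the same machine (this assumes the listener is on port 9092):

bin/kafka-broker-api-versions.sh --bootstrap-server localhost:9092

If the server is up, this prints the broker's address and the API versions it supports.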

Create a topic

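To create a topic, run the following command (replace localhost:9092 with the address of any broker in the cluster):

bin/kafka-topics.sh --create --topic my-topic --partitions 3 --replication-factor 2 --bootstrap-server localhost:9092

This creates a topic named "my-topic" with 3 partitions and a replication factor of 2, so each partition is stored on two brokers.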
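A replication factor of 2 needs at least two running brokers; Kafka rejects a topic whose replication factor exceeds the number of available brokers. A minimal pre-flight sketch of that rule follows. `check_replication` is a hypothetical helper, not part of Kafka, and it assumes you already know how many brokers are running.

```shell
#!/bin/sh
# Sketch: refuse a replication factor larger than the number of brokers.
# check_replication is a hypothetical helper, not a Kafka tool.
set -eu

# Usage: check_replication REPLICATION_FACTOR BROKER_COUNT
check_replication() {
  if [ "$1" -gt "$2" ]; then
    echo "replication factor $1 exceeds broker count $2" >&2
    return 1
  fi
  echo "ok: replication factor $1 fits $2 brokers"
}

# A replication factor of 2 on a three-broker cluster is fine.
check_replication 2 3
```

In a deployment script, a check like this could run before the topic creation command so that the failure is reported with a clear message.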

Producing data

To produce data to the topic, run the following command:

bin/kafka-console-producer.sh --topic my-topic --bootstrap-server localhost:9092

This opens a console where each line you enter is sent to the topic as a message.

Consuming Data

To consume data from a topic, run the following command:

bin/kafka-console-consumer.sh --topic my-topic --from-beginning --bootstrap-server localhost:9092

This opens a console that prints the messages in the topic, starting from the oldest one.

Manage cluster

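You can use the following command-line tools in the bin directory to manage the Kafka cluster:

- kafka-topics.sh: Create, list, describe, alter, and delete topics.

- kafka-reassign-partitions.sh: Move partitions and their replicas between brokers.

- kafka-leader-election.sh: Trigger leader election among a partition's replicas.

- kafka-consumer-groups.sh: List and describe consumer groups and reset their offsets.

- kafka-producer-perf-test.sh: Load-test producers against the cluster.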

Troubleshooting

If you are having trouble using Kafka, you can check out the following resources:

- Apache Kafka Documentation: https://kafka.apache.org/documentation/

- Kafka User Forum: https://groups.google.com/g/kafka-users

- Kafka JIRA: https://issues.apache.org/jira/projects/KAFKA

Hot AI Tools

Undress AI Tool

Undress images for free

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Clothoff.io

AI clothes remover

Video Face Swap

Swap faces in any video effortlessly with our completely free AI face swap tool!

Hot Article

Hot Tools

Notepad++7.3.1

Easy-to-use and free code editor

SublimeText3 Chinese version

Chinese version, very easy to use

Zend Studio 13.0.1

Powerful PHP integrated development environment

Dreamweaver CS6

Visual web development tools

SublimeText3 Mac version

God-level code editing software (SublimeText3)

Generative AI brings real-time supply chains closer to reality

Apr 17, 2024 pm 05:25 PM

Generative AI brings real-time supply chains closer to reality

Apr 17, 2024 pm 05:25 PM

Generative artificial intelligence is affecting or expected to affect many industries, and the time for supply chain network transformation is ripe. Generative AI promises to significantly facilitate real-time interactions and information in the supply chain, from planning to procurement, manufacturing and fulfillment. The impact on productivity of all these processes is significant. A new study from Accenture calculates that all working hours of end-to-end supply chain activities of more than 40% of enterprises (43%) may be affected by production artificial intelligence. In addition, 29% of the working time in the entire supply chain can be automated through production AI, while 14% of the working time in the entire supply chain can be significantly increased through production AI. This emerging technology has the potential to impact the entire supply chain, from design and planning, to sourcing and manufacturing, to fulfillment.

Best practices for real-time data analysis using PHP and MQTT

Jul 08, 2023 pm 05:57 PM

Best practices for real-time data analysis using PHP and MQTT

Jul 08, 2023 pm 05:57 PM

Best practices for real-time data analysis using PHP and MQTT With the rapid development of IoT and big data technology, real-time data analysis is becoming more and more important in various industries. In real-time data analysis, MQTT (MQTelemetryTransport), as a lightweight communication protocol, is widely used in the field of Internet of Things. Combining PHP and MQTT, real-time data analysis can be achieved quickly and efficiently. This article will introduce best practices for real-time data analysis using PHP and MQTT, and

How to use Java to develop a real-time data analysis application based on Apache Kafka

Sep 20, 2023 am 08:21 AM

How to use Java to develop a real-time data analysis application based on Apache Kafka

Sep 20, 2023 am 08:21 AM

How to use Java to develop a real-time data analysis application based on Apache Kafka. With the rapid development of big data, real-time data analysis applications have become an indispensable part of enterprises. Apache Kafka, as the most popular distributed message queue system at present, provides powerful support for the collection and processing of real-time data. This article will lead readers to learn how to use Java to develop a real-time data analysis application based on Apache Kafka, and attach specific code examples. Prepare

Golang development: building a real-time data analysis system

Sep 21, 2023 am 09:21 AM

Golang development: building a real-time data analysis system

Sep 21, 2023 am 09:21 AM

Golang development: Building a real-time data analysis system In the context of modern technology development, data analysis is becoming an important part of corporate decision-making and business development. In order to understand and utilize data in real time, it is critical to build a real-time data analysis system. This article will introduce how to use the Golang programming language to build an efficient real-time data analysis system and provide specific code examples. System architecture design An efficient real-time data analysis system usually requires the following core components: Data source: The data source can be a database, consumer

The top ten free platform recommendations for real-time data on currency circle markets are released

Apr 22, 2025 am 08:12 AM

The top ten free platform recommendations for real-time data on currency circle markets are released

Apr 22, 2025 am 08:12 AM

Cryptocurrency data platforms suitable for beginners include CoinMarketCap and non-small trumpet. 1. CoinMarketCap provides global real-time price, market value, and trading volume rankings for novice and basic analysis needs. 2. The non-small quotation provides a Chinese-friendly interface, suitable for Chinese users to quickly screen low-risk potential projects.

In-depth analysis of MongoDB cluster deployment and capacity planning

Nov 04, 2023 pm 03:18 PM

In-depth analysis of MongoDB cluster deployment and capacity planning

Nov 04, 2023 pm 03:18 PM

MongoDB is a non-relational database that has been widely used in many large enterprises. Compared with traditional relational databases, MongoDB has excellent flexibility and scalability. This article will delve into the deployment and capacity planning of MongoDB clusters to help readers better understand and apply MongoDB. 1. The concept of MongoDB cluster MongoDB cluster is composed of multiple MongoDB instances. The instance can be a single MongoDB process running on different machines.

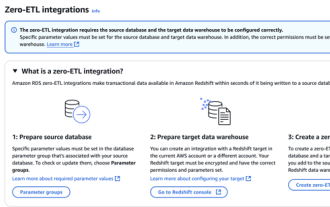

RDS MySQL integration with Redshift zero ETL

Apr 08, 2025 pm 07:06 PM

RDS MySQL integration with Redshift zero ETL

Apr 08, 2025 pm 07:06 PM

Data Integration Simplification: AmazonRDSMySQL and Redshift's zero ETL integration Efficient data integration is at the heart of a data-driven organization. Traditional ETL (extract, convert, load) processes are complex and time-consuming, especially when integrating databases (such as AmazonRDSMySQL) with data warehouses (such as Redshift). However, AWS provides zero ETL integration solutions that have completely changed this situation, providing a simplified, near-real-time solution for data migration from RDSMySQL to Redshift. This article will dive into RDSMySQL zero ETL integration with Redshift, explaining how it works and the advantages it brings to data engineers and developers.

What is mongodb used for?

Apr 02, 2024 pm 12:42 PM

What is mongodb used for?

Apr 02, 2024 pm 12:42 PM

MongoDB is a document-based, distributed database suitable for storing large data sets, managing unstructured data, application development, real-time analytics, and cloud storage with flexibility, scalability, high performance, ease of use, and Community support and other advantages.