In 2023, the dominance of the Transformer in large AI models began to be challenged by a new architecture called "Mamba". Mamba is a selective state space model that matches the Transformer on language modeling and may even surpass it. It also scales linearly as the context length increases, which lets it handle million-length sequences and raises inference throughput by 5 times on real data. These gains have attracted wide attention and open up new possibilities for the AI field.
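To make the linear-scaling claim concrete, here is a minimal sketch of a state space recurrence whose step size depends on the input. It is a toy under stated assumptions, not the Mamba authors' implementation: the state is a single scalar, the input and output projections are fixed to 1, and the function name `selective_scan` and the softplus step size are illustrative choices.

```python
import math

def selective_scan(xs, a=-1.0):
    """Toy 1-D state space recurrence with an input-dependent step size.

    Assumptions (hypothetical simplifications): scalar state, B = C = 1,
    and step size delta = softplus(x). Not the authors' implementation.
    """
    h = 0.0                                # fixed-size state, independent of sequence length
    ys = []
    for x in xs:                           # a single pass over the sequence: O(L) time
        delta = math.log1p(math.exp(x))    # softplus keeps the step size positive
        a_bar = math.exp(delta * a)        # discretized decay, between 0 and 1 when a < 0
        h = a_bar * h + delta * x          # how much is kept or overwritten depends on x
        ys.append(h)                       # readout with C = 1
    return ys

outputs = selective_scan([0.5, -1.0, 2.0, 0.0])
print(len(outputs))  # one output per input token
```

Each token costs a constant amount of work and the state never grows, so doubling the sequence length doubles the work. Self-attention, by contrast, compares every token with every other token, which is where its quadratic cost comes from.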

In little more than a month after its release, Mamba began to show its influence, spawning projects such as MoE-Mamba, Vision Mamba, VMamba, U-Mamba, and MambaByte. It has shown great potential for overcoming the shortcomings of the Transformer, and these follow-up projects point to its continued development.

However, this rising "star" hit a setback at ICLR 2024. The latest public results show that the decision on the Mamba paper is still pending. Its name appears only in the pending-decision column, and it is unclear whether the decision was delayed or the paper was rejected.

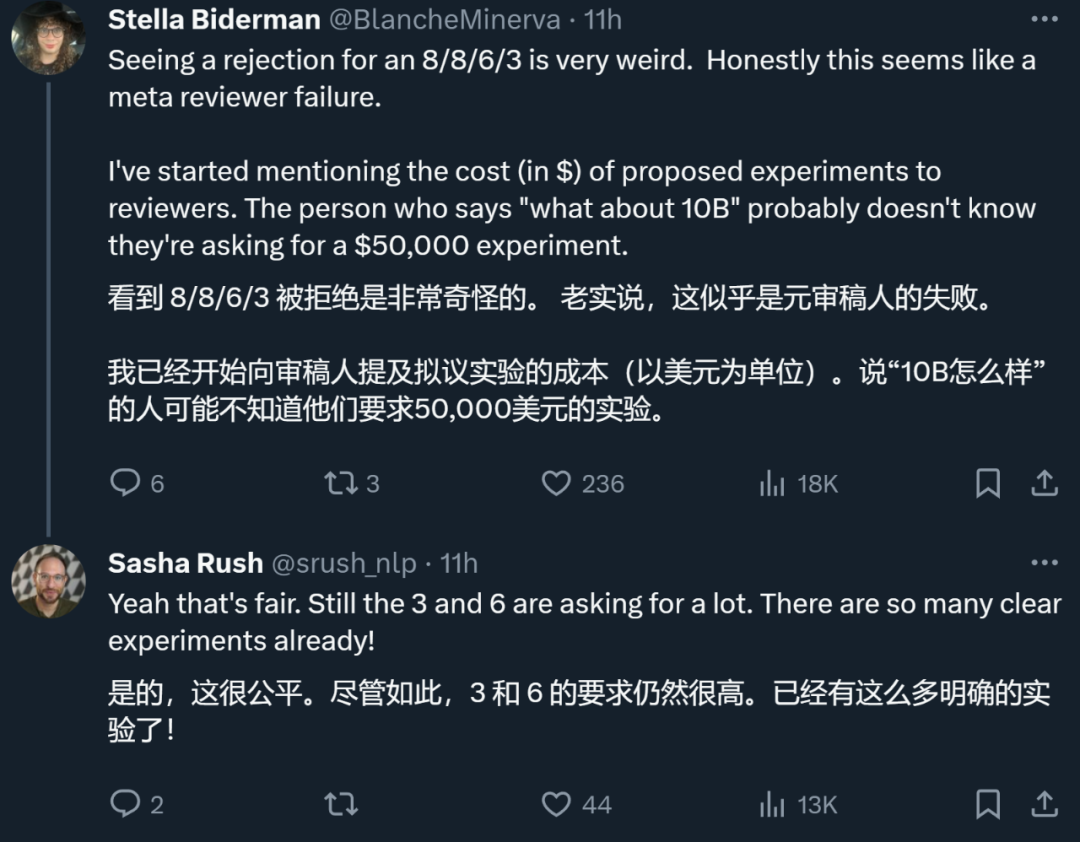

Overall, Mamba received scores of 8/8/6/3 from four reviewers. Some commenters said it would be puzzling for a paper with such scores to be rejected.

To understand the reason, we have to look at what the reviewers who gave low scores said.

Paper review page: https://openreview.net/forum?id=AL1fq05o7H

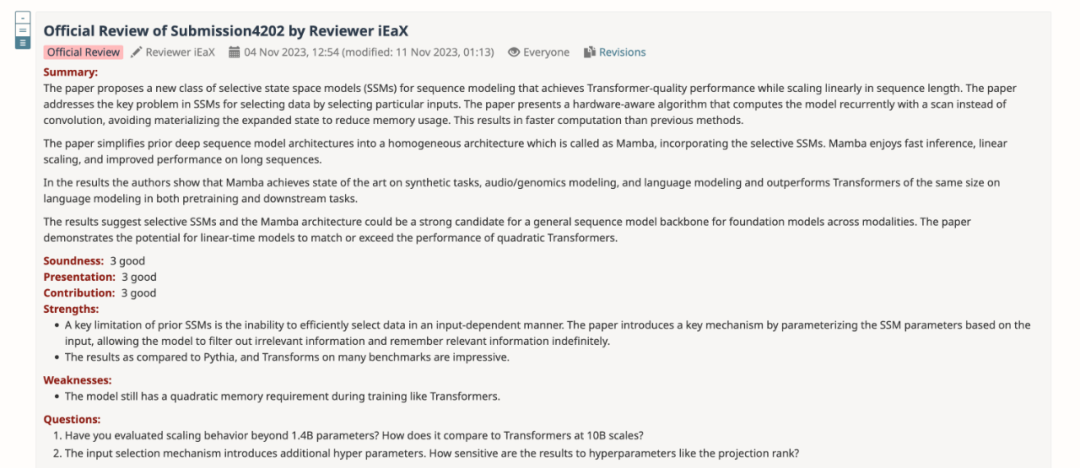

In the review feedback, the reviewer who gave a score of "3: reject, not good enough" raised several concerns about Mamba:

Thoughts on model design:

Thoughts on the experiment:

Additionally, another reviewer pointed out a shortcoming of Mamba: like Transformers, the model still has quadratic memory requirements during training.

After reviewing the comments from all reviewers, the authors revised the paper and added new experimental results and analysis:

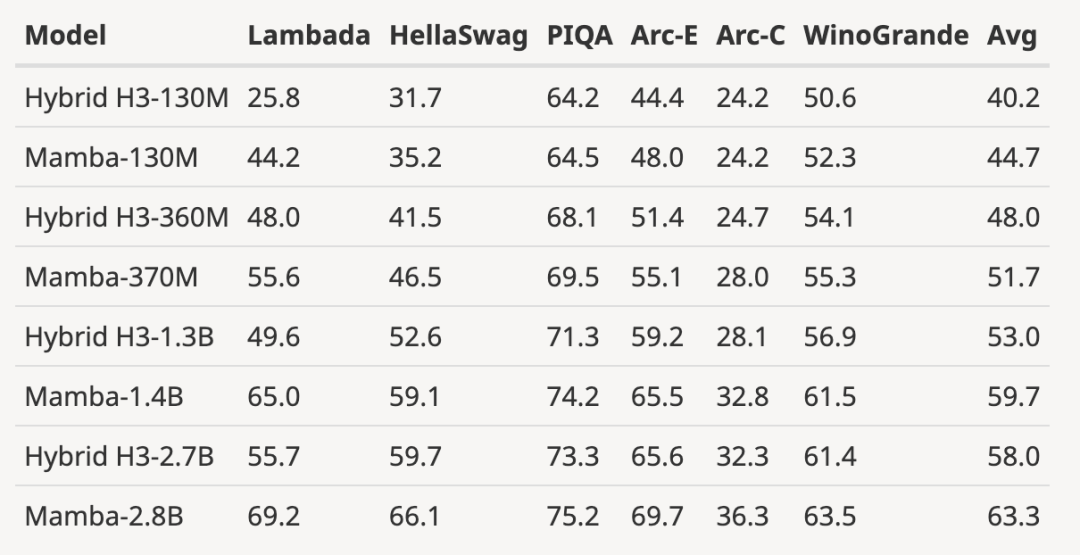

The authors downloaded pretrained H3 models with 125M to 2.7B parameters and ran a series of evaluations. Mamba is significantly better on all language evaluations. It is worth noting that these H3 models are hybrid models that use quadratic attention, while the authors' pure model, which uses only linear-time Mamba layers, is significantly better on every metric.

The evaluation comparison with the pre-trained H3 model is as follows:

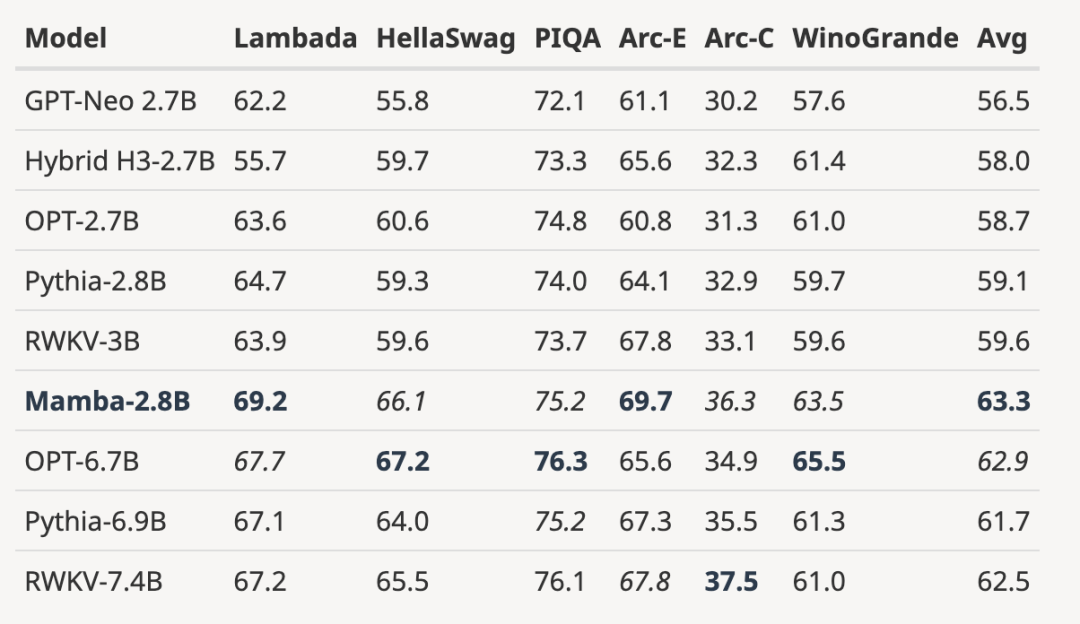

As shown in the figure below, Mamba is superior on every evaluation when compared with open-source 3B models trained on the same number of tokens (300B). It is even comparable to 7B-scale models: when Mamba (2.8B) is compared with OPT, Pythia, and RWKV (7B), Mamba achieves the best average score and the best or second-best score on every benchmark.

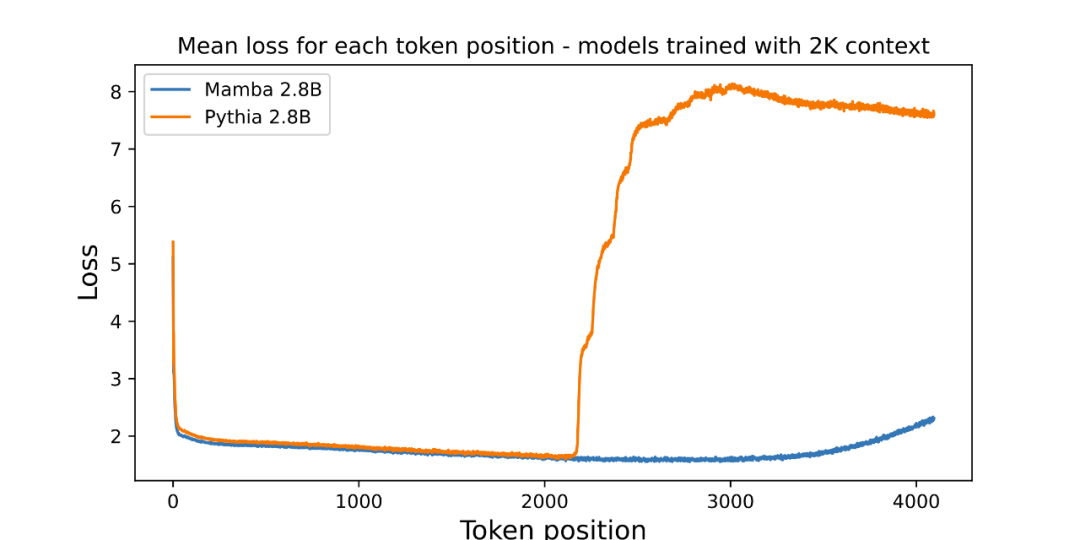

The authors also attached a figure evaluating the length extrapolation of the pretrained 3B-parameter language models:

The figure plots the average loss (log perplexity) per position. The perplexity of the first token is high because it has no context, while the perplexity of both Mamba and the baseline Transformer (Pythia) improves up to the training context length (2048). Interestingly, Mamba's perplexity continues to improve significantly beyond its training context, up to a length of around 3000.
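The link between per-position loss and perplexity used in this kind of plot can be sketched as follows. This is an illustrative example, not the authors' evaluation code: the function names and the toy loss values are assumptions, and losses are taken to be negative log-likelihoods in natural-log units.

```python
import math

def perplexity(token_losses):
    """Perplexity is the exponential of the mean negative log-likelihood."""
    return math.exp(sum(token_losses) / len(token_losses))

def per_position_perplexity(loss_rows):
    """Average the loss at each position across sequences, then exponentiate.

    loss_rows: list of equal-length lists, one list of per-token losses per sequence.
    """
    n = len(loss_rows)
    length = len(loss_rows[0])
    mean_loss = [sum(row[t] for row in loss_rows) / n for t in range(length)]
    return [math.exp(loss) for loss in mean_loss]

# Made-up losses for two short sequences: high at position 0, lower with more context.
toy_losses = [
    [3.0, 2.0, 1.5],
    [3.2, 1.8, 1.5],
]
print(per_position_perplexity(toy_losses))
```

Because the plotted quantity is log perplexity, a curve that keeps falling past position 2048 means the model is still predicting tokens better at positions it never saw during training.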

The authors emphasize that length extrapolation was not a direct motivation for the model in this paper, and that they treat it as an additional feature:

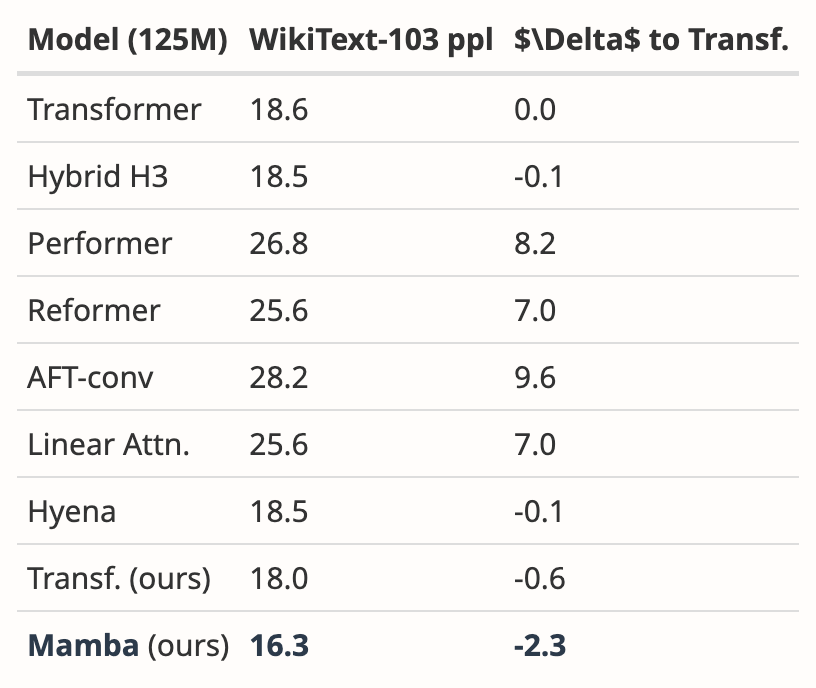

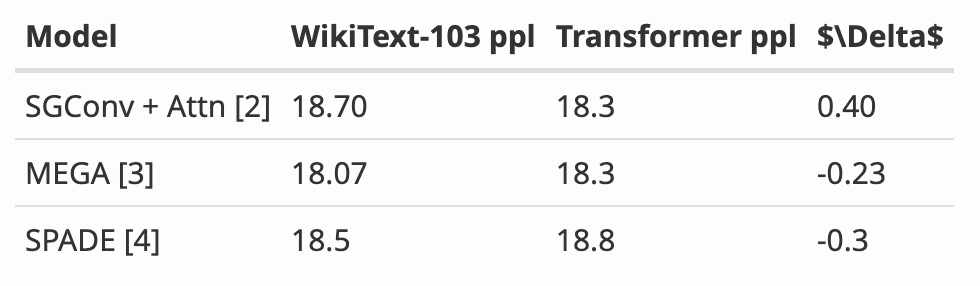

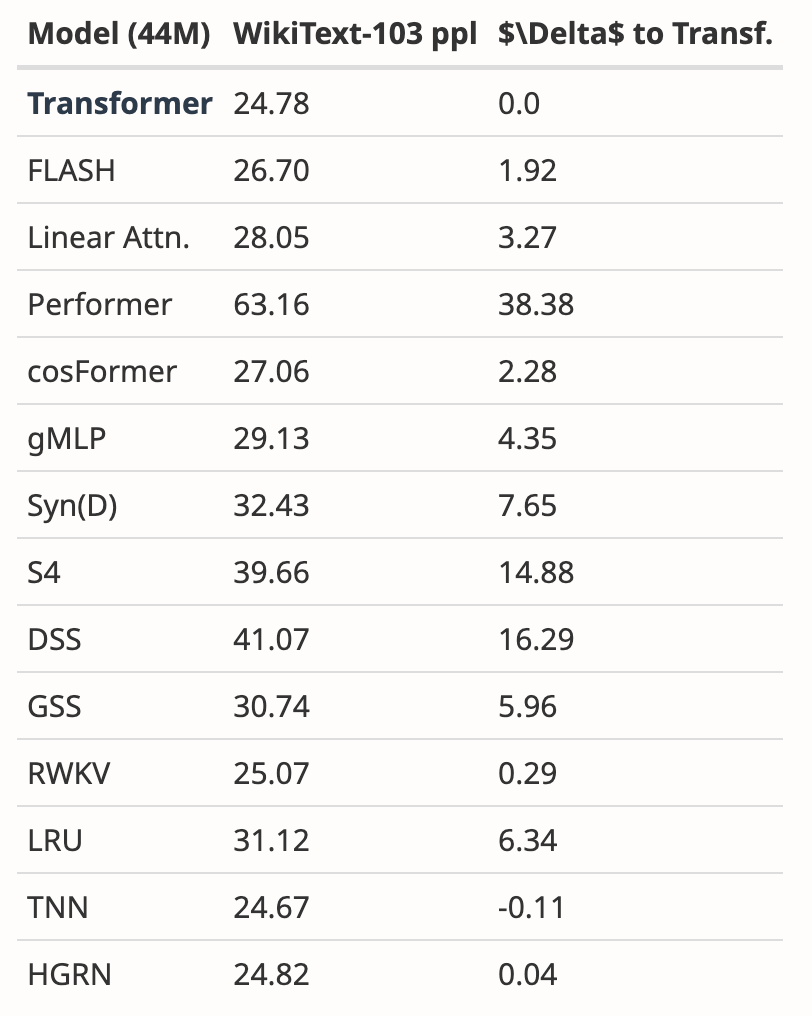

The authors compiled results from multiple papers, which show that Mamba performs significantly better on WikiText-103 than more than 20 other state-of-the-art sub-quadratic sequence models.

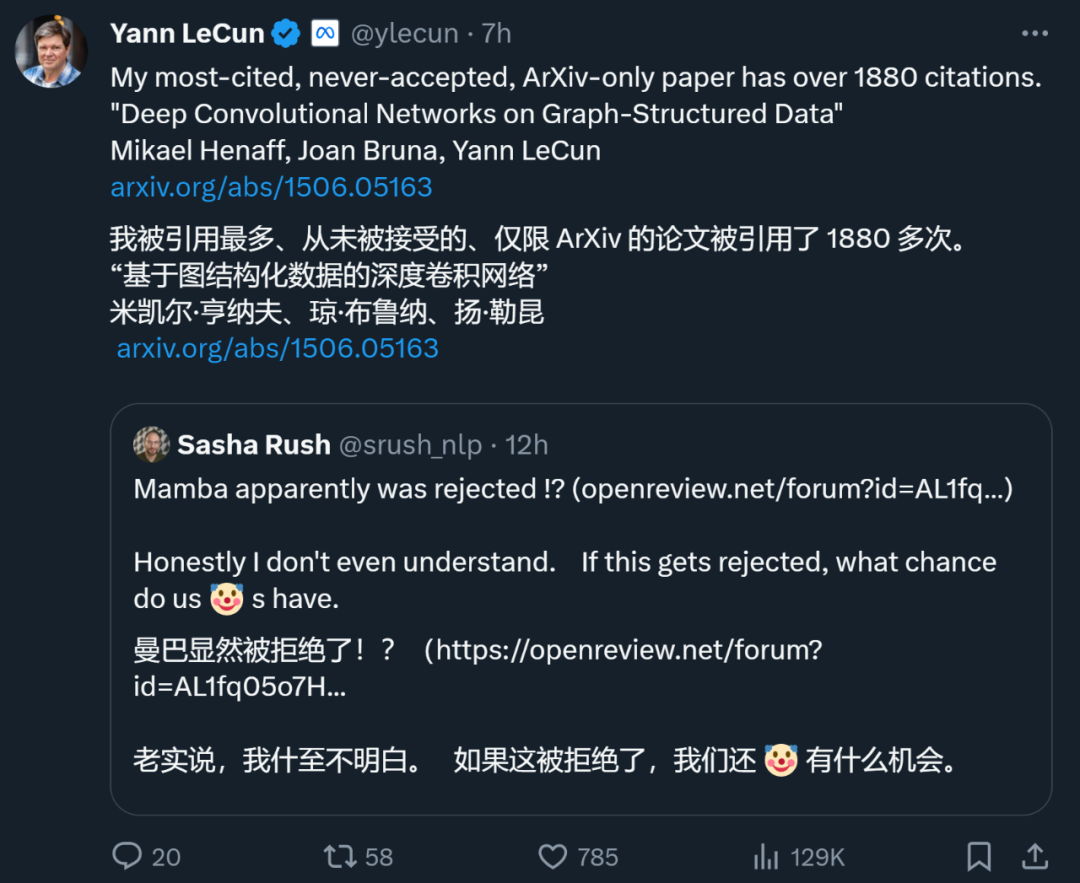

At major AI conferences, the explosion in the number of submissions is a headache, and reviewers with limited time and energy inevitably make mistakes. As a result, many famous papers have been rejected over the years, including YOLO, Transformer-XL, Dropout, support vector machines (SVM), knowledge distillation, SIFT, and PageRank, the webpage ranking algorithm behind Google's search engine (see: "The famous YOLO and PageRank influential research was rejected by the top CS conference").

Even Yann LeCun, one of the three giants of deep learning, is a prolific author whose papers are often rejected. He recently tweeted that his paper "Deep Convolutional Networks on Graph-Structured Data", which has been cited 1887 times, was also rejected by a top conference. During ICML 2022, he even "submitted three papers and had all three rejected."

So a rejection by a top conference does not mean a paper has no value. Many of the rejected papers mentioned above were moved to other conferences and eventually accepted. Netizens have therefore suggested that Mamba switch to COLM, a conference founded by young scholars such as Chen Danqi. COLM is an academic venue dedicated to language modeling research, focused on understanding, improving, and commenting on the development of language model technology, and it may be a better fit for papers like Mamba's.

However, regardless of whether Mamba is ultimately accepted by ICLR, it has become an influential work. It has given the community hope of breaking free from the constraints of the Transformer and has injected new vitality into the exploration of architectures beyond the traditional Transformer model.
