Zero-shot learning (ZSL) focuses on classifying categories that never appear during training. Semantics-based zero-shot learning transfers knowledge from seen classes to unseen classes through predefined high-level semantic information for each class. Conventional zero-shot learning only needs to recognize unseen classes at test time, whereas generalized zero-shot learning (GZSL) must recognize both seen and unseen classes. Its evaluation metric is the harmonic mean of the average per-class accuracy on seen classes and the average per-class accuracy on unseen classes.
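As a concrete reference, the metric can be computed as in the minimal Python sketch below. The function names and toy labels are our own; the sketch assumes, as is standard in GZSL, that predictions range over the union of seen and unseen classes and that accuracies are averaged per class before taking the harmonic mean.

```python
from collections import defaultdict


def per_class_mean_accuracy(y_true, y_pred, classes):
    """Mean of per-class accuracies over `classes` (classes with no test samples are skipped)."""
    correct, total = defaultdict(int), defaultdict(int)
    for t, p in zip(y_true, y_pred):
        if t in classes:
            total[t] += 1
            correct[t] += int(t == p)
    accs = [correct[c] / total[c] for c in classes if total[c] > 0]
    return sum(accs) / len(accs) if accs else 0.0


def gzsl_harmonic_mean(y_true, y_pred, seen, unseen):
    """Return (Acc_S, Acc_U, H); y_pred may contain any seen or unseen label."""
    acc_s = per_class_mean_accuracy(y_true, y_pred, seen)
    acc_u = per_class_mean_accuracy(y_true, y_pred, unseen)
    h = 0.0 if acc_s + acc_u == 0 else 2 * acc_s * acc_u / (acc_s + acc_u)
    return acc_s, acc_u, h


# Toy example: seen classes {0, 1}, unseen classes {2, 3}.
acc_s, acc_u, h = gzsl_harmonic_mean(
    y_true=[0, 0, 1, 1, 2, 2, 3, 3],
    y_pred=[0, 0, 1, 1, 2, 0, 3, 0],
    seen={0, 1},
    unseen={2, 3},
)
print(acc_s, acc_u, round(h, 4))  # 1.0 0.5 0.6667
```

Because H is a harmonic mean, it is dragged toward the weaker of the two accuracies, so a model cannot score well by excelling on seen classes alone.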

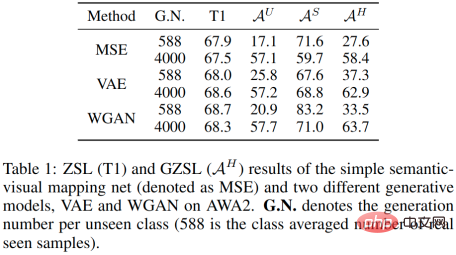

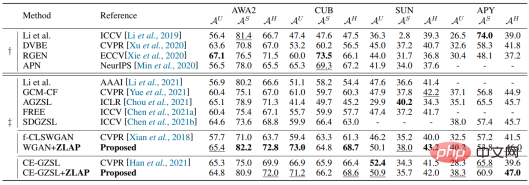

A common zero-shot learning strategy is to use seen-class samples and semantics to train a conditional generative model from the semantic space to the visual sample space, then use unseen-class semantics to generate unseen-class pseudo-samples, and finally train the classification network on seen-class samples together with unseen-class pseudo-samples. However, learning a good mapping between two modalities (the semantic modality and the visual modality) usually requires a large number of samples (cf. CLIP), which are not available in a conventional zero-shot learning setting. As a result, the visual sample distribution generated from unseen-class semantics usually deviates from the real sample distribution, which implies two things: 1. the unseen-class accuracy this approach can reach is limited; 2. when the average number of pseudo-samples generated per unseen class equals the average number of samples per seen class, there is a large gap between unseen-class accuracy and seen-class accuracy, as shown in Table 1 below.

We found that even if we only learn a mapping from semantics to class centers, mapping each unseen class's semantics to a single point and copying that point multiple times for classifier training achieves results close to those obtained with a generative model. This suggests that, from the classifier's point of view, the unseen-class pseudo-sample features produced by a generative model are relatively homogeneous.
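This copy-the-center baseline can be sketched as follows, under stated assumptions: the semantics-to-center mapping is represented by a hypothetical linear map `W` (the article does not say how the mapping is parameterized or trained), and each unseen class's predicted center is replicated `n_copies` times to serve as its pseudo-samples. All copies of a class are identical, which is the extreme case of the homogeneity described above.

```python
def semantic_to_center(W, a):
    """Hypothetical linear map: visual center = W @ a (W is a list of rows)."""
    return [sum(w * x for w, x in zip(row, a)) for row in W]


def replicated_pseudo_samples(W, unseen_semantics, n_copies):
    """Return (feature, label) pairs: each unseen class's predicted center copied n_copies times."""
    samples = []
    for label, a in unseen_semantics.items():
        center = semantic_to_center(W, a)
        samples.extend((list(center), label) for _ in range(n_copies))
    return samples


# Toy setting: 2-d semantic vectors mapped to 3-d visual features.
W = [[1.0, 0.0], [0.0, 2.0], [1.0, 1.0]]
pseudo = replicated_pseudo_samples(W, {2: [1.0, 0.0], 3: [0.0, 1.0]}, n_copies=10)
print(len(pseudo), pseudo[0], pseudo[10])  # 20 ([1.0, 0.0, 1.0], 2) ([0.0, 2.0, 1.0], 3)
```

These pairs would then be mixed with the real seen-class samples to train the classifier, exactly as generated pseudo-samples would be.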

Previous methods usually cater to the GZSL evaluation metric by generating a large number of unseen-class pseudo-samples (even though more samples do not help discriminate between unseen classes). However, work in long-tail learning has shown that this kind of resampling strategy causes the classifier to overfit to certain features, in this case the characteristics of pseudo-unseen classes that deviate from the real samples. This hurts the recognition of real samples from both seen and unseen classes. So, can we abandon the resampling strategy and instead use the offset and homogeneity of the generated unseen-class pseudo-samples (or the class imbalance between seen and unseen classes) as an inductive bias for classifier learning?

Based on this, we propose a plug-and-play classifier module that improves the performance of generative zero-shot learning methods by modifying just one line of code. Generating only 10 pseudo-samples per unseen class is enough to reach SOTA level, so compared with other generative zero-shot methods the new method has a large advantage in computational cost. The research team is from Nanjing University of Science and Technology and the University of Oxford.

Guided by the principle that training and testing objectives should be consistent, this article derives a variational lower bound of the GZSL evaluation metric. A classifier modeled this way avoids the resampling strategy and keeps the classifier from overfitting to the generated pseudo-samples, which would otherwise hurt the recognition of real samples. The proposed method makes embedding-based classifiers effective within the generative framework and reduces the classifier's dependence on the quality of the generated pseudo-samples.
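A toy illustration (made-up labels, not data from the article) of why maximizing global accuracy is misaligned with the GZSL metric: a classifier that is perfect on the abundant seen classes but never predicts an unseen class still scores 90% global accuracy, while its harmonic mean H is zero.

```python
def global_accuracy(y_true, y_pred):
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)


def mean_class_accuracy(y_true, y_pred, classes):
    """Average over `classes` of the fraction of each class's samples predicted correctly."""
    accs = []
    for c in classes:
        preds = [p for t, p in zip(y_true, y_pred) if t == c]
        if preds:
            accs.append(sum(p == c for p in preds) / len(preds))
    return sum(accs) / len(accs) if accs else 0.0


# Toy test set: 90 seen-class samples (classes 0, 1) and 10 unseen-class samples (classes 2, 3).
y_true = [0] * 45 + [1] * 45 + [2] * 5 + [3] * 5
# A classifier biased toward seen classes: perfect on seen, predicts class 0 for every unseen sample.
y_pred = [0] * 45 + [1] * 45 + [0] * 10

acc = global_accuracy(y_true, y_pred)
acc_s = mean_class_accuracy(y_true, y_pred, [0, 1])
acc_u = mean_class_accuracy(y_true, y_pred, [2, 3])
h = 0.0 if acc_s + acc_u == 0 else 2 * acc_s * acc_u / (acc_s + acc_u)
print(acc, acc_s, acc_u, h)  # 0.9 1.0 0.0 0.0
```

A training objective that rewards global accuracy has no incentive to fix this failure, which is the mismatch the derivation below targets.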

We decided to start with the loss function of the classifier. Assuming that the class space has been completed by generated pseudo-samples of unseen classes, the previous classifier is optimized with the goal of maximizing global accuracy:

Where  is the global accuracy,

is the global accuracy,  represents the classifier output,

represents the classifier output,  represents the sample distribution,

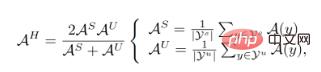

represents the sample distribution,  is the corresponding label of sample X. The evaluation index of GZSL is:

is the corresponding label of sample X. The evaluation index of GZSL is:

and

and  Represents visible class and unseen class collections respectively. The inconsistency between training objectives and testing objectives means that previous classifier training strategies did not take into account the differences between seen and unseen classes. Naturally, we try to achieve consistent results between training and testing goals by deriving

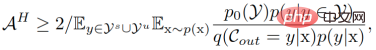

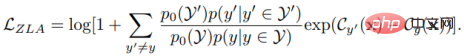

Represents visible class and unseen class collections respectively. The inconsistency between training objectives and testing objectives means that previous classifier training strategies did not take into account the differences between seen and unseen classes. Naturally, we try to achieve consistent results between training and testing goals by deriving  . After derivation, we got its lower bound:

. After derivation, we got its lower bound:

where  represents the visible class - the unseen class prior, It has nothing to do with the data and is adjusted as a hyperparameter in the experiment.

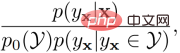

represents the visible class - the unseen class prior, It has nothing to do with the data and is adjusted as a hyperparameter in the experiment.  represents the internal prior of the visible class or the unseen class, which is replaced by the frequency of the visible class sample or the uniform distribution during the implementation process. By maximizing the lower bound of

represents the internal prior of the visible class or the unseen class, which is replaced by the frequency of the visible class sample or the uniform distribution during the implementation process. By maximizing the lower bound of  , we obtain the final optimization goal:

, we obtain the final optimization goal:

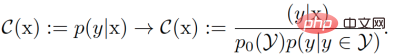
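The two kinds of prior described above can be combined into a single per-class prior, sketched below. This rests on our own reading of the text rather than on the paper's code: we assume the prior factorizes as $p(y) = p(\mathcal{S})\,p(y \mid \mathcal{S})$ for seen classes and $p(y) = p(\mathcal{U})\,p(y \mid \mathcal{U})$ for unseen classes, where $\mathcal{S}$ and $\mathcal{U}$ are the seen and unseen class sets and $p(\mathcal{U}) = 1 - p(\mathcal{S})$, with seen-class frequencies for $p(y \mid \mathcal{S})$ and a uniform distribution for $p(y \mid \mathcal{U})$. The function name `class_log_prior` and its arguments are hypothetical.

```python
import math


def class_log_prior(seen_counts, n_unseen, p_seen):
    """Hypothetical per-class log-prior: log p(group) + log p(y | group).

    seen_counts: real training-sample count of each seen class (> 0); p(y | S) = its frequency
    n_unseen:    number of unseen classes; p(y | U) = 1 / n_unseen (uniform)
    p_seen:      seen-unseen prior p(S), a data-independent hyperparameter; here p(U) = 1 - p(S)
    Returns log-priors ordered as [seen classes..., unseen classes...].
    """
    if not 0.0 < p_seen < 1.0:
        raise ValueError("p_seen must be strictly between 0 and 1")
    total = sum(seen_counts)
    prior = [p_seen * c / total for c in seen_counts]
    prior += [(1.0 - p_seen) / n_unseen] * n_unseen
    return [math.log(p) for p in prior]


log_prior = class_log_prior(seen_counts=[30, 10], n_unseen=2, p_seen=0.8)
print([round(math.exp(v), 3) for v in log_prior])  # [0.6, 0.2, 0.1, 0.1]
```

Treating `p_seen` as a free knob mirrors the text's statement that the seen-unseen prior is data-independent and tuned as a hyperparameter.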

Compared with previous approaches, our classifier therefore models an adjusted posterior probability rather than the plain posterior. Fitting this adjusted posterior with cross-entropy, we obtain the classifier loss:

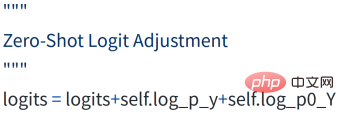

This is similar to logit adjustment in long-tail learning, so we call it zero-shot logit adjustment (ZLA). In this way, we introduce parameterized priors that embed the class imbalance between seen and unseen classes into classifier training as an inductive bias; in the code, achieving this only requires adding an extra bias term to the original logits.
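Since the change amounts to adding a bias to the logits before the cross-entropy, it can be sketched without any framework as below. This illustrates generic logit-adjusted cross-entropy, not the authors' code: the per-class `bias` vector is a placeholder for the prior-derived term in the loss above (for instance, log-priors). We also assume, following the usual logit-adjustment convention and the test-time rule described later in the article, that the bias enters only the training loss and is dropped at prediction time.

```python
import math


def log_softmax(z):
    """Numerically stable log-softmax of a list of logits."""
    m = max(z)
    lse = m + math.log(sum(math.exp(v - m) for v in z))
    return [v - lse for v in z]


def cross_entropy(logits, label):
    return -log_softmax(logits)[label]


def adjusted_cross_entropy(logits, label, bias):
    """Cross-entropy after adding a fixed per-class bias to the logits (training only)."""
    adjusted = [z + b for z, b in zip(logits, bias)]  # the "one extra line"
    return cross_entropy(adjusted, label)


logits = [1.0, 1.0]
print(round(cross_entropy(logits, 0), 4))                                 # 0.6931 (= log 2)
print(round(adjusted_cross_entropy(logits, 0, [0.0, math.log(3.0)]), 4))  # 1.3863 (= log 4)
```

Raising class 1's logit by $\log 3$ increases the loss on a class-0 sample, so training must push the raw class-0 logit further; once the bias is removed at test time, the raw logits retain that compensation.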

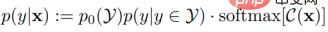

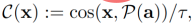

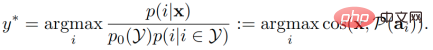

In previous generative methods, the semantic prior, which is the core of zero-shot transfer, only plays a role in the generator-training and pseudo-sample-generation stages, so the recognition of unseen classes depends entirely on the quality of the generated unseen-class pseudo-samples. Clearly, introducing semantic priors at the classifier training stage would help recognize unseen classes. In zero-shot learning, embedding-based methods can do exactly this. However, these methods learn much the same knowledge as the generative model, namely the connection between semantics and vision (the semantic-visual link). As a result, directly plugging an embedding-based classifier into previous generative frameworks (see f-CLSWGAN) does not outperform the original classifier (unless the embedding-based classifier itself already has better zero-shot performance). With the ZLA strategy proposed in this paper, we change the role that generated unseen-class pseudo-samples play in classifier training: instead of supplying unseen-class information, they now adjust the decision boundary between unseen and seen classes. This lets us introduce semantic priors at the classifier training stage. Specifically, we use a prototype learning method to map the semantics of each class to a visual prototype (i.e., the classifier weight), and model the adjusted posterior probability with the cosine similarity between the sample and each visual prototype, scaled by a temperature coefficient $\tau$. At test time, a sample is assigned to the class whose visual prototype has the largest cosine similarity with it.
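A framework-free sketch of the cosine-similarity prototype classifier described above. Assumptions: the prototypes are passed in directly (in the article they come from a learned mapping of class semantics, omitted here), and the cosine similarity is divided by the temperature `tau`, a common convention that we have not confirmed against the paper.

```python
import math


def cosine(u, v):
    dot = sum(a * b for a, b in zip(u, v))
    return dot / (math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v)))


def prototype_logits(x, prototypes, tau):
    """Training-time scores: cosine similarity to each visual prototype, divided by temperature tau."""
    return [cosine(x, w) / tau for w in prototypes]


def predict(x, prototypes):
    """Test-time rule: index of the prototype with the largest cosine similarity to x."""
    sims = [cosine(x, w) for w in prototypes]
    return max(range(len(sims)), key=lambda i: sims[i])


# Toy visual prototypes (assumed already mapped from class semantics).
prototypes = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
print(predict([0.9, 0.2], prototypes), predict([2.0, 2.1], prototypes))  # 0 2
```

During training, `prototype_logits` would play the role of the logits that receive the ZLA bias; dividing by a small `tau` sharpens the softmax over cosine scores that otherwise lie in [-1, 1].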

We combine the proposed classifier with a basic WGAN and generate only 10 samples per unseen class, achieving results comparable to SOTA methods. In addition, plugging it into the more advanced CE-GZSL method improves on that method's original results without changing any other parameters (including the number of generated samples).

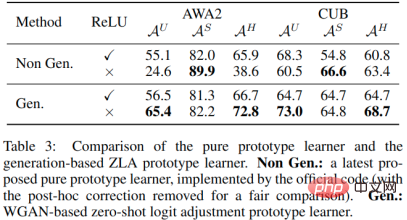

In the ablation experiments, we compared the generation-based prototype learner with a pure prototype learner. We found that the final ReLU layer is critical to the success of the pure prototype learner, because zeroing out negative values increases the similarity between class prototypes and unseen-class features (unseen-class features are also ReLU-activated). However, setting some values to zero also limits the expressiveness of the prototypes, which hinders further gains in recognition performance. Using unseen-class pseudo-samples to supplement unseen-class information not only yields higher performance with ReLU, but also enables a further performance gain without the ReLU layer.
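The ReLU effect can be checked with a toy numeric sketch (the vectors are made up, not the paper's features). When a feature is non-negative, as ReLU-activated features are, zeroing a prototype's negative entries can only increase its dot product with the feature and can only shrink its norm, so the cosine similarity does not decrease as long as the prototype keeps at least one positive entry.

```python
import math


def cosine(u, v):
    dot = sum(a * b for a, b in zip(u, v))
    return dot / (math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v)))


def relu(v):
    return [max(0.0, a) for a in v]


feature = relu([0.8, -0.3, 1.2, 0.5])  # ReLU-activated feature: [0.8, 0.0, 1.2, 0.5]
prototype = [0.6, -0.9, 0.7, -0.4]     # toy prototype with negative entries

cos_raw = cosine(prototype, feature)
cos_relu = cosine(relu(prototype), feature)
print(round(cos_raw, 3), round(cos_relu, 3))  # 0.544 0.938
```

The cost is that a ReLU-clipped prototype can no longer use negative directions, which is the loss of expressiveness the ablation points to.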

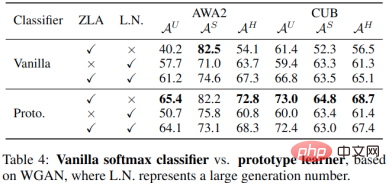

In another ablation study, we compared the prototype learner with the original classifier. The results show that the prototype learner has no advantage over the original classifier when a large number of unseen-class samples are generated. With the ZLA technique proposed in this article, however, the prototype learner shows its superiority. As mentioned before, this is because both the prototype learner and the generative model learn the semantic-visual link, so the semantic information is hard to exploit fully. ZLA lets the generated unseen-class samples adjust the decision boundary rather than merely supply unseen-class information, which unlocks the prototype learner.

The above is the detailed content of "Using one line of code to greatly improve the effect of zero-shot learning methods: Nanjing University of Science and Technology & Oxford propose a plug-and-play classifier module".
