What is Apache Kafka data collection?

Apache Kafka - Introduction

Apache Kafka originated at LinkedIn, was released as open source in 2011, and became a top-level Apache project in 2012. Kafka is written in Scala and Java. It is a fault-tolerant messaging system based on the publish-subscribe model, and it is fast, scalable, and distributed by design.

This tutorial will explore the principles, installation, and operation of Kafka, and then introduce the deployment of Kafka clusters. Finally, we will conclude with real-time applications and integration with Big Data Technologies.

Before proceeding with this tutorial, you must have a good understanding of Java, Scala, distributed messaging systems, and Linux environments.

Big data applications work with very large volumes of data, which raises two main challenges. The first is how to collect large amounts of data, and the second is how to analyze the collected data. A messaging system helps overcome both.

Kafka is designed for distributed high-throughput systems. It tends to work well as an alternative to more traditional message brokers. Compared to other messaging systems, Kafka has better throughput, built-in partitioning, replication, and inherent fault tolerance, making it a good fit for large-scale message processing applications.

What is a messaging system?

A messaging system is responsible for transferring data from one application to another, so applications can focus on the data and not worry about how to share it. Distributed messaging is based on the concept of reliable message queues. Messages are queued asynchronously between the client application and the messaging system. Two messaging patterns are available: point-to-point and publish-subscribe (pub-sub). Most messaging systems follow the pub-sub pattern.

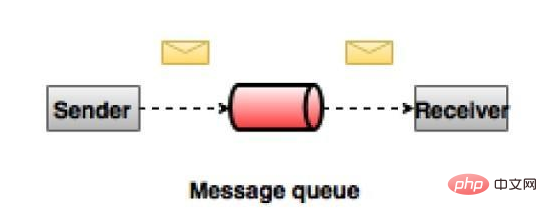

Point-to-point messaging system

In a point-to-point system, messages are held in a queue. One or more consumers can read from the queue, but any given message is consumed by at most one consumer. Once a consumer reads a message, it is removed from the queue. A typical example is an order processing system, where each order is processed by exactly one order processor, but multiple order processors can work simultaneously. The diagram below depicts the structure.

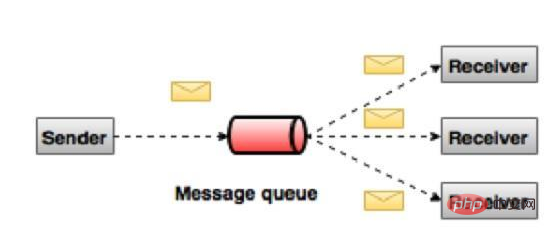
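The point-to-point behavior described above can be sketched with a small in-memory queue. This is only an illustration, not how any particular broker is implemented; the names `PointToPointQueue`, `process_orders`, and the processor names are hypothetical.

```python
from collections import deque


class PointToPointQueue:
    """In-memory queue where each message is delivered to at most one consumer."""

    def __init__(self):
        self._messages = deque()

    def send(self, message):
        self._messages.append(message)

    def receive(self):
        # Removing the message on read is what makes this point-to-point.
        return self._messages.popleft() if self._messages else None


def process_orders(queue, processor_names):
    """Hand orders to the named processors in round-robin order until the queue is empty."""
    handled = {name: [] for name in processor_names}
    index = 0
    while True:
        order = queue.receive()
        if order is None:
            break
        handled[processor_names[index % len(processor_names)]].append(order)
        index += 1
    return handled


orders = PointToPointQueue()
for order_id in ("order-1", "order-2", "order-3", "order-4"):
    orders.send(order_id)

result = process_orders(orders, ["processor-a", "processor-b"])
print(result)
```

Each order ends up with exactly one processor, and the queue is empty afterwards because reading a message removes it.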

Publish-Subscribe Messaging System

In a publish-subscribe system, messages are held in topics. Unlike in a point-to-point system, a consumer can subscribe to one or more topics and consume all messages in those topics. In a publish-subscribe system, the message producer is called the publisher and the message consumer is called the subscriber. A real-life example is Dish TV, which publishes different channels such as sports, movies, and music. Anyone can subscribe to the channels they want and receive them whenever those channels are available.
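A minimal in-memory sketch of the publish-subscribe pattern follows. It is an illustration under simplifying assumptions (single process, no persistence); the `Broker` class and the subscriber names are hypothetical and do not correspond to any Kafka API.

```python
from collections import defaultdict


class Broker:
    """Toy pub-sub broker: every subscriber of a topic receives every message."""

    def __init__(self):
        self._subscribers = defaultdict(list)  # topic name -> list of inboxes

    def subscribe(self, topic):
        inbox = []
        self._subscribers[topic].append(inbox)
        return inbox

    def publish(self, topic, message):
        # Unlike a point-to-point queue, the message is copied to all subscribers.
        for inbox in self._subscribers[topic]:
            inbox.append(message)


broker = Broker()
alice_sports = broker.subscribe("sports")
bob_sports = broker.subscribe("sports")
bob_movies = broker.subscribe("movies")

broker.publish("sports", "match highlights")
broker.publish("movies", "new release")

print(alice_sports, bob_sports, bob_movies)
```

Both subscribers to the `sports` topic receive the same message, while only the `movies` subscriber receives the movie announcement.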

What is Kafka?

Apache Kafka is a distributed publish-subscribe messaging system and a robust queue that can handle large amounts of data and deliver messages from one endpoint to another. Kafka is suitable for both offline and online message consumption. Kafka messages are persisted on disk and replicated within the cluster to prevent data loss. Kafka has traditionally been built on top of the ZooKeeper synchronization service, although newer releases can run without it in KRaft mode. It integrates well with Apache Storm and Spark for real-time streaming data analysis.

Advantages

The following are several benefits of Kafka -

Reliability - Kafka is distributed, partitioned, replicated and fault-tolerant.

Scalability - The Kafka messaging system scales easily with no downtime.

Durability - Kafka uses a distributed commit log, which means messages are persisted to disk as quickly as possible, so they are durable.

Performance - Kafka has high throughput for both publish and subscribe messages. It maintains stable performance even when many terabytes of messages are stored.

Kafka is very fast and is designed to keep downtime and data loss to a minimum.
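The commit log mentioned under Durability can be pictured as an append-only sequence of records, each identified by an offset, that consumers read at their own pace. The sketch below is a single-process, in-memory simplification (nothing is written to disk or replicated), and the `CommitLog` and `Consumer` classes are hypothetical names for illustration only.

```python
class CommitLog:
    """Append-only log: records get increasing offsets and are not removed on read."""

    def __init__(self):
        self._records = []

    def append(self, record):
        self._records.append(record)
        return len(self._records) - 1  # offset of the record just written

    def read(self, offset, max_records=10):
        return self._records[offset:offset + max_records]


class Consumer:
    """Tracks its own position in the log, independently of other consumers."""

    def __init__(self, log):
        self._log = log
        self.offset = 0

    def poll(self, max_records=10):
        batch = self._log.read(self.offset, max_records)
        self.offset += len(batch)
        return batch


log = CommitLog()
for event in ("a", "b", "c"):
    log.append(event)

realtime = Consumer(log)
batch_job = Consumer(log)

first = realtime.poll(max_records=2)  # the "online" consumer reads two records
later = batch_job.poll()              # the "offline" consumer still sees all three
print(first, later)
```

Because reading does not delete records, a slow batch consumer and a fast real-time consumer can share the same log without interfering with each other.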

Use Cases

Kafka can be used for many use cases. Some of them are listed below -

Metrics - Kafka is often used for operational monitoring data. This involves aggregating statistics from distributed applications to produce a centralized feed of operational data.

Log aggregation solution - Kafka can be used across an organization to collect logs from multiple services and make them available to multiple consumers in a standard format.

Stream Processing - Popular frameworks like Storm and Spark Streaming read data from a topic, process it, and write the processed data to a new topic, where it becomes available to users and applications. Kafka's strong durability is also very useful in stream processing.
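The read-process-write loop of a stream processing job can be sketched as follows. This is a simplified illustration that models topics as plain Python lists in a dictionary; the function `run_stream_job` and the topic names are hypothetical and are not part of Storm, Spark, or Kafka.

```python
def run_stream_job(topics, source, sink, transform):
    """Read every record from `source`, apply `transform`, and append results to `sink`."""
    output = topics.setdefault(sink, [])
    for record in topics.get(source, []):
        output.append(transform(record))
    return output


# Topics are modeled as named lists of records.
topics = {"page-views": ["/home", "/docs", "/home"]}

run_stream_job(topics, "page-views", "page-views-upper", str.upper)
print(topics["page-views-upper"])
```

The source topic is left untouched, so other consumers can still read the original records while downstream applications read the processed topic.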

Need for Kafka

Kafka is a unified platform for handling all real-time data feeds. Kafka supports low-latency message delivery and guarantees fault tolerance in the presence of machine failures. It can handle a large number of diverse consumers. Kafka is very fast, with benchmarks reporting around 2 million writes per second. Kafka persists all data to disk, which in practice means that all writes go to the operating system's page cache (RAM). This makes it very efficient to transfer data from the page cache to a network socket.
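The efficient page-cache-to-socket transfer mentioned above is commonly achieved with the operating system's `sendfile` mechanism, which Kafka's design documentation describes. The sketch below shows the general idea using Python's `socket.sendfile`, which uses `os.sendfile` where the platform supports it and otherwise falls back to ordinary sends. It is not Kafka code: the helper `send_file_over_socket`, the temporary file standing in for a log segment, and the local socket pair standing in for a network connection are all assumptions made for the demonstration.

```python
import os
import socket
import tempfile


def send_file_over_socket(path, sock):
    """Send a whole file through `sock` and return the number of bytes sent."""
    with open(path, "rb") as log_segment:
        # socket.sendfile avoids copying through user space when os.sendfile is available.
        return sock.sendfile(log_segment)


# A temporary file stands in for an on-disk log segment.
payload = b"message-1\nmessage-2\n"
with tempfile.NamedTemporaryFile(delete=False) as tmp:
    tmp.write(payload)
    segment_path = tmp.name

# A local socket pair stands in for a broker-to-consumer connection.
sender, receiver = socket.socketpair()
try:
    sent = send_file_over_socket(segment_path, sender)
    sender.close()  # signal end of stream to the receiving side
    received = b""
    while True:
        chunk = receiver.recv(4096)
        if not chunk:
            break
        received += chunk
finally:
    sender.close()
    receiver.close()
    os.unlink(segment_path)

print(sent, received)
```

The application never reads the file contents into its own buffers on the sending side; it only asks the operating system to move the bytes from the file to the socket.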
