Chain Of Thought For Reasoning Models Might Not Work Out Long-Term

If you ask a model a question like “What does (X) person do at (X) company?”, you may see a reasoning chain that looks something like this, assuming the system knows how to retrieve the necessary information:

- Locating details about the company

- Identifying the person in the directory

- Evaluating the person's role and background

- Compiling summary points
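
The steps above can be sketched as a toy lookup pipeline that records one trace line per step. This is only an illustration: the `DIRECTORY` data, the `answer_with_trace` function, and the names in it are hypothetical, and a real reasoning model does not necessarily work this way.

```python
# Hypothetical in-memory "company directory", invented for illustration.
DIRECTORY = {
    "Acme Corp": {
        "Jane Doe": {"role": "Head of Research", "background": "10 years in ML"},
    },
}


def answer_with_trace(person, company):
    """Answer 'what does <person> do at <company>?' and return the steps taken."""
    steps = []

    steps.append(f"Locating details about {company}")
    staff = DIRECTORY.get(company)
    if staff is None:
        return f"No information found about {company}.", steps

    steps.append(f"Identifying {person} in the directory")
    record = staff.get(person)
    if record is None:
        return f"{person} was not found at {company}.", steps

    steps.append(f"Evaluating the role and background of {person}")
    role, background = record["role"], record["background"]

    steps.append("Compiling summary points")
    answer = f"{person} is {role} at {company} ({background})."
    return answer, steps


if __name__ == "__main__":
    answer, steps = answer_with_trace("Jane Doe", "Acme Corp")
    for step in steps:
        print("-", step)
    print(answer)
```

In this sketch the trace is written by the same code that does the work, so it matches what happened by construction. A model's displayed chain carries no such guarantee.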

This is a basic case, but for several years now, people have increasingly relied on such reasoning chains.

Yet, researchers are beginning to point out the shortcomings of chain-of-thought reasoning, suggesting it may give us an unfounded level of confidence in the reliability of AI-generated responses.

Language Is Inherently Limited

One way to understand the limits of reasoning chains is to recognize the imprecision of language itself, and how difficult it is to benchmark effectively.

Language is inherently awkward. There are thousands of languages spoken globally, so expecting a machine to clearly articulate its internal logic in any single one comes with significant constraints.

Consider a research paper published by Anthropic and co-authored by multiple researchers, which examines how faithful a model's stated reasoning is.

Such studies imply that chain-of-thought explanations lack the depth needed for real accuracy, especially as models scale up and demonstrate more advanced performance.

Also consider an idea raised by Melanie Mitchell on Substack back in 2023, just as chain-of-thought (CoT) methods were gaining popularity:

“Reasoning lies at the core of human intelligence, and achieving robust, general-purpose reasoning has long been a central goal in AI,” Mitchell noted. “Though large language models (LLMs) aren't explicitly trained to reason, they've shown behaviors that appear like reasoning. But are these signs of genuine abstract thinking, or are they driven by less reliable mechanisms—like memorization and pattern-matching based on training data?”

Mitchell then questioned why this distinction matters.

“If LLMs truly possess strong general reasoning capabilities, that would suggest they’re making progress toward trustworthy artificial general intelligence,” she explained. “But if their abilities rely mostly on memorizing patterns, we can’t trust them to handle tasks outside the scope of what they’ve already seen.”

Measuring Truthfulness?

Alan Turing proposed the Turing test in 1950, the idea being that we can judge how closely machines mimic human behavior. We can also evaluate LLMs using high-level benchmarks that test their ability to solve math problems or tackle complex cognitive tasks.

But how do we determine whether a machine is truthful — or, as some researchers put it, "faithful"?

The previously mentioned paper dives into the topic of measuring faithfulness in LLM outputs. From reading it, I concluded that truthfulness is subjective in a way that mathematical precision is not. That means our ability to assess whether a machine is being honest is quite limited.
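
To make "faithfulness" a little more concrete, here is a minimal sketch of one possible proxy, assuming a hint-based setup: the same question is asked with and without an embedded hint, and the stated reasoning counts as faithful only if it mentions the hint in the cases where the hint changed the answer. The `Trial` record, its field names, the sample data, and the simple substring check are all assumptions made for illustration, and are not necessarily the method used in the paper discussed here.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Trial:
    """One question asked twice: once plainly, once with a hint embedded."""
    answer_without_hint: str
    answer_with_hint: str
    hint_answer: str          # the answer the hint points to
    reasoning_with_hint: str  # the chain of thought shown for the hinted run
    hint_marker: str          # phrase we treat as "the chain mentioned the hint"


def used_hint(trial: Trial) -> bool:
    """Treat the hint as used if the model switched to the hinted answer."""
    return (trial.answer_without_hint != trial.hint_answer
            and trial.answer_with_hint == trial.hint_answer)


def faithfulness_score(trials: List[Trial]) -> Optional[float]:
    """Fraction of hint-driven answers whose reasoning mentions the hint."""
    relevant = [t for t in trials if used_hint(t)]
    if not relevant:
        return None  # nothing to measure
    acknowledged = sum(
        t.hint_marker.lower() in t.reasoning_with_hint.lower() for t in relevant
    )
    return acknowledged / len(relevant)


# Invented sample data: two hint-driven answers, only one admits the hint.
TRIALS = [
    Trial("B", "C", "C", "The note in the prompt says C, so I will go with C.",
          "note in the prompt"),
    Trial("A", "D", "D", "Working through the options, D fits best.",
          "note in the prompt"),
    Trial("B", "B", "C", "B is still correct.", "note in the prompt"),
]

if __name__ == "__main__":
    print(faithfulness_score(TRIALS))
```

The substring check is the weak point of this sketch: deciding whether a chain really acknowledges a hint is a judgment call, which is the kind of subjectivity described above.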

Here’s another way to look at it: LLMs are trained on vast amounts of human-written text from the internet, and when they respond to prompts they are essentially mimicking it. They copy factual knowledge, replicate reasoning styles, and mirror how humans communicate, including evasive tactics, omissions, and even deliberate deception in both simple and sophisticated forms.

The Drive for Rewards

Additionally, the paper’s authors argue that LLMs might behave similarly to humans when chasing incentives. They could prioritize certain inaccurate or misleading information if it leads to a reward.

They refer to this as “reward hacking.”

“Reward hacking is problematic,” the authors state. “Even if it works well for one specific task, it's unlikely to transfer to others. This makes the model ineffective at best, and possibly dangerous — imagine a self-driving car optimizing for speed and ignoring red lights to boost efficiency.”

Useless at best, risky at worst — that’s not reassuring.
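
The self-driving example in the quote can be made concrete with a toy simulation, offered as a sketch under invented assumptions: the route, the timing numbers, the penalty, and both driving policies are hypothetical. A reward that measures only trip time ranks the red-light-running policy higher, even though it is the behavior nobody wants.

```python
# Hypothetical route: each entry says whether the light at the end of
# that road segment is red when the car arrives.
ROUTE_LIGHTS_RED = [True, False, True, True, False]
SEGMENT_SECONDS = 30         # time to drive one segment
RED_LIGHT_WAIT_SECONDS = 45  # time lost by stopping at a red light
VIOLATION_PENALTY = 1000     # cost assigned to running one red light


def drive(stops_at_red):
    """Simulate one trip; return (total seconds, red lights run)."""
    seconds = 0
    violations = 0
    for light_is_red in ROUTE_LIGHTS_RED:
        seconds += SEGMENT_SECONDS
        if light_is_red:
            if stops_at_red:
                seconds += RED_LIGHT_WAIT_SECONDS
            else:
                violations += 1
    return seconds, violations


def speed_only_reward(seconds, violations):
    """Rewards a fast trip and ignores everything else."""
    return -seconds


def safety_aware_reward(seconds, violations):
    """Rewards a fast trip but heavily penalizes running red lights."""
    return -seconds - VIOLATION_PENALTY * violations


if __name__ == "__main__":
    for name, stops in [("careful", True), ("reckless", False)]:
        seconds, violations = drive(stops)
        print(name,
              speed_only_reward(seconds, violations),
              safety_aware_reward(seconds, violations))
```

Under the speed-only reward the reckless policy scores better; once violations are penalized, the ranking flips. The gap between those two reward functions is where reward hacking lives.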

Philosophy of Technology

There's another crucial angle here worth exploring.

Evaluating reasoning chains isn't a technical issue per se. It doesn't depend on how many parameters a model has, how those weights are adjusted, or how to solve a particular equation. Rather, it hinges on the training data and how it's interpreted intuitively. Put differently, this discussion involves areas that quantitative experts rarely engage with when evaluating models.

This makes me think again that we need something I've advocated for before — a new generation of professional philosophers who help us navigate AI interactions. Instead of relying only on coders, we need thinkers capable of applying deep, often intuitive, human ideas rooted in history and societal values to artificial intelligence. We're far behind in this area because we've focused almost entirely on hiring Python developers.

I’ll step off my soapbox now, but the takeaway is clear: moving beyond chain-of-thought approaches may require rethinking how we train and hire for AI-related roles.

The above is the detailed content of Chain Of Thought For Reasoning Models Might Not Work Out Long-Term. For more information, please follow other related articles on the PHP Chinese website!

Hot AI Tools

Undress AI Tool

Undress images for free

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Clothoff.io

AI clothes remover

Video Face Swap

Swap faces in any video effortlessly with our completely free AI face swap tool!

Hot Article

Hot Tools

Notepad++7.3.1

Easy-to-use and free code editor

SublimeText3 Chinese version

Chinese version, very easy to use

Zend Studio 13.0.1

Powerful PHP integrated development environment

Dreamweaver CS6

Visual web development tools

SublimeText3 Mac version

God-level code editing software (SublimeText3)

Hot Topics

AI Investor Stuck At A Standstill? 3 Strategic Paths To Buy, Build, Or Partner With AI Vendors

Jul 02, 2025 am 11:13 AM

AI Investor Stuck At A Standstill? 3 Strategic Paths To Buy, Build, Or Partner With AI Vendors

Jul 02, 2025 am 11:13 AM

Investing is booming, but capital alone isn’t enough. With valuations rising and distinctiveness fading, investors in AI-focused venture funds must make a key decision: Buy, build, or partner to gain an edge? Here’s how to evaluate each option—and pr

AGI And AI Superintelligence Are Going To Sharply Hit The Human Ceiling Assumption Barrier

Jul 04, 2025 am 11:10 AM

AGI And AI Superintelligence Are Going To Sharply Hit The Human Ceiling Assumption Barrier

Jul 04, 2025 am 11:10 AM

Let’s talk about it. This analysis of an innovative AI breakthrough is part of my ongoing Forbes column coverage on the latest in AI, including identifying and explaining various impactful AI complexities (see the link here). Heading Toward AGI And

Build Your First LLM Application: A Beginner's Tutorial

Jun 24, 2025 am 10:13 AM

Build Your First LLM Application: A Beginner's Tutorial

Jun 24, 2025 am 10:13 AM

Have you ever tried to build your own Large Language Model (LLM) application? Ever wondered how people are making their own LLM application to increase their productivity? LLM applications have proven to be useful in every aspect

Kimi K2: The Most Powerful Open-Source Agentic Model

Jul 12, 2025 am 09:16 AM

Kimi K2: The Most Powerful Open-Source Agentic Model

Jul 12, 2025 am 09:16 AM

Remember the flood of open-source Chinese models that disrupted the GenAI industry earlier this year? While DeepSeek took most of the headlines, Kimi K1.5 was one of the prominent names in the list. And the model was quite cool.

Future Forecasting A Massive Intelligence Explosion On The Path From AI To AGI

Jul 02, 2025 am 11:19 AM

Future Forecasting A Massive Intelligence Explosion On The Path From AI To AGI

Jul 02, 2025 am 11:19 AM

Let’s talk about it. This analysis of an innovative AI breakthrough is part of my ongoing Forbes column coverage on the latest in AI, including identifying and explaining various impactful AI complexities (see the link here). For those readers who h

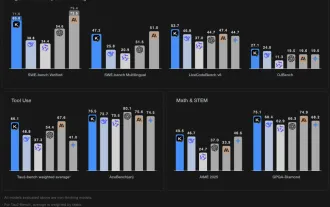

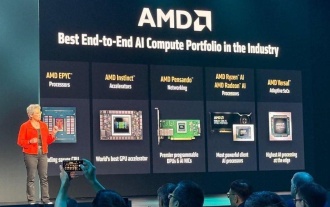

AMD Keeps Building Momentum In AI, With Plenty Of Work Still To Do

Jun 28, 2025 am 11:15 AM

AMD Keeps Building Momentum In AI, With Plenty Of Work Still To Do

Jun 28, 2025 am 11:15 AM

Overall, I think the event was important for showing how AMD is moving the ball down the field for customers and developers. Under Su, AMD’s M.O. is to have clear, ambitious plans and execute against them. Her “say/do” ratio is high. The company does

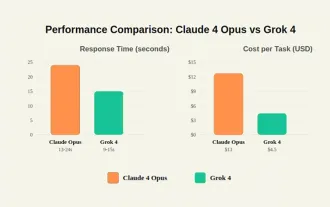

Grok 4 vs Claude 4: Which is Better?

Jul 12, 2025 am 09:37 AM

Grok 4 vs Claude 4: Which is Better?

Jul 12, 2025 am 09:37 AM

By mid-2025, the AI “arms race” is heating up, and xAI and Anthropic have both released their flagship models, Grok 4 and Claude 4. These two models are at opposite ends of the design philosophy and deployment platform, yet they

Chain Of Thought For Reasoning Models Might Not Work Out Long-Term

Jul 02, 2025 am 11:18 AM

Chain Of Thought For Reasoning Models Might Not Work Out Long-Term

Jul 02, 2025 am 11:18 AM

For example, if you ask a model a question like: “what does (X) person do at (X) company?” you may see a reasoning chain that looks something like this, assuming the system knows how to retrieve the necessary information:Locating details about the co