當我們觀察到緩慢的系統時,我們的第一個直覺可能是將其標記為失敗。這種假設很普遍,並強調了一個基本事實:效能是應用程式的成熟度和生產準備程度的代名詞。

在 Web 應用程式中,毫秒可以決定使用者互動的成功或失敗,因此風險非常高。性能不僅僅是技術標桿,更是用戶滿意度和營運效率的基石。

效能封裝了系統在不同工作負載下的回應能力,透過 CPU 和記憶體利用率、回應時間、可擴充性和吞吐量等指標進行量化。

在本文中,我們將探討 AppSignal 如何監控和增強 Django 應用程式的效能。

讓我們開始吧!

在 Django 應用程式中實現最佳效能涉及多方面的方法。這意味著開發能夠高效運行並在擴展時保持效率的應用程式。關鍵指標在過程中至關重要,可以提供切實的數據來指導我們的最佳化工作。讓我們探討其中一些指標。

在本文中,我們將建立一個基於 Django 的電子商務商店,為高流量事件做好準備,整合 AppSignal 來監控、最佳化並確保其在負載下無縫擴展。我們還將示範如何使用 AppSignal 增強現有應用程式以提高效能(在本例中為 Open edX 學習管理系統)。

要跟隨,您需要:

現在讓我們為我們的專案建立一個目錄並從 GitHub 克隆它。我們將安裝所有要求並運行遷移:

現在訪問127.0.0.1:8000。你應該看到這樣的東西:

這個 Django 應用程式是一個簡單的電子商務商店,為用戶提供產品清單、詳細資訊和結帳頁面。成功複製並安裝應用程式後,使用 Django createsuperuser 管理命令建立超級使用者。

現在讓我們在應用程式中建立幾個產品。首先,我們將透過執行以下命令進入 Django 應用程式:

創建3個類別和3個產品:

現在關閉 shell 並運行伺服器。您應該會看到類似以下內容:

我們將在我們的專案中安裝 AppSignal 和 opentelemetry-instrumentation-django。

在安裝這些軟體包之前,請使用您的憑證登入 AppSignal(您可以註冊 30 天免費試用)。選擇組織後,點擊導覽列右上角的新增應用程式。選擇 Python 作為您的語言,您將收到一個push-api-key.

確保您的虛擬環境已啟動並執行以下命令:

在 CLI 提示中提供應用程式名稱。安裝後,您應該會在專案中看到一個名為 __appsignal__.py 的新檔案。

Now let's create a new file called .env in the project root and add APPSIGNAL_PUSH_API_KEY=YOUR-KEY (remember to change the value to your actual key). Then, let's change the content of the __appsignal__.py file to the following:

# __appsignal__.py import os from appsignal import Appsignal # Load environment variables from the .env file from dotenv import load_dotenv load_dotenv() # Get APPSIGNAL_PUSH_API_KEY from environment push_api_key = os.getenv('APPSIGNAL_PUSH_API_KEY') appsignal = Appsignal( active=True, name="mystore", push_api_key=os.getenv("APPSIGNAL_PUSH_API_KEY"), )

Next, update the manage.py file to read like this:

# manage.py #!/usr/bin/env python """Django's command-line utility for administrative tasks.""" import os import sys # import appsignal from __appsignal__ import appsignal # new line def main(): """Run administrative tasks.""" os.environ.setdefault("DJANGO_SETTINGS_MODULE", "mystore.settings") # Start Appsignal appsignal.start() # new line try: from django.core.management import execute_from_command_line except ImportError as exc: raise ImportError( "Couldn't import Django. Are you sure it's installed and " "available on your PYTHONPATH environment variable? Did you " "forget to activate a virtual environment?" ) from exc execute_from_command_line(sys.argv) if __name__ == "__main__": main()

We've imported AppSignal and started it using the configuration from __appsignal.py.

Please note that the changes we made to manage.py are for a development environment. In production, we should change wsgi.py or asgi.py. For more information, visit AppSignal's Django documentation.

As we approach the New Year sales on our Django-based e-commerce platform, we recall last year's challenges:increased trafficled toslow load timesand even some downtime. This year, we aim to avoid these issues by thoroughly testing and optimizing our site beforehand. We'll use Locust to simulate user traffic and AppSignal to monitor our application's performance.

First, we'll create a locustfile.py file that simulates simultaneous users navigating through critical parts of our site: the homepage, a product detail page, and the checkout page. This simulation helps us understand how our site performs under pressure.

Create the locustfile.py in the project root:

# locustfile.py from locust import HttpUser, between, task class WebsiteUser(HttpUser): wait_time = between(1, 3) # Users wait 1-3 seconds between tasks @task def index_page(self): self.client.get("/store/") @task(3) def view_product_detail(self): self.client.get("/store/product/smartphone/") @task(2) def view_checkout_page(self): self.client.get("/store/checkout/smartphone/")

In locustfile.py, users primarily visit the product detail page, followed by the checkout page, and occasionally return to the homepage. This pattern aims to mimic realistic user behavior during a sale.

Before running Locust, ensure you have a product with thesmartphoneslug in the Django app. If you don't, go to /admin/store/product/ and create one.

Before we start, let's define what we consider an acceptable response time. For a smooth user experience, we aim for:

These targets ensure users experience minimal delay, keeping their engagement high.

With our Locust test ready, we run it to simulate the 500 users and observe the results in real time. Here's how:

After running Locust, you can find information about the load test in its dashboard:

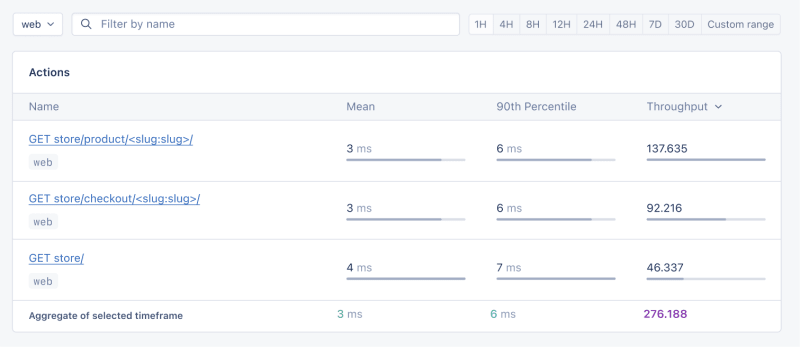

Now, go to your application page in AppSignal. Under thePerformancesection, click onActionsand you should see something like this:

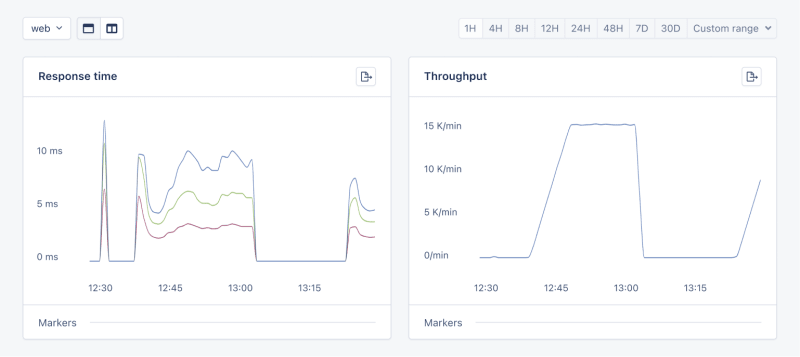

Now let's click on theGraphsunderPerformance:

We need to prepare our site for the New Year sales, as response times might exceed our targets. Here's a simplified plan:

When it comes to web applications, one of the most common sources of slow performance is database queries. Every time a user performs an action that requires data retrieval or manipulation, a query is made to the database.

If these queries are not well-optimized, they can take a considerable amount of time to execute, leading to a sluggish user experience. That's why it's crucial to monitor and optimize our queries, ensuring they're efficient and don't become the bottleneck in our application's performance.

Before diving into the implementation, let's clarify two key concepts in performance monitoring:

Instrumentationis the process of augmenting code to measure its performance and behavior during execution. Think of it like fitting your car with a dashboard that tells you not just the speed, but also the engine's performance, fuel efficiency, and other diagnostics while you drive.

Spans, on the other hand, are the specific segments of time measured by instrumentation. In our car analogy, a span would be the time taken for a specific part of your journey, like from your home to the highway. In the context of web applications, a span could represent the time taken to execute a database query, process a request, or complete any other discrete operation.

Instrumentation helps us create a series of spans that together form a detailed timeline of how a request is handled. This timeline is invaluable for pinpointing where delays occur and understanding the overall flow of a request through our system.

With our PurchaseProductView, we're particularly interested in the database interactions that create customer records and process purchases. By adding instrumentation to this view, we'll be able to measure these interactions and get actionable data on their performance.

Here's how we integrate AppSignal's custom instrumentation into our Django view:

# store/views.py # Import OpenTelemetry's trace module for creating custom spans from opentelemetry import trace # Import AppSignal's set_root_name for customizing the trace name from appsignal import set_root_name # Inside the PurchaseProductView def post(self, request, *args, **kwargs): # Initialize the tracer for this view tracer = trace.get_tracer(__name__) # Start a new span for the view using 'with' statement with tracer.start_as_current_span("PurchaseProductView"): # Customize the name of the trace to be more descriptive set_root_name("POST /store/purchase/") # ... existing code to handle the purchase ... # Start another span to monitor the database query performance with tracer.start_as_current_span("Database Query - Retrieve or Create Customer"): # ... code to retrieve or create a customer ... # Yet another span to monitor the purchase record creation with tracer.start_as_current_span("Database Query - Create Purchase Record"): # ... code to create a purchase record ...

See the full code of the view after the modification.

In this updated view, custom instrumentation is added to measure the performance of database queries when retrieving or creating a customer and creating a purchase record.

Now, after purchasing a product in theSlow eventssection of thePerformancedashboard, you should see the purchase event, its performance, and how long it takes to run the query.

purchase is the event we added to our view.

In this section, we are going to see how we can integrate AppSignal with Open edX, an open-source learning management system based on Python and Django.

Monitoring the performance of learning platforms like Open edX is highly important, since a slow experience directly impacts students' engagement with learning materials and can have a negative impact (for example, a high number of users might decide not to continue with a course).

Here, we can follow similar steps as theProject Setupsection. However, for Open edX, we will followProduction Setupand initiate AppSignal in wsgi.py. Check out this commit to install and integrate AppSignal with Open edX.

Now we'll interact with our platform and see the performance result in the dashboard.

Let's register a user, log in, enroll them in multiple courses, and interact with the course content.

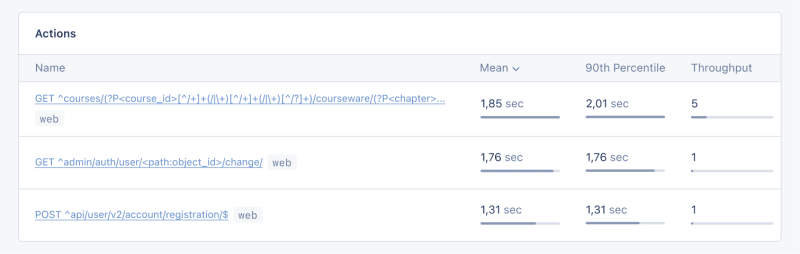

Going toActions, let's order the actions based on their mean time and find slow events:

As we can see, for 3 events (out of the 34 events we tested) the response time is higher than 1 second.

Host Metricsin AppSignal show resource usage:

私たちのシステムは重負荷ではありません - 負荷平均は 0.03 - ですが、メモリ使用量は高くなります。

リソース使用量が特定の条件に達したときに通知を受け取るトリガーを追加することもできます。たとえば、メモリ使用量が 80% を超えたときにトリガーを設定して通知を受け取り、機能停止を防ぐことができます。

条件に達すると、次のような通知が表示されます:

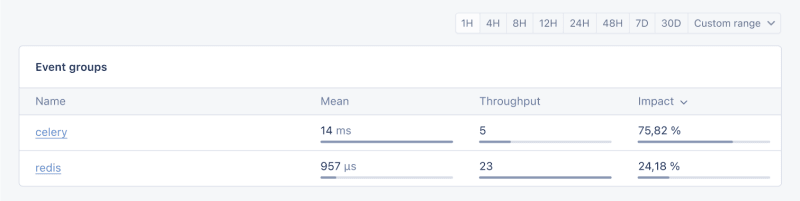

Open edX では、証明書の生成、グレーディング、一括メール機能などの非同期タスクや長時間実行タスクに Celery を使用します。

タスクとユーザーの数によっては、これらのタスクの一部は長時間実行され、プラットフォームでパフォーマンスの問題を引き起こす可能性があります。

たとえば、数千人のユーザーがコースに登録しており、それらのユーザーを再スコアリングする必要がある場合、このタスクには時間がかかることがあります。タスクがまだ実行中であるため、自分の成績がダッシュボードに反映されないというユーザーからの苦情を受ける可能性があります。 Celery タスク、そのランタイム、リソース使用状況に関する情報があれば、アプリケーションの重要な洞察と改善の可能性が得られます。

AppSignal を使用して Open edX で Celery タスクをトレースし、ダッシュボードで結果を確認してみましょう。まず、必要な要件がインストールされていることを確認します。次に、このコミットのように Celery のパフォーマンスをトレースするタスクを設定しましょう。

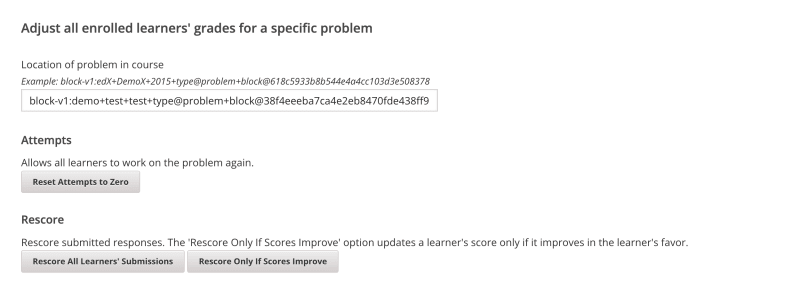

ここで、Open edX ダッシュボードでいくつかのタスクを実行して、試行をリセットし、学習者の提出物を再採点してみましょう:

AppSignal のパフォーマンスダッシュボードに移動します ->スローイベントでは、次のようなものが表示されます:

Celery をクリックすると、Open edX で実行されたすべてのタスクが表示されます:

これは、タスクが予想よりも長く実行されているかどうかを確認するのに役立つ優れた情報であり、考えられるパフォーマンスのボトルネックに対処できます。

それで終わりです!

この記事では、AppSignal が Django アプリのパフォーマンスに関する洞察をどのように得られるかを説明しました。

同時ユーザー数、データベース クエリ、応答時間などのメトリクスを含む、単純な e コマース Django アプリケーションを監視しました。

ケーススタディとして、AppSignal を Open edX と統合し、パフォーマンス監視が、特にプラットフォームを使用する学生のユーザー エクスペリエンスを向上させるのにどのように役立つかを明らかにしました。

コーディングを楽しんでください!

追伸Python の投稿が出版されたらすぐに読みたい場合は、Python Wizardry ニュースレターを購読して、投稿を 1 つも見逃さないようにしてください!

以上是使用 AppSignal 監控 Python Django 應用程式的效能的詳細內容。更多資訊請關注PHP中文網其他相關文章!