Found 3 related articles

Several tips for avoiding pitfalls when using ChatGLM

Article Introduction: Yesterday I mentioned that after returning from the Data Technology Carnival, I deployed ChatGLM and planned to explore using large language models to build a database operations and maintenance (O&M) knowledge base. Many friends didn't believe it: "Lao Bai, at your age, you still tinker with these things yourself?" To put their doubts to rest, today I'll walk through how I spent the past two days tinkering with ChatGLM, and share some tips for avoiding pitfalls with anyone interested in trying it. "ChatGLM-6B is built on GLM, a language model jointly trained by Tsinghua University's KEG Laboratory and Zhipu AI in 2023. It is a large language model that provides appropriate responses and support for users' questions and requests." The answer above was generated by ChatGLM itself.

2023-05-02

ChatGLM, a Tsinghua-affiliated dialogue model built on a 100-billion-parameter base model, enters invitation-only internal testing, with an open-source single-GPU version released

Article Introduction: The release of ChatGPT has shaken up the entire AI field, and major tech companies, startups, and university teams are following suit. Heart of the Machine has recently reported on results from many startups and university teams. Yesterday, another large-scale Chinese AI dialogue model made its debut: ChatGLM, developed by Zhipu AI, a company spun out of Tsinghua University research, on top of the 100-billion-parameter base model GLM-130B, has entered invitation-only internal testing. Notably, Zhipu AI has also open-sourced ChatGLM-6B, a Chinese-English bilingual dialogue model that can run inference on a single consumer-grade graphics card. Internal test application website: chatglm.cn. Reportedly, the capability gains in the current version of ChatGLM come mainly from independent

2023-04-30

Want ChatGPT on your own computer? The Chinese open-source large language model ChatGLM makes it possible!

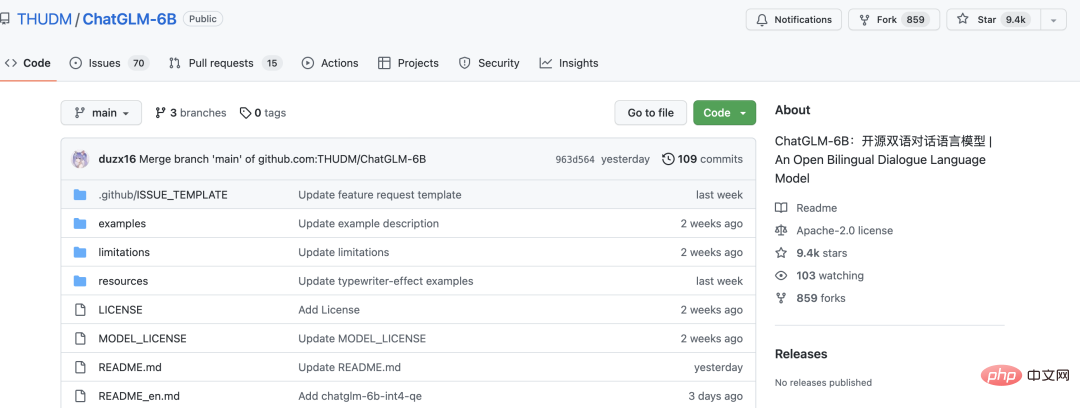

Article Introduction: Hello everyone. Today I'd like to share the open-source large language model ChatGLM-6B, which earned nearly 10,000 stars within ten days. ChatGLM-6B is an open-source conversational language model that is bilingual in Chinese and English. It is based on the General Language Model (GLM) architecture and has 6.2 billion parameters. Combined with model quantization, it can be deployed locally on consumer-grade graphics cards (a minimum of 6GB of video memory is required at the INT4 quantization level). ChatGLM-6B uses techniques similar to ChatGPT's and is optimized for Chinese question answering and dialogue. After bilingual training on about 1T tokens of Chinese and English text, supplemented by

2023-04-13
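Why does quantization make a 6.2-billion-parameter model fit on a consumer card? A rough back-of-the-envelope sketch follows, assuming that only the weights are counted at a fixed number of bytes per parameter (2 for FP16, 1 for INT8, 0.5 for INT4). Activations, the KV cache, unquantized layers and framework overhead are deliberately ignored, so this is a lower bound and not a measurement of ChatGLM-6B's actual memory use.

```python
# Rough weight-only memory estimate for a 6.2-billion-parameter model.
# Assumption: only the weights are counted; activations, KV cache,
# unquantized layers and framework overhead are ignored, so real usage is higher.

PARAMS = 6.2e9  # parameter count stated for ChatGLM-6B

# Bytes per parameter at common precisions (INT4 packs two weights per byte).
BYTES_PER_PARAM = {"FP16": 2.0, "INT8": 1.0, "INT4": 0.5}


def weight_memory_gib(params: float, precision: str) -> float:
    """Return the memory needed to hold the weights alone, in GiB."""
    return params * BYTES_PER_PARAM[precision] / 2**30


if __name__ == "__main__":
    for precision in BYTES_PER_PARAM:
        print(f"{precision}: {weight_memory_gib(PARAMS, precision):.1f} GiB")
```

Under these assumptions the weights come to about 11.5 GiB in FP16, 5.8 GiB in INT8 and 2.9 GiB in INT4. The INT4 figure leaves headroom under the 6GB minimum quoted above for the runtime costs this sketch leaves out.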