Translator| Chen Jun

##Reviewer| Chonglou

Recently, we implemented a customized artificial intelligence (AI) project. Given that Party A holds very sensitive customer information, for security reasons we cannot pass it to OpenAI or other proprietary models. Therefore, we downloaded and ran an open source AI model in an AWS virtual machine, keeping it completely under our control. At the same time, Rails applications can make API calls to AI in a safe environment. Of course, if security issues do not have to be considered, we would prefer to cooperate directly with OpenAI.

Next, I will share with you how to download the open source AI model locally, let it run, and how to Run the Ruby script against it.

#The motivation for this project is simple: data security. When handling sensitive customer information, the most reliable approach is usually to do it within the company. Therefore, we need customized AI models to play a role in providing a higher level of security control and privacy protection.

In the past6In the past few months, products such as: Mistral, Mixtral and Lama# have appeared on the market. ## and many other open source AI models. Although they are not as powerful as GPT-4, the performance of many of them has exceeded GPT-3.5, and with the As time goes by, they will become stronger and stronger. Of course, which model you choose depends entirely on your processing capabilities and what you need to achieve.

#Since we will be running the AI model locally, a size of approximately4GB was chosen Mistral. It outperforms GPT-3.5 on most metrics. Although Mixtral performs better than Mistral, it is a bulky model requiring at least 48GBMemory can run.

ParametersLLM ), we often consider mentioning their parameter sizes. Here, the Mistral model we will run locally is a 70# model with 70 million parameters (of course, Mixtral has 700 billion parameters, while GPT-3.5 has approximately 1750 billion parameters).

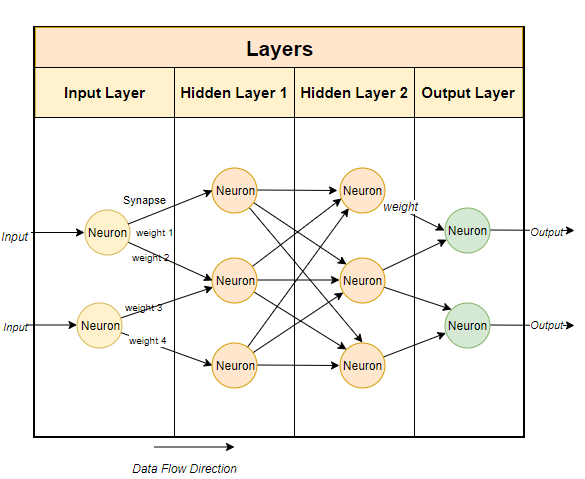

# Typically, large language models use neural network-based techniques. Neural networks are made up of neurons, and each neuron is connected to all other neurons in the next layer.

The purpose of the neural network is to "learn" an advanced algorithm, a pattern matching algorithm. By being trained on large amounts of text, it will gradually learn the ability to predict text patterns and respond meaningfully to the cues we give it. Simply put, parameters are the number of weights and biases in the model. It gives us an idea of how many neurons there are in a neural network. For example, for a model with 70 billion parameters, there are approximately 100 layers, each with thousands of neurons .

To run the open source model locally, you must first download it Related applications. While there are many options on the market, the one I find easiest and easiest to run on IntelMac is Ollama.

Although Ollama is currently only available on Mac and It runs on Linux, but it will also run on Windows in the future. Of course, you can use WSL (Windows Subsystem for Linux) to run Linux shell# on Windows ##.

Ollama not only allows you to download and run a variety of open source models, but also opens the model on a local port, allowing you to Ruby code makes API calls. This makes it easier for Ruby developers to write Ruby applications that can be integrated with local models.

Since Ollama is mainly based on the command line , so installing Ollama on Mac# and Linux systems is very simple. You just need to download Ollama through the link//m.sbmmt.com/link/04c7f37f2420f0532d7f0e062ff2d5b5, spend5 It will take about a minute to install the software package and then run the model.

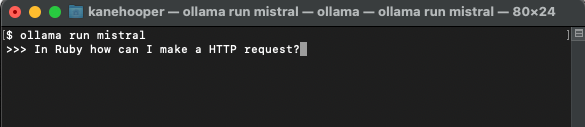

##ollama run mistral

Since Mistral is approximately 4GB, it will take some time for you to complete the download. Once the download is complete, it will automatically open the Ollama prompt for you to interact and communicate with Mistral.

#Next time you run it through Ollamamistral, you can run the corresponding model directly.

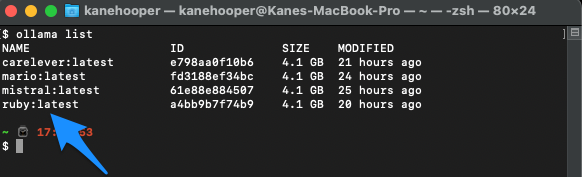

OpenAI## Create a customized GPT in #. Through Ollama, you can customize the basic model. Here we can simply create a custom model. For more detailed cases, please refer to Ollama's online documentation. First, you can create a Modelfile (model file) and add the following text in it:

FROM mistral# Set the temperature set the randomness or creativity of the responsePARAMETER temperature 0.3# Set the system messageSYSTEM ”””You are an excerpt Ruby developer. You will be asked questions about the Ruby Programminglanguage. You will provide an explanation along with code examples.”””

Next, you can run the following command on the terminal to create a new model:

ollama create

Ruby. ollama create ruby -f './Modelfile'

ollama list

At this point you can use Run the custom model with the following command:

Although Ollama does not yet have a dedicated gem, butRuby Developers can interact with models using basic HTTP request methods. Ollama running in the background can open the model through the 11434 port, so you can open it via "//m.sbmmt.com/link/ dcd3f83c96576c0fd437286a1ff6f1f0" to access it. Additionally, the documentation for OllamaAPI also provides different endpoints for basic commands such as chat conversations and creating embeds.

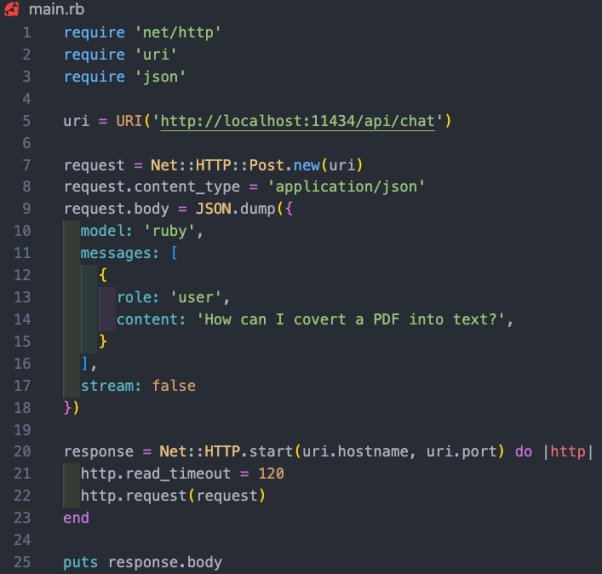

In this project case, we want to use the /api/chat endpoint to send prompts to the AI model. The image below shows some basic Ruby code for interacting with the model:

The functions of the above Ruby code snippet include:

As mentioned above, running the local AI model The real value lies in assisting companies holding sensitive data, processing unstructured data such as emails or documents, and extracting valuable structured information. In the project case we participated in, we conducted model training on all customer information in the customer relationship management (CRM) system. From this, users can ask any questions they have about their customers without having to sift through hundreds of records.

Translator introduction

Julian Chen ), 51CTO community editor, has more than ten years of experience in IT project implementation, is good at managing and controlling internal and external resources and risks, and focuses on disseminating network and information security knowledge and experience.

Original title: ##How To Run Open-Source AI Models Locally With Ruby By Kane Hooper

The above is the detailed content of To protect customer privacy, run open source AI models locally using Ruby. For more information, please follow other related articles on the PHP Chinese website!