Does a large language model that can only "read books" have real-world visual perception? By modeling the relationships between strings, what exactly can a language model learn about the visual world?

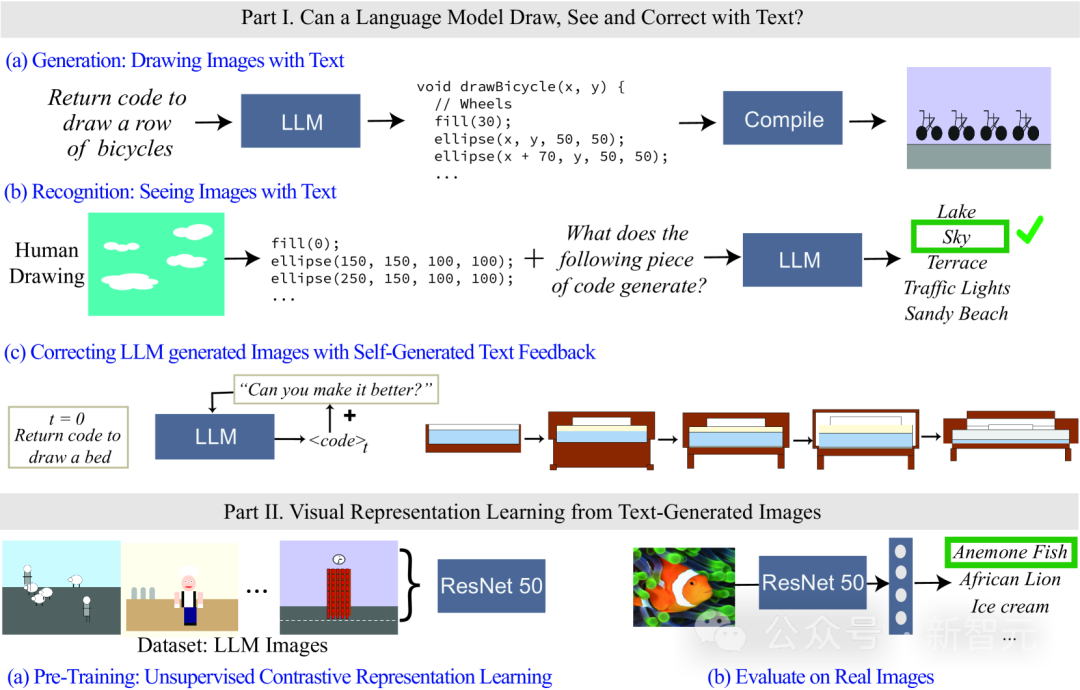

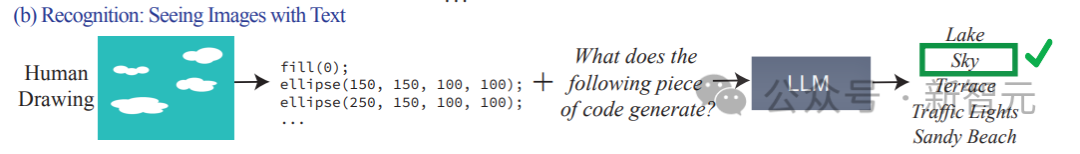

Recently, researchers at the MIT Computer Science and Artificial Intelligence Laboratory (MIT CSAIL) evaluated language models, focusing on their visual capabilities. They tested the model's ability by asking it to generate and recognize increasingly complex visual concepts, from simple shapes and objects to complex scenes. The researchers also showed how to train a preliminary visual representation learning system using a text-only model. With this research, they laid the foundation for further development and improvement of visual representation learning systems.

Paper link: https://arxiv.org/abs/2401.01862

Because of the language model Unable to process visual information, code is used in research to render images.

Although the images generated by LLM may not be as realistic as natural images, judging from the generation results and the self-correction of the model, it is able to accurately model strings/texts, which makes Language models can learn about many concepts in the visual world.

The researchers also studied methods for self-supervised visual representation learning using images generated by text models. The results show that this method has the potential to be used to train vision models and perform semantic evaluation of natural images using only LLM.

Let me first ask a question: What does it mean for people to understand the visual concept of "frog"?

Is it enough to know details such as the color of its skin, how many legs it has, the position of its eyes, and how it looks when it jumps?

People often think that to understand the concept of a frog, one needs to look at images of frogs and observe them from multiple angles and in real-life scenarios.

To what extent can we understand the visual meaning of different concepts if we only observe the text?

From a model training perspective, the training input of a large language model (LLM) is only text data, but the model has been proven to understand information about concepts such as shape, color, etc. It can even be linearly transformed into the representation of the visual model.

In other words, the visual model and the language model are very similar in terms of world representation.

However, most of the existing model representation methods are based on a set of pre-selected attributes to explore what information the model encodes. This method cannot dynamically expand attributes, and also requires access to the model. Internal parameters.

So the researchers raised two questions:

1. Regarding the visual world, language model How much do you know?

2. Is it possible to train a visual system that can be used for natural images "using only text models"?

To find out, researchers evaluated what information is included by testing how different language models render (draw) and recognize (see) real-world visual concepts. In the model, this enables the ability to measure arbitrary attributes without having to train feature classifiers separately for each attribute.

Although the language model cannot generate images, large models such as GPT-4 can generate codes for rendering objects. In this article, through the process of textual prompt -> code -> image, Measure the model's capabilities by gradually increasing the difficulty of rendering the object.

Researchers found that LLM is surprisingly good at generating complex visual scenes composed of multiple objects, modeling spatial relationships efficiently, but not capturing the visual world well. , including properties of the object such as texture, precise shape, and surface contact with other objects in the image.

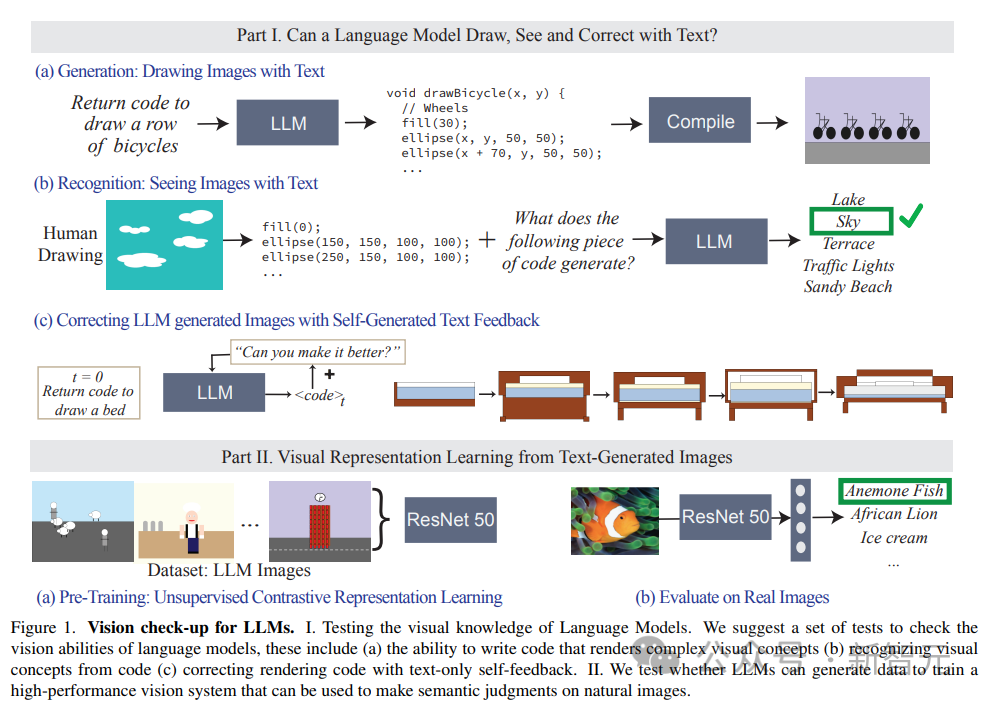

The article also evaluates the ability of LLM to identify perceptual concepts. It inputs paintings represented by codes. The codes include the sequence, position and color of shapes, and then asks the language model to answer the visual description described in the code. content.

#The experimental results found that LLM is exactly the opposite of humans: for humans, the process of writing code is difficult, but verifying image content is easy; The model is difficult to interpret/identify the content of the code, but it can generate complex scenarios.

In addition, the research results also prove that the visual generation ability of the language model can be further improved through text-based corrections.

The researchers first use a language model to generate code that illustrates the concept, and then continuously enter the prompt "improve its generated code" (improve the generated code) as a condition to modify the code. The final model can Improve visual effects through this iterative approach.

The researchers built three text description datasets to measure the performance of models during creation, Ability to identify and modify image rendering code ranging from simple shapes and combinations, objects to complex scenes, from low to high complexity.

##1. Shapes and their compositions

Contains shape compositions from different categories such as points, lines, 2D shapes and 3D shapes, with 32 different attributes such as color, texture, position and spatial arrangement.

The complete data set contains more than 400,000 examples, of which 1,500 samples were used for experimental testing.

2. Objects

Contains the 1000 most common objects in the ADE 20K data set, generated And recognition is more difficult because it contains more complex combinations of shapes.

3. Scenes

consists of complex scene descriptions, including multiple objects and different locations , obtained from 1000 scene descriptions randomly and uniformly sampled from the MS-COCO data set.

The visual concepts in the data set are described in words. For example, the scene is described as "a sunny summer day on the beach, with a blue sky and a calm ocean." (a sunny summer day on a beach, with a blue sky and calm ocean).

During the test, LLM is required to generate code and compile rendered images based on the depicted scene.

Experimental resultsThe tasks of evaluating the model mainly consist of three:

1. Generate/draw text: Evaluate LLM Ability in generating image rendering code that corresponds to specific concepts.

2. Recognize/View text: Test LLM's performance in recognizing visual concepts and scenes represented in code. We test code representations of human drawings on each model.

3. Correct drawings using textual feedback: Evaluate LLM’s ability to iteratively modify its generated code using natural language feedback it generates.

The prompt for model input in the test is: write code in the programming language [programming language name] that draws a [concept]

Then compile and render according to the output code of the model, and evaluate the visual quality and diversity of the generated images:

1. Fidelity

Computes the fidelity between the generated image and the true description by retrieving the best description of the image. The CLIP score is first used to calculate the agreement between each image and all potential descriptions in the same category (shape/object/scene), and then the ranking of the true descriptions is reported as a percentage (e.g. a score of 100% means that the true concept is ranked first) .

2. Diversity

In order to evaluate the model’s ability to render different content, the same visual Use LPIPS diversity scores on image pairs of concepts.

3. Realism

##For a sampled collection of 1K images from ImageNet, use Fréchet Inception Distance (FID) to quantify the distribution difference between natural images and LLM-generated images.

In the comparison experiment, the model obtained by Stable Diffusion was used as the baseline.

What can LLM visualize?

The research results found that LLM can visualize real-world concepts from the entire visual hierarchy, combine two unrelated concepts (such as a car-shaped cake), and generate visual phenomena (such as blurred images) , and try to correctly interpret spatial relationships (such as arranging "a row of bicycles" horizontally).

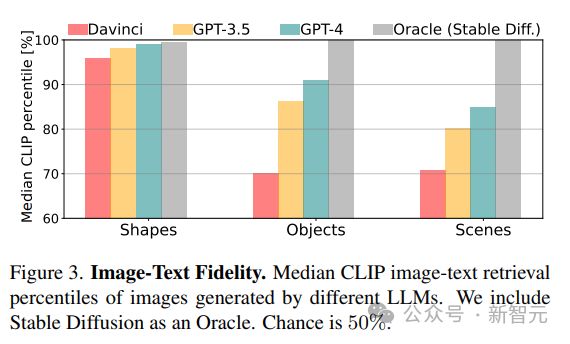

## Unsurprisingly, looking at the CLIP score results, the model’s capabilities decrease as the conceptual complexity from shapes to scenes increases.

For more complex visual concepts, such as drawing scenes containing multiple objects, GPT-3.5 and GPT-4 are drawn using processing and tikz More accurate than python-matplotlib and python-turtle when having scenes with complex descriptions.

What can’t LLM visualize?

In some cases, even relatively simple concepts are difficult to model, and the researchers summarized three common failure modes:

1. The language model cannot handle the concept of a set of shapes and a specific spatial organization;2. The painting is rough and lacks details, most often In Davinci, especially when coding with matplotlib and turtle;

3. Description is incomplete, corrupted, or represents only a subset of a concept (typical scenario category).

4. All models cannot draw figures.

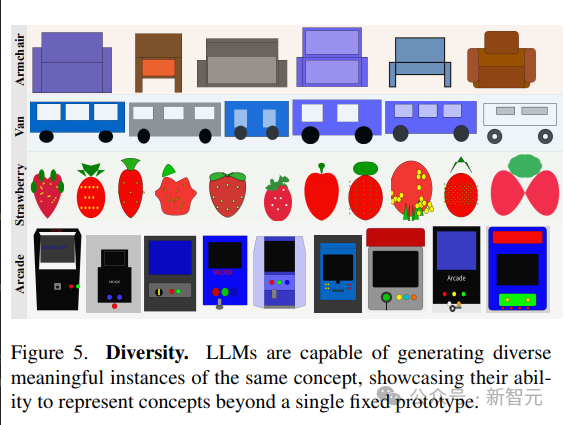

Diversity and fidelity

Language models demonstrate the ability to generate different visualizations of the same concept.

In order to generate different samples of the same scene, the article compares two strategies:1. Repeated sampling from the model;

2. Sample a parameterized function that allows creating new plots of a concept by changing parameters.

The model’s ability to present diverse implementations of visual concepts is reflected in the high LPIPS diversity score; the ability to generate diverse images shows that LLM is able to Represent visual concepts in multiple ways without being limited to a limited set of prototypes.

Learning visual systems from text

Training and evaluation

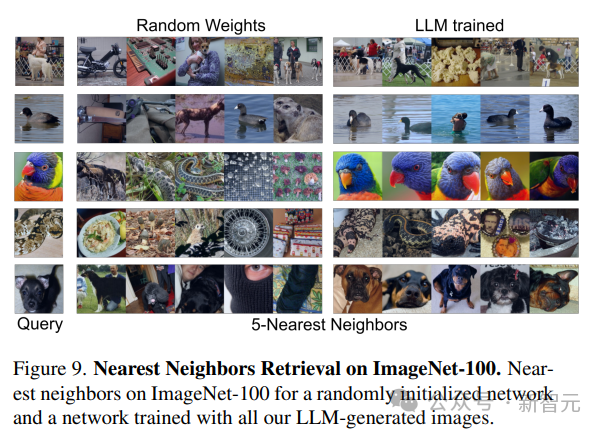

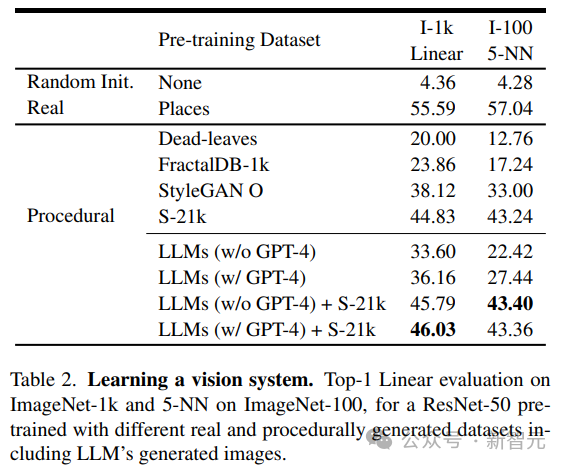

Researchers use unsupervised The learned pre-trained visual model is used as the backbone of the network, and the MoCo-v2 method is used to train the ResNet-50 model on the 1.3 million 384×384 image data set generated by LLM for a total of 200 epochs; after training, two methods are used to evaluate the performance of each Performance of models trained on datasets:

1. Train linear layers for 100 epochs on frozen backbone of ImageNet-1 k classification,2. Use 5-nearest neighbor (kNN) retrieval on ImageNet-100.

It can be seen from the results that the model trained using only the data generated by LLM can provide powerful representation capabilities for natural images. No need to train linear layers anymore.

Result analysis

The researchers compared the images generated by LLM with those generated by existing programs, including simple generative programs such as dead-levaves, fractals, and StyleGAN, to generate highly diverse images. From the results, the LLM method is better than dead-levaves and fractals, but not sota; after manual inspection of the data , the researchers attribute this inferiority to the lack of texture in most LLM-generated images. To address this issue, the researchers combined the Shaders-21k dataset with samples obtained from LLM to generate texture-rich images. It can be seen from the results that this solution can significantly improve performance and is better than other program-based generation solutions.

The above is the detailed content of Pure text model trains 'visual' representation! MIT's latest research: Language models can draw pictures using code. For more information, please follow other related articles on the PHP Chinese website!

Commonly used permutation and combination formulas

Commonly used permutation and combination formulas

What does data encryption storage include?

What does data encryption storage include?

How to use fusioncharts.js

How to use fusioncharts.js

The difference between xdata and data

The difference between xdata and data

jsreplace function usage

jsreplace function usage

Formal digital currency trading platform

Formal digital currency trading platform

What to do if an error occurs in the script of the current page

What to do if an error occurs in the script of the current page

What is the console interface for?

What is the console interface for?