Today I will talk about applying large models to time series forecasting. As large models have matured in NLP, a growing body of work tries to apply them to time series forecasting. This article introduces the main approaches and summarizes some recent related work, to help readers understand how time series forecasting is being studied in the era of large models.

Over the past three months, a good deal of work on large-model time series forecasting has appeared. It falls broadly into two categories.

The first category applies existing NLP large models directly to time series forecasting. Models such as GPT and Llama are used as forecasters, and the key question is how to convert time series data into a form suitable as input to the model.

The second category trains large models specifically for the time series domain. Here, a large collection of time series datasets is used to jointly train a GPT- or Llama-style model for time series, which is then applied to downstream time series tasks.

For each of these two categories, some representative works on large-model time series forecasting are introduced below.

The first category, applying NLP large models directly, produced the earliest batch of large-model time series forecasting work.

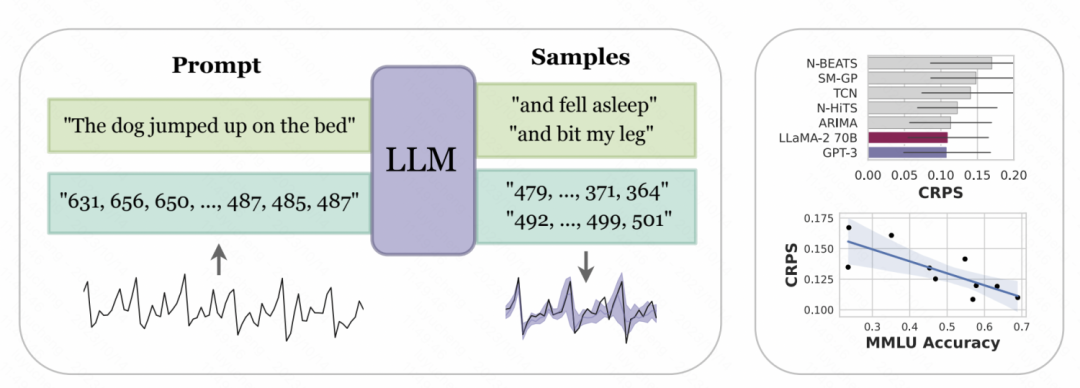

In "Large Language Models Are Zero-Shot Time Series Forecasters", a paper co-authored by New York University and Carnegie Mellon University, the numeric representation of a time series is tokenized so that it becomes input that large models such as GPT and LLaMA can read. Because different large models tokenize numbers differently, the encoding has to be adapted to each model. GPT's tokenizer, for example, splits a string of digits into multi-digit subsequences, which hurts the model's learning, so the paper inserts a space between digits to fit GPT's input format. More recent large models such as LLaMA generally split numbers into individual digits, so no spaces are needed. In addition, to keep the input sequence from growing too long when the series values are large, the paper rescales the original time series into a more reasonable range.
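The encoding described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not the paper's code: the function names `encode_series` and `decode_series`, the fixed precision of two digits, the comma separator between time steps, and the max-based scaling rule are all choices made here for the example.

```python
def encode_series(values, precision=2, digit_spaces=True, scale=None):
    """Serialize floats into a digit string (illustrative format, not the paper's exact one)."""
    if scale is None:
        # Assumed scaling rule: divide by the largest magnitude so values fall in [-1, 1].
        scale = max(abs(v) for v in values) or 1.0
    chunks = []
    for v in values:
        # Fixed precision lets us drop the decimal point: 0.25 -> "25".
        n = round(abs(v) / scale * 10 ** precision)
        digits = str(n)
        if digit_spaces:
            # Space-separated digits, for tokenizers that would otherwise merge them.
            digits = " ".join(digits)
        sign = "-" if (v < 0 and n != 0) else ""
        chunks.append(sign + digits)
    separator = " , " if digit_spaces else ","
    return separator.join(chunks), scale


def decode_series(text, scale, precision=2):
    """Invert encode_series: parse digit chunks and undo the scaling."""
    values = []
    for chunk in text.split(","):
        digits = chunk.replace(" ", "")
        if digits in ("", "-"):
            continue  # skip empty or incomplete chunks from a truncated generation
        values.append(int(digits) / 10 ** precision * scale)
    return values


text, scale = encode_series([0.5, 1.0, 2.0])
print(text)                                                  # 2 5 , 5 0 , 1 0 0
print(encode_series([0.5, 1.0, 2.0], digit_spaces=False)[0]) # 25,50,100
print(decode_series(text, scale))                            # [0.5, 1.0, 2.0]
```

Setting `digit_spaces=True` corresponds to the GPT-style input, while `digit_spaces=False` corresponds to models that already split numbers digit by digit.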


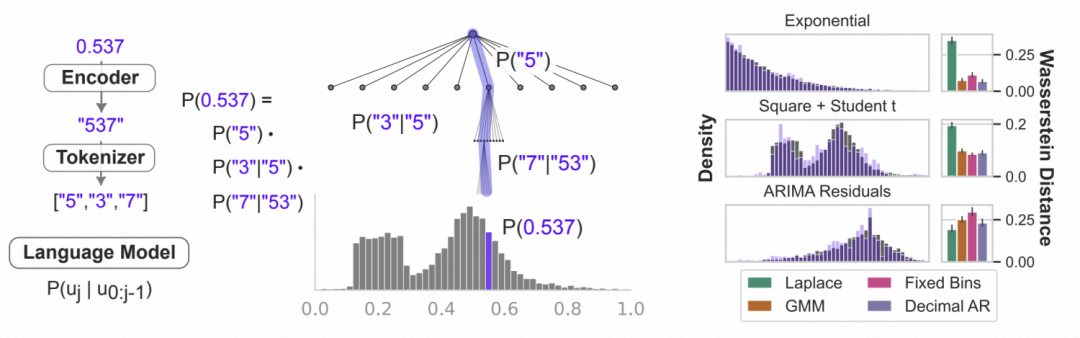

The processed digit string is fed into the large model, which autoregressively predicts the next digit; the predicted digits are finally converted back into the corresponding time series values. The figure below gives a schematic. The language model's conditional probability is used to model numbers: given the preceding digits, it predicts the probability of each possible next digit. This amounts to an iterative hierarchical softmax which, combined with the representational capacity of the large model, can fit many kinds of distributions, and that is why large models can be used for time series forecasting in this way. The predicted next-digit probabilities can also be converted into an uncertainty estimate for the forecast.
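To make the hierarchical-softmax idea concrete, here is a minimal sketch. It assumes a hypothetical stand-in, `toy_next_digit_probs`, in place of a real language model; the point is only that the probability of a whole number is the product of the conditional probabilities of its digits, and that the resulting distribution gives both a point forecast and an uncertainty estimate.

```python
import math
from itertools import product


def toy_next_digit_probs(prefix):
    """Hypothetical stand-in for an LLM: returns P(next digit | previous digits)."""
    probs = [0.0] * 10
    if len(prefix) == 0:
        probs[4] = probs[5] = 0.5   # first digit is 4 or 5
    elif prefix[0] == 4:
        probs[8] = probs[9] = 0.5   # after a 4, the next digit is 8 or 9
    else:
        probs[0] = probs[1] = 0.5   # after a 5, the next digit is 0 or 1
    return probs


def number_distribution(next_digit_probs, n_digits):
    """P(number) = product over digits of P(digit | earlier digits)."""
    dist = {}
    for digits in product(range(10), repeat=n_digits):
        p = 1.0
        prefix = []
        for d in digits:
            p *= next_digit_probs(tuple(prefix))[d]
            if p == 0.0:
                break
            prefix.append(d)
        if p > 0.0:
            dist[int("".join(map(str, digits)))] = p
    return dist


def mean_and_std(dist):
    """Point forecast (mean) and uncertainty (standard deviation) of the distribution."""
    mean = sum(v * p for v, p in dist.items())
    var = sum(p * (v - mean) ** 2 for v, p in dist.items())
    return mean, math.sqrt(var)


dist = number_distribution(toy_next_digit_probs, n_digits=2)
print(dist)                # {48: 0.25, 49: 0.25, 50: 0.25, 51: 0.25}
print(mean_and_std(dist))
```

With a real model, enumerating every digit combination is impractical, so one would more likely draw several sampled continuations and summarize them; the enumeration here is only feasible because the example is tiny.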


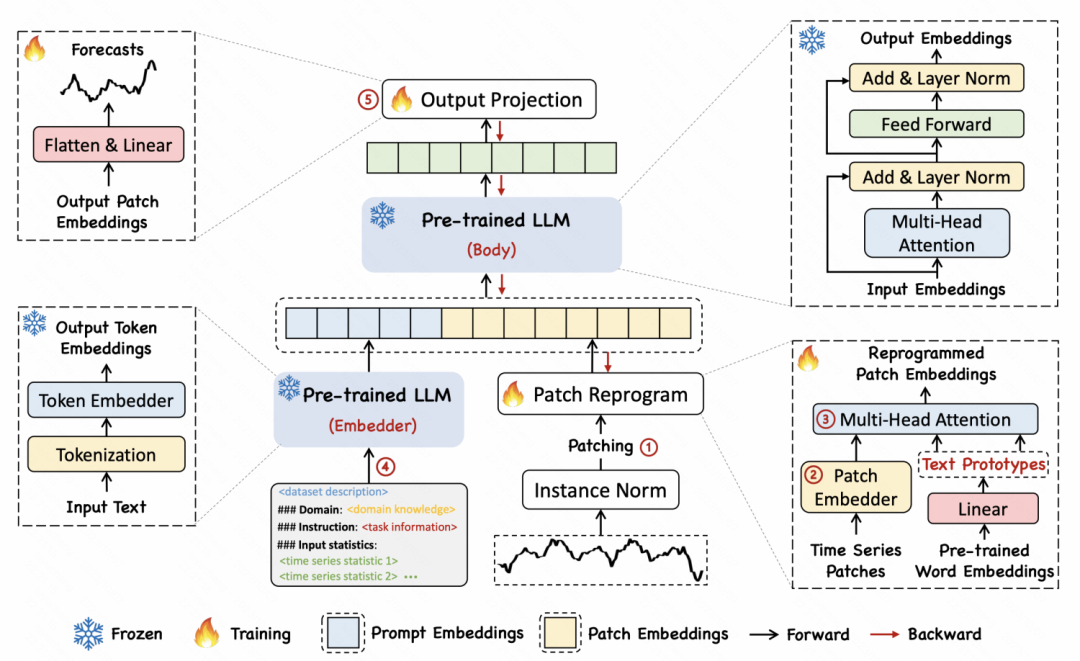

Another paper, "TIME-LLM: TIME SERIES FORECASTING BY REPROGRAMMING LARGE LANGUAGE MODELS", proposes a reprogramming method that maps time series into the text space, aligning the time series and text modalities.

Concretely, the time series is first divided into multiple patches, and each patch is passed through an MLP to obtain an embedding. The patch embeddings are then mapped onto the word vectors of the language model, which aligns time series segments with text across modalities. The paper introduces the idea of text prototypes: multiple words are mapped to a prototype that represents the semantics of a run of patches over a period of time. In the paper's example, the words "short" and "up" are mapped to the red triangles, which correspond to patches containing short-term rising subsequences of the time series.
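The patch-then-align pipeline can be sketched roughly as follows. This is a simplified illustration, not the Time-LLM implementation: it uses a single linear layer instead of an MLP, single-head attention without learned projections, and random numbers in place of trained weights and real word-derived prototypes. All function names are hypothetical.

```python
import math
import random


def make_patches(series, patch_len, stride):
    """Slice the series into (possibly overlapping) fixed-length patches."""
    return [series[i:i + patch_len]
            for i in range(0, len(series) - patch_len + 1, stride)]


def linear(x, weight):
    """Apply a weight matrix given as a list of rows (out_dim x in_dim)."""
    return [sum(w * v for w, v in zip(row, x)) for row in weight]


def softmax(scores):
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def reprogram(patch_emb, prototypes):
    """Cross-attention: the patch embedding is the query, text prototypes are keys and values."""
    d = len(patch_emb)
    scores = [sum(q * k for q, k in zip(patch_emb, proto)) / math.sqrt(d)
              for proto in prototypes]
    weights = softmax(scores)
    # The output is a weighted mix of prototypes, so it lives in the text embedding space.
    out = [sum(w * proto[j] for w, proto in zip(weights, prototypes))
           for j in range(d)]
    return out, weights


random.seed(0)
patch_len, d_model, n_prototypes = 4, 8, 3
# Random placeholders for what would be learned parameters in a trained model.
embed_weight = [[random.gauss(0, 1) for _ in range(patch_len)] for _ in range(d_model)]
prototypes = [[random.gauss(0, 1) for _ in range(d_model)] for _ in range(n_prototypes)]

series = [float(t) for t in range(10)]
patches = make_patches(series, patch_len, stride=2)
for patch in patches:
    emb = linear(patch, embed_weight)
    aligned, weights = reprogram(emb, prototypes)
    print(patch, [round(w, 3) for w in weights])
```

The attention weights show how strongly each patch is associated with each prototype, which is the role the "short" and "up" prototypes play in the paper's example.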


The second research direction borrows the way large models are built in natural language processing and directly trains a large model for time series forecasting.

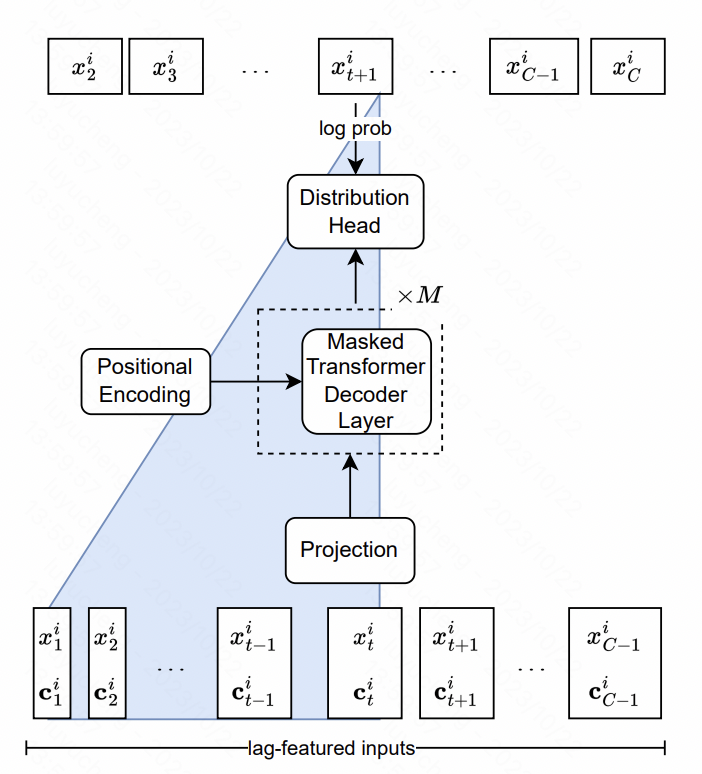

The paper "Lag-Llama: Towards Foundation Models for Time Series Forecasting" builds a Llama-style model for time series. Its core design lies at the feature level and the model-structure level.

In terms of features, the paper extracts multi-scale, multi-type lag features, built mainly from historical values of the original time series over different time windows. These sequences are fed into the model as additional features. In terms of model structure, the model follows the LLaMA architecture from NLP, whose core is a Transformer with optimized normalization and positional encoding. The final output layer uses multiple heads to fit the parameters of a probability distribution. A Gaussian distribution, for example, would require fitting a mean and a variance. This paper uses a Student's t-distribution and outputs its three parameters (degrees of freedom, mean, and scale), which yields a predicted probability distribution for each time point.
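Both ideas can be illustrated with a short sketch. It makes several assumptions that are not taken from the paper: lag features are simply past values at fixed lag offsets, the raw head outputs are mapped to valid parameters with a softplus (with degrees of freedom kept above 2), and the function names are hypothetical.

```python
import math


def lag_features(series, lags):
    """For each time step t, collect the values at t - lag for every lag."""
    max_lag = max(lags)
    return [[series[t - lag] for lag in lags]
            for t in range(max_lag, len(series))]


def softplus(x):
    """Numerically stable log(1 + exp(x)); always positive."""
    return math.log1p(math.exp(-abs(x))) + max(x, 0.0)


def student_t_params(raw_df, raw_loc, raw_scale):
    """Map three unconstrained head outputs to valid Student's t parameters (assumed mapping)."""
    df = 2.0 + softplus(raw_df)    # df > 2 keeps the variance finite
    scale = softplus(raw_scale)    # scale must be positive
    return df, raw_loc, scale


def student_t_log_prob(y, df, loc, scale):
    """Log density of a Student's t-distribution; its negative is the training loss."""
    z = (y - loc) / scale
    return (math.lgamma((df + 1) / 2) - math.lgamma(df / 2)
            - 0.5 * math.log(df * math.pi) - math.log(scale)
            - (df + 1) / 2 * math.log1p(z * z / df))


print(lag_features([1, 2, 3, 4, 5], lags=[1, 2]))   # [[2, 1], [3, 2], [4, 3]]

df, loc, scale = student_t_params(raw_df=0.0, raw_loc=4.8, raw_scale=0.0)
print(-student_t_log_prob(5.0, df, loc, scale))     # negative log-likelihood for target 5.0
```

Predicting distribution parameters rather than a single value is what lets the model return a full predictive distribution at each time point.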


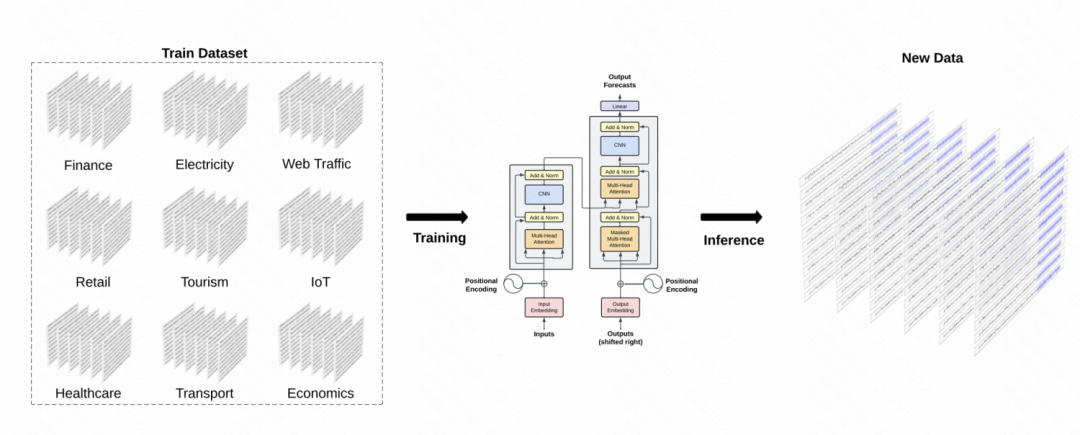

Another similar work is TimeGPT-1, which builds a GPT model for the time series domain. For training data, TimeGPT uses a large volume of time series, totaling over 100 billion data points and covering many kinds of domains. During training, larger batch sizes and smaller learning rates are used to make training more robust. The main body of the model is the classic GPT structure.


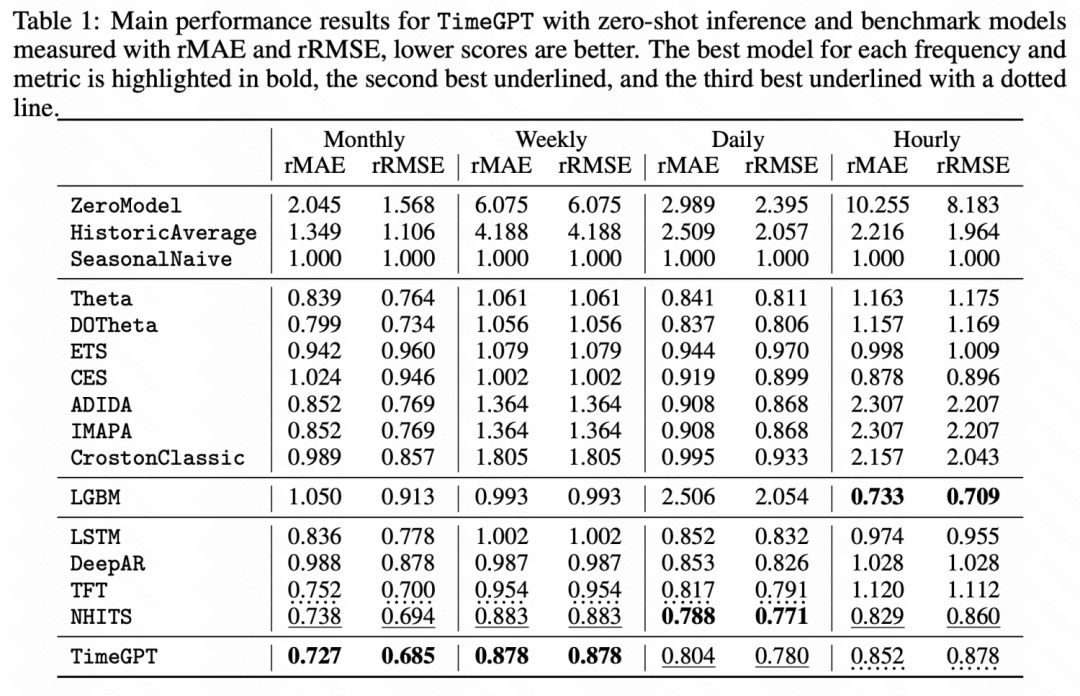

The experimental results also show that, on some zero-shot learning tasks, this pre-trained time series large model achieves significant improvements over the baseline models.


This article has introduced the research directions for time series forecasting under the wave of large models: using NLP large models directly for time series forecasting, and training large models specifically for the time series domain. Whichever approach is used, the results show the potential of large models for time series, and it is a direction worth studying in depth.
