Apache Spark is increasingly becoming a model for next-generation big data processing tools. By borrowing from open source algorithms and distributing processing tasks across clusters of compute nodes, the Spark and Hadoop generation of frameworks easily outperforms traditional frameworks, both in the types of data analysis they can perform on a single platform and in the speed with which they can perform these tasks. Spark processes data in memory, making it significantly faster (up to 100 times faster) than disk-based Hadoop.

But with a little help, Spark can run even faster. If you combine Spark with Redis (a popular in-memory data structure store), you can once again significantly improve the performance of analysis tasks. This is due to Redis' optimized data structures and its ability to minimize complexity and overhead when performing operations. Using a connector that gives Spark access to Redis' data structures and APIs can speed up Spark further.

How big is the speedup? When Redis is used together with Spark, processing the data (for the time series analysis described below) turns out to be 45 times faster than when Spark stores the data only in process memory or in an off-heap cache. That is not 45% faster, but a full 45 times faster!

Analytics speed matters more and more, because many companies need analytics to run as fast as their business transactions. As more decisions become automated, the analytics that drive those decisions should happen in real time. Apache Spark is an excellent general-purpose data processing framework; although it is not fully real-time, it is a big step toward making data useful in a more timely manner.

Spark uses Resilient Distributed Datasets (RDDs), which can be stored in volatile memory or in a persistent storage system like HDFS. RDDs are immutable and distributed across the nodes of the Spark cluster; they cannot be changed in place, but new RDDs can be created from them through transformation operations.

Spark RDD

The RDD is a key abstraction in Spark. RDDs provide a fault-tolerant way to present data efficiently to iterative processes. Because processing happens in memory, processing time can drop by orders of magnitude compared with HDFS and MapReduce.
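The idea that an RDD never changes and that transformations produce new RDDs can be illustrated with a toy model in plain Python. This is only a sketch: `ToyDataset` is a hypothetical class, not the Spark API, and it ignores the partitioning, lazy evaluation, and fault tolerance that real RDDs provide.

```python
from dataclasses import dataclass
from typing import Callable, Tuple


@dataclass(frozen=True)
class ToyDataset:
    """Hypothetical stand-in for an RDD: an immutable tuple of records."""
    records: Tuple[int, ...]

    def map(self, fn: Callable[[int], int]) -> "ToyDataset":
        # A transformation never mutates self; it returns a new dataset.
        return ToyDataset(tuple(fn(r) for r in self.records))

    def filter(self, pred: Callable[[int], bool]) -> "ToyDataset":
        # Same rule: keep matching records in a new dataset.
        return ToyDataset(tuple(r for r in self.records if pred(r)))


base = ToyDataset((1, 2, 3, 4))
doubled = base.map(lambda x: x * 2)
large = doubled.filter(lambda x: x > 4)

print(base.records)   # (1, 2, 3, 4), the original is untouched
print(large.records)  # (6, 8)
```

Because the dataclass is frozen, an attempt to reassign `base.records` raises an error, which mirrors the rule that existing datasets are never modified.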

Redis is designed specifically for high performance. Its sub-millisecond latency is the result of optimized data structures that improve efficiency by allowing operations to be performed close to where the data is stored. These data structures not only use memory efficiently and reduce application complexity, but also reduce network overhead, bandwidth consumption, and processing time. Redis supports multiple data structures, including strings, sets, sorted sets, hashes, bitmaps, hyperloglogs, and geospatial indexes. Redis data structures are like Lego bricks: simple building blocks that developers combine to implement complex functionality.

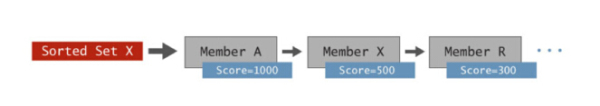

To show how these data structures can reduce an application's processing time and complexity, take the sorted set as an example. A sorted set is basically a set of members ordered by score.

Redis sorted set

You can store many types of data here, and they are automatically ordered by score. Data commonly stored in sorted sets includes items (by price), product names (by quantity), and time series data such as stock prices and sensor readings (by timestamp).

The appeal of sorted sets lies in Redis's built-in operations, which make range queries, intersections of multiple sorted sets, retrieval by member rank or score, and more simple to express and extremely fast to execute, even at scale. Built-in operations not only save code you would otherwise have to write; executing them in memory, next to the data, also reduces network latency and saves bandwidth, enabling high throughput with sub-millisecond latencies. If you use sorted sets to analyze time series data, you can often achieve performance improvements of several orders of magnitude compared with other in-memory key/value stores or disk-based databases.
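To make the sorted set operations mentioned above concrete, here is a minimal in-process model in Python. It is a sketch under stated assumptions: `ToySortedSet` is a hypothetical class whose method names merely echo the Redis commands ZADD, ZRANGEBYSCORE, and ZRANK. It does not talk to a Redis server, and its performance characteristics differ from those of Redis.

```python
import bisect
from itertools import takewhile


class ToySortedSet:
    """In-process model of a sorted set: unique members ordered by score."""

    def __init__(self):
        self._scores = {}  # member -> score
        self._order = []   # list of (score, member), kept sorted

    def zadd(self, member, score):
        # Re-adding an existing member replaces its old score.
        if member in self._scores:
            self._order.remove((self._scores[member], member))
        self._scores[member] = score
        bisect.insort(self._order, (score, member))

    def zrangebyscore(self, lo, hi):
        # Members with lo <= score <= hi, in ascending score order.
        start = bisect.bisect_left(self._order, (lo,))
        in_range = takewhile(lambda pair: pair[0] <= hi, self._order[start:])
        return [member for _, member in in_range]

    def zrank(self, member):
        # Zero-based position of the member in score order.
        return self._order.index((self._scores[member], member))


# Hypothetical items ordered by price.
prices = ToySortedSet()
prices.zadd("keyboard", 49.0)
prices.zadd("mouse", 19.5)
prices.zadd("monitor", 229.0)
prices.zadd("webcam", 59.0)

print(prices.zrangebyscore(20, 100))  # ['keyboard', 'webcam']
print(prices.zrank("mouse"))          # 0
```

The point of the sketch is that the ordering is maintained on insert, so a range query is a lookup rather than a sort-and-filter pass written in application code.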

The Redis team developed the Spark-Redis connector to improve Spark's analytics capabilities. The package lets Spark use Redis as one of its data sources. Through the connector, Spark can access Redis's data structures directly, which significantly improves the performance of various types of analysis.

Spark Redis Connector

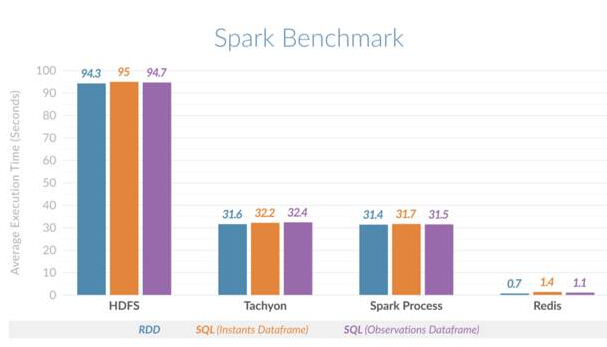

To demonstrate the benefits for Spark, the Redis team ran a benchmark that compares time series analysis in Spark by executing time slice (range) queries in several scenarios: Spark storing all data in on-heap memory, Spark using Tachyon as an off-heap cache, Spark using HDFS, and Spark combined with Redis.

The Redis team built on Cloudera's Spark time series package to create a Spark-Redis time series package that uses Redis sorted sets to speed up time series analysis. In addition to giving Spark access to all of Redis's data structures, this package performs two additional tasks:

It automatically keeps the Redis nodes aligned with the Spark cluster, so that each Spark node uses local Redis data, which minimizes latency.

It integrates with the Spark DataFrame and data source APIs to automatically translate Spark SQL queries into the most efficient retrieval mechanism for the data in Redis.

Simply put, users do not have to worry about keeping Spark and Redis operationally aligned and can continue to use Spark SQL for analysis, while getting much better query performance.
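The query translation described above can be pictured with a small Python sketch. It is not the connector's actual implementation, which the article does not show. The function names and the integer date encoding (1989-01-01 becomes 19890101) are assumptions made for illustration; only the `ZRANGEBYSCORE key min max` command form comes from Redis itself.

```python
from datetime import date


def date_to_score(d: date) -> int:
    # Assumed encoding; the article only says that the score is the date.
    return d.year * 10000 + d.month * 100 + d.day


def range_filter_to_command(symbol: str, start: date, end: date) -> list:
    """Map a filter like `symbol = ? AND day BETWEEN ? AND ?` to one command."""
    return [
        "ZRANGEBYSCORE",
        symbol,                     # one sorted set per stock
        str(date_to_score(start)),  # inclusive lower bound
        str(date_to_score(end)),    # inclusive upper bound
    ]


cmd = range_filter_to_command("AAPL", date(1989, 1, 1), date(1989, 12, 31))
print(" ".join(cmd))  # ZRANGEBYSCORE AAPL 19890101 19891231
```

The design point is that the date filter is answered by the data store in a single range lookup, instead of loading every row into Spark and filtering there.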

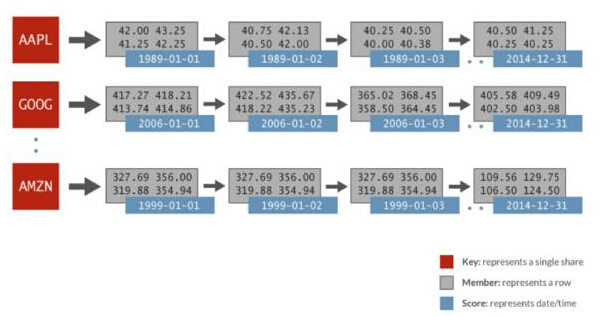

The time series data used in this benchmark is randomly generated financial data: daily values for 1,024 stocks over 32 years. Each stock is represented by its own sorted set, the score is the date, and the data members include the opening price, high price, low price, closing price, trading volume, and adjusted closing price. The following image depicts the data representation in a Redis sorted set used for Spark analysis:

Spark Redis time series

In the example above, the sorted set AAPL has a score for each day (for example, 1989-01-01), and the values for that day are stored together as one row. A simple ZRANGEBYSCORE command in Redis retrieves all the values for a given time slice, and therefore all the stock prices in the specified date range. Redis can perform these types of queries up to 100 times faster than other key/value storage systems.
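A time slice query over one stock can be sketched in plain Python as follows. This is an in-process model that mimics what ZRANGEBYSCORE returns; it is not a call to Redis. The date encoding, the comma-separated row layout, and all the numbers are made up for illustration.

```python
import bisect
from datetime import date


def score(d: date) -> int:
    # Assumed numeric encoding of the date, e.g. 19890101.
    return d.year * 10000 + d.month * 100 + d.day


# Hypothetical rows for one stock, sorted by score:
# (score, "open,high,low,close,volume,adj_close")
aapl = [
    (score(date(1989, 1, 1)), "1.00,1.20,0.90,1.10,1000,1.10"),
    (score(date(1989, 1, 2)), "1.10,1.30,1.00,1.20,1500,1.20"),
    (score(date(1989, 1, 3)), "1.20,1.25,1.05,1.10,1200,1.10"),
    (score(date(1989, 1, 4)), "1.10,1.40,1.10,1.35,1800,1.35"),
]
scores = [s for s, _ in aapl]


def time_slice(start: date, end: date) -> list:
    """Return every row with start <= day <= end, in date order."""
    lo = bisect.bisect_left(scores, score(start))
    hi = bisect.bisect_right(scores, score(end))
    return [row for _, row in aapl[lo:hi]]


rows = time_slice(date(1989, 1, 2), date(1989, 1, 3))
print(len(rows))  # 2
```

Because the rows are already ordered by date, the slice is found with two binary searches, without scanning the whole series.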

The benchmark confirms the performance improvement. Spark using Redis performed time slice queries 135 times faster than Spark using HDFS, and 45 times faster than Spark using on-heap (process) memory or Spark using Tachyon as an off-heap cache. The figure below compares the average execution time for the different scenarios:

Spark Redis benchmark comparison
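The reported factors can be put on a common scale with a few lines of arithmetic. The sketch below reads "N times faster" as a ratio of average execution times, which is an interpretation, since the article does not define the term. It uses no measured timings, only the two factors quoted above.

```python
# Speedup factors quoted in the comparison.
speedup_vs_hdfs = 135
speedup_vs_heap = 45

# Normalize the Spark + Redis query time to one unit.
redis_time = 1.0
heap_time = redis_time * speedup_vs_heap  # on-heap memory or Tachyon
hdfs_time = redis_time * speedup_vs_hdfs

# Implied gap between the two non-Redis setups.
print(hdfs_time / heap_time)  # 3.0

# Share of the on-heap query time that remains in each reading.
remaining_45x = redis_time / heap_time  # "45 times faster"
remaining_45pct = 1 / 1.45              # "45% faster"
print(round(remaining_45x, 3), round(remaining_45pct, 3))
```

Under this reading, a 45 times speedup leaves about 2% of the original query time, whereas a 45% speedup would leave about 69%.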

This guide walks you step by step through installing a standard Spark cluster and the Spark-Redis package. It uses a simple word count example to demonstrate how to use Spark and Redis together. After you have tried Spark and the Spark-Redis package, you can explore further scenarios that use other Redis data structures.

While sorted sets are well suited to time series data, Redis' other data structures (such as sets, lists, and geospatial indexes) can further enrich Spark analysis. Imagine a Spark process trying to work out which areas are suitable for launching a new product, taking into account factors such as crowd preferences and distance from the city center to optimize the launch. Now imagine how data structures with built-in analytics capabilities, such as geospatial indexes and sets, could significantly speed up this process. The Spark-Redis combination has great potential.

Spark provides a wide range of analysis capabilities, including SQL, machine learning, graph computing, and Spark Streaming. Spark's in-memory processing alone only takes you up to a certain scale. With Redis, you can go one step further: you not only improve performance by using Redis's data structures, you can also scale Spark more easily by making full use of the shared distributed in-memory data store that Redis provides to process hundreds of thousands of records, or even billions.

This time series example is just the beginning. Using Redis data structures for machine learning and graph analysis is also expected to bring significant execution time benefits to those workloads.
