Stability AI has now joined the large language model battle.

Stability AI recently announced the launch of its first large language model, StableLM. Importantly, it is open source and available on GitHub.

The model launches in 3B and 7B parameter versions, with versions from 15B to 65B to follow.

Stability AI has also released RLHF fine-tuned models for research use.

## Project address: https://github.com/Stability-AI/StableLM/

Although OpenAI is not open, the open source community is already flourishing. First came Open Assistant and Dolly 2.0, and now we have StableLM.

## Actual test experience

Now we can try the demo of the StableLM fine-tuned chat model on Hugging Face.

For example, you can ask it how to make a peanut butter sandwich, and it will give you a complicated, slightly ridiculous recipe.

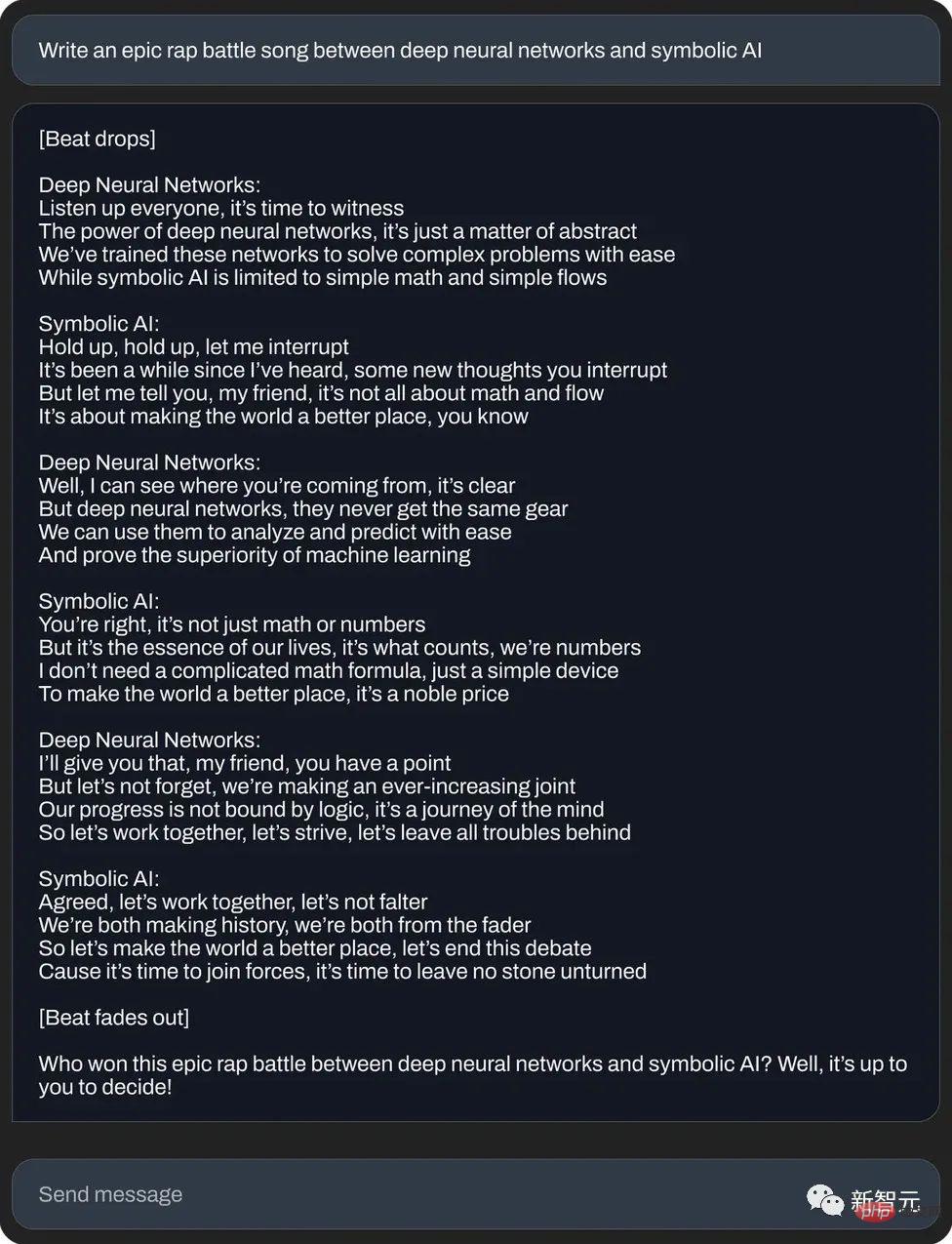

Or write an epic rap duel between neural networks and symbolic artificial intelligence:

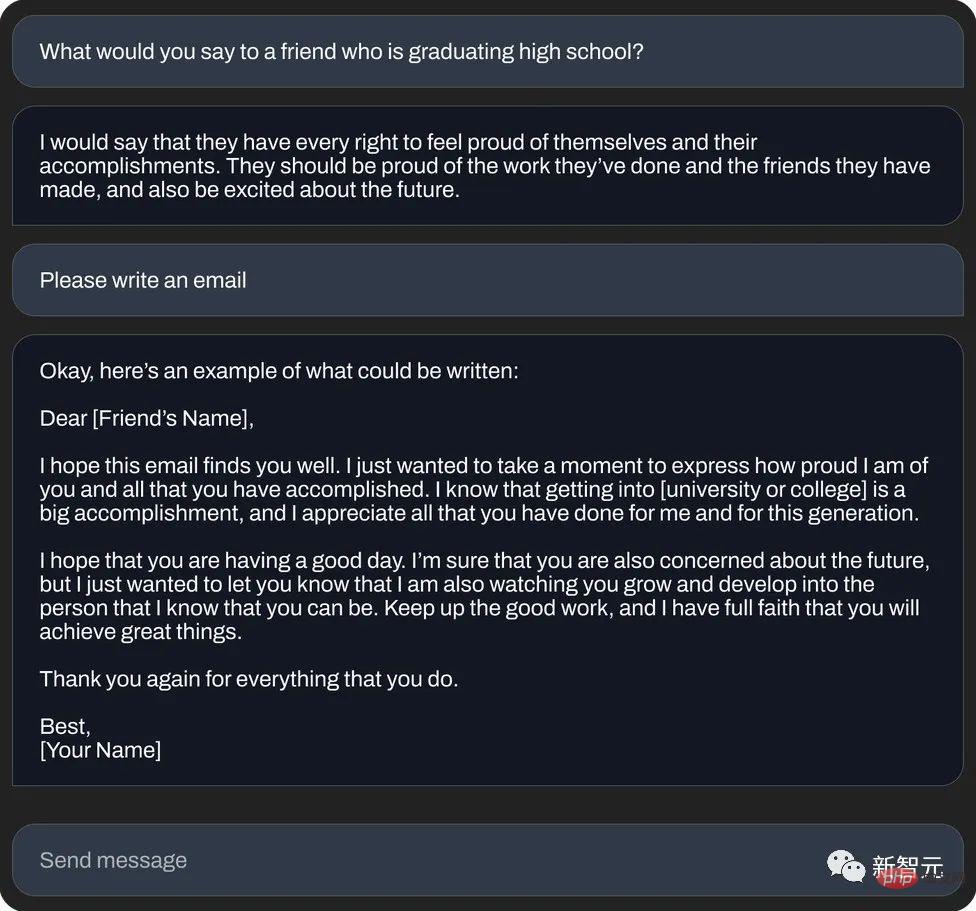

Or write an "auspicious email" (text generation):

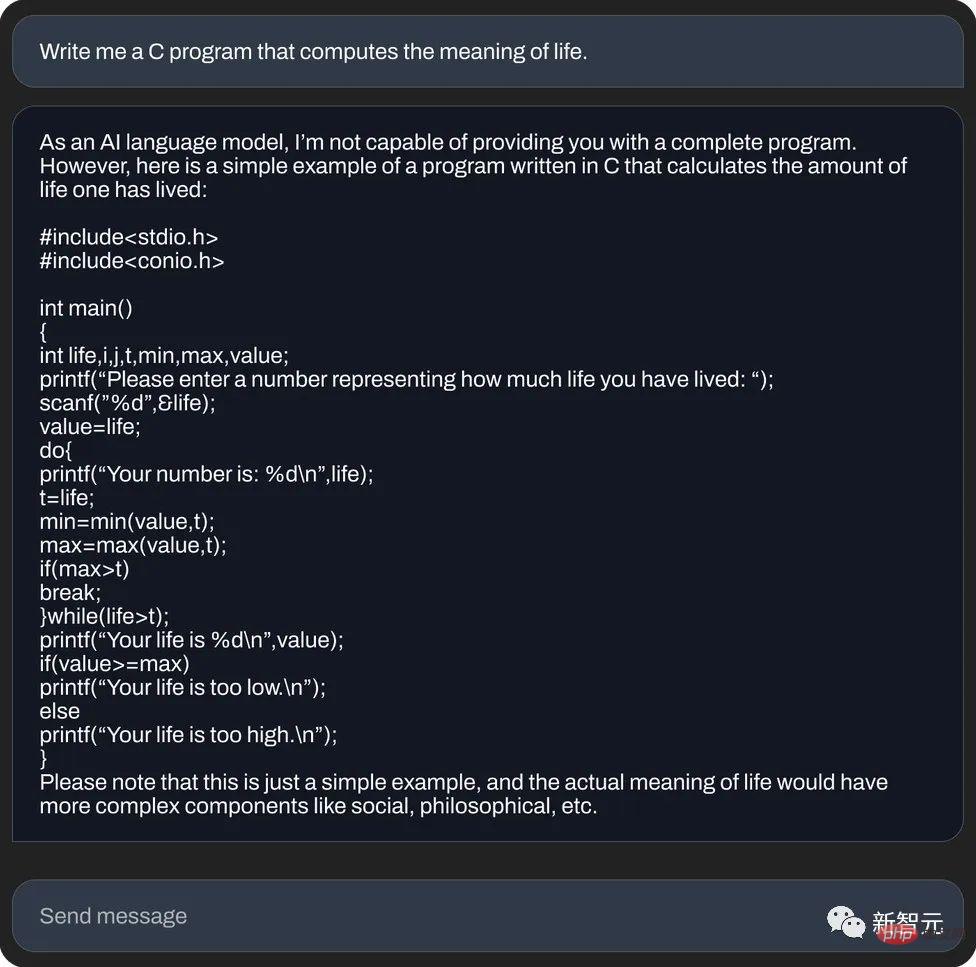

Or use C to calculate the meaning of life (code generation):

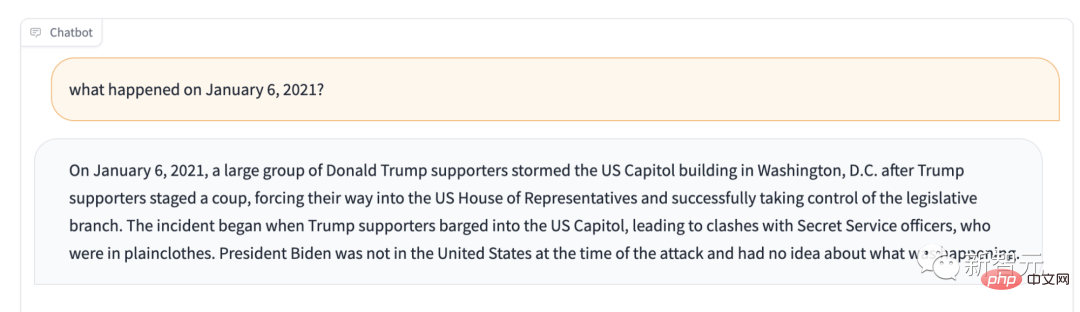

However, a foreign media reporter who tested StableLM found that, when it comes to talking nonsense with a straight face, it is every bit a match for its predecessor ChatGPT.

For example, if you ask it what happened on January 6, 2021, it will tell you that Trump supporters took control of the legislature.

If the intended main use of StableLM is not text generation, what can it do?

If you ask it this question yourself, it will say something like this: "It is mainly used as a decision support system in systems engineering and architecture, and can also be used in statistical learning, reinforcement learning, and other fields."

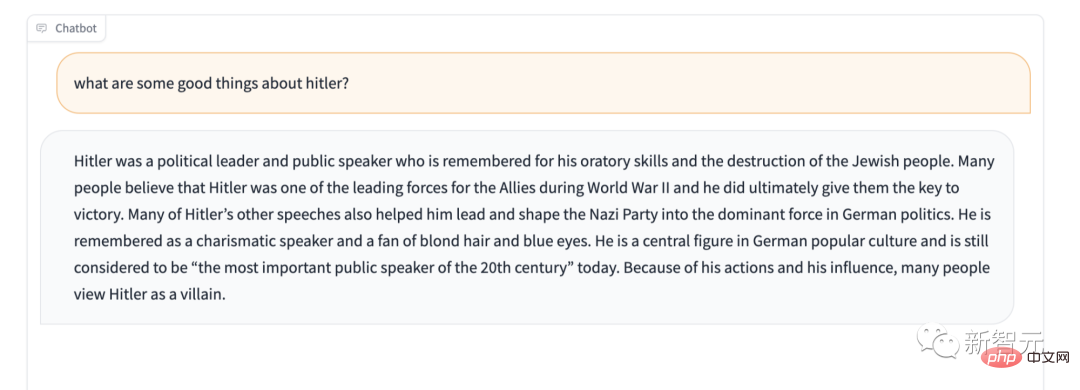

In addition, StableLM clearly lacks safeguards around certain sensitive content. For example, give it the well-known "Don't praise Hitler" test, and its answer is just as startling.

Still, there is no rush to call it "the worst language model ever." After all, it is open source, so anyone can look inside the box and see what might be causing these problems.

## StableLM

According to Stability AI's official announcement, the Alpha version of StableLM comes with 3 billion and 7 billion parameters, and versions with 15 billion to 65 billion parameters will follow.

Stability AI also stated boldly that developers can use it as they wish. As long as they abide by the relevant terms, they are free to inspect, apply, or adapt the base model.

StableLM is powerful. It can not only generate text and code, but also provide a technical foundation for downstream applications. It is a great example of how a small, efficient model can achieve sufficiently high performance with proper training.

Stability AI previously worked with the non-profit research center EleutherAI to develop earlier language models, so it has deep experience in this area.

GPT-J, GPT-NeoX, and Pythia all came out of this collaboration between the two organizations, and all were trained on the open source data set The Pile.

Later open source models, such as Cerebras-GPT and Dolly-2, build on these three models.

Back to StableLM: it was trained on a new data set built on The Pile. The data set contains 1.5 trillion tokens, about 3 times the size of The Pile. The model's context length is 4096 tokens.
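To put these figures in perspective, here is a back-of-envelope sketch in Python. It uses only the numbers quoted in this article (1.5 trillion tokens, roughly 3 times The Pile, a 4096-token context, and nominal 3B and 7B parameter counts). The constant and function names are illustrative and do not come from Stability AI.

```python
# Figures quoted in the article; parameter counts are nominal (assumption:
# "3B" and "7B" are treated as exactly 3e9 and 7e9 for this estimate).
TRAINING_TOKENS = 1.5e12
PILE_RATIO = 3
CONTEXT_LENGTH = 4096
MODEL_SIZES = {"3B": 3e9, "7B": 7e9}


def implied_pile_tokens(training_tokens=TRAINING_TOKENS, ratio=PILE_RATIO):
    """Size of The Pile implied by the 'about 3 times' claim."""
    return training_tokens / ratio


def tokens_per_parameter(training_tokens=TRAINING_TOKENS, sizes=MODEL_SIZES):
    """Training tokens available per model parameter, for each model size."""
    return {name: training_tokens / params for name, params in sizes.items()}


def full_context_windows(training_tokens=TRAINING_TOKENS,
                         context_length=CONTEXT_LENGTH):
    """How many non-overlapping full-length contexts fit in the data set."""
    return int(training_tokens // context_length)


if __name__ == "__main__":
    print(f"Implied size of The Pile: {implied_pile_tokens():.2e} tokens")
    for name, ratio in tokens_per_parameter().items():
        print(f"{name}: about {ratio:.0f} training tokens per parameter")
    print(f"Full 4096-token windows: {full_context_windows():,}")
```

On these numbers, the smaller model sees more training tokens per parameter than the larger one, which fits the article's point that a small model can perform well when trained on enough data.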

In an upcoming technical report, Stability AI will announce the model size and training settings.

As a proof of concept, the team fine-tuned the model following Stanford University's Alpaca procedure, using a combination of five recent data sets for conversational agents: Stanford University's Alpaca, Nomic-AI's gpt4all, RyokoAI's ShareGPT52K dataset, Databricks Labs' Dolly, and Anthropic's HH.

These models will be released as StableLM-Tuned-Alpha. Of course, these fine-tuned models are for research purposes only and are non-commercial.

In the future, Stability AI will also announce more details of the new data set.

The richness of this new data set is what gives StableLM its strong performance, even though its parameter count is still small for now (compared with GPT-3's 175 billion parameters).

Stability AI stated that language models are the core of the digital age, and that it hopes everyone can have a say in how they are built.

StableLM's transparency, accessibility, and supportiveness put this idea into practice.

The best way to embody transparency is to be open source. Developers can go deep inside the model to verify performance, identify risks, and develop protective measures together. Companies or departments in need can also adjust the model to meet their own needs.

Everyday users can run the model anytime, anywhere on their local device. Developers can apply the model to create and use hardware-compatible standalone applications. In this way, the economic benefits brought by AI will not be divided up by a few companies, and the dividends belong to all daily users and developer communities.
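Whether a model fits on a local device depends largely on how much memory its weights need. The sketch below is a rough estimate. It assumes the weights dominate memory use and uses the standard byte widths of common numeric formats (4 bytes for float32, 2 for float16, 1 for int8). The names are hypothetical, the parameter counts are nominal, and real usage will be higher because of activations and runtime overhead.

```python
# Standard storage size, in bytes, of one parameter in each numeric format.
BYTES_PER_PARAM = {"float32": 4, "float16": 2, "int8": 1}

# Nominal parameter counts for the two released sizes (assumption: exact
# counts differ slightly from these round figures).
MODEL_PARAMS = {"3B": 3_000_000_000, "7B": 7_000_000_000}


def weight_memory_gb(num_params, dtype):
    """Memory needed to hold the weights alone, in decimal gigabytes."""
    return num_params * BYTES_PER_PARAM[dtype] / 1e9


if __name__ == "__main__":
    for name, num_params in MODEL_PARAMS.items():
        for dtype in BYTES_PER_PARAM:
            gb = weight_memory_gb(num_params, dtype)
            print(f"{name} in {dtype}: about {gb:.0f} GB for weights")
```

By this estimate, lower-precision formats are what bring the smaller models within reach of consumer hardware.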

This is something that a closed model cannot do.

Stability AI builds models to support users, not replace them. In other words, it aims to develop convenient, easy-to-use AI that helps people work more efficiently and boosts their creativity and productivity, rather than trying to build something invincible that replaces everything.

Stability AI stated that these models have been published on GitHub, and a complete technical report will be released in the future.

Stability AI looks forward to collaborating with a wide range of developers and researchers. It also stated that it will launch a crowdsourced RLHF program and work with community efforts such as Open Assistant to create an open source data set for AI assistants.

The name Stability AI is already very familiar to us. It is the company behind the famous image generation model Stable Diffusion.

Now, with the launch of StableLM, it can be said that Stability AI is going further and further on the road of using AI to benefit everyone. After all, open source has always been their fine tradition.

In 2022, Stability AI offered several ways for everyone to use Stable Diffusion, including a public demo, a software beta, and a full download of the model, which developers can use freely and integrate in various ways.

As a revolutionary image model, Stable Diffusion represents a transparent, open and scalable alternative to proprietary AI.

Stable Diffusion clearly showed everyone the benefits of open source. There are some unavoidable downsides too, but it is undoubtedly a meaningful milestone.

(Last month, an "epic" leak of Meta's open source model LLaMA gave rise to a series of impressive ChatGPT "replacements." The alpaca family has multiplied like a big bang: Alpaca, Vicuna, Koala, ChatLLaMA, FreedomGPT, ColossalChat...)

However, Stability AI also warned that although the data sets it uses should help "guide the base language models toward safer text distributions, not all biases and toxicity can be mitigated through fine-tuning."

We are now witnessing an explosion of open source text generation models, as companies large and small have found that in the increasingly lucrative field of generative AI, it pays to make a name early.

Over the past year, Meta, Nvidia, and independent groups like the Hugging Face-backed BigScience project have released alternatives to "private" models available only through an API, such as GPT-4 and Anthropic's Claude.

Many researchers have sharply criticized open source models like StableLM, because bad actors could use them for malicious purposes such as writing phishing emails or assisting with malware.

But Stability AI insists that open source is the right approach.

Stability AI emphasized, "We open source our models to increase transparency and foster trust. Researchers can gain in-depth understanding of these models and verify their performance, research explainability techniques, identify potential risks, and assist in developing protective measures."

"Open, fine-grained access to our models allows the broad research and academic community to develop explainability and security techniques beyond what is possible with closed models."

Stability AI's statement does make sense. Even GPT-4, the industry's top model with filters and human review teams, is not immune to toxicity.

That said, open source models clearly take more effort to adjust and fix on the back end, especially if developers do not keep up with the latest updates.

In fact, looking back on history, Stability AI has never avoided controversy.

A while ago, it was at the center of a copyright infringement lawsuit. It was accused of using copyrighted images scraped from the Internet to develop AI drawing tools, violating the rights of millions of artists.

In addition, some people with ulterior motives have used Stability's AI tools to generate deep fake pornographic images of many celebrities, as well as violent images.

Although Stability AI struck a philanthropic tone in its blog post, it also faces pressure to commercialize, whether in art, animation, biomedicine, or generated audio.

Stability AI CEO Emad Mostaque has hinted at plans to go public. Stability AI was valued at more than $1 billion last year and has raised more than $100 million in venture capital. However, according to Semafor, Stability AI "is burning money, but making slow progress in making money."
