Thanks to the development of neural 3D reconstruction technology, capturing feature representations of real-world 3D scenes has never been easier.

However, a simple and effective way to edit these captured 3D scenes has so far been missing.

Recently, researchers from UC Berkeley proposed Instruct-NeRF2NeRF, a method for editing NeRF scenes with text instructions that builds on the previous work InstructPix2Pix.

Paper address: https://arxiv.org/abs/2303.12789

With Instruct-NeRF2NeRF, a single sentence is enough to edit a large-scale real-world scene, and the results are more realistic and more targeted than those of previous work.

For example, ask for the person in the scene to have a beard, and a beard appears on his face!

Or swap the head entirely and turn him into Einstein in seconds.

In addition, since the model can continuously update the data set with new edited images, the reconstruction of the scene will gradually improve.

NeRF + InstructPix2Pix = Instruct-NeRF2NeRF

Specifically, the model is given an input image and a written instruction that tells it what to do, and it follows that instruction to edit the image.

The implementation steps are as follows:

Compared with traditional 3D editing, Instruct-NeRF2NeRF is a new approach to 3D scene editing. Its biggest highlight is a technique called "iterative data set update".

Although the editing is performed on a 3D scene, the paper uses a 2D diffusion model rather than a 3D one to extract shape and appearance priors, because the data available for training 3D generative models is very limited.

This 2D diffusion model is InstructPix2Pix, developed not long ago by the same research team: a 2D image editing model driven by text instructions. Given an input image and a text instruction, it outputs the edited image.

However, applied view by view, this 2D model produces edits that are inconsistent across different viewing angles of the scene. This is what "iterative data set update" addresses: the technique alternates between modifying NeRF's data set of input images and updating the underlying 3D representation.

This means that the text-guided diffusion model (InstructPix2Pix) generates new image variations according to the instruction, and these new images are used as training input for the NeRF model. As a result, the reconstructed 3D scene reflects the text-guided edit.

In the initial iterations, InstructPix2Pix often fails to produce consistent edits across different views. As NeRF is repeatedly re-rendered and updated, however, the edits converge to a globally consistent scene.
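The alternation described above can be illustrated with a deliberately tiny toy model. This is only a sketch under strong assumptions, not the authors' implementation: each "image" is a single number, the "NeRF" is one scalar shared by every view, and `toy_edit` is a hypothetical stand-in for InstructPix2Pix that moves a rendered view toward the instructed look and adds per-view noise to mimic inconsistent 2D edits. All function names and parameters are made up for illustration.

```python
import random


def toy_edit(rendered, target, strength, noise, rng):
    """Hypothetical stand-in for the 2D editor (InstructPix2Pix in the article).

    Moves one rendered view part of the way toward the instructed look and
    adds per-view noise, mimicking edits that disagree between views.
    """
    return rendered + strength * (target - rendered) + rng.uniform(-noise, noise)


def iterative_dataset_update(num_views=8, steps=400, target=1.0,
                             strength=0.5, noise=0.1, fit_rate=0.5, seed=0):
    """Toy loop: alternate between editing one training image and refitting."""
    rng = random.Random(seed)
    dataset = [0.0] * num_views  # original captures: the unedited look
    scene = 0.0                  # toy "NeRF": one value shared by all views

    for step in range(steps):
        view = step % num_views  # cycle through the training views
        rendered = scene         # "render" the current scene from this view
        # (1) Data set update: replace this view's training image with an edit.
        dataset[view] = toy_edit(rendered, target, strength, noise, rng)
        # (2) Scene update: one fitting step toward the current data set.
        mean_image = sum(dataset) / num_views
        scene += fit_rate * (mean_image - scene)

    return scene, dataset


if __name__ == "__main__":
    scene, dataset = iterative_dataset_update()
    print(f"scene value after editing: {scene:.3f}")
    print(f"spread of individual edited views: {max(dataset) - min(dataset):.3f}")
```

In this toy, the individual edited views keep disagreeing with one another because of the noise, while the shared scene value averages them and drifts toward the instructed target. That is the intuition behind letting the 3D representation, rather than any single 2D edit, decide the final result.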

In summary, Instruct-NeRF2NeRF makes 3D scene editing more efficient by iteratively updating the image content and integrating the updated images into the 3D scene, while maintaining the scene's coherence and realism.

It can be said that this work by the UC Berkeley research team is an extended version of the earlier InstructPix2Pix. By combining NeRF with InstructPix2Pix and adding "iterative data set update", one-click editing now works on 3D scenes too!

However, since Instruct-NeRF2NeRF is built on InstructPix2Pix, it inherits many of the latter's limitations, such as the inability to perform large-scale spatial manipulations.

Additionally, like DreamFusion, Instruct-NeRF2NeRF can only use the diffusion model on one view at a time, so you may encounter similar artifact issues.

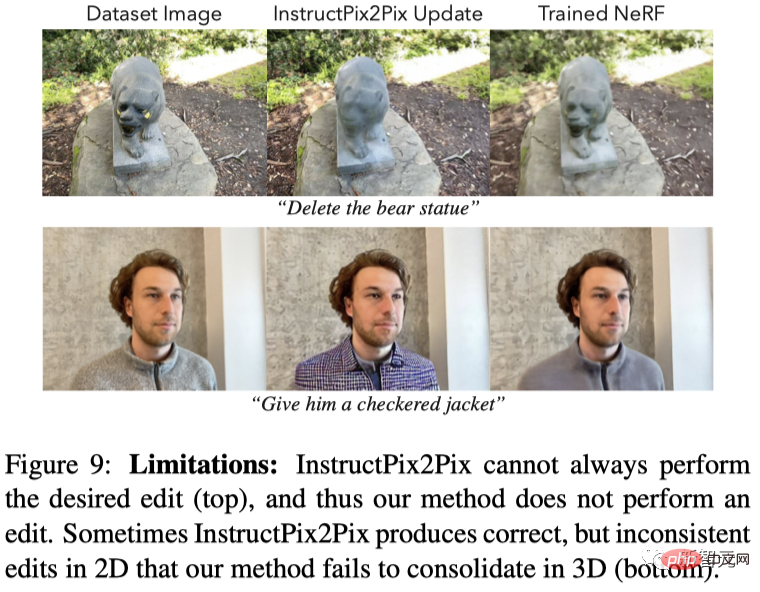

The following figure shows two types of failure cases:

(1) InstructPix2Pix fails to perform the edit in 2D, so Instruct-NeRF2NeRF also fails in 3D;

(2) InstructPix2Pix completes the edit in 2D, but the results are highly inconsistent in 3D, so Instruct-NeRF2NeRF also fails.

Another example is the "panda" below: it looks very fierce (the original statue is very fierce), its fur color is somewhat odd, and its eyes visibly distort as the view moves.

With ChatGPT, diffusion models, and NeRFs all in the spotlight, this paper can be said to play to the strengths of all three, moving from "AI drawing from one sentence" to "AI editing 3D scenes from one sentence".

Although the method has some limitations, its flaws do not outweigh its merits: it provides a simple and feasible solution for 3D scene editing, and it is expected to become a milestone in the development of NeRF.

Finally, let’s take a look at the effects released by the author.

It is not difficult to see that this one-click, Photoshop-style tool for 3D scene editing meets expectations in both instruction understanding and image realism. In the future, it may become a "new favorite" among academics and netizens alike: a Chat-NeRF following ChatGPT.

Even if you freely change the background, the season, or the weather of the scene, the resulting images remain consistent with real-world logic.

Original picture:

Autumn:

Snow day:

Reference: https://www.php.cn/link/ebeb300882677f350ea818c8f333f5b9
