As language models and their training corpora grow, large language models (LLMs) show increasing potential. Recent studies have shown that LLMs can use in-context learning (ICL) to perform a range of complex tasks, such as solving mathematical reasoning problems.

Ten researchers from Peking University, Shanghai AI Lab, and the University of California, Santa Barbara recently published a survey of in-context learning that reviews the current progress of ICL research in detail.

Paper address: https://arxiv.org/pdf/2301.00234v1.pdf

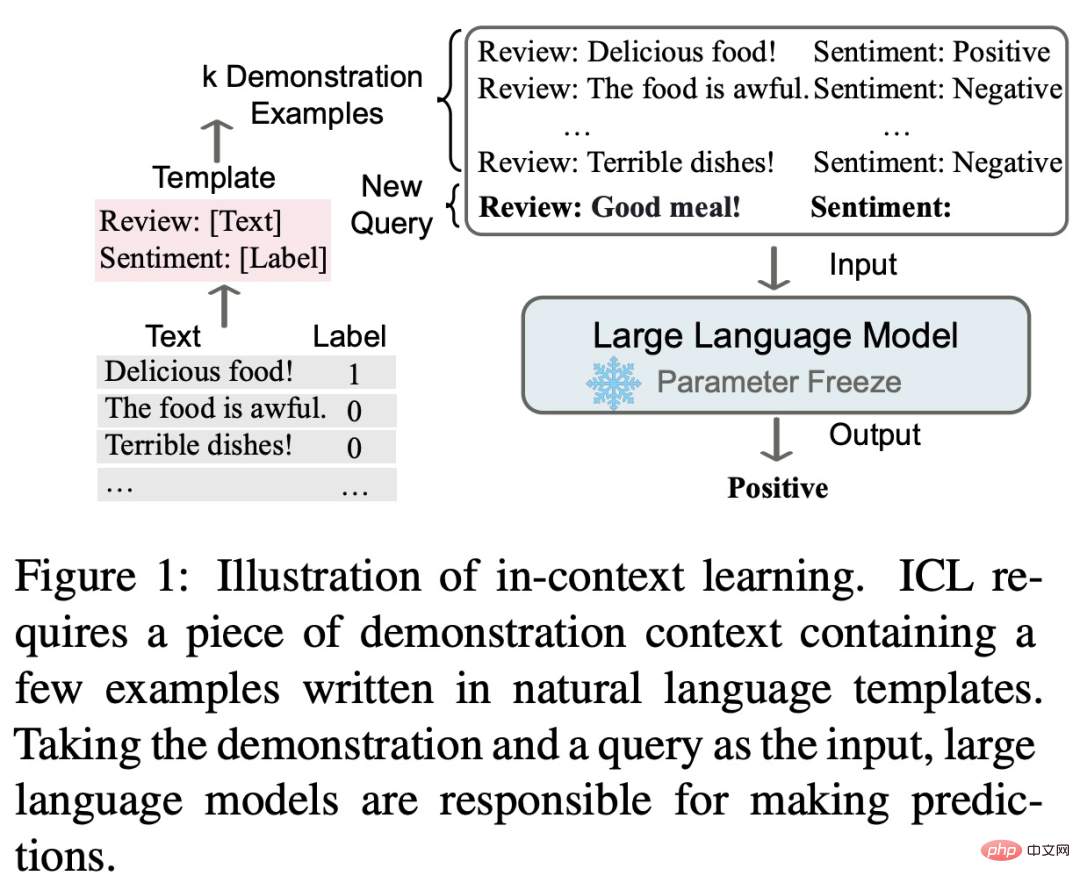

The core idea of in-context learning is learning by analogy. The following figure describes how a language model uses ICL to make decisions.

First, ICL requires a few examples to form a demonstration context, and these examples are usually written with natural language templates. ICL then concatenates the query question with the demonstration context to form a prompt, which is fed into the language model for prediction. Unlike supervised learning, whose training phase updates model parameters using backward gradients, ICL performs no parameter updates: the pre-trained language model carries out the prediction task directly. The model is expected to learn the patterns hidden in the demonstration examples and make the correct prediction based on them.
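The prompt construction described above can be sketched in a few lines of Python. This is only an illustration, not code from the survey: the `TEMPLATE` string, the `build_prompt` helper, and the sentiment examples are all hypothetical, and real templates vary by task.

```python
from typing import List, Tuple

# Hypothetical natural language template for a sentiment task.
TEMPLATE = "Review: {x}\nSentiment: {y}"


def build_prompt(demonstrations: List[Tuple[str, str]], query: str) -> str:
    """Concatenate templated demonstrations with the templated query."""
    # Each (input, label) pair becomes one filled-in template.
    blocks = [TEMPLATE.format(x=x, y=y) for x, y in demonstrations]
    # The query uses the same template with the label left empty,
    # so the model is asked to continue the text with its prediction.
    blocks.append(TEMPLATE.format(x=query, y="").rstrip())
    return "\n\n".join(blocks)


demos = [("Great film.", "positive"), ("Dull plot.", "negative")]
prompt = build_prompt(demos, "I loved it.")
print(prompt)
```

The resulting string would be passed unchanged to a pre-trained language model; no gradient step or parameter update is involved at any point.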

As a new paradigm, ICL has several attractive advantages. First, the demonstration examples are written in natural language, which provides an interpretable interface for communicating with large language models. This makes it much easier to incorporate human knowledge into language models by changing the demonstration examples and templates (Liu et al., 2022; Lu et al., 2022; Wu et al., 2022; Wei et al., 2022c). Second, in-context learning resembles the human decision-making process of learning from analogy. Third, compared with supervised training, ICL is a training-free learning framework. This not only greatly reduces the computational cost of adapting a model to new tasks, but also makes Language-Model-as-a-Service (LMaaS; Sun et al., 2022) possible and easy to apply to large-scale real-world tasks.

Despite the great promise of ICL, many questions remain worth exploring, including its performance. For example, the original GPT-3 model already has some ICL ability, but several studies have found that this ability can be significantly improved through adaptation during pre-training. Furthermore, ICL performance is sensitive to specific settings, including the prompt template, the selection of in-context examples, and the order of those examples. In addition, although the working mechanism of ICL seems intuitively reasonable, it is still not well understood, and only a few studies offer even preliminary explanations of it.

The survey attributes the strong performance of ICL to two stages:

In the training stage, the language model is trained directly on a language modeling objective, such as left-to-right generation. Although these models are not specifically optimized for in-context learning, their ICL ability is still surprising. Existing ICL research is largely built on well-trained language models.

In the inference stage, since the inputs and output labels are all represented by interpretable natural language templates, ICL performance can be improved from multiple directions. The survey describes and compares these directions in detail, such as selecting suitable examples for the demonstrations and designing specific scoring methods for different tasks.
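As a rough illustration of these two inference-time levers, the sketch below picks demonstrations by similarity to the query and then chooses the highest-scoring candidate answer. It is not the survey's method: word-overlap (Jaccard) similarity is a simple stand-in for whatever retriever is actually used, the `select_demonstrations` and `predict` helpers are hypothetical, and the scores in the usage example are made-up placeholders for values a language model would produce.

```python
from typing import Callable, List, Tuple


def jaccard(a: str, b: str) -> float:
    """Word-overlap similarity between two texts (a stand-in retriever)."""
    sa, sb = set(a.lower().split()), set(b.lower().split())
    if not sa and not sb:
        return 0.0
    return len(sa & sb) / len(sa | sb)


def select_demonstrations(
    pool: List[Tuple[str, str]], query: str, k: int
) -> List[Tuple[str, str]]:
    """Return the k pool examples whose inputs are most similar to the query."""
    ranked = sorted(pool, key=lambda ex: jaccard(ex[0], query), reverse=True)
    return ranked[:k]


def predict(candidates: List[str], score: Callable[[str], float]) -> str:
    """Pick the candidate answer with the highest score."""
    return max(candidates, key=score)


pool = [
    ("the movie was great", "positive"),
    ("the food was cold", "negative"),
    ("a great movie overall", "positive"),
]
chosen = select_demonstrations(pool, "was the movie great", k=1)

# Placeholder scores; in practice these would come from the language model,
# for example the log-probability it assigns to each candidate answer.
toy_scores = {"positive": -0.2, "negative": -1.5}
label = predict(["positive", "negative"], lambda y: toy_scores[y])
```

In a real pipeline the selected demonstrations would be formatted into the prompt, and `score` would query the model with that prompt for each candidate answer.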

The overall content and structure of the survey are shown in the figure below, including the formal definition of ICL (§3), warmup methods (§4), prompt design strategies (§5), and scoring functions (§6).

Additionally, §7 offers insight into current attempts to uncover how ICL works. §8 presents useful evaluations and resources for ICL, and §9 introduces potential application scenarios that demonstrate its effectiveness. Finally, §10 summarizes the existing challenges and potential directions in the ICL field as a reference for further development.

Interested readers can read the original text of the paper to learn more about the research details.
