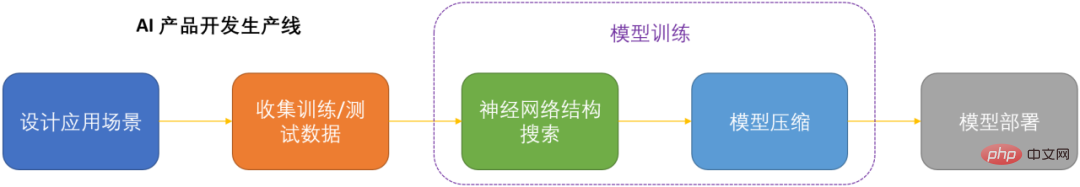

OTO is the industry’s first automated, one-stop, user-friendly and versatile neural network training and structure compression framework.

In the era of artificial intelligence, deploying and maintaining neural networks is a key issue for productization. To save computing costs while losing as little model performance as possible, compressing neural networks has become one of the keys to productizing DNNs.

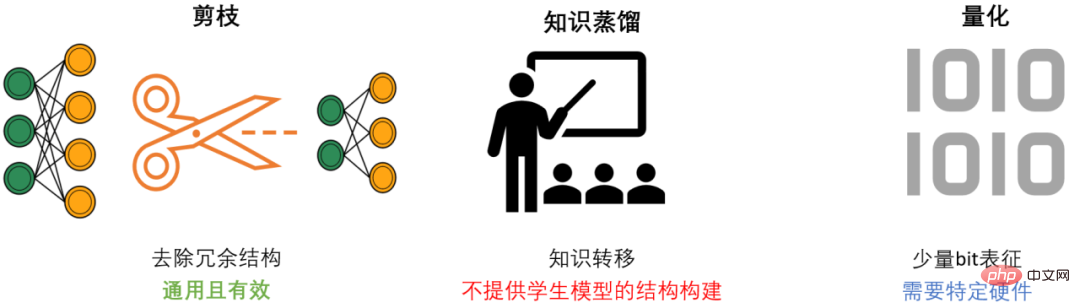

DNN compression generally relies on three methods: pruning, knowledge distillation, and quantization. Pruning aims to identify and remove redundant structures, slimming down the DNN while preserving model performance as much as possible; it is the most versatile and effective of the three. In general, the three methods complement each other and can be combined to achieve the best compression.

However, most existing pruning methods target only specific models and specific tasks and require strong domain expertise, so AI developers usually have to invest considerable effort, manpower, and resources to apply them to their own scenarios.

To address these problems with existing pruning methods and make things easier for AI developers, the Microsoft team proposed the Only-Train-Once (OTO) framework. The series of work has been published at NeurIPS 2021 (OTOv1) and ICLR 2023 (OTOv2).

With OTO, AI engineers can easily train a target neural network and obtain a high-performance, lightweight model in one stop. OTO minimizes the engineering time and effort developers must invest, and it does not need the time-consuming pre-training and additional fine-tuning that existing methods usually require.

An ideal structural pruning algorithm should, for general neural networks, train automatically from scratch in one stop and produce a high-performance, lightweight model with no subsequent fine-tuning. Because of the complexity of neural networks, however, achieving this goal is extremely challenging. Reaching it requires systematically addressing three core questions: which parameters must be removed together so that the remaining network stays valid, which of these groups are redundant and which are important, and how to build the compressed network automatically without fine-tuning.

The Microsoft team designed and implemented three core algorithms that, for the first time, systematically and comprehensively address these three problems.

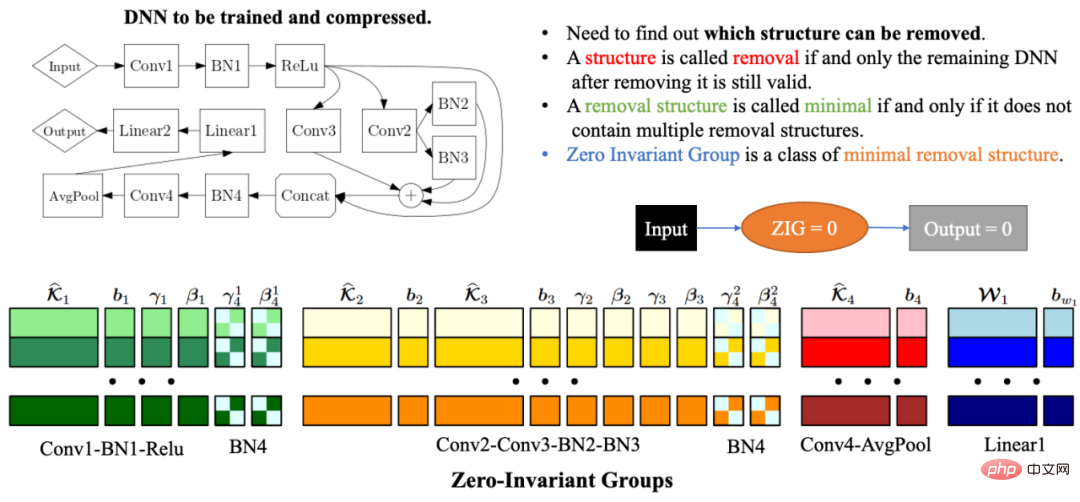

Automated Zero-Invariant Group (ZIG) partitioning

Because network structures are complex and interdependent, deleting an arbitrary structure may leave the remaining network invalid. One of the biggest problems in automated structural compression is therefore finding the model parameters that must be pruned together so that the remaining network is still valid. To solve this problem, the Microsoft team proposed Zero-Invariant Groups (ZIGs) in OTOv1. A zero-invariant group can be understood as a minimal removable unit: after the network structure corresponding to the group is removed, the remaining network is still valid. Another useful property is that if all parameters in a zero-invariant group are zero, the corresponding output is always zero, whatever the input.

In OTOv2, the researchers further proposed and implemented an automated algorithm that partitions a general network into zero-invariant groups. It is a carefully designed combination of graph algorithms and is very efficient, with linear time and space complexity.
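OTOv2's actual grouping algorithm works on the network's full computational graph and handles many operator types. The sketch below only illustrates one ingredient, under simplifying assumptions that are ours: the network is a hypothetical list of layer names, the only coupling considered is element-wise addition (for example a residual connection, which forces channel c of both summands to be removed together), and each ZIG is one output channel taken across a coupled component (a real ZIG would also include that channel's bias, normalization parameters, and so on). The function names `coupled_components` and `enumerate_zigs` are hypothetical. Breadth-first search finds the components in O(V + E), in line with the linear complexity mentioned above.

```python
from collections import defaultdict, deque


def coupled_components(layers, coupled_edges):
    """Group layers whose output channels must be pruned together.

    coupled_edges: pairs (a, b) whose outputs are combined element-wise,
    e.g. by a residual addition. BFS runs in O(V + E).
    """
    adj = defaultdict(list)
    for a, b in coupled_edges:
        adj[a].append(b)
        adj[b].append(a)
    seen = set()
    components = []
    for layer in layers:
        if layer in seen:
            continue
        seen.add(layer)
        queue = deque([layer])
        comp = []
        while queue:
            node = queue.popleft()
            comp.append(node)
            for nbr in adj[node]:
                if nbr not in seen:
                    seen.add(nbr)
                    queue.append(nbr)
        components.append(comp)
    return components


def enumerate_zigs(components, channels):
    """One ZIG per (component, channel): channel c of every layer in the component."""
    zigs = []
    for comp in components:
        widths = {channels[layer] for layer in comp}
        if len(widths) != 1:
            raise ValueError(f"coupled layers {comp} have mismatched widths {widths}")
        for c in range(widths.pop()):
            zigs.append([(layer, c) for layer in comp])
    return zigs


# Toy residual block: the output of conv1 is added to the output of conv3.
layers = ["conv1", "conv2", "conv3"]
edges = [("conv1", "conv3")]
channels = {"conv1": 4, "conv2": 8, "conv3": 4}
comps = coupled_components(layers, edges)
zigs = enumerate_zigs(comps, channels)
print(comps)      # [['conv1', 'conv3'], ['conv2']]
print(len(zigs))  # 12
```

Because conv1 and conv3 are coupled, their 4 channels yield only 4 ZIGs (each spanning both layers), while conv2 contributes 8 independent ones.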

Dual Half-Space Projected Gradient (DHSPG) optimization algorithm

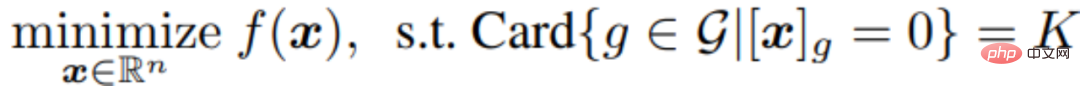

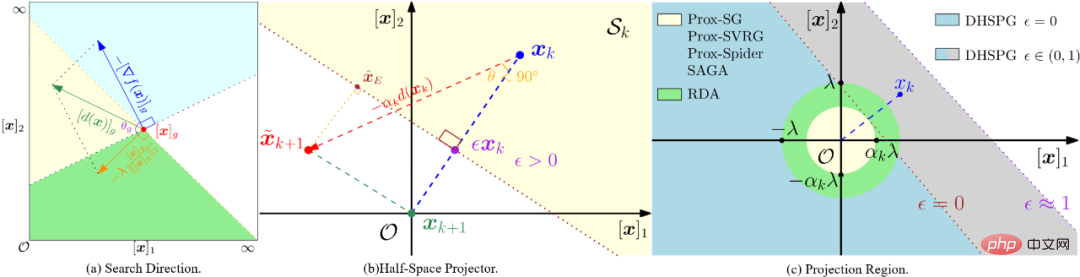

After the target network has been partitioned into zero-invariant groups, the subsequent training and pruning must determine which groups are redundant and which are important. The network structures corresponding to redundant groups are deleted, while important groups are kept to preserve the performance of the compressed model. The researchers formulated this as a structured sparsification problem and proposed a new optimization algorithm, Dual Half-Space Projected Gradient (DHSPG), to solve it.
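In illustrative notation (the papers' exact formulation may differ), such a structured sparsification problem can be written as minimizing the training loss $f$ over the parameters $x$ while forcing a target number $K$ of groups to be exactly zero, where $\mathcal{G}$ is the set of zero-invariant groups and $[x]_g$ denotes the parameters in group $g$:

$$
\min_{x \in \mathbb{R}^n} \; f(x) \quad \text{s.t.} \quad \operatorname{Card}\{\, g \in \mathcal{G} : [x]_g = 0 \,\} = K
$$

Varying $K$ corresponds to the different structural sparsity targets used in the experiments.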

DHSPG effectively identifies redundant zero-invariant groups and projects them to zero, while continuing to train the important groups so that the model reaches performance comparable to the original.

Compared with traditional sparse optimization algorithms, DHSPG explores sparse structures more robustly and stably, and it enlarges the search space during training, so it usually achieves higher performance in practice.
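The update below is a heavily simplified sketch in the spirit of DHSPG, not the published algorithm. Assumptions (ours, not from the article): the salience score is the group's L2 norm, the `k` least-salient groups are treated as redundant, redundant groups get an extra pull toward zero controlled by a hypothetical `shrink` parameter, and the zeroing rule is the half-space projection test used by HSPG, the optimizer of OTOv1: a redundant group is set to zero when its trial point z satisfies z·x_g < eps·‖x_g‖². The function names are hypothetical.

```python
import math


def partition_groups(x, groups, k):
    """Mark the k groups with the smallest L2 norm as redundant (assumed salience score)."""
    norms = [math.sqrt(sum(x[i] ** 2 for i in g)) for g in groups]
    order = sorted(range(len(groups)), key=lambda j: norms[j])
    return set(order[:k])


def dual_half_space_step(x, grad, groups, k, lr, shrink, eps=0.0):
    """Illustrative update: SGD on important groups, shrink + half-space projection on redundant ones."""
    redundant = partition_groups(x, groups, k)
    new_x = list(x)
    for j, g in enumerate(groups):
        if j not in redundant:
            # Important group: plain gradient step to keep improving the loss.
            for i in g:
                new_x[i] = x[i] - lr * grad[i]
            continue
        # Redundant group: gradient step plus a pull toward the origin.
        trial = {i: x[i] - lr * grad[i] - lr * shrink * x[i] for i in g}
        norm_sq = sum(x[i] ** 2 for i in g)
        inner = sum(trial[i] * x[i] for i in g)
        if norm_sq == 0.0 or inner < eps * norm_sq:
            # Trial point left the half-space {z : z . x_g >= eps * ||x_g||^2}:
            # project the whole group to exactly zero.
            for i in g:
                new_x[i] = 0.0
        else:
            for i in g:
                new_x[i] = trial[i]
    return new_x


x = [1.0, 1.0, 0.1, -0.1]
groups = [[0, 1], [2, 3]]
print(dual_half_space_step(x, [0.0] * 4, groups, k=1, lr=0.5, shrink=4.0))  # [1.0, 1.0, 0.0, 0.0]
```

The projection zeroes an entire group at once rather than individual weights, which is what makes the resulting sparsity structural and the group removable later.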

Automatically building the lightweight compressed model

Training the model with DHSPG yields a solution with high structural sparsity over the zero-invariant groups (that is, many groups are projected to zero) while the model still performs well. The researchers then delete all structures corresponding to the redundant groups to build the compressed network automatically. Because of the zero-invariance property (if a group is zero, its output is zero regardless of the input), deleting the redundant groups has no effect on the network's output. The compressed network produced by OTO therefore gives the same output as the full network and needs none of the further fine-tuning that traditional methods require.
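A toy check of why no fine-tuning is needed, assuming a two-layer ReLU MLP in plain Python where each hidden neuron's incoming weights and bias form one ZIG (ReLU maps 0 to 0, so a zeroed neuron always outputs 0). Deleting such a neuron also removes its outgoing column in the next layer. The helper names, including `prune_zero_neurons`, are hypothetical.

```python
def relu(v):
    return [max(0.0, a) for a in v]


def linear(W, b, x):
    # y_i = sum_j W[i][j] * x[j] + b[i]
    return [sum(w * xj for w, xj in zip(row, x)) + bi for row, bi in zip(W, b)]


def mlp(W1, b1, W2, b2, x):
    return linear(W2, b2, relu(linear(W1, b1, x)))


def prune_zero_neurons(W1, b1, W2):
    """Drop hidden neurons whose ZIG (incoming weights + bias) is entirely zero."""
    keep = [j for j, (row, bj) in enumerate(zip(W1, b1)) if any(row) or bj != 0.0]
    W1_small = [W1[j] for j in keep]
    b1_small = [b1[j] for j in keep]
    # Also remove the matching input column of the next layer.
    W2_small = [[row[j] for j in keep] for row in W2]
    return W1_small, b1_small, W2_small


# Hidden neuron 1 has been projected to zero during training.
W1 = [[0.5, -1.0], [0.0, 0.0], [2.0, 0.3]]
b1 = [0.1, 0.0, -0.2]
W2 = [[1.0, 7.0, -0.5], [0.2, -3.0, 1.5]]
b2 = [0.0, 0.1]

W1s, b1s, W2s = prune_zero_neurons(W1, b1, W2)
for x in ([1.0, 2.0], [-3.0, 0.5], [10.0, -7.0]):
    # The smaller network reproduces the full network's output exactly.
    assert mlp(W1, b1, W2, b2, x) == mlp(W1s, b1s, W2s, b2, x)
```

Note that the outgoing weights of the removed neuron (7.0 and -3.0) can be arbitrary: they only ever multiply a zero activation.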

Classification task

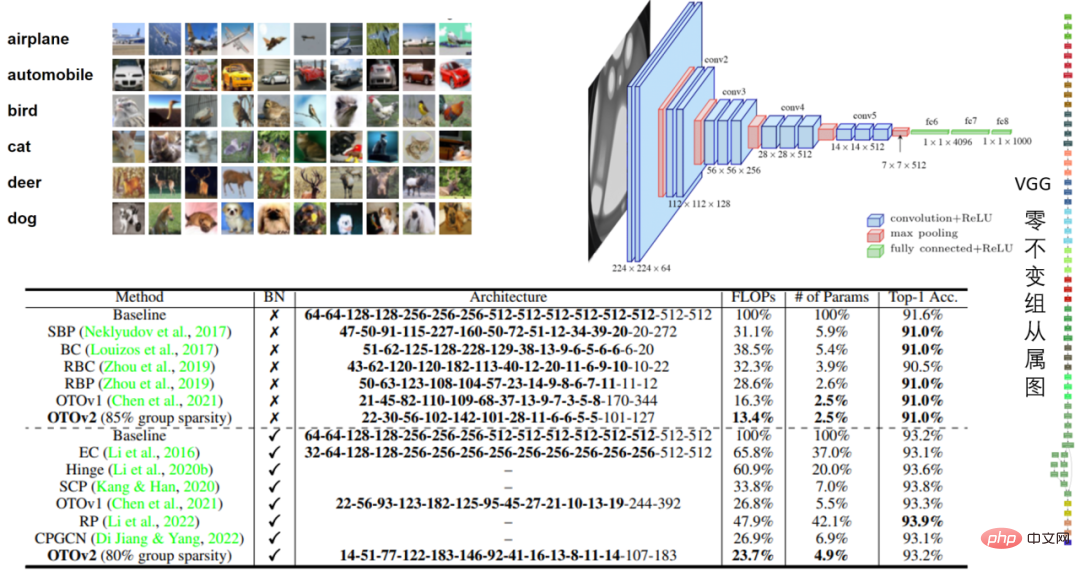

Table 1: Performance of VGG16 and VGG16-BN on CIFAR10

In the VGG16 experiment on CIFAR10, OTO reduced FLOPs by 86.6% and the number of parameters by 97.5%, an impressive result.

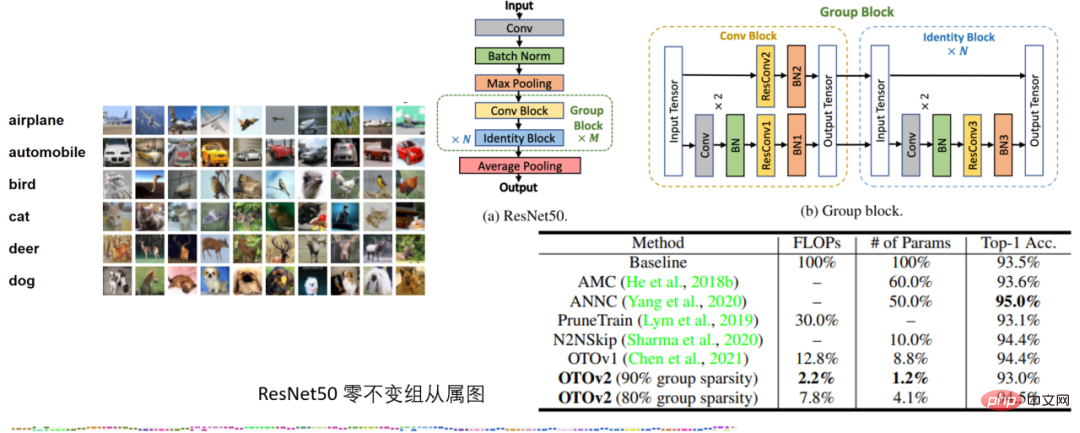

Table 2: ResNet50 on CIFAR10

In the ResNet50 experiment on CIFAR10, OTO without quantization outperforms the SOTA compression frameworks AMC and ANNC while using only 7.8% of the FLOPs and 4.1% of the parameters.
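Percentages of remaining FLOPs and parameters are often easier to compare as compression ratios. The arithmetic below converts the CIFAR10 figures reported above; the helper names are hypothetical, and these are theoretical ratios, so wall-clock speedup on real hardware may differ from the FLOPs ratio.

```python
def remaining_pct(reduction_pct):
    """Share (in %) of the original cost left after a given % reduction."""
    return 100.0 - reduction_pct


def compression_ratio(remaining):
    """How many times smaller/cheaper the model is, given the remaining %."""
    return 100.0 / remaining


# VGG16 on CIFAR10: 86.6% fewer FLOPs, 97.5% fewer parameters.
vgg_flops = compression_ratio(remaining_pct(86.6))   # about 7.46x
vgg_params = compression_ratio(remaining_pct(97.5))  # 40x
# ResNet50 on CIFAR10: 7.8% of FLOPs and 4.1% of parameters remain.
resnet_flops = compression_ratio(7.8)    # about 12.8x
resnet_params = compression_ratio(4.1)   # about 24.4x
```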

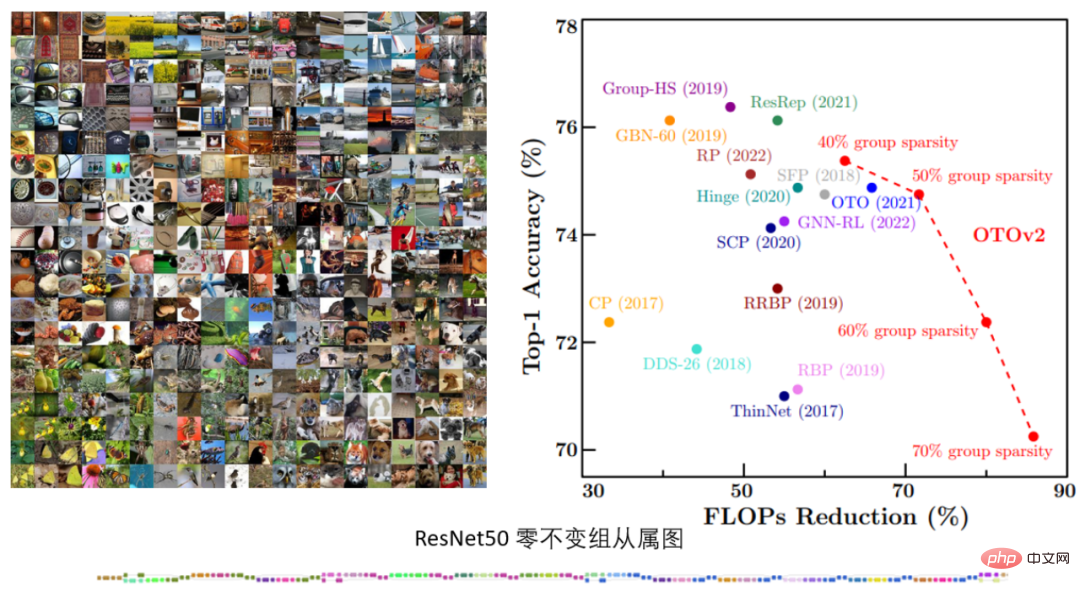

Table 3: ResNet50 on ImageNet

In the ResNet50 experiment on ImageNet, OTOv2 performs comparably to or even better than existing SOTA methods across different structural sparsity targets.

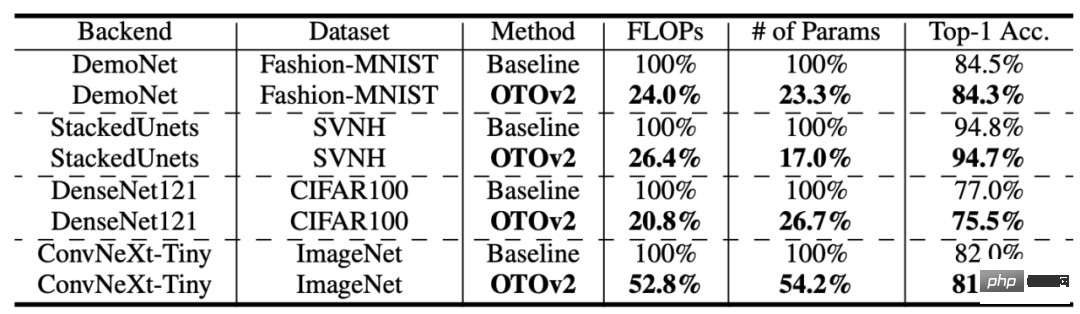

Table 4: More structures and data sets

OTO also performs well on additional datasets and model structures.

Low-Level Vision Task

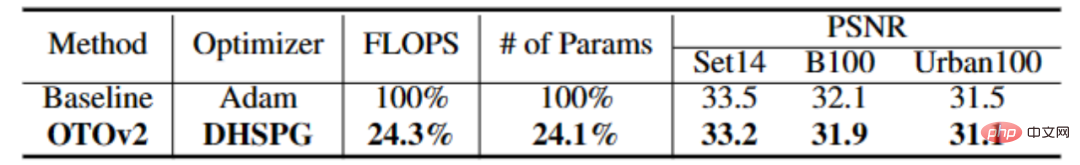

Table 5: CARNx2 experiment

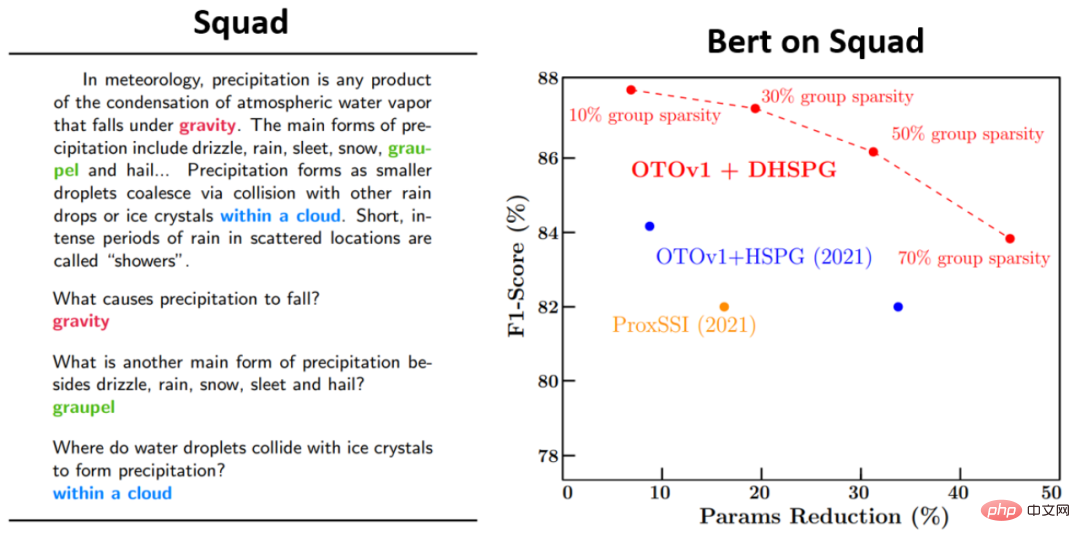

In the super-resolution task, OTO compressed the CARNx2 network through one-stop training, achieving performance competitive with the original model while reducing computation and model size by more than 75%.

Language model task
