When enterprises tackle high-concurrency problems, they generally have two kinds of strategy: hardware and software. On the hardware side, a load balancer is added to distribute the large volume of requests. On the software side, a solution can be added at either of the two high-concurrency bottlenecks: the database and the web server. Of these, the most commonly used way to add a load-balancing layer in front of the web servers is to use nginx.

1. The role of load balancing

1. Forwarding function

Client requests are forwarded to different application servers according to a certain algorithm (weighted or round robin), reducing the pressure on any single server and increasing the system's concurrency.

2. Fault removal

Heartbeat detection is used to determine whether an application server is currently working normally. If a server goes down, requests are automatically sent to the other application servers.

3. Recovery addition

If a failed application server is detected to have resumed work, it is automatically added back to the pool of servers that handle user requests.

2. Nginx implements load balancing

Two Tomcat instances are again used to simulate two application servers, on ports 8080 and 8081.

1. Nginx's load distribution strategy

Nginx's upstream module currently supports the following distribution algorithms:

1) Round robin: requests are handled in turn, 1:1 (default)

Each request is assigned to a different application server in turn, in order of arrival. If an application server goes down, it is automatically removed and the remaining servers continue to be cycled through.
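As a minimal sketch of the default behaviour, the block below lists the two Tomcat instances with no balancing directive and no weights, which is all round robin needs. The upstream name `tomcat_round_robin` is a hypothetical name chosen for illustration; the addresses and server_name reuse the values from this article. Both blocks are assumed to sit inside the `http` context of nginx.conf.

```nginx
# Hypothetical upstream name; addresses are the two Tomcat instances above.
upstream tomcat_round_robin {
    # No balancing directive and no weight parameters:
    # nginx falls back to round robin and alternates between the servers.
    server 192.168.72.49:8080;
    server 192.168.72.49:8081;
}

server {
    listen 80;
    server_name 8080.max.com;

    location / {
        # Hand every request to the upstream group defined above.
        proxy_pass http://tomcat_round_robin;
    }
}
```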

2) Weight: more capable servers take more requests

Configuring a weight sets the round-robin probability: the share of requests a server receives is proportional to its weight. This is used when the application servers have uneven performance.

3) ip_hash

Each request is assigned according to the hash of the client's IP address, so each visitor consistently reaches the same application server, which solves the session sharing problem.
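A minimal sketch of this strategy, assuming the same two Tomcat instances: adding the `ip_hash` directive to the upstream block is what switches the method. The upstream name `tomcat_ip_hash` is hypothetical.

```nginx
# Hypothetical upstream name; place inside the http context.
upstream tomcat_ip_hash {
    # Choose the backend from a hash of the client address,
    # so a given client keeps reaching the same Tomcat instance.
    ip_hash;

    server 192.168.72.49:8080;
    server 192.168.72.49:8081;
}
```

For IPv4 clients, nginx hashes only the first three octets of the address, so clients in the same /24 network land on the same server. If a server has to be taken out temporarily, marking it `down` rather than deleting the line preserves the existing mapping of clients to the remaining servers.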

2. Configure Nginx's load balancing and distribution strategy

This is done by adding parameters after the application server address in the upstream block, for example:

    upstream tomcatserver1 {
        server 192.168.72.49:8080 weight=3;
        server 192.168.72.49:8081;
    }

    server {
        listen       80;
        server_name  8080.max.com;
        #charset koi8-r;
        #access_log  logs/host.access.log  main;
        location / {
            proxy_pass   http://tomcatserver1;
            index  index.html index.htm;
        }
    }

With the above configuration, when the site 8080.max.com is accessed, all requests first pass through the nginx reverse proxy server because proxy_pass is configured. When nginx forwards a request to the destination host, it reads the upstream block tomcatserver1 and its distribution policy. Because tomcat1 has a weight of 3 and tomcat2 keeps the default weight of 1, nginx sends most requests (about three in four) to tomcat1 on server 49, port 8080, and a smaller share to tomcat2 on port 8081, achieving conditional load balancing. The condition here is, of course, the hardware-dependent request-processing capacity of servers 1 and 2.
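One way to check the distribution while testing is to have nginx report which backend served each response. The sketch below is an assumption layered on the configuration above, not part of the original setup: the header name `X-Upstream-Addr` is hypothetical, while `$upstream_addr` is a variable provided by nginx's upstream module.

```nginx
# Replacement for the "location /" block above, intended for testing only.
location / {
    proxy_pass http://tomcatserver1;

    # Hypothetical header name: exposes the address of the backend
    # that handled this request, e.g. 192.168.72.49:8080.
    add_header X-Upstream-Addr $upstream_addr;
}
```

Over a run of requests, about three of every four responses should report port 8080. The header reveals internal addresses, so it is best removed before the configuration goes to production.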

3. Other configurations of nginx

    upstream myServer {
        server 192.168.72.49:9090 down;
        server 192.168.72.49:8080 weight=2;
        server 192.168.72.49:6060;
        server 192.168.72.49:7070 backup;
    }

1) down

Marks the server it follows as temporarily not taking part in load balancing.

2) weight

Defaults to 1. The larger the weight, the larger the share of the load the server receives.

3) max_fails

The number of failed attempts allowed within fail_timeout; defaults to 1. Once this number is reached, the server is treated as unavailable. What counts as a failed attempt is defined by the proxy_next_upstream directive.

4) fail_timeout

How long the server is paused (treated as unavailable) after max_fails failures; defaults to 10 seconds.

5) backup

The backup machine receives requests only when all other non-backup machines are down or busy, so it carries the lightest load.
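The parameters above can be combined. The following is a sketch under assumed values: the thresholds (3 failures, 30 seconds, a 2 second connect timeout) are illustrative choices rather than recommendations from this article, and the block reuses the myServer addresses.

```nginx
upstream myServer {
    # Illustrative thresholds: after 3 failed attempts within 30s,
    # skip the server for the next 30s, then try it again.
    server 192.168.72.49:8080 weight=2 max_fails=3 fail_timeout=30s;
    server 192.168.72.49:6060 max_fails=3 fail_timeout=30s;

    # Receives traffic only when the two servers above are unavailable.
    server 192.168.72.49:7070 backup;
}

server {
    listen 80;

    location / {
        proxy_pass http://myServer;

        # Besides connection errors and timeouts, treat 502 and 503
        # responses as failed attempts and retry on the next server.
        proxy_next_upstream error timeout http_502 http_503;

        # Assumed value: give up on an unreachable backend quickly.
        proxy_connect_timeout 2s;
    }
}
```

This is how open source nginx covers the fault removal and recovery roles described in section 1: failures are noticed passively on real requests, and a server is tried again once its fail_timeout period has passed. Note that `backup` cannot be combined with the ip_hash method.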

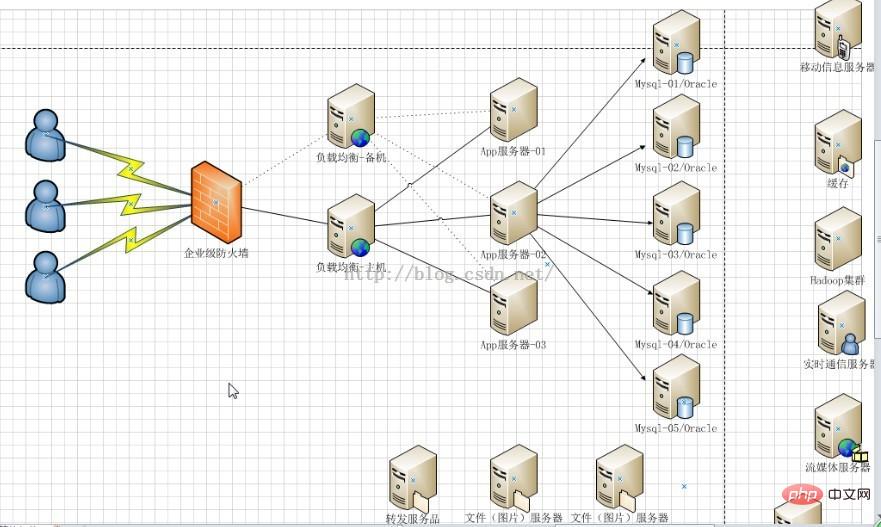

3. High availability using Nginx

High availability here covers more than the website itself. For the website, it means providing several servers that publish the same service and adding a load balancing server to distribute requests, so that each server can work at a reasonably full load under high concurrency. The load balancing server must also be highly available; otherwise, if it goes down, the application servers behind it are cut off and cannot work.

Solution for achieving high availability: add redundancy. Deploy n nginx servers to avoid the single point of failure described above.

4. Summary

To summarize: load balancing, whether through software or hardware solutions, mainly distributes a large number of concurrent requests across different servers according to certain rules, thereby reducing the instantaneous pressure on any one server and improving the website's capacity to withstand concurrency. The author believes nginx is widely used for load balancing because of its flexible configuration. A single nginx.conf file solves most problems: whether nginx is creating a virtual server, acting as a reverse proxy, or doing the load balancing introduced in this article, almost everything is done in this configuration file. The server only needs to have nginx installed and running. Moreover, nginx is lightweight and achieves good results without occupying many server resources.