Encoding problems have always troubled developers, especially in Java, because Java is a cross-platform language and text is constantly converted between the encodings of different platforms. This article covers the root causes of Java encoding problems; the differences between several encoding formats often encountered in Java; the scenarios in Java that require encoding; the causes of problems with Chinese text; the places where encoding problems can arise in Java Web development; how to control the encoding of an HTTP request; and how to avoid Chinese encoding problems.

The smallest unit for storing information in a computer is 1 byte, which is 8 bits, so a single byte can represent only 256 distinct values (0 ~ 255).

There are far more symbols than that to represent, so they cannot all fit in 1 byte.

Computers therefore rely on a variety of encoding schemes; common ones include ASCII, ISO-8859-1, GB2312, GBK, UTF-8 and UTF-16. Each defines conversion rules between characters and bytes, and by following these rules the computer can represent our characters correctly. These encoding formats are introduced below:

ASCII code

ASCII has 128 code points in total, represented by the lower 7 bits of 1 byte. 0 ~ 31 and 127 are control characters such as line feed, carriage return and delete; 32 ~ 126 are printable characters, which can be typed on the keyboard and displayed.

ISO-8859-1

128 characters is obviously not enough, so the ISO organization extended ASCII with a family of encodings, ISO-8859-1 to ISO-8859-15. ISO-8859-1 covers most Western European characters and is the most widely used. It is still a single-byte encoding and can represent 256 characters in total.

GB2312

GB2312 is a double-byte encoding. Its lead bytes range over A1 ~ F7, where A1 ~ A9 is the symbol area, containing 682 symbols, and B0 ~ F7 is the Chinese character area, containing 6763 Chinese characters.

GBK

GBK is the "Chinese Character Internal Code Extension Specification", an extension of GB2312. Its code range is 8140 ~ FEFE (excluding XX7F), giving a total of 23940 code points that represent 21003 Chinese characters. It is compatible with GB2312, so text encoded in GB2312 can be decoded as GBK without garbled characters.

UTF-16

UTF-16 specifically defines how Unicode characters are stored and accessed in the computer. It represents each character in the Basic Multilingual Plane with two bytes; two bytes are 16 bits, hence the name UTF-16. Because almost every commonly used character occupies exactly two bytes, representing characters is very convenient and string operations are greatly simplified. Characters outside the Basic Multilingual Plane are the exception: they are encoded as a surrogate pair and take four bytes.
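As a rough illustration of the two-byte rule and its exception, the sketch below encodes one character inside the Basic Multilingual Plane and one outside it as big-endian UTF-16 without a byte-order mark. The class and method names are made up for this example, and the characters are written as Unicode escapes so the result does not depend on the source file's encoding.

```java
import java.nio.charset.StandardCharsets;

class Utf16LengthDemo {
    // Number of bytes the text occupies when encoded as UTF-16BE (no byte-order mark).
    static int utf16ByteLength(String text) {
        return text.getBytes(StandardCharsets.UTF_16BE).length;
    }

    public static void main(String[] args) {
        String bmp = "\u4e2d";                 // U+4E2D, inside the Basic Multilingual Plane
        String supplementary = "\uD83D\uDE00"; // U+1F600, stored as a surrogate pair
        System.out.println(utf16ByteLength(bmp));           // 2
        System.out.println(utf16ByteLength(supplementary)); // 4
        System.out.println(supplementary.length());         // 2 chars for a single code point
    }
}
```

The last line shows why String.length() counts UTF-16 code units rather than characters.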

UTF-8

Although it is simple and convenient for UTF-16 to use two bytes for each character, a large share of characters can be represented in one byte. Representing them with two bytes doubles the storage space, and it increases network traffic, which matters when bandwidth is limited. UTF-8 uses a variable-length scheme instead: different ranges of characters have different encoded lengths, and a character takes 1 ~ 4 bytes (the original design allowed up to 6).

UTF-8 has the following encoding rules:

If a byte's highest bit (the 8th bit) is 0, it is a single-byte ASCII character (00 ~ 7F).

If a byte starts with 11, it is the first byte of a multi-byte character, and the number of consecutive leading 1s gives the number of bytes in that character.

If a byte starts with 10, it is not the first byte of a character, and you need to look backward to find the first byte of the current character.
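The three rules can be sketched as a small classifier that looks only at the leading bits of a byte. This is an illustration rather than a validator: it does not reject overlong or out-of-range sequences, and the class and method names are hypothetical.

```java
import java.nio.charset.StandardCharsets;

class Utf8ByteClassifier {
    /**
     * Returns the length of the character that a byte starts: 1 for ASCII,
     * 2 to 4 for the first byte of a multi-byte character, 0 for a
     * continuation byte (10xxxxxx), and -1 when the leading bits match
     * none of these forms.
     */
    static int sequenceLength(byte b) {
        int v = b & 0xFF;
        if ((v & 0x80) == 0x00) return 1; // 0xxxxxxx
        if ((v & 0xC0) == 0x80) return 0; // 10xxxxxx, not a first byte
        if ((v & 0xE0) == 0xC0) return 2; // 110xxxxx
        if ((v & 0xF0) == 0xE0) return 3; // 1110xxxx
        if ((v & 0xF8) == 0xF0) return 4; // 11110xxx
        return -1;
    }

    public static void main(String[] args) {
        // "a" followed by U+4E2D encodes to 61 e4 b8 ad
        byte[] bytes = "a\u4e2d".getBytes(StandardCharsets.UTF_8);
        for (byte b : bytes) {
            System.out.printf("%02x -> %d%n", b & 0xFF, sequenceLength(b));
        }
    }
}
```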

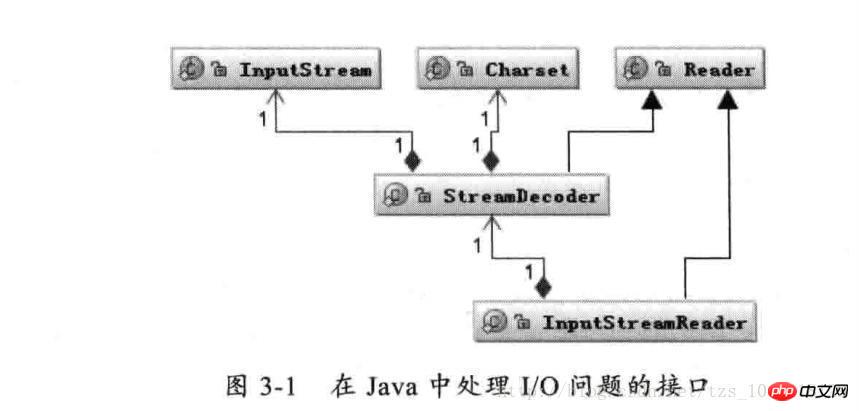

As shown above, the Reader class is the parent class for reading characters in Java I/O, and the InputStream class is the parent class for reading bytes. The InputStreamReader class is the bridge from bytes to characters: it converts the bytes it reads into characters and delegates the actual decoding to StreamDecoder. The Charset that StreamDecoder uses should be specified by the caller. If you do not specify one, the default character set of the local environment is used; in a Chinese environment, for example, that may be GBK.

For example, the following code writes a file and reads it back:

    // Needs: import java.io.*;
    String file = "c:/stream.txt";
    String charset = "UTF-8";

    // Write: encode characters into a byte stream
    FileOutputStream outputStream = new FileOutputStream(file);
    OutputStreamWriter writer = new OutputStreamWriter(outputStream, charset);
    try {
        writer.write("这是要保存的中文字符");
    } finally {
        writer.close();
    }

    // Read: decode the bytes back into characters
    FileInputStream inputStream = new FileInputStream(file);
    InputStreamReader reader = new InputStreamReader(inputStream, charset);
    StringBuffer buffer = new StringBuffer();
    char[] buf = new char[64];
    int count = 0;
    try {
        while ((count = reader.read(buf)) != -1) {
            // Append only the characters read in this pass
            buffer.append(buf, 0, count);
        }
    } finally {
        reader.close();
    }

When our application performs I/O, as long as we specify the same Charset for encoding and decoding, garbled text will generally not occur.

Characters can also be converted to bytes, and back, purely in memory.

1. The String class provides methods for converting a string to bytes, and constructors for converting bytes back into a string.

    String s = "字符串";
    byte[] b = s.getBytes("UTF-8");
    String n = new String(b, "UTF-8");

2. Charset provides encode and decode, corresponding to encoding char[] into byte[] and decoding byte[] into char[].

    Charset charset = Charset.forName("UTF-8");
    ByteBuffer byteBuffer = charset.encode(string);
    CharBuffer charBuffer = charset.decode(byteBuffer);

...

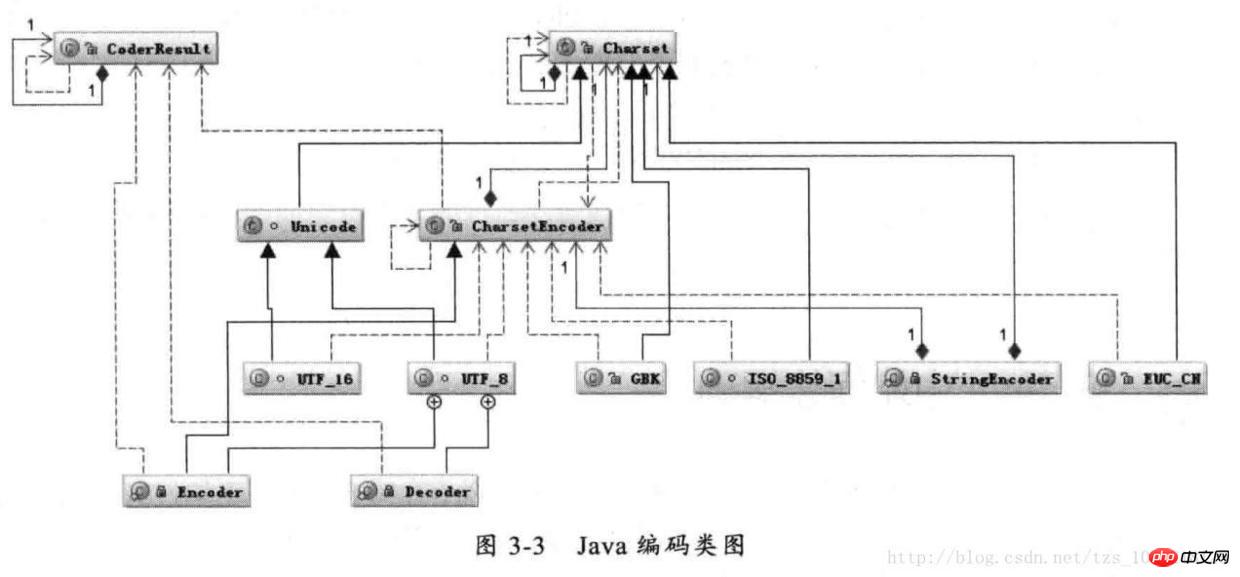

Java encoding class diagram

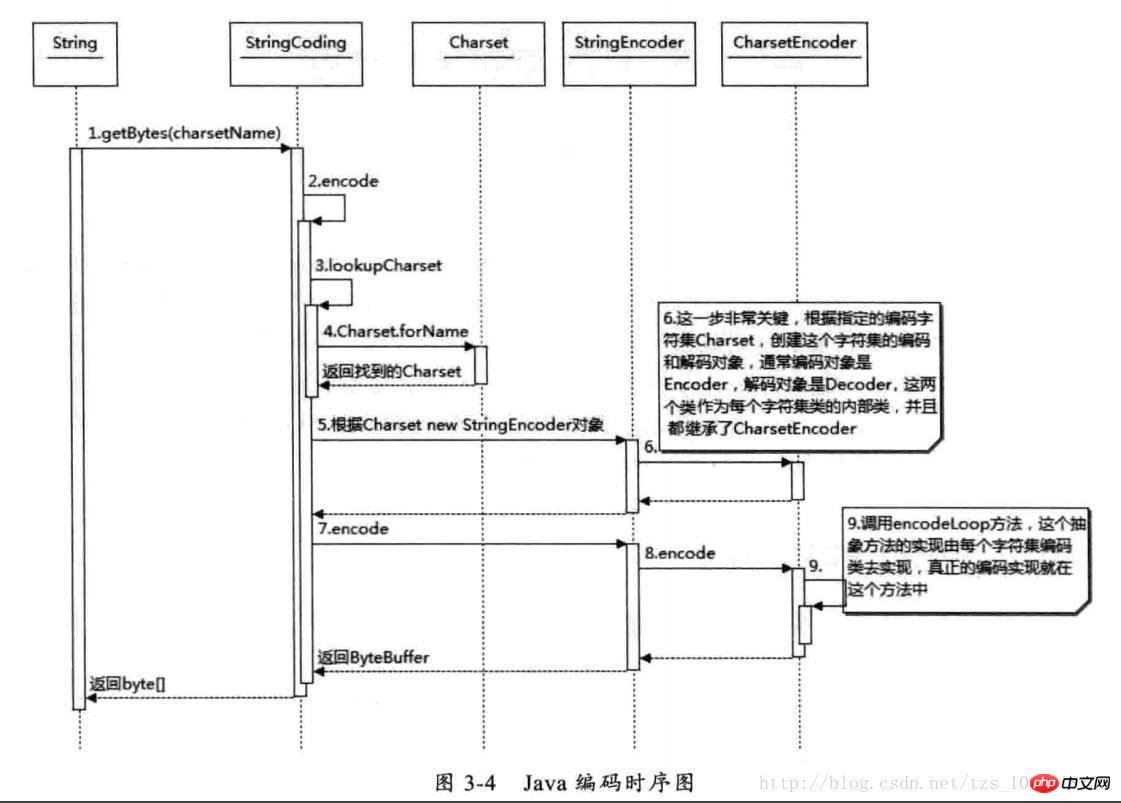

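First, a Charset is obtained from the specified charsetName through Charset.forName(charsetName). A CharsetEncoder is then created from the Charset, and CharsetEncoder.encode is called to encode the string. Each encoding type corresponds to its own class, and the actual encoding is done in those classes. Below is the sequence diagram of the String.getBytes(charsetName) encoding process.

Java encoding sequence diagram

As the figure shows, the Charset class is looked up from charsetName, and a CharsetEncoder is generated from that character set. CharsetEncoder is the parent class of all character encoders; how each character set is encoded is defined in its subclasses. Once you have a CharsetEncoder object, you can call its encode method to perform the encoding. This is how String.getBytes encodes; others, such as StreamEncoder, work in a similar way.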
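The same sequence can be walked by hand with the public java.nio.charset API. The sketch below is only an approximation of what String.getBytes does internally; the class and method names are invented for illustration.

```java
import java.nio.ByteBuffer;
import java.nio.CharBuffer;
import java.nio.charset.CharacterCodingException;
import java.nio.charset.Charset;
import java.nio.charset.CharsetEncoder;

class EncoderStepsDemo {
    // charsetName -> Charset -> CharsetEncoder -> encoded bytes
    static byte[] encode(String text, String charsetName) {
        Charset charset = Charset.forName(charsetName);
        CharsetEncoder encoder = charset.newEncoder();
        try {
            ByteBuffer out = encoder.encode(CharBuffer.wrap(text));
            byte[] bytes = new byte[out.remaining()];
            out.get(bytes);
            return bytes;
        } catch (CharacterCodingException e) {
            // A fresh encoder reports unmappable characters instead of replacing them
            throw new IllegalArgumentException("Cannot encode text as " + charsetName, e);
        }
    }

    public static void main(String[] args) {
        byte[] bytes = encode("\u541b\u5c71", "UTF-8"); // U+541B U+5C71
        System.out.println(bytes.length); // 6
    }
}
```

One difference worth keeping in mind: String.getBytes silently substitutes a replacement byte for characters the charset cannot represent, whereas an encoder created this way throws.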

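When Chinese text frequently turns into "?", the likely cause is that ISO-8859-1 was used by mistake. Chinese characters lose information when encoded with ISO-8859-1; we usually call this a "black hole", because it swallows the characters it does not recognize. Since the default character set of many basic Java frameworks and systems is ISO-8859-1, garbled text appears easily. How the different forms of garbled text arise is analyzed later.

The latter four encoding formats (GB2312, GBK, UTF-16 and UTF-8) can all handle Chinese characters. GB2312 and GBK have similar encoding rules, but GBK covers a larger range and can handle all Chinese characters, so between the two GBK should be chosen. UTF-16 and UTF-8 both encode Unicode, with rather different rules. Relatively speaking, UTF-16 is the most efficient to encode: converting between characters and bytes is simpler, and string operations are easier. It suits use between local disk and memory, where characters and bytes can be switched quickly; Java's in-memory encoding, for example, is UTF-16. It is not well suited to network transmission, however, because transmission can corrupt the byte stream, and a corrupted stream is hard to recover. By comparison, UTF-8 is better suited to network transmission: ASCII characters are stored in a single byte, and a single damaged character does not affect the characters after it. Its encoding efficiency lies between GBK and UTF-16, so UTF-8 strikes a balance between encoding efficiency and encoding safety and is an ideal encoding for Chinese.

For Chinese text, wherever there is I/O there is encoding. As mentioned earlier, I/O operations involve encoding, and most garbled text caused by I/O comes from network I/O, because almost every application now involves network operations and data travels over the network as bytes, so all data must be serializable into bytes. In Java, an object must implement the Serializable interface to be serialized.

How should the actual size of a piece of text be calculated? I once ran into this problem: I needed to compress a Cookie to reduce network traffic. I tried different compression algorithms and found that the number of characters went down after compression but the number of bytes did not. The so-called compression merely turned several single-byte characters into one multi-byte character through encoding. What shrank was String.length(), not the final byte count. For example, if the two characters "ab" are turned into one unusual character by some encoding, the character count drops from two to one, but encoded in UTF-8 that unusual character may become three or more bytes. By the same reasoning, the integer 1234567 stored as characters takes 7 bytes in UTF-8 and 14 bytes in UTF-16, but only 4 bytes when stored as an int. So the number of characters alone says little about the size of a text: even the same characters occupy different amounts of storage under different encodings, and going from characters to bytes always depends on the encoding.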
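The figures in this paragraph are easy to check. In the sketch below, the "compressed" character U+6162 is a made-up stand-in, chosen only because its two bytes happen to be the ASCII codes of 'a' (0x61) and 'b' (0x62); it is not the output of any real compression algorithm.

```java
import java.nio.charset.StandardCharsets;

class TextSizeDemo {
    static int utf8Bytes(String s) {
        return s.getBytes(StandardCharsets.UTF_8).length;
    }

    static int utf16Bytes(String s) {
        return s.getBytes(StandardCharsets.UTF_16BE).length; // big-endian, no byte-order mark
    }

    public static void main(String[] args) {
        String digits = "1234567";
        System.out.println(utf8Bytes(digits));  // 7
        System.out.println(utf16Bytes(digits)); // 14
        System.out.println(Integer.BYTES);      // 4, the size of an int

        String ab = "ab";
        String packed = "\u6162"; // hypothetical result of packing 'a' and 'b' into one char
        System.out.println(ab.length() + " chars, " + utf8Bytes(ab) + " bytes");         // 2 chars, 2 bytes
        System.out.println(packed.length() + " chars, " + utf8Bytes(packed) + " bytes"); // 1 chars, 3 bytes
    }
}
```

The packed form is shorter by String.length() yet longer on the wire, which is the effect described above.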

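The Chinese characters we see all appear as characters. Take the two characters "淘宝" in Java: their numeric values are 28120 and 23453 in decimal, or 6dd8 and 5b9d in hexadecimal, and each character is uniquely identified by its number. A char in Java is 16 bits, equivalent to two bytes, so two Chinese characters held as chars occupy the equivalent of four bytes in memory.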
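These values can be read straight off the char type. A minimal sketch, with the two characters written as Unicode escapes and a hypothetical helper named describe:

```java
class CharValueDemo {
    // Formats a char as "<decimal> = 0x<hex>".
    static String describe(char c) {
        return (int) c + " = 0x" + Integer.toHexString(c);
    }

    public static void main(String[] args) {
        String text = "\u6dd8\u5b9d"; // the two characters discussed above
        for (char c : text.toCharArray()) {
            System.out.println(describe(c));
        }
        // prints 28120 = 0x6dd8, then 23453 = 0x5b9d
        System.out.println(text.length() * Character.BYTES); // 4 bytes for two chars
    }
}
```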

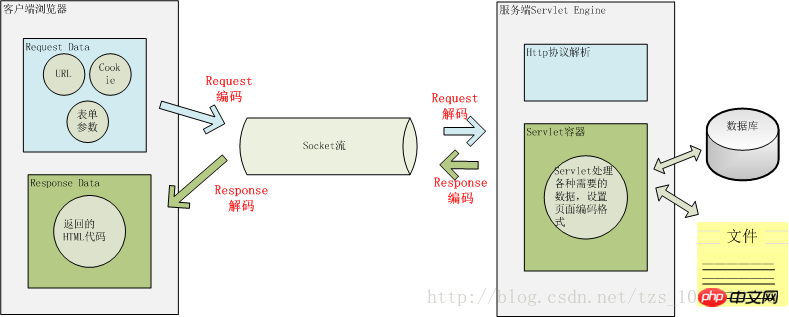

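With these two questions settled, let us look at where encoding conversions may occur in Java Web.

When a user sends an HTTP request from the browser, the places that need encoding are the URL, Cookies and Parameters. When the server receives the request it has to parse the HTTP protocol, and the URI, Cookies and POST form parameters need to be decoded. The server may also need to read data from a database, or text files from local disk or elsewhere on the network, and all of this data may have encoding issues. After the Servlet has processed all the requested data, the data must be encoded again and sent through the Socket to the requesting browser, which decodes it into text. The process is shown in the figure below:

Encoding in a single HTTP request

The user submits a URL that may contain Chinese characters, so it must be encoded. How is the URL encoded? By what rules? And how is it decoded? Consider the URL in the figure below:

The figure above uses Tomcat as the Servlet Engine; the parts of the URL correspond to the following configuration:

Port corresponds to Tomcat's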

    <servlet-mapping>
        <servlet-name>junshanExample</servlet-name>
        <url-pattern>/servlets/servlet/*</url-pattern>
    </servlet-mapping>

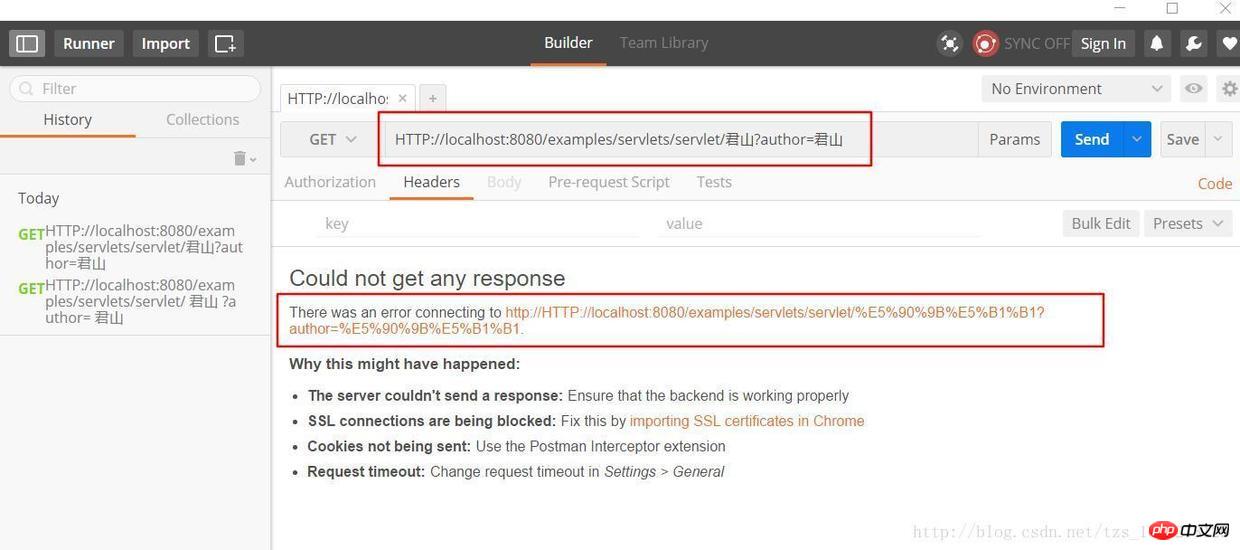

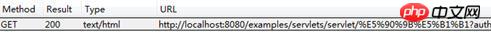

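In the figure above, PathInfo and QueryString contain Chinese characters. When we type this URL directly into the browser, how do the browser and the server encode and parse it? To check how the browser encodes the URL, I used the 360 Speed Browser and observed the actual content of the requested URL with the Postman plug-in. This is the URL:

HTTP://localhost:8080/examples/servlets/servlet/君山?author=君山

The encoded result for 君山 is e5 90 9b e5 b1 b1, which differs from the result in 《深入分析 Java Web 技术内幕》 (In-Depth Analysis of Java Web Technology Internals). This is because my browser and plug-in differ from the original author's, and browsers have different default encodings. The result in the original text is:

There, the encoded results for 君山 are e5 90 9b e5 b1 b1 and be fd c9 bd. From the encodings described in the previous section, PathInfo is UTF-8 encoded while QueryString is GBK encoded. As for why there is a "%": according to RFC 3986, the URL encoding specification, the browser encodes the non-ASCII characters in a URL into bytes using some encoding and writes each byte as two hexadecimal digits preceded by "%", which gives the URL the format shown in the figure above.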
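The percent-encoding step can be reproduced by hand. The sketch below encodes every byte, which is enough for the two Chinese characters; a real encoder would leave unreserved ASCII characters untouched. The class and method names are hypothetical, and the GBK branch is guarded because that charset is not guaranteed to be present on every JVM.

```java
import java.nio.charset.Charset;
import java.nio.charset.StandardCharsets;

class PercentEncodeDemo {
    // Writes each encoded byte as "%" followed by two hexadecimal digits.
    static String percentEncode(String text, Charset charset) {
        StringBuilder sb = new StringBuilder();
        for (byte b : text.getBytes(charset)) {
            sb.append('%').append(String.format("%02X", b & 0xFF));
        }
        return sb.toString();
    }

    public static void main(String[] args) {
        String junshan = "\u541b\u5c71"; // U+541B U+5C71
        System.out.println(percentEncode(junshan, StandardCharsets.UTF_8)); // %E5%90%9B%E5%B1%B1
        if (Charset.isSupported("GBK")) {
            // Compare with the be fd c9 bd quoted in the text
            System.out.println(percentEncode(junshan, Charset.forName("GBK")));
        }
    }
}
```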

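The test results above show that the browser encodes PathInfo and QueryString differently, and different browsers may also encode PathInfo differently, which makes decoding on the server difficult. Taking Tomcat as an example, let us see how it decodes this URL when it arrives.

The request URL is parsed in the parseRequestLine method of org.apache.coyote.http11.InternalInputBuffer, which stores the byte[] of the incoming URL in the corresponding properties of org.apache.coyote.Request. At this point the URL is still in bytes; the conversion to char is done in the convertURI method of org.apache.catalina.connector.CoyoteAdapter: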

    protected void convertURI(MessageBytes uri, Request request)
            throws Exception {
        ByteChunk bc = uri.getByteChunk();
        int length = bc.getLength();
        CharChunk cc = uri.getCharChunk();
        cc.allocate(length, -1);
        String enc = connector.getURIEncoding();
        if (enc != null) {
            B2CConverter conv = request.getURIConverter();
            try {
                if (conv == null) {
                    conv = new B2CConverter(enc);
                    request.setURIConverter(conv);
                }
            } catch (IOException e) {...}
            if (conv != null) {
                try {
                    conv.convert(bc, cc, cc.getBuffer().length -
                            cc.getEnd());
                    uri.setChars(cc.getBuffer(), cc.getStart(),
                            cc.getLength());
                    return;
                } catch (IOException e) {...}
            }
        }
        // Default encoding: fast conversion
        byte[] bbuf = bc.getBuffer();
        char[] cbuf = cc.getBuffer();
        int start = bc.getStart();
        for (int i = 0; i < length; i++) {
            cbuf[i] = (char) (bbuf[i + start] & 0xff);
        }
        uri.setChars(cbuf, 0, length);
    }

From the code above we can see that the character set used to decode the URI part of the URL is defined in the connector's
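The "fast conversion" at the end of convertURI widens each byte to one char, which gives the same result as decoding the bytes as ISO-8859-1. The standalone sketch below demonstrates that equivalence; it does not use any Tomcat classes, and its names are invented for the example.

```java
import java.nio.charset.StandardCharsets;

class FastConversionDemo {
    // Same widening as the default branch of convertURI: one byte becomes one char.
    static String fastConvert(byte[] bytes) {
        char[] chars = new char[bytes.length];
        for (int i = 0; i < bytes.length; i++) {
            chars[i] = (char) (bytes[i] & 0xff);
        }
        return new String(chars);
    }

    public static void main(String[] args) {
        byte[] raw = "\u541b\u5c71".getBytes(StandardCharsets.UTF_8); // e5 90 9b e5 b1 b1
        String fast = fastConvert(raw);
        String latin1 = new String(raw, StandardCharsets.ISO_8859_1);
        System.out.println(fast.equals(latin1)); // true
        System.out.println(fast.length());       // 6, one char per byte instead of 2 characters
    }
}
```

So when no URI encoding is configured, a UTF-8 path containing Chinese characters reaches the application as a string of Latin-1 characters.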

How is the QueryString parsed? The QueryString of a GET request and the form parameters of a POST request are both stored as Parameters, and their values are obtained through request.getParameter. They are decoded the first time request.getParameter is called: that call invokes the parseParameters method of org.apache.catalina.connector.Request, which decodes the parameters passed by both GET and POST, though possibly with different character sets. Decoding of the POST form is covered later. Where is the decoding character set of the QueryString defined? The QueryString is sent to the server in the HTTP Header and is also part of the URL, so is it decoded with the same character set as the URI? Since browsers may encode PathInfo and QueryString differently, we can guess that the two decoding character sets are not necessarily the same, and that is indeed the case. The decoding character set of the QueryString is either the Charset defined in ContentType in the Header or the default ISO-8859-1. To use the encoding defined in ContentType, you must set the connector's

As the process above shows, URL encoding and decoding is fairly complicated, and the application cannot fully control it. We should therefore avoid non-ASCII characters in URLs as far as possible; otherwise garbled characters are likely. Of course, it is best to set the two parameters URIEncoding and useBodyEncodingForURI in

When the client sends an HTTP request, in addition to the URL it may pass other values in the Header, such as Cookie and redirectPath. These user-set values may also have encoding problems. How does Tomcat decode them?

Header values are also decoded lazily, when request.getHeader is called. If the requested Header item has not been decoded yet, the toString method of MessageBytes is called, and the byte-to-char conversion it uses also defaults to ISO-8859-1. We cannot configure any other decoding for the Header, so non-ASCII characters placed in the Header will come out garbled.

The same applies when we add a Header: do not pass non-ASCII characters in it. If we must, we can first encode the characters with org.apache.catalina.util.URLEncoder and then add them to the Header, so that no information is lost on the way from the browser to the server. When we later read these items, we decode them with the corresponding character set.
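A minimal sketch of that round trip is shown below. It swaps in the JDK's java.net.URLEncoder and URLDecoder for Tomcat's org.apache.catalina.util.URLEncoder so that it runs without Tomcat on the classpath, and it assumes both sides agree on UTF-8. The class and method names are hypothetical.

```java
import java.io.UnsupportedEncodingException;
import java.net.URLDecoder;
import java.net.URLEncoder;

class HeaderValueCodec {
    // Turns arbitrary text into an ASCII-only string that is safe to put in a header.
    static String toHeaderValue(String value) {
        try {
            return URLEncoder.encode(value, "UTF-8");
        } catch (UnsupportedEncodingException e) {
            throw new IllegalStateException(e); // UTF-8 is always available
        }
    }

    // Reverses toHeaderValue on the receiving side.
    static String fromHeaderValue(String headerValue) {
        try {
            return URLDecoder.decode(headerValue, "UTF-8");
        } catch (UnsupportedEncodingException e) {
            throw new IllegalStateException(e);
        }
    }

    public static void main(String[] args) {
        String original = "\u541b\u5c71";
        String encoded = toHeaderValue(original);
        System.out.println(encoded);                                   // %E5%90%9B%E5%B1%B1
        System.out.println(fromHeaderValue(encoded).equals(original)); // true
    }
}
```

Because the encoded value is pure ASCII, the ISO-8859-1 decoding applied to headers leaves it intact. Note that java.net.URLEncoder follows form-encoding rules and turns a space into "+".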

As mentioned earlier, parameters submitted by a POST form are decoded when request.getParameter is called for the first time. POST form parameters are passed differently from the QueryString: they travel to the server in the BODY of the HTTP request. When we click the submit button on the page, the browser first encodes the form values using the Charset of ContentType and then submits them to the server, and the server decodes them with the character set in ContentType as well. Parameters submitted through a POST form therefore rarely cause problems. This character set is under our control and can be set through request.setCharacterEncoding(charset), which must be called before the first call to request.getParameter.

For multipart/form-data parameters, that is, file uploads, the character set defined by ContentType is also used. Note that the uploaded file is transmitted to a temporary directory on the server as a byte stream, which involves no character encoding; the encoding actually happens when the file content is added to the parameters. If that character set cannot be used, the default ISO-8859-1 is used.

When the resources requested by the user have been obtained, the content is returned to the client browser through the Response. It is first encoded on the server and then decoded by the browser. The character set for this can be set through response.setCharacterEncoding, which overrides the value of request.getCharacterEncoding and is returned to the client in the Content-Type of the Header. When the browser receives the returned socket stream, it decodes it using the charset in Content-Type. If the Content-Type in the returned HTTP Header does not set charset, the browser will decode it according to the

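Besides URL and parameter encoding, there are many other places on the server where encoding can matter, such as reading XML, the Velocity template engine, JSP, or data from a database.

An XML file can specify its encoding in the XML declaration:

<?xml version="1.0" encoding="UTF-8"?>

Setting the encoding for Velocity templates:

services.VelocityService.input.encoding=UTF-8

Setting the encoding for JSP:

<%@page contentType="text/html; charset=UTF-8"%>

Database access always goes through the client-side JDBC driver, and the encoding used by JDBC must match the database's internal encoding. It can be specified in the JDBC URL; for MySQL, for example: url="jdbc:mysql://localhost:3306/DB?useUnicode=true&characterEncoding=GBK".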

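Now let us look at how to deal with garbled text when we run into it. The only cause of garbled text is that the character sets used for encoding and decoding do not match in a char-to-byte or byte-to-char conversion. Because a single operation often involves several rounds of encoding and decoding, it can be hard to tell which step went wrong. Several common symptoms are analyzed below.

For example, the string "淘!我喜欢!" becomes "Ì Ô £ ¡Î Ò Ï²»¶ £ ¡". The encoding process is shown in the figure below:

The string was decoded with a character set different from the one used to encode it, so the Chinese characters turned into unreadable garbage, with each Chinese character becoming two garbled characters.

For example, the string "淘!我喜欢!" becomes "??????". The encoding process is shown in the figure below:

When Chinese characters and Chinese punctuation are encoded with ISO-8859-1, which does not support Chinese, every character becomes "?". This is because ISO-8859-1 represents any character outside its code range as 3f when encoding. This is the so-called "black hole": every character ISO-8859-1 does not recognize becomes "?".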
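The "black hole" is easy to reproduce. In the sketch below the six-character string from the example is written as Unicode escapes (the exclamation marks are the full-width U+FF01), and the class and method names are made up for illustration.

```java
import java.nio.charset.StandardCharsets;

class Latin1BlackHoleDemo {
    // Encodes with ISO-8859-1, then decodes the same bytes with ISO-8859-1.
    static String throughLatin1(String text) {
        byte[] bytes = text.getBytes(StandardCharsets.ISO_8859_1); // unmappable chars become 0x3f
        return new String(bytes, StandardCharsets.ISO_8859_1);
    }

    public static void main(String[] args) {
        String original = "\u6dd8\uff01\u6211\u559c\u6b22\uff01";
        System.out.println(throughLatin1(original)); // ??????
    }
}
```

Once the bytes are 3f, no later decoding step can bring the original characters back.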

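For example, the string "淘!我喜欢!" becomes "????????????". The encoding process is shown in the figure below:

This case is more complicated. The Chinese text goes through several rounds of encoding, and if any one encoding or decoding step is wrong, the Chinese characters still turn into "?". When this happens, check the intermediate encoding steps carefully to find where the error occurs.

Another case arises when we fetch parameter values through request.getParameter. Calling it directly,

    String value = request.getParameter(name);

produces garbled text, but the following form

    String value = new String(request.getParameter(name).getBytes("ISO-8859-1"), "GBK");

yields the correct Chinese characters. How does this come about?

See the figure below:

What happens is this: the code range of the ISO-8859-1 character set is 0000-00FF, which corresponds exactly to the range of one byte. This property guarantees that encoding and decoding with ISO-8859-1 leaves the byte values unchanged. Although each Chinese character is wrongly "split" into two European characters after network transmission, ISO-8859-1 is also used on output, so the two halves are joined back together and once again form a correct Chinese character. Even though the correct characters can be recovered this way, this abnormal approach is not recommended, because it adds an extra round of encoding and decoding. The garbling occurs in the first place because useBodyEncodingForURI in Tomcat's configuration file is not set to "true", so the first parse uses ISO-8859-1.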
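The byte-preserving property can be shown without a servlet container. The sketch below simulates the wrong first decode and the repair; it assumes the client sent UTF-8 rather than GBK so that it runs on any JVM (each Chinese character is then split into three Latin-1 characters instead of two). All names are hypothetical.

```java
import java.nio.charset.StandardCharsets;

class Latin1RoundTripDemo {
    // What the server does by mistake: decode the request bytes as ISO-8859-1.
    static String wrongDecode(byte[] requestBytes) {
        return new String(requestBytes, StandardCharsets.ISO_8859_1);
    }

    // The workaround: recover the original bytes, then decode with the client's charset.
    static String repair(String garbled) {
        byte[] originalBytes = garbled.getBytes(StandardCharsets.ISO_8859_1);
        return new String(originalBytes, StandardCharsets.UTF_8);
    }

    public static void main(String[] args) {
        String sent = "\u6dd8\uff01\u6211\u559c\u6b22\uff01";
        byte[] onTheWire = sent.getBytes(StandardCharsets.UTF_8);
        String garbled = wrongDecode(onTheWire);
        System.out.println(garbled.length());             // 18, one char per byte
        System.out.println(repair(garbled).equals(sent)); // true
    }
}
```

The repair works only because the wrong decode was lossless; it would fail after an encoding step that replaces characters with "?".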

This article first summarized the differences between several common encoding formats, then introduced the formats that support Chinese and compared their usage scenarios. It then described where encoding issues arise in Java and how Java supports encoding. Taking network I/O as an example, it focused on where encoding occurs in an HTTP request and how Tomcat parses the HTTP protocol, and finally analyzed the causes of the garbled text we commonly encounter.

To sum up, to solve problems with Chinese text we must first work out where character-to-byte encoding and byte-to-character decoding take place. The most common places are reading and writing data on disk and transmitting data over the network. Then, for each of these places, work out how the framework or system handling the data controls encoding, set the encoding format correctly, and avoid relying on the default encoding of the software or the operating system platform.

Note: Most of this article draws on Chapter 3 of the book 《深入分析 Java Web 技术内幕》 (In-Depth Analysis of Java Web Technology Internals), with some abridgment. Please be sure to credit the source when reprinting.

