Beyond Causal Language Modeling

NeurIPS 2024 Spotlight: Optimizing Language Model Pretraining with Selective Language Modeling (SLM)

Recently, I presented a fascinating paper from NeurIPS 2024, "Not All Tokens Are What You Need for Pretraining," at a local reading group. This paper tackles a surprisingly simple yet impactful question: Is next-token prediction necessary for every token during language model pretraining?

The standard approach trains on massive web-scraped datasets and applies the causal language modeling (CLM) objective uniformly to every token. This paper challenges that assumption, arguing that some tokens hinder, rather than help, the learning process. The authors show that concentrating training on "useful" tokens significantly improves data efficiency and downstream task performance. This post summarizes their core ideas and key experimental findings.

The Problem: Noise and Inefficient Learning

Large web corpora inevitably contain noise. Document-level filtering helps, but much of the noise sits inside individual documents that pass the filter. Training on these noisy tokens wastes compute and can confuse the model.

The authors analyzed token-level learning dynamics by tracking each token's cross-entropy loss over the course of training and grouping tokens by whether that loss starts and ends high (H) or low (L):

- L→L (Low to Low): Learned quickly; further training on them yields little benefit.

- H→L (High to Low): Initially difficult but eventually learned; these represent the valuable learning opportunities.

- H→H (High to High): Consistently difficult, often due to inherent unpredictability (aleatoric uncertainty).

- L→H (Low to High): Initially learned, but their loss later rises, possibly due to context shifts or noise.

Their analysis suggests that only a minority of tokens, chiefly the H→L group, provide meaningful learning signals; the rest are already learned, persistently unpredictable, or unstable.
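To make the four categories concrete, here is a minimal Python sketch that labels tokens from their loss at an early and a late checkpoint. It is a simplification for illustration, not the authors' procedure: using exactly two checkpoints and a single fixed loss cutoff (the hypothetical `threshold` parameter) are assumptions, and the loss values are made up.

```python
from collections import Counter

CATEGORIES = ("L→L", "H→L", "H→H", "L→H")


def categorize_token(early_loss, late_loss, threshold):
    """Label a token H (high) or L (low) at an early and a late checkpoint.

    The fixed threshold is a hypothetical simplification for illustration.
    """
    start = "H" if early_loss > threshold else "L"
    end = "H" if late_loss > threshold else "L"
    return f"{start}→{end}"


def category_fractions(early_losses, late_losses, threshold):
    """Fraction of tokens falling into each of the four loss-trajectory categories."""
    labels = [categorize_token(first, last, threshold)
              for first, last in zip(early_losses, late_losses)]
    counts = Counter(labels)
    total = len(labels)
    if total == 0:
        return {c: 0.0 for c in CATEGORIES}
    return {c: counts[c] / total for c in CATEGORIES}


# Hypothetical losses for four tokens at an early and a late checkpoint.
early = [0.5, 4.0, 5.0, 0.8]
late = [0.3, 1.0, 4.5, 3.2]
print(category_fractions(early, late, threshold=2.0))
# {'L→L': 0.25, 'H→L': 0.25, 'H→H': 0.25, 'L→H': 0.25}
```

Run over a real corpus, the same bookkeeping would show how the tokens split across the categories; the toy input here simply places one token in each.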

The Solution: Selective Language Modeling (SLM)

The proposed solution, Selective Language Modeling (SLM), offers a more targeted approach:

- Reference Model (RM) Training: A high-quality subset of the data is used to fine-tune a pre-trained base model, creating a reference model (RM). The RM serves as the yardstick for token "usefulness."

- Excess Loss Calculation: For each token in the large corpus, the excess loss is computed as the current training model's loss minus the RM's loss. A high excess loss marks a token that the RM has learned but the training model has not, i.e. one with large potential for improvement.

- Selective Backpropagation: The forward pass runs over all tokens, but the loss, and therefore the gradient, is computed only on the top k% of tokens ranked by excess loss. This dynamically focuses training on the most valuable tokens.
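The three steps can be sketched in plain Python on precomputed per-token losses. This is a framework-free illustration under stated assumptions, not the authors' implementation: the function names and the `k_percent` parameter are hypothetical, selection happens over a single list of tokens standing in for a batch, and rounding the kept count up is an arbitrary choice. In a real training loop, the reference losses would come from the RM and the training losses from the model's forward pass, and only the selected tokens would contribute gradients.

```python
import math


def excess_losses(train_losses, ref_losses):
    """Per-token excess loss: current model's loss minus the reference model's loss."""
    return [t - r for t, r in zip(train_losses, ref_losses)]


def select_top_k(excess, k_percent):
    """Indices (in original order) of the top k% tokens by excess loss; keeps at least one."""
    n_keep = max(1, math.ceil(len(excess) * k_percent / 100))
    ranked = sorted(range(len(excess)), key=lambda i: excess[i], reverse=True)
    return sorted(ranked[:n_keep])


def selective_lm_loss(train_losses, ref_losses, k_percent):
    """Mean training loss over the selected tokens only.

    Every token's loss was computed (the full forward pass), but only the
    selected tokens enter the average, so in an autodiff framework only they
    would receive gradients.
    """
    if not train_losses or len(train_losses) != len(ref_losses):
        raise ValueError("need equal-length, non-empty loss lists")
    keep = select_top_k(excess_losses(train_losses, ref_losses), k_percent)
    return sum(train_losses[i] for i in keep) / len(keep), keep


# Hypothetical per-token losses for four tokens.
train = [2.0, 3.0, 1.0, 4.0]
ref = [0.5, 1.0, 0.2, 3.9]
loss, kept = selective_lm_loss(train, ref, k_percent=50)
print(kept, loss)  # [0, 1] 2.5
```

In the example, token 3 has the highest training loss, but the reference model finds it almost equally hard (excess loss ≈ 0.1), so it is skipped. Ranking by excess loss rather than raw loss is what keeps gradient away from H→H-style noise.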

Experimental Results: Significant Gains

SLM shows clear advantages across several experiments:

- Math Domain: In continual pretraining on OpenWebMath, SLM improved few-shot accuracy on GSM8K and MATH by up to 10% over standard CLM and reached baseline performance 5-10 times faster. The 7B model matched DeepSeekMath, a state-of-the-art math model, using only 3% of its pretraining tokens. After fine-tuning, the 1B model exceeded 40% accuracy on MATH.

- General Domain: Even starting from a strong pre-trained base model, SLM yielded an average improvement of about 5.8% across 15 benchmarks, with the largest gains in challenging domains such as code and math.

- Self-Referencing: Even a reference model trained quickly on the raw pretraining corpus itself, rather than on curated high-quality data, gave a 2-3% accuracy boost while using 30-40% fewer tokens.

Conclusion and Future Work

This paper offers valuable insights into token-level learning dynamics and introduces SLM, a highly effective technique for optimizing language model pretraining. Future research directions include scaling SLM to larger models, exploring API-based reference models, integrating reinforcement learning, using multiple reference models, and aligning SLM with safety and truthfulness considerations. This work represents a significant advancement in efficient and effective language model training.

The above is the detailed content of Beyond Causal Language Modeling. For more information, please follow other related articles on the PHP Chinese website!

Hot AI Tools

Undress AI Tool

Undress images for free

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Clothoff.io

AI clothes remover

Video Face Swap

Swap faces in any video effortlessly with our completely free AI face swap tool!

Hot Article

Hot Tools

Notepad++7.3.1

Easy-to-use and free code editor

SublimeText3 Chinese version

Chinese version, very easy to use

Zend Studio 13.0.1

Powerful PHP integrated development environment

Dreamweaver CS6

Visual web development tools

SublimeText3 Mac version

God-level code editing software (SublimeText3)

Hot Topics

AI Investor Stuck At A Standstill? 3 Strategic Paths To Buy, Build, Or Partner With AI Vendors

Jul 02, 2025 am 11:13 AM

AI Investor Stuck At A Standstill? 3 Strategic Paths To Buy, Build, Or Partner With AI Vendors

Jul 02, 2025 am 11:13 AM

Investing is booming, but capital alone isn’t enough. With valuations rising and distinctiveness fading, investors in AI-focused venture funds must make a key decision: Buy, build, or partner to gain an edge? Here’s how to evaluate each option—and pr

AGI And AI Superintelligence Are Going To Sharply Hit The Human Ceiling Assumption Barrier

Jul 04, 2025 am 11:10 AM

AGI And AI Superintelligence Are Going To Sharply Hit The Human Ceiling Assumption Barrier

Jul 04, 2025 am 11:10 AM

Let’s talk about it. This analysis of an innovative AI breakthrough is part of my ongoing Forbes column coverage on the latest in AI, including identifying and explaining various impactful AI complexities (see the link here). Heading Toward AGI And

Build Your First LLM Application: A Beginner's Tutorial

Jun 24, 2025 am 10:13 AM

Build Your First LLM Application: A Beginner's Tutorial

Jun 24, 2025 am 10:13 AM

Have you ever tried to build your own Large Language Model (LLM) application? Ever wondered how people are making their own LLM application to increase their productivity? LLM applications have proven to be useful in every aspect

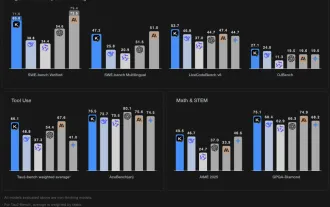

Kimi K2: The Most Powerful Open-Source Agentic Model

Jul 12, 2025 am 09:16 AM

Kimi K2: The Most Powerful Open-Source Agentic Model

Jul 12, 2025 am 09:16 AM

Remember the flood of open-source Chinese models that disrupted the GenAI industry earlier this year? While DeepSeek took most of the headlines, Kimi K1.5 was one of the prominent names in the list. And the model was quite cool.

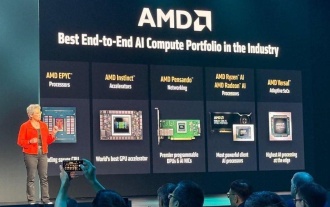

AMD Keeps Building Momentum In AI, With Plenty Of Work Still To Do

Jun 28, 2025 am 11:15 AM

AMD Keeps Building Momentum In AI, With Plenty Of Work Still To Do

Jun 28, 2025 am 11:15 AM

Overall, I think the event was important for showing how AMD is moving the ball down the field for customers and developers. Under Su, AMD’s M.O. is to have clear, ambitious plans and execute against them. Her “say/do” ratio is high. The company does

Future Forecasting A Massive Intelligence Explosion On The Path From AI To AGI

Jul 02, 2025 am 11:19 AM

Future Forecasting A Massive Intelligence Explosion On The Path From AI To AGI

Jul 02, 2025 am 11:19 AM

Let’s talk about it. This analysis of an innovative AI breakthrough is part of my ongoing Forbes column coverage on the latest in AI, including identifying and explaining various impactful AI complexities (see the link here). For those readers who h

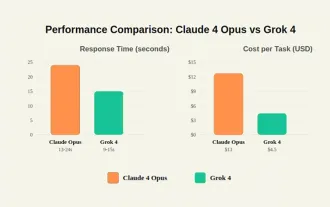

Grok 4 vs Claude 4: Which is Better?

Jul 12, 2025 am 09:37 AM

Grok 4 vs Claude 4: Which is Better?

Jul 12, 2025 am 09:37 AM

By mid-2025, the AI “arms race” is heating up, and xAI and Anthropic have both released their flagship models, Grok 4 and Claude 4. These two models are at opposite ends of the design philosophy and deployment platform, yet they

Chain Of Thought For Reasoning Models Might Not Work Out Long-Term

Jul 02, 2025 am 11:18 AM

Chain Of Thought For Reasoning Models Might Not Work Out Long-Term

Jul 02, 2025 am 11:18 AM

For example, if you ask a model a question like: “what does (X) person do at (X) company?” you may see a reasoning chain that looks something like this, assuming the system knows how to retrieve the necessary information:Locating details about the co