Seven Cool GenAI & LLM Technical Interview Questions

To learn more about AIGC, please visit:

51CTO AI.x Community

https://www.51cto.com/aigc/

Translator | Jingyan

Reviewer | Chonglou

This article differs from the traditional question banks found all over the Internet: these questions require outside-the-box thinking.

Large language models (LLMs) are increasingly important in data science, generative artificial intelligence (GenAI), and artificial intelligence in general. These complex algorithms augment human skills and drive efficiency and innovation across many industries, making them key for companies that want to remain competitive. LLMs have a wide range of applications, including natural language processing, text generation, speech recognition, and recommendation systems. By learning from large amounts of data, an LLM can generate text, answer questions, hold conversations with humans, and provide accurate and valuable information. GenAI relies on LLM algorithms and models to generate a variety of creative content. However, although GenAI and LLMs are becoming more and more common, detailed resources that explain their complexity in depth are still lacking. Newcomers to the field often feel they are in unknown territory when interviewed about the functions and practical applications of GenAI and LLMs.

To this end, we have compiled this guide to technical interview questions about GenAI and LLMs. Complete with in-depth answers, it is designed to help you prepare for interviews, approach challenges with confidence, and gain a deeper understanding of the impact and potential of GenAI and LLMs in shaping the future of AI and data science.

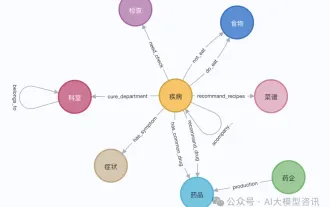

1. How to build a knowledge graph using an embedded dictionary in Python?

One way is to use a hash (a dictionary in Python, also called a key-value table), where the key is a word, token, concept, or category, such as "mathematics". Each key maps to a value that is itself a hash: a nested hash. Each key in the nested hash is a word related to the parent key, such as "calculus". The value is a weight: "calculus" gets a high weight because "calculus" and "mathematics" are related and often appear together; conversely, "restaurants" gets a low weight because "restaurants" and "mathematics" rarely appear together.

In an LLM, the nested hash can serve as an embedding (a mapping of high-dimensional data to a lower-dimensional space, usually used to convert discrete data into a continuous vector representation that is easier for computers to process). Since a nested hash does not have a fixed number of elements, it handles sparse graphs much better than vector databases or matrices. It leads to faster algorithms and requires less memory.
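The nested hash described above can be sketched in a few lines of Python. This is a minimal illustration, not the author's implementation: the function name `build_graph`, the toy documents, and the use of raw co-occurrence counts as weights are all assumptions made for the example.

```python
from collections import defaultdict

def build_graph(documents):
    """Return a nested dict: graph[key][related_word] = co-occurrence count.

    Assumption: the weight is simply the number of documents in which
    both words appear; a real system may use a different weighting.
    """
    graph = defaultdict(lambda: defaultdict(int))
    for doc in documents:
        words = set(doc.lower().split())
        for a in words:
            for b in words:
                if a != b:
                    graph[a][b] += 1
    return graph

# Toy corpus, invented for illustration only.
docs = [
    "mathematics calculus derivative",
    "mathematics calculus integral",
    "mathematics restaurants",
    "restaurants menu food",
]
graph = build_graph(docs)
print(dict(graph["mathematics"]))
```

Each parent key stores only the words it actually co-occurs with, so the number of entries varies from key to key and absent pairs cost no memory.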

2. How to perform hierarchical clustering when the data contains 100 million keywords?

If you want to cluster keywords, then for each pair of keywords {A, B} you can compute the similarity between A and B to learn how alike the two words are. The goal is to produce clusters of similar keywords.

Standard Python libraries such as Sklearn provide agglomerative clustering, also known as hierarchical clustering. However, in this example they would typically require a 100 million x 100 million distance matrix, which obviously does not work. In practice, random words A and B rarely appear together, so the distance matrix is very sparse. Solutions include methods suited to sparse graphs, such as the nested hashes discussed in question 1. One such approach is based on detecting connected components in the underlying graph.
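As a rough sketch of the connected-components idea, the code below walks a nested hash of keyword weights and groups keywords that are linked by sufficiently strong edges. The function name `connected_components`, the `min_weight` threshold, and the toy weights are hypothetical and chosen only for illustration.

```python
def connected_components(graph, min_weight=1):
    """Cluster keywords stored in a nested dict graph[a][b] = weight.

    Two keywords land in the same cluster when a chain of links with
    weight >= min_weight connects them. Only existing links are visited,
    so no full distance matrix is ever built.
    """
    seen = set()
    clusters = []
    for start in graph:
        if start in seen:
            continue
        seen.add(start)
        stack = [start]
        cluster = []
        while stack:
            node = stack.pop()
            cluster.append(node)
            for neighbor, weight in graph.get(node, {}).items():
                if weight >= min_weight and neighbor not in seen:
                    seen.add(neighbor)
                    stack.append(neighbor)
        clusters.append(sorted(cluster))
    return clusters

# Toy weights, invented for illustration only.
keyword_graph = {
    "calculus": {"derivative": 5, "integral": 4, "menu": 1},
    "derivative": {"calculus": 5},
    "integral": {"calculus": 4},
    "menu": {"restaurant": 6, "calculus": 1},
    "restaurant": {"menu": 6},
}
loose = connected_components(keyword_graph, min_weight=1)
tight = connected_components(keyword_graph, min_weight=2)
print(loose)
print(tight)
```

Running the function with increasing values of `min_weight` splits large clusters into smaller ones, which is one possible way to obtain a hierarchy from a sparse graph.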

3. How do you crawl a large repository like Wikipedia to retrieve the underlying structure, not just the individual entries?

These repositories all embed structural elements in their web pages, making the content more structured than it appears at first glance. Some structural elements are invisible to the naked eye, such as metadata. Others are visible and also present in the crawled data, such as indexes, related items, breadcrumbs, or categories. You can retrieve these elements separately to build a good knowledge graph or taxonomy. To do so, you may want to write your own crawler from scratch rather than relying on tools like Beautiful Soup. LLMs that make heavy use of structural information (such as xLLM) provide better results. Additionally, if your repository lacks structure, you can extend your crawled data with structure retrieved from external sources. This process is called "structure augmentation".
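To make the idea of retrieving structural elements concrete, here is a small sketch built on Python's standard `html.parser` module. The class names `breadcrumb` and `categories` and the sample page are hypothetical; real sites use their own markup, so a real crawler needs site-specific rules.

```python
from html.parser import HTMLParser

class StructureParser(HTMLParser):
    """Collect link text found inside elements whose class marks structure."""

    def __init__(self, structural_classes):
        super().__init__()
        self.structural_classes = set(structural_classes)
        self.section = None      # class label of the structural element we are in
        self.section_tag = None  # tag name that opened that element
        self.depth = 0           # nesting depth of that tag name
        self.in_link = False
        self.found = {}          # label -> list of link texts

    def handle_starttag(self, tag, attrs):
        if self.section is None:
            classes = (dict(attrs).get("class") or "").split()
            for label in classes:
                if label in self.structural_classes:
                    self.section, self.section_tag, self.depth = label, tag, 1
                    break
        elif tag == self.section_tag:
            self.depth += 1
        if tag == "a" and self.section is not None:
            self.in_link = True

    def handle_endtag(self, tag):
        if tag == "a":
            self.in_link = False
        if self.section is not None and tag == self.section_tag:
            self.depth -= 1
            if self.depth == 0:
                self.section = None
                self.section_tag = None

    def handle_data(self, data):
        if self.in_link and self.section is not None and data.strip():
            self.found.setdefault(self.section, []).append(data.strip())

# Hypothetical page fragment, invented for illustration only.
page = """
<nav class="breadcrumb"><a href="/">Home</a> <a href="/math">Mathematics</a></nav>
<h1>Calculus</h1>
<p>Calculus studies <a href="/change">continuous change</a>.</p>
<div class="categories"><ul>
  <li><a href="/c/analysis">Analysis</a></li>
  <li><a href="/c/calculus">Calculus</a></li>
</ul></div>
"""
parser = StructureParser({"breadcrumb", "categories"})
parser.feed(page)
print(parser.found)
```

The breadcrumb gives the parent chain of an entry and the category links give its siblings, which is the kind of information a taxonomy or knowledge graph can be built from. Links in the body text are ignored here.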

4. How to enhance LLM embeddings with contextual tokens?

Embeddings are composed of tokens, the smallest text elements you can find in any document. Rather than just two tokens, such as "data" and "science", you can have four: "data^science", "data", "science", and "data~science". The last one indicates that the term "data science" was found. The first indicates that both "data" and "science" were found, but at random positions within a given paragraph rather than next to each other. Such tokens are called multi-tokens or contextual tokens. They provide some useful redundancy, but if you are not careful you can end up with huge embeddings. Solutions include purging useless tokens (keeping the longest ones) and using variable-size embeddings. Contextual tokens can help reduce LLM hallucinations.
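The sketch below shows one way such contextual tokens could be generated for a paragraph, using the `~` and `^` notation from the text. The function name `contextual_tokens`, the whitespace tokenizer, and the alphabetical ordering of `^` pairs are assumptions made for this example.

```python
from itertools import combinations

def contextual_tokens(paragraph):
    """Return single tokens, adjacent pairs (a~b) and non-adjacent pairs (a^b)."""
    words = paragraph.lower().split()
    tokens = set(words)                      # single tokens
    adjacent = set()
    for a, b in zip(words, words[1:]):
        adjacent.add((a, b))
        tokens.add(f"{a}~{b}")               # words found next to each other
    for a, b in combinations(sorted(set(words)), 2):
        # Both words occur in the paragraph but never side by side.
        if (a, b) not in adjacent and (b, a) not in adjacent:
            tokens.add(f"{a}^{b}")
    return tokens

adjacent_case = contextual_tokens("data science is fun")
scattered_case = contextual_tokens("science needs good data")
print(sorted(adjacent_case))
print(sorted(scattered_case))
```

The number of `^` tokens grows quadratically with paragraph length, which illustrates why purging useless tokens matters in practice.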

5. How to implement self-tuning to eliminate many of the problems associated with model evaluation and training?

This applies to systems based on explainable AI, not to neural network black boxes. Let users of the application select hyperparameters and flag the sets they like. Use this information to find ideal hyperparameters and set them as the defaults. This is automated reinforcement learning based on user input. It also lets each user pick a favorite hyperparameter set depending on the desired results, making your application customizable. Within an LLM, performance can be further improved by allowing users to select a specific sub-LLM (for example, based on search type or category). Adding a relevance score to each item in your output can also help fine-tune your system.
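A very simple version of this feedback loop might look like the following. The text does not say how the "ideal" hyperparameters are derived from user favorites; taking the most frequently flagged value per hyperparameter is an assumption, and the hyperparameter names are hypothetical.

```python
from collections import Counter

def tuned_defaults(favorites, current_defaults):
    """Set each default to the value users flagged as favorite most often.

    favorites: list of dicts, one per flagged hyperparameter set.
    Hyperparameters nobody flagged keep their current default.
    """
    defaults = dict(current_defaults)
    names = {name for fav in favorites for name in fav}
    for name in names:
        votes = Counter(fav[name] for fav in favorites if name in fav)
        defaults[name] = votes.most_common(1)[0][0]
    return defaults

# Hypothetical hyperparameters and user feedback, invented for illustration.
current = {"max_results": 5, "min_relevance": 0.1, "sub_llm": "general"}
favorites = [
    {"max_results": 10, "min_relevance": 0.5},
    {"max_results": 10, "min_relevance": 0.3},
    {"max_results": 20, "min_relevance": 0.5},
]
new_defaults = tuned_defaults(favorites, current)
print(new_defaults)
```

Because the hyperparameters are explicit and user-selectable, the same stored favorites can also be offered back to each user as a personal preset.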

6. How to increase the speed of vector search by several orders of magnitude?

In an LLM, using variable-length embeddings greatly reduces the size of the embeddings. This speeds up the search for backend embeddings similar to those captured from the frontend prompt. However, it may require a different type of database, such as key-value tables. Reducing the size of the token and embeddings tables is another solution: in a trillion-token system, 95% of tokens will never be fetched to answer a prompt. They are just noise, so get rid of them. Using contextual tokens (see question 4) is another way to store information more compactly. Approximate nearest neighbor (ANN) search is then performed on the compressed embeddings. The probabilistic version (pANN) can run much faster, see figure below. Finally, use a caching mechanism to store the most frequently accessed embeddings or queries for better real-time performance.

Probabilistic Approximate Nearest Neighbor Search (pANN)

As a rule of thumb, reducing the size of the training set by 50% gives better results, with much less overfitting. In an LLM, it is better to choose a few good input sources than to crawl the entire Internet. Having a dedicated LLM for each top-level category, rather than one size fits all, further reduces the number of embeddings: each prompt targets a specific sub-LLM rather than the entire database.
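Several of the ideas in this answer (variable-length embeddings held in key-value tables, one small table per sub-LLM, and caching of frequent queries) can be combined in a short sketch. The table contents, category names, and function names below are hypothetical. Note that this sketch performs an exact search over a tiny table; it does not implement ANN or pANN.

```python
from functools import lru_cache
from math import sqrt

# Hypothetical backend: one small embeddings table per top-level category.
# Each embedding is a dict that stores only its non-zero components,
# so embeddings have variable length.
SUB_LLM_TABLES = {
    "math": {
        "calculus": {"derivative": 0.9, "integral": 0.8, "limit": 0.4},
        "algebra": {"matrix": 0.9, "vector": 0.7},
    },
    "food": {
        "restaurant": {"menu": 0.9, "chef": 0.6},
    },
}

def cosine(u, v):
    """Cosine similarity between two sparse embeddings stored as dicts."""
    dot = sum(w * v[k] for k, w in u.items() if k in v)
    norm_u = sqrt(sum(w * w for w in u.values()))
    norm_v = sqrt(sum(w * w for w in v.values()))
    return dot / (norm_u * norm_v) if norm_u and norm_v else 0.0

@lru_cache(maxsize=1024)
def search(category, prompt_tokens):
    """Return the closest entry within one sub-LLM table.

    prompt_tokens must be a tuple so that repeated queries hit the cache.
    """
    query = {token: 1.0 for token in prompt_tokens}
    table = SUB_LLM_TABLES[category]
    return max(table, key=lambda name: cosine(query, table[name]))

best = search("math", ("derivative", "limit"))
print(best)
```

Only the table of the selected category is scanned, and a repeated prompt is answered from the cache without any similarity computation.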

7. What is the ideal loss function to get the best results from your model?

The best solution is to use the model evaluation metric as the loss function. This is rarely done because you need a loss function that can be updated very quickly every time a neuron is activated in the neural network. In the context of neural networks, another solution is to compute the evaluation metric after each epoch and keep the solution from the epoch with the best evaluation score, rather than the solution from the epoch with the smallest loss.

I am currently working on a system, not based on neural networks, in which the evaluation metric and the loss function are the same. Initially, my evaluation metric was the multivariate Kolmogorov-Smirnov (KS) distance. But on big data it is extremely difficult to perform atomic updates of KS without a lot of computation. This makes KS unsuitable as a loss function, since you would need billions of atomic updates. By replacing the cumulative distribution function with a probability density function with millions of bins, I was able to come up with a good evaluation metric that also works as a loss function.
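The epoch-selection strategy can be sketched independently of any deep learning framework. In the code below, `train_one_epoch` and `evaluate` are hypothetical callables supplied by the caller, and the per-epoch (loss, metric) pairs are made-up stand-ins for a real training run.

```python
def train_with_metric_selection(train_one_epoch, evaluate, n_epochs):
    """Keep the model state from the epoch with the best evaluation score.

    train_one_epoch(epoch) must return a snapshot of the model state;
    evaluate(state) must return a score where higher is better.
    """
    best_score, best_epoch, best_state = float("-inf"), None, None
    for epoch in range(n_epochs):
        state = train_one_epoch(epoch)
        score = evaluate(state)
        if score > best_score:
            best_score, best_epoch, best_state = score, epoch, state
    return best_epoch, best_score, best_state

# Made-up (loss, evaluation metric) pairs standing in for real training.
history = [(0.90, 0.61), (0.55, 0.74), (0.40, 0.79), (0.31, 0.77), (0.25, 0.72)]

best_epoch, best_score, _ = train_with_metric_selection(
    train_one_epoch=lambda epoch: history[epoch],
    evaluate=lambda state: state[1],
    n_epochs=len(history),
)
lowest_loss_epoch = min(range(len(history)), key=lambda e: history[e][0])
print(best_epoch, lowest_loss_epoch)
```

In this toy run the loss keeps falling until the last epoch while the evaluation metric peaks earlier, so the two selection rules pick different epochs.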
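The author does not give the formula for the binned metric, so the sketch below is only one plausible reading, reduced to one dimension and a handful of bins. It uses an L1 distance between two binned densities (an assumption) to show why a density-based metric supports cheap atomic updates: moving one observation changes two bins only, whereas a distance based on cumulative distributions would require updating every cumulative value between the two positions. The class name `BinnedDistance` and the counts are hypothetical.

```python
class BinnedDistance:
    """L1 distance between two binned densities, maintained incrementally."""

    def __init__(self, target_counts, model_counts):
        self.target = list(target_counts)
        self.model = list(model_counts)
        self.n_target = sum(self.target)
        self.n_model = sum(self.model)
        self.value = sum(abs(self._diff(i)) for i in range(len(self.target)))

    def _diff(self, i):
        # Difference between the two estimated densities in bin i.
        return self.target[i] / self.n_target - self.model[i] / self.n_model

    def move(self, src, dst):
        """Move one model observation from bin src to bin dst.

        The total count is unchanged, so only two terms of the sum change.
        """
        if src == dst:
            return self.value
        for i in (src, dst):
            self.value -= abs(self._diff(i))
        self.model[src] -= 1
        self.model[dst] += 1
        for i in (src, dst):
            self.value += abs(self._diff(i))
        return self.value

# Made-up bin counts for illustration only.
distance = BinnedDistance(target_counts=[2, 4, 2, 2], model_counts=[5, 1, 2, 2])
before = distance.value
after = distance.move(src=0, dst=1)
print(before, after)
```

The cost of `move` does not depend on the number of bins, which is what makes it feasible to use the same quantity as both the evaluation metric and the loss.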

Original title: 7 Cool Technical GenAI & LLM Job Interview Questions, author: Vincent Granville

Link: https://www.datasciencecentral.com/7-cool-technical-genai-llm-job-interview-questions/

