A Brief Discussion on Encoding Handling When Crawling Web Pages with Python

Background

During the Mid-Autumn Festival, a friend emailed me. He said that when he crawled Lianjia, all the text returned by the pages was garbled, and he asked for my advice (working overtime during the Mid-Autumn Festival, so dedicated = =!). I actually ran into this problem long ago. I looked into it briefly when I was crawling novels, but I didn't take it seriously. In fact, the problem comes down to a poor understanding of character encoding.

Question

Here is a very common piece of crawler code:

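```python
# -*- coding: utf-8 -*-
# Python 2 code, as in the original article
import requests
import sys

# Hack that forces Python 2's default str/unicode conversion codec to UTF-8
# (Python 2 only; discouraged, see below)
reload(sys)
sys.setdefaultencoding('utf8')

url = 'http://jb51.net/ershoufang/rs%E6%8B%9B%E5%95%86%E6%9E%9C%E5%B2%AD/'

res = requests.get(url)
# res.text is decoded with whatever encoding requests guessed for the response
print res.text
```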

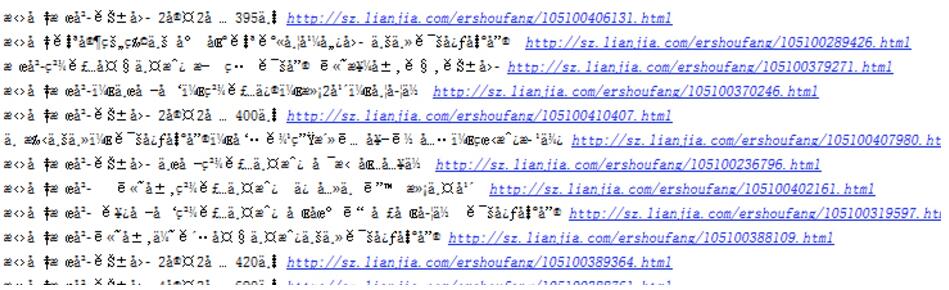

The purpose is actually very simple, which is to crawl the content of Lianjia. However, after executing this, the returned results and all the content involving Chinese will become garbled, such as this

Data like this is essentially useless.

Problem Analysis

The problem is obvious: the text is decoded with the wrong encoding, which produces garbled characters.

View the encoding of the web page

The encoding declared in the head of the target page shows that the page is encoded in UTF-8.

So the content must ultimately be decoded as UTF-8, that is, with decode('utf-8').
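A page usually declares its encoding in a `<meta>` tag in its HTML head. requests' header-based guess does not read this tag, so you may want to read it yourself. Below is a minimal Python 3 sketch that works on the raw response bytes. `find_meta_charset` is a hypothetical helper name. The regex assumes the common `<meta charset="...">` and `http-equiv="Content-Type"` forms and does not fully parse HTML.

```python
import re

# Matches charset=... inside a <meta> tag, e.g.
#   <meta charset="utf-8">
#   <meta http-equiv="Content-Type" content="text/html; charset=utf-8">
_META_CHARSET = re.compile(
    rb'<meta[^>]+charset\s*=\s*["\']?\s*([-\w.:]+)', re.IGNORECASE
)


def find_meta_charset(html_bytes, scan_limit=4096):
    """Hypothetical helper: return the <meta>-declared charset, lower-cased, or None.

    Only the first `scan_limit` bytes are scanned, since the declaration
    normally sits near the top of the document.
    """
    match = _META_CHARSET.search(html_bytes[:scan_limit])
    if match is None:
        return None
    return match.group(1).decode('ascii').lower()


page = b'<html><head><meta charset="UTF-8"><title>\xe6\x8b\x9b\xe5\x95\x86</title></head></html>'
print(find_meta_charset(page))  # utf-8
```

A real HTML parser (or a library that implements browser-style encoding sniffing) is more robust. The regex is just enough to show where the declaration lives.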

Text encoding and decoding

In Python, encoding and decoding work like this: source text ==> encode (with some encoding) ==> bytes ==> decode (with the same encoding) ==> text. For the most part, it is not recommended to force the text encoding with

```python
import sys

reload(sys)
sys.setdefaultencoding('utf8')
```

as a blunt, global fix. Being lazy like this is not a big problem as long as it doesn't bite you. Still, the recommended approach is to call encode and decode explicitly on the text once you have fetched the source.
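The explicit approach keeps bytes and text apart and names the codec at each conversion. Here is a minimal Python 3 sketch; note that in Python 3 `str` is text, `bytes` is raw data, and `sys.setdefaultencoding` no longer exists. The sample string is the district name that is percent-encoded in the article's URL.

```python
text = '招商果岭'  # the name percent-encoded in the article's URL

# encode: text (str) -> bytes, with an explicitly named codec
raw = text.encode('utf-8')
print(raw)  # b'\xe6\x8b\x9b\xe5\x95\x86\xe6\x9e\x9c\xe5\xb2\xad'

# decode: bytes -> text; using the SAME codec restores the original
print(raw.decode('utf-8') == text)  # True

# decoding with a different codec silently yields garbled text
print(raw.decode('latin-1') == text)  # False
```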

Back to the question

The real issue now is how the source is decoded. Normally, requests automatically guesses the encoding of the response and uses it to convert the content to Unicode. But it is only a program, and it can guess wrong. When it does, we have to specify the encoding ourselves. The official documentation describes it as follows:

When you make a request, Requests makes educated guesses about the encoding of the response based on the HTTP headers. The text encoding guessed by Requests is used when you access r.text. You can find out what encoding Requests is using, and change it, using the r.encoding property.
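When the Content-Type header is text/* but carries no charset, requests follows the old HTTP/1.1 rule from RFC 2616 and assumes ISO-8859-1. The Python 3 sketch below imitates that header-only rule using the standard library. It is not requests' own code, and `guess_encoding_from_header` is a hypothetical name.

```python
from email.message import Message


def guess_encoding_from_header(content_type):
    """Hypothetical, simplified header-only encoding guess.

    - charset present in Content-Type -> use it
    - text/* without charset -> ISO-8859-1 (the RFC 2616 default)
    - anything else -> no guess (None)
    """
    if not content_type:
        return None
    msg = Message()
    msg['Content-Type'] = content_type
    charset = msg.get_content_charset()  # lower-cased, or None
    if charset:
        return charset
    if msg.get_content_maintype() == 'text':
        return 'ISO-8859-1'
    return None


print(guess_encoding_from_header('text/html; charset=UTF-8'))  # utf-8
print(guess_encoding_from_header('text/html'))                 # ISO-8859-1
```

A server that sends a bare `Content-Type: text/html` and declares UTF-8 only inside the page ends up on the ISO-8859-1 branch.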

So let's check which encoding requests actually chose:

```python
# -*- coding: utf-8 -*-
import requests
import sys

reload(sys)
sys.setdefaultencoding('utf8')

url = 'http://jb51.net/ershoufang/rs%E6%8B%9B%E5%95%86%E6%9E%9C%E5%B2%AD/'

res = requests.get(url)
# Show the encoding requests picked for decoding res.text
print res.encoding
```

The printed results are as follows:

ISO-8859-1

In other words, requests assumed the page is encoded as ISO-8859-1. Searching Baidu for ISO-8859-1 gives:

ISO8859-1, usually called Latin-1. Latin-1 includes additional characters indispensable for writing all Western European languages.
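The wrong guess produces garbage instead of an error because Latin-1 maps each of the 256 byte values to exactly one character. Decoding any byte sequence with it therefore always succeeds. A quick Python 3 check (the sample string is only illustrative):

```python
all_bytes = bytes(range(256))

# Every byte value decodes to exactly one character: no error is possible
decoded = all_bytes.decode('latin-1')
print(len(decoded))  # 256

# UTF-8 Chinese text: each of these characters takes 3 bytes
raw = '招商'.encode('utf-8')
garbled = raw.decode('latin-1')
print(len(raw), len(garbled))  # 6 6  (one Latin-1 character per byte)

# By contrast, strict UTF-8 decoding rejects invalid byte sequences
try:
    all_bytes.decode('utf-8')
except UnicodeDecodeError as exc:
    print('utf-8 refused byte', hex(all_bytes[exc.start]))  # utf-8 refused byte 0x80
```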

Problem Solving

Once you know this, the fix is easy: specify the encoding explicitly, and the Chinese text comes out correctly. The code is as follows:

```python
# -*- coding: utf-8 -*-
import requests
import sys

reload(sys)
sys.setdefaultencoding('utf8')

url = 'http://jb51.net/ershoufang/rs%E6%8B%9B%E5%95%86%E6%9E%9C%E5%B2%AD/'

res = requests.get(url)
# Override the guessed encoding before reading res.text
res.encoding = 'utf-8'

print res.text
```

This time the Chinese characters are displayed correctly.
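Hard-coding `'utf-8'` works for this one site. When crawling many sites, one option is to pick the encoding per page. Trust an explicit charset in the Content-Type header first. Otherwise use the page's `<meta>` declaration, and failing that, fall back to UTF-8. Unlike the header-only guess, a text/* type without a charset does not force ISO-8859-1 here. The Python 3 sketch below works on raw bytes, which is what `res.content` holds in requests, so it can be tested offline. `decode_body`, its priority order, and the UTF-8 fallback are assumptions of this sketch, not part of requests.

```python
import codecs
import re
from email.message import Message

# <meta charset="..."> or <meta http-equiv=... content="...; charset=...">
_META_CHARSET = re.compile(
    rb'<meta[^>]+charset\s*=\s*["\']?\s*([-\w.:]+)', re.IGNORECASE
)


def decode_body(body, content_type, fallback='utf-8'):
    """Hypothetical helper: decode raw page bytes, return (text, encoding_used)."""
    # 1. An explicit charset parameter in the HTTP Content-Type header
    msg = Message()
    msg['Content-Type'] = content_type or 'application/octet-stream'
    encoding = msg.get_content_charset()

    # 2. Otherwise, a <meta> declaration near the top of the page
    if not encoding:
        match = _META_CHARSET.search(body[:4096])
        if match:
            encoding = match.group(1).decode('ascii').lower()

    # 3. Discard names Python does not know, then apply the fallback
    if encoding:
        try:
            codecs.lookup(encoding)
        except LookupError:
            encoding = None
    encoding = encoding or fallback

    # errors='replace' keeps a few bad bytes from aborting the whole page
    return body.decode(encoding, errors='replace'), encoding


page = '<html><head><meta charset="utf-8"></head><body>招商果岭</body></html>'.encode('utf-8')
text, used = decode_body(page, 'text/html')
print(used)               # utf-8
print('招商果岭' in text)  # True
```

With requests you would call something like `decode_body(res.content, res.headers.get('Content-Type'))` instead of reading `res.text`.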

Another way is to encode the wrongly decoded text back into bytes with ISO-8859-1 and then decode those bytes as UTF-8. The code is as follows:

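```python
# -*- coding: utf-8 -*-
import requests
import sys

reload(sys)
sys.setdefaultencoding('utf8')

url = 'http://jb51.net/ershoufang/rs%E6%8B%9B%E5%95%86%E6%9E%9C%E5%B2%AD/'

res = requests.get(url)
# No res.encoding override: res.text is still decoded as ISO-8859-1.
# Encoding it back with ISO-8859-1 recovers the original bytes,
# which are then decoded correctly as UTF-8.
print res.text.encode('ISO-8859-1').decode('utf-8')
```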
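The re-encoding trick works because the wrong Latin-1 decode was lossless: every byte became exactly one character, so encoding with Latin-1 returns the original bytes. A Python 3 sketch follows. `repair_latin1_mojibake` is a hypothetical helper name, and it is only safe when you know the text was mis-decoded as Latin-1.

```python
def repair_latin1_mojibake(garbled, real_encoding='utf-8'):
    """Hypothetical helper: undo a wrong Latin-1 decode.

    Characters U+0000-U+00FF encode back to bytes 0x00-0xFF one to one,
    so this recovers the exact original bytes, which are then decoded
    with the page's real encoding.
    """
    original_bytes = garbled.encode('latin-1')
    return original_bytes.decode(real_encoding)


garbled = '招商果岭'.encode('utf-8').decode('latin-1')  # what res.text held
print(repair_latin1_mojibake(garbled))  # 招商果岭
```

Setting `res.encoding` before reading `res.text` is usually simpler, since it avoids the round trip entirely.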

Note: ISO-8859-1 is also called latin1, so `res.text.encode('latin1').decode('utf-8')` gives the same correct result.
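In Python, these spellings are aliases registered for the same codec, which can be checked directly (Python 3):

```python
import codecs

# Different spellings resolve to the same codec in Python's codec registry
for name in ('ISO-8859-1', 'latin1', 'latin-1', 'latin_1'):
    print(name, '->', codecs.lookup(name).name)  # each line ends with "-> iso8859-1"

garbled = '果岭'.encode('utf-8').decode('ISO-8859-1')
# Re-encoding with either name yields identical bytes
print(garbled.encode('latin1') == garbled.encode('ISO-8859-1'))  # True
```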

There is much more to say about character encoding. Readers who want to dig deeper can start with:

• The Absolute Minimum Every Software Developer Absolutely, Positively Must Know About Unicode and Character Sets (No Excuses!), by Joel Spolsky

That wraps up this brief discussion of encoding handling when crawling web pages with Python. I hope it serves as a useful reference.
