Home > Article > Backend Development > python implements simple crawler function

When we browse the Internet every day, we often see some good-looking pictures, and we want to save and download these pictures, or use them as desktop wallpapers, or as design materials.

Our most common method is to right-click the mouse and select Save As. However, some pictures do not have a save as option when you right-click the mouse. Another way is to use a screenshot tool to capture them, but this will reduce the clarity of the picture. Okay~! In fact, you are very good. Right-click to view the page source code.

We can use python to implement such a simple crawler function and crawl the code we want locally. Let's take a look at how to use python to implement such a function.

First, get the entire page data

First we can get the entire page information of the image to be downloaded.

getjpg.py

#coding=utf-8

import urllib

def getHtml(url):

page = urllib.urlopen(url)

html = page.read()

return html

html = getHtml("http://tieba.baidu.com/p/2738151262")

print htmlThe Urllib module provides an interface for reading web page data. We can read data on www and ftp just like local files. First, we define a getHtml() function:

The urllib.urlopen() method is used to open a URL address.

The read() method is used to read the data on the URL, pass a URL to the getHtml() function, and download the entire page. Executing the program will print out the entire web page.

Second, filter the data you want in the page

Python provides very powerful regular expressions. We need to first understand a little knowledge of python regular expressions.

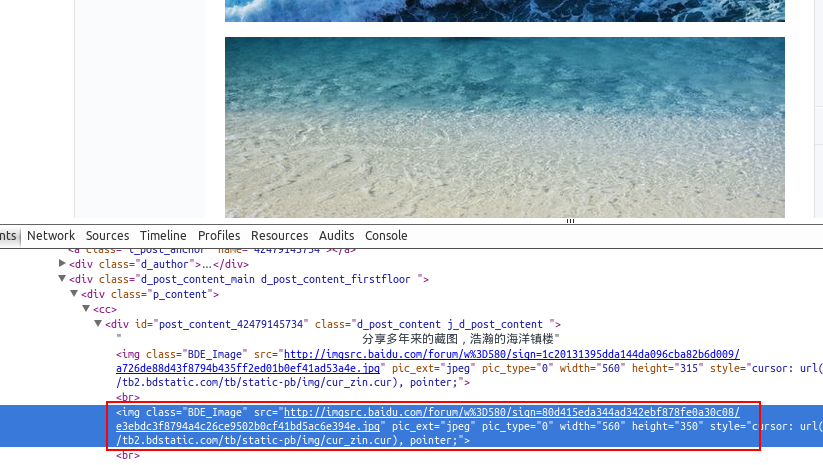

Suppose we find a few beautiful wallpapers in Baidu Tieba and go to the previous section to view the tools. Found the address of the picture, such as: src=”http://imgsrc.baidu.com/forum...jpg” pic_ext=”jpeg”

Modify the code as follows:

import re

import urllib

def getHtml(url):

page = urllib.urlopen(url)

html = page.read()

return html

def getImg(html):

reg = r'src="(.+?\.jpg)" pic_ext'

imgre = re.compile(reg)

imglist = re.findall(imgre,html)

return imglist

html = getHtml("http://tieba.baidu.com/p/2460150866")

print getImg(html)We The getImg() function was also created to filter the required image links in the entire acquired page. The re module mainly contains regular expressions:

re.compile() can compile the regular expression into a regular expression object.

The re.findall() method reads data containing imgre (regular expression) in html .

Running the script will get the URL address of the image contained in the entire page.

Three, save the page filtered data to the local

Traverse the filtered image address through a for loop and save it to the local. The code is as follows:

#coding=utf-8

import urllib

import re

def getHtml(url):

page = urllib.urlopen(url)

html = page.read()

return html

def getImg(html):

reg = r'src="(.+?\.jpg)" pic_ext'

imgre = re.compile(reg)

imglist = re.findall(imgre,html)

x = 0

for imgurl in imglist:

urllib.urlretrieve(imgurl,'%s.jpg' % x)

x+=1

html = getHtml("http://tieba.baidu.com/p/2460150866")

print getImg(html)The core here is the use of urllib.urlretrieve( ) method to directly download remote data to the local.

Traverse the obtained image connections through a for loop. In order to make the file name of the image look more standardized, rename it. The naming rule is to add 1 to the x variable. The save location defaults to the program's storage directory.

After the program is completed, you will see the files downloaded to the local directory in the directory.