40% faster than Transformer! Meta releases new Megabyte model to solve the problem of computing power loss

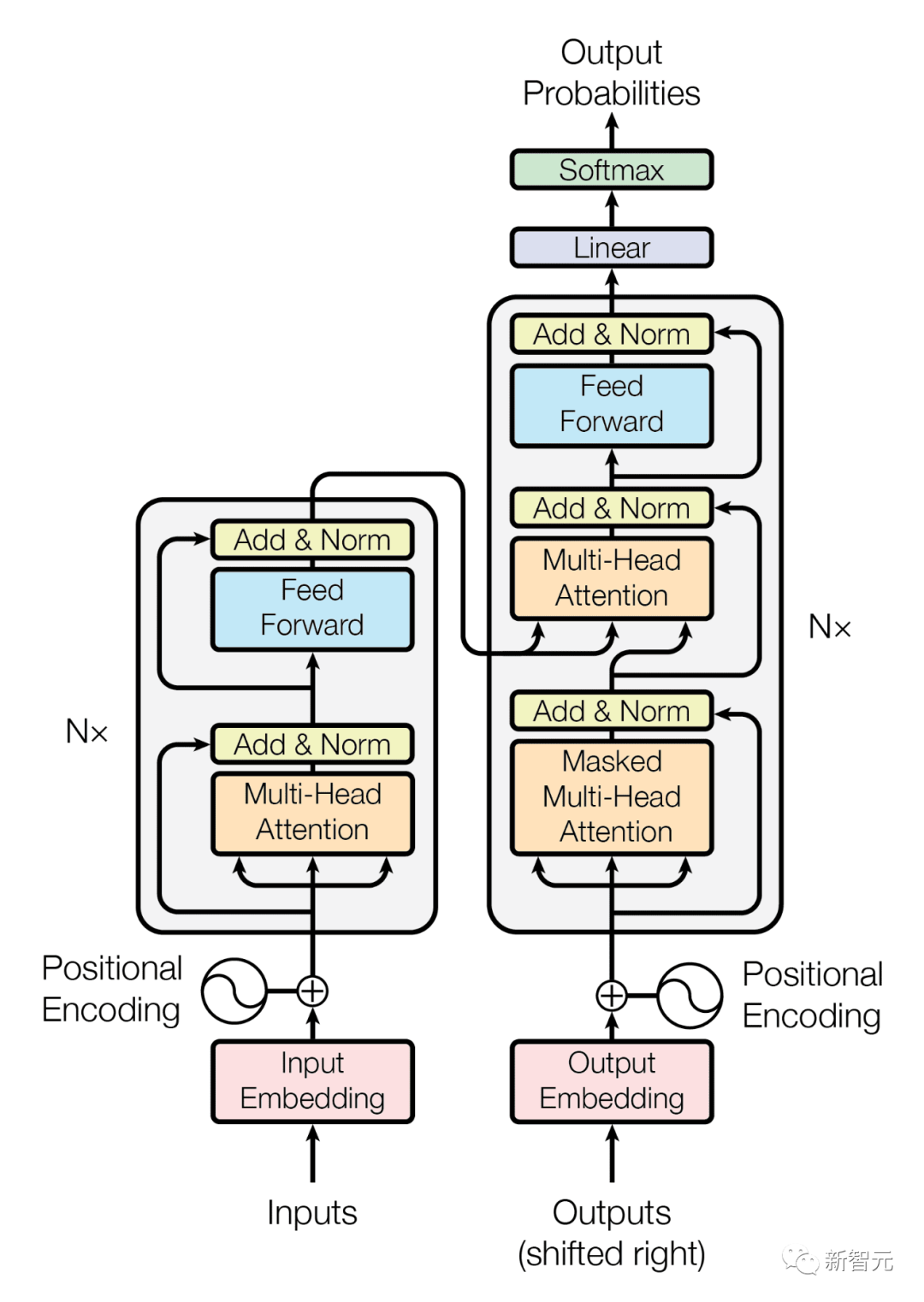

The Transformer has undoubtedly been the most popular model in machine learning over the past few years.

Since it was proposed in the 2017 paper "Attention is All You Need", this network architecture has topped all the major translation benchmarks and set many new records.

However, the Transformer has a weakness when processing long byte sequences: its computational cost becomes prohibitive. The latest work from Meta's researchers addresses this shortcoming well.

They have introduced a new model architecture that can generate sequences of more than 1 million tokens across multiple formats, surpassing the capabilities of the existing Transformer architecture behind models such as GPT-4.

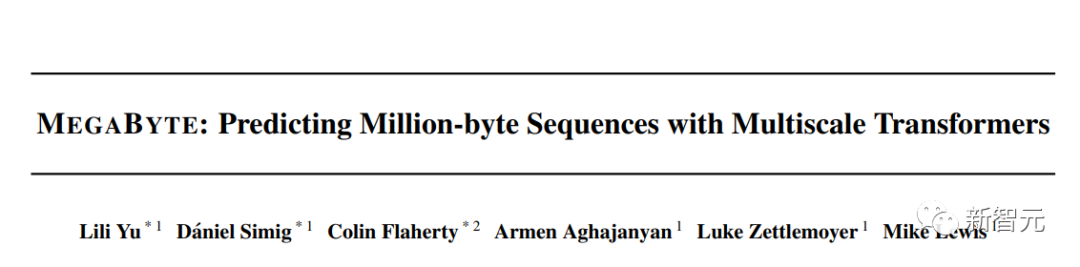

The model, called "Megabyte", is a multi-scale decoder architecture that performs end-to-end differentiable modeling of sequences of more than one million bytes.

Paper link: https://arxiv.org/abs/2305.07185

To understand why Megabyte improves on the Transformer, we first need to look at the Transformer's shortcomings.

## Disadvantages of Transformer

So far, high-performance generative AI models such as OpenAI's GPT-4 and Google's Bard have all been based on the Transformer architecture.

But Meta's research team believes that the popular Transformer architecture may be reaching its limits, mainly because of two important flaws inherent in its design:

- The cost of self-attention grows rapidly (quadratically) as input and output lengths increase. Music, image, or video files typically span several megabytes, whereas large decoders (LLMs) usually use only a few thousand tokens of context.

- Feed-forward networks help language models understand and process words through a series of mathematical operations and transformations, but they are hard to scale because they are applied per position: these networks operate on each character group or position independently, which results in a large amount of computational overhead.
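The two cost patterns above can be made concrete with a back-of-the-envelope count. This is an illustrative sketch, not code from the paper: it counts only attention scores and feed-forward applications for a single layer, ignores hidden sizes and attention heads, and the sequence lengths are assumed example values.

```python
def attention_scores(seq_len: int) -> int:
    """Dense self-attention compares every position with every other: seq_len ** 2 scores."""
    return seq_len * seq_len


def ffn_applications(seq_len: int) -> int:
    """A position-wise feed-forward network runs once per position: seq_len applications."""
    return seq_len


context_tokens = 4_096   # "a few thousand" tokens of context (illustrative value)
file_bytes = 1_000_000   # a ~1 MB music/image/video file read byte by byte (illustrative)

for n in (context_tokens, file_bytes):
    print(n, attention_scores(n), ffn_applications(n))
# 4096 16777216 4096
# 1000000 1000000000000 1000000
```

Going from 4,096 positions to about a million (roughly 244x longer) multiplies the attention scores by roughly 60,000x, while the number of feed-forward applications grows only 244x; each of those applications, however, runs a large network.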

## Strengths of Megabyte

Compared with the Transformer, Megabyte uses a distinctly different architecture that divides input and output sequences into patches rather than individual tokens.

As shown below, within each patch a local model generates the output, while a global model manages and coordinates the final output across all patches.

First, the byte sequence is divided into fixed-size patches, roughly analogous to tokens. The model consists of three parts:

- (1) A patch embedder, which simply encodes a patch by losslessly concatenating the embeddings of each byte.
- (2) A global model, a large autoregressive Transformer whose inputs and outputs are patch representations.
- (3) A local model, a small autoregressive model that predicts the bytes within a patch.

The researchers observed that byte prediction is relatively easy for most tasks (such as completing a word given its first few characters), which means a large network per byte is unnecessary and a much smaller model can be used for predictions within a patch.
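The patching step and the patch embedder can be sketched in a few lines. This is a minimal illustration under stated assumptions, not Meta's implementation: the helper names are hypothetical, the final patch is padded with zero bytes (an assumption), the 2-dimensional byte embedding table is a toy stand-in for a learned one, and details such as positional embeddings are omitted.

```python
from typing import List


def split_into_patches(data: bytes, patch_size: int, pad_byte: int = 0) -> List[List[int]]:
    """Split a byte string into fixed-size patches, padding the final patch if needed (assumed scheme)."""
    values = list(data)  # bytes -> list of ints in 0..255
    remainder = len(values) % patch_size
    if remainder:
        values.extend([pad_byte] * (patch_size - remainder))
    return [values[i:i + patch_size] for i in range(0, len(values), patch_size)]


def embed_patch(patch: List[int], byte_table: List[List[float]]) -> List[float]:
    """Patch embedder: concatenate per-byte embeddings, so no byte information is lost."""
    embedding: List[float] = []
    for b in patch:
        embedding.extend(byte_table[b])
    return embedding


# Toy 2-dimensional byte embedding table (hypothetical; a real model learns this).
byte_table = [[float(b), b / 255.0] for b in range(256)]

patches = split_into_patches(b"Megabyte!", patch_size=4)
print(patches)  # [[77, 101, 103, 97], [98, 121, 116, 101], [33, 0, 0, 0]]
print(len(embed_patch(patches[0], byte_table)))  # 8 = patch_size * embedding_dim
```

In this picture, each concatenated patch vector (size P x D) would be one input position for the global model, and the local model would then predict the P bytes inside that patch. Concatenation keeps every byte's embedding intact, which is what makes the encoding lossless.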

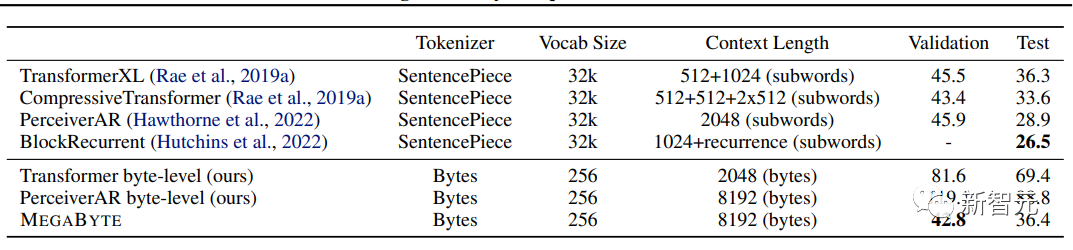

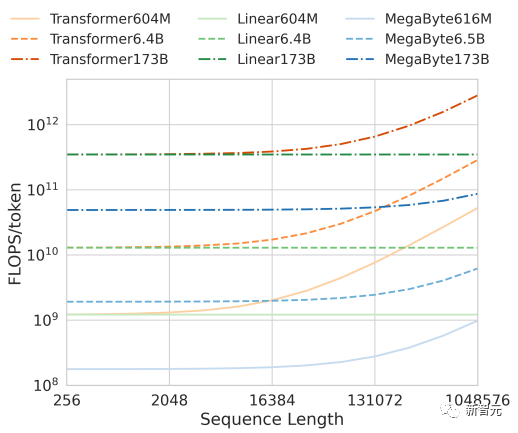

This approach addresses the scalability challenges common in today's AI models. Megabyte's patch system lets a single feedforward network run on a patch containing multiple tokens, and it effectively tackles the scaling problem of self-attention. Specifically, the Megabyte architecture makes three major improvements to the Transformer for long-sequence modeling:

- Sub-quadratic self-attention. Most work on long-sequence models has focused on mitigating the quadratic cost of self-attention. Megabyte instead decomposes a long sequence into two shorter sequences, each of which remains tractable even when the original sequence is very long.
- Per-patch feedforward layers. In GPT-3-sized models, over 98% of FLOPs are spent computing position-wise feedforward layers. Megabyte uses a large feedforward layer per patch, achieving larger, more capable models at the same cost. With a patch size of P, where a baseline Transformer applies the same feedforward layer with m parameters P times, Megabyte can apply a layer with mP parameters once at the same cost.
- Parallelism in decoding. Transformers must perform all computation serially during generation, because the input at each time step is the output of the previous one. By generating patch representations in parallel, Megabyte allows greater parallelism during generation. For example, a Megabyte model with 1.5B parameters generates sequences 40% faster than a standard 350M Transformer, while also achieving better perplexity when trained with the same amount of compute.

Megabyte far outperforms other byte-level models and delivers results competitive with state-of-the-art models trained on subwords. For comparison with Megabyte's million-byte sequences, OpenAI's GPT-4 has a 32,000-token limit and Anthropic's Claude has a 100,000-token limit.
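The first two improvements, plus the decoding point, can be illustrated with a rough cost count. This is a simplified sketch, not the paper's cost model: it counts only attention scores, treats the global model as attending over T/P patch positions and the local model as attending within each patch of P bytes, assumes T is a multiple of P, and approximates feed-forward compute as parameters times applications. The helper names and the value of m are hypothetical.

```python
def megabyte_attention_scores(T: int, P: int) -> int:
    """Rough two-level count (hypothetical helper).

    Global model: attention over K = T / P patch positions -> K * K scores.
    Local model: attention inside each of the K patches -> K * P * P scores.
    """
    if T % P != 0:
        raise ValueError("this sketch assumes T is a multiple of P")
    K = T // P
    return K * K + K * P * P


def ffn_cost_units(params: int, applications: int) -> int:
    """Approximate FFN compute as (parameters) x (number of times the layer is applied)."""
    return params * applications


T, P, m = 1_000_000, 100, 4_096  # here P equals T ** (1/3); m is an arbitrary example size

print(T * T)                            # dense attention: 1000000000000
print(megabyte_attention_scores(T, P))  # two-level count: 200000000

# Same compute: an m-parameter FFN applied P times vs. one mP-parameter FFN applied once.
print(ffn_cost_units(m, P) == ffn_cost_units(m * P, 1))  # True

# Serial steps of the large (global) model when generating T bytes:
# a standard Transformer runs once per byte, the global model once per patch.
# (The small local model still runs once per byte.)
print(T, T // P)  # 1000000 10000
```

With P chosen near T^(1/3), both terms of the two-level count grow roughly like T^(4/3) rather than T^2, which is the sense in which the attention cost becomes sub-quadratic. Real models add hidden dimensions, multiple layers, and other overheads that this count ignores.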
In addition, in terms of computational efficiency, across a range of model sizes and sequence lengths, Megabyte requires fewer FLOPs per token than Transformers and Linear Transformers of the same size, so a larger model can be used at the same computational cost.

Together, these improvements make it possible to train larger, better-performing models under the same compute budget, scale to very long sequences, and improve generation speed during deployment.

With the AI arms race in full swing, models keep getting more capable and their parameter counts keep growing.

While GPT-3.5 has 175B parameters, some speculate that the more powerful GPT-4 has around 1 trillion.

OpenAI CEO Sam Altman also recently suggested a change in strategy. He said that the company is considering abandoning the training of huge models and focusing on other performance optimizations.

He likened the future of AI models to iPhone chips, whose raw technical specifications most consumers know nothing about.

Meta researchers believe their innovative architecture comes at the right time, but admit there are other avenues for optimization.

For example, more efficient encoder models that use patching, decoder models that decompose sequences into smaller blocks, and preprocessing sequences into compressed tokens could all extend the capabilities of the existing Transformer architecture to build next-generation models.

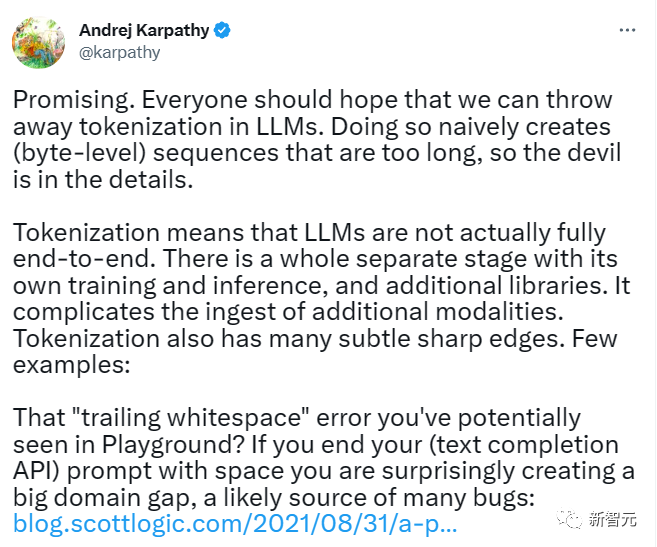

Former Tesla AI director Andrej Karpathy also expressed his views on this paper. He wrote on Twitter:

This is promising. Everyone should hope that we can throw away tokenization in large models, although doing so naively produces byte sequences that are too long.
