GPT4ALL: The ultimate open source large language model solution

There is a growing ecosystem of open source language models that provides individuals with comprehensive resources to create language applications for research and commercial purposes.

This article takes a deep dive into GPT4ALL, which goes beyond any single use case by providing building blocks that let anyone develop ChatGPT-like chatbots.

GPT4ALL provides the support you need to work with state-of-the-art open source large language models. With it, you can access open source models and datasets, train and run them using the provided code, interact with them through a web interface or desktop application, connect to a LangChain backend for distributed computing, and use the Python API for easy integration.

The developers recently launched the Apache 2.0-licensed GPT4All-J chatbot, which is trained on a large, curated corpus of assistant interactions, including word problems, multi-turn conversations, code, poems, songs, and stories. To make it more accessible, they also released Python bindings and a chat UI, allowing almost anyone to run the model on a CPU.

You can try it yourself by installing a local chat client on your desktop.

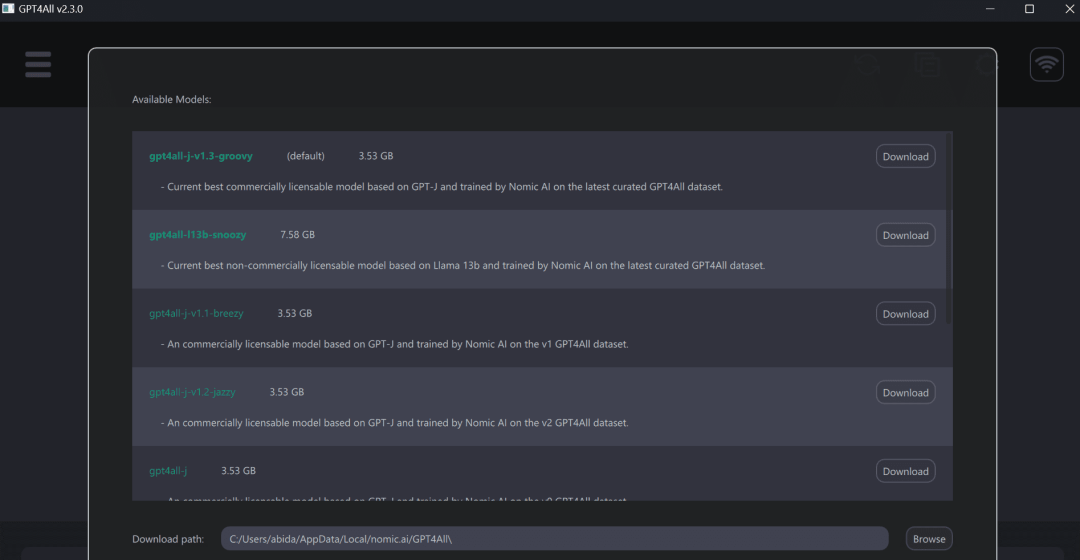

Then run the GPT4ALL application and download the model of your choice. You can also download a model manually here (https://github.com/nomic-ai/gpt4all-chat#manual-download-of-models) and place it in the location shown in the GUI's model download dialog.

GPT4ALL runs well on a laptop and returns fast, accurate responses. It is also very user-friendly, so even non-technical users can pick it up easily.

GPT4ALL supports Python, TypeScript, a web chat interface, and LangChain backends.

In this section, we will look at the Python API for accessing models using nomic-ai/pygpt4all.

```bash
pip install pygpt4all
```

```python
from pygpt4all.models.gpt4all import GPT4All

# Print each piece of generated text as soon as the model produces it
def new_text_callback(text):
    print(text, end="")

# Load the local model file and generate up to 55 tokens from the prompt
model = GPT4All("./models/ggml-gpt4all-l13b-snoozy.bin")
model.generate("Once upon a time, ", n_predict=55, new_text_callback=new_text_callback)
```

Additionally, the Hugging Face Transformers library can be used to download the model and run inference. Just provide the model name and revision. The examples in this article access the latest, improved v1.3-groovy model.

```python
from transformers import AutoModelForCausalLM

# Download GPT4All-J from the Hugging Face Hub, pinned to the v1.3-groovy revision
model = AutoModelForCausalLM.from_pretrained("nomic-ai/gpt4all-j", revision="v1.3-groovy")
```

The nomic-ai/gpt4all repository contains the source code, model weights, datasets, and documentation for training and inference. You can try out some models first and then integrate them using the Python client or LangChain.
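Loading the weights with `AutoModelForCausalLM` is only half of inference; producing text also needs a tokenizer and a call to `generate`. The following is a minimal sketch, assuming the tokenizer is published in the same `nomic-ai/gpt4all-j` repository at the same revision and that PyTorch is installed. `generate_text` is a hypothetical helper name, and the first call triggers a large model download.

```python
def generate_text(prompt: str, max_new_tokens: int = 55, revision: str = "v1.3-groovy") -> str:
    """Generate a continuation of `prompt` with GPT4All-J via Hugging Face Transformers (sketch)."""
    # Imported inside the function so this file can be loaded without transformers/torch installed.
    from transformers import AutoModelForCausalLM, AutoTokenizer

    model_id = "nomic-ai/gpt4all-j"
    # Assumption: the tokenizer lives in the same repo and revision as the model weights.
    tokenizer = AutoTokenizer.from_pretrained(model_id, revision=revision)
    model = AutoModelForCausalLM.from_pretrained(model_id, revision=revision)

    # Turn the prompt into PyTorch tensors (input_ids and attention_mask).
    inputs = tokenizer(prompt, return_tensors="pt")
    # max_new_tokens caps how many tokens are generated after the prompt.
    output_ids = model.generate(**inputs, max_new_tokens=max_new_tokens)
    # Decode the first (and only) sequence back to text; the prompt is included in the result.
    return tokenizer.decode(output_ids[0], skip_special_tokens=True)
```

Usage would look like `print(generate_text("Once upon a time, "))`. Loading the tokenizer and model inside the function keeps the sketch short; in a real application you would load them once and reuse them across calls.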

GPT4ALL also provides a CPU-quantized GPT4All model checkpoint. To run it, use the following command:

Linux:

```bash
cd chat; ./gpt4all-lora-quantized-linux-x86
```
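If you would rather start the quantized chat binary from a Python script than from a shell, the two steps of the Linux command (enter `chat`, then run the binary) map directly onto `subprocess.run` with its `cwd` argument. This is a minimal sketch that assumes the repository is already cloned and the Linux binary sits under `chat/`; `chat_command` and `run_chat` are hypothetical helper names, not part of GPT4ALL.

```python
import subprocess
from pathlib import Path
from typing import List, Tuple

# Binary name taken from the Linux command above.
LINUX_BINARY = "gpt4all-lora-quantized-linux-x86"


def chat_command(repo_root: str = ".") -> Tuple[Path, List[str]]:
    """Return (working directory, argv) equivalent to `cd chat; ./gpt4all-lora-quantized-linux-x86`."""
    chat_dir = Path(repo_root) / "chat"
    return chat_dir, ["./" + LINUX_BINARY]


def run_chat(repo_root: str = ".") -> int:
    """Launch the interactive chat binary and return its exit code."""
    chat_dir, argv = chat_command(repo_root)
    binary = chat_dir / LINUX_BINARY
    # Fail early with a clear message instead of a less specific OSError from subprocess.
    if not binary.is_file():
        raise FileNotFoundError(f"{binary} not found")
    # cwd=chat_dir mirrors `cd chat`; on POSIX the relative "./..." path is resolved against cwd.
    return subprocess.run(argv, cwd=chat_dir).returncode
```

Calling `run_chat("path/to/gpt4all")` hands the terminal to the chat program until it exits. Keeping the command construction in `chat_command` separate from the launch makes it easy to inspect or adapt the command without starting the model.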

The above is the detailed content of GPT4ALL: The ultimate open source large language model solution. For more information, please follow other related articles on the PHP Chinese website!