Original title: RoadBEV: Road Surface Reconstruction in Bird's Eye View

Paper link: https://arxiv.org/pdf/2404.06605.pdf

Code link: https://github.com/ztsrxh/RoadBEV

Author affiliation: Tsinghua University, University of California, Berkeley

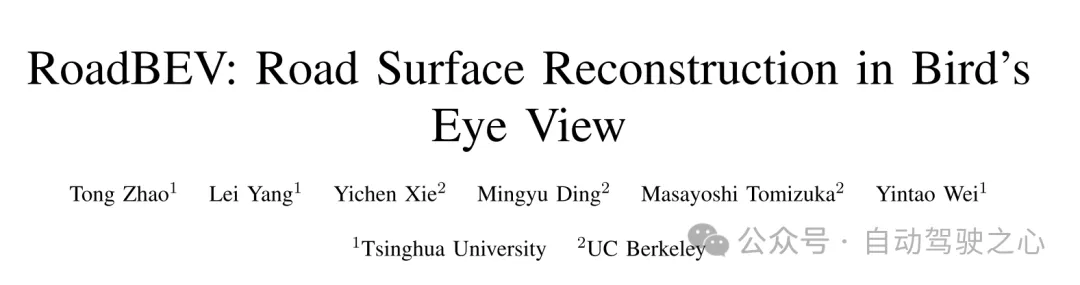

However, road surface reconstruction (RSR) from the image perspective has inherent shortcomings. Estimating depth for a given pixel amounts to searching for the optimal bin along the direction perpendicular to the image plane (shown as the orange points in Figure 1(b)). There is an angular deviation between this depth direction and the road surface, so changes and trends in the road profile are not aligned with the search direction, and cues about road elevation changes are sparse in the depth view. Furthermore, the depth search range is the same for every pixel, which leads the model to capture the global geometric hierarchy rather than local surface structure. Because the depth search is global but coarse, fine road elevation information is lost. Since this paper is concerned with elevation in the vertical direction, effort spent along the depth direction is wasted. In perspective views, texture details at long range are also lost, which makes efficient depth regression harder unless additional prior constraints are introduced [12].

Estimating road elevation from a top view (i.e., bird's-eye view, BEV) is a natural idea because elevation essentially describes variation in the vertical direction. Bird's-eye view is an effective paradigm for representing multi-modal and multi-view data in unified coordinates [13], [14]. Recent state-of-the-art results on 3D object detection and segmentation have been achieved by bird's-eye-view approaches [15] rather than perspective-view ones; these approaches apply estimation heads to view-transformed image features. Figure 1 illustrates the motivation for this paper. Instead of focusing on the global structure in the image view, reconstruction in bird's-eye view directly identifies road features within a specific small range in the vertical direction. Road features projected into bird's-eye view densely reflect structural and profile changes, which facilitates an efficient and refined search. The influence of perspective effects is also suppressed because the road is represented uniformly on a plane perpendicular to the viewing direction. Road reconstruction based on bird's-eye-view features is therefore expected to achieve higher performance.

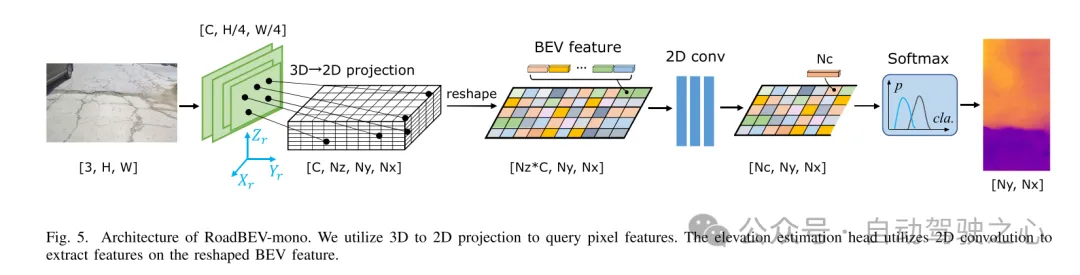

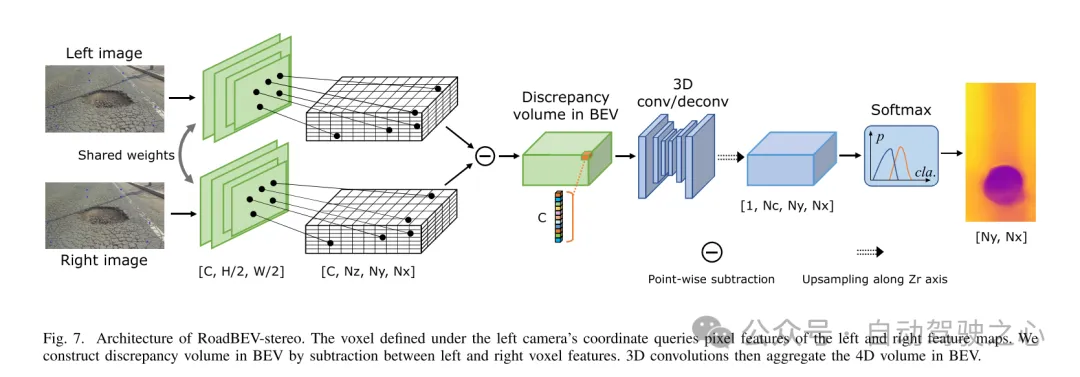

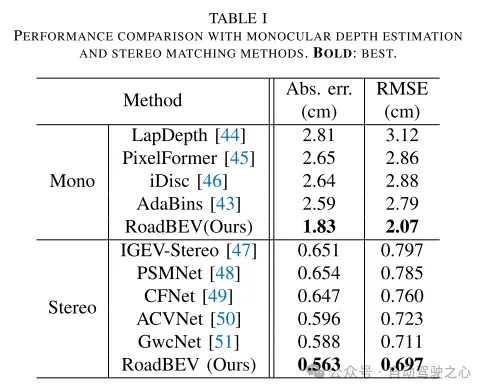

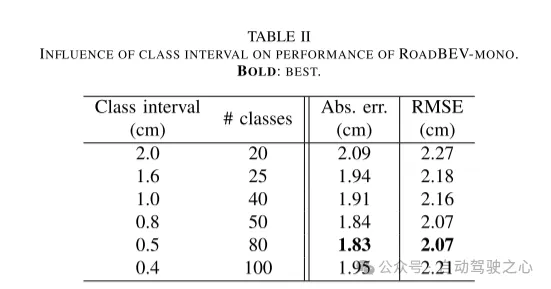

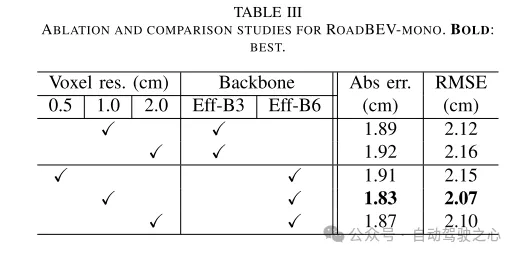

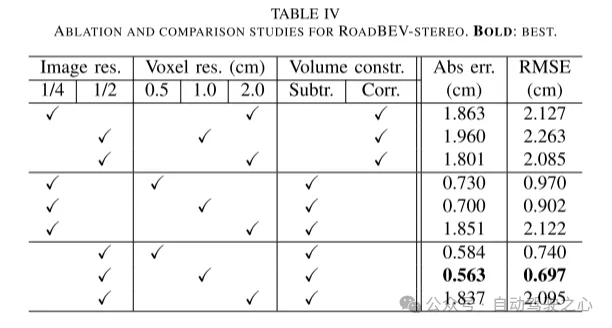

This paper reconstructs the road surface in BEV to address the problems identified above. In particular, it focuses on road geometry, namely elevation. To make use of both monocular and stereo images and to demonstrate the broad feasibility of bird's-eye-view perception, the paper proposes two sub-models named RoadBEV-mono and RoadBEV-stereo. Following the bird's-eye-view paradigm, the paper defines voxels of interest that cover the potential road relief. These voxels query pixel features through 3D-2D projection. For RoadBEV-mono, the paper introduces an elevation estimation head on the reshaped voxel features. The structure of RoadBEV-stereo is consistent with stereo matching in the image view: based on the left and right voxel features, a 4D cost volume is constructed in bird's-eye view and aggregated through 3D convolution. Elevation regression is treated as classification over predefined bins to enable more efficient model learning. The paper validates these models on a real-world dataset previously published by the authors, showing clear advantages over traditional monocular depth estimation and stereo matching methods.
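The idea of treating elevation regression as classification over predefined bins can be sketched as follows. This is a minimal illustration, not the authors' implementation: the elevation range, the number of bins, the softmax-expectation decoding, and all function names are assumptions chosen for clarity.

```python
import math


def make_bin_centers(z_min, z_max, num_bins):
    """Evenly spaced bin centers covering [z_min, z_max] (hypothetical range, in meters)."""
    width = (z_max - z_min) / num_bins
    return [z_min + (i + 0.5) * width for i in range(num_bins)]


def elevation_to_bin(z, z_min, z_max, num_bins):
    """Class label for a ground-truth elevation: index of its bin, clamped to the valid range."""
    width = (z_max - z_min) / num_bins
    idx = int((z - z_min) // width)
    return min(max(idx, 0), num_bins - 1)


def softmax(logits):
    """Numerically stable softmax over a list of per-bin scores."""
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def expected_elevation(logits, centers):
    """Decode a continuous elevation as the probability-weighted mean of bin centers."""
    probs = softmax(logits)
    return sum(p * c for p, c in zip(probs, centers))


# Example with an assumed +/-0.2 m range split into 4 bins for one BEV grid cell.
centers = make_bin_centers(-0.2, 0.2, 4)
label = elevation_to_bin(0.03, -0.2, 0.2, 4)
estimate = expected_elevation([0.1, 0.5, 3.0, 0.4], centers)
```

Training on bin labels gives the network a bounded, discrete target per grid cell, while the expectation step recovers a continuous elevation value at inference time.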

Figure 1. Motivation for this paper. (a) For both monocular and stereo configurations, the proposed reconstruction in bird's-eye view (BEV) outperforms reconstruction in the image view. (b) When depth is estimated in the image view, the search direction deviates from the road elevation direction. In the depth view, road profile features are sparse and potholes are not easily identified. (c) In bird's-eye view, profile variations such as potholes, curb steps, and even ruts can be accurately captured. Road elevation features in the vertical direction are denser and easier to identify.

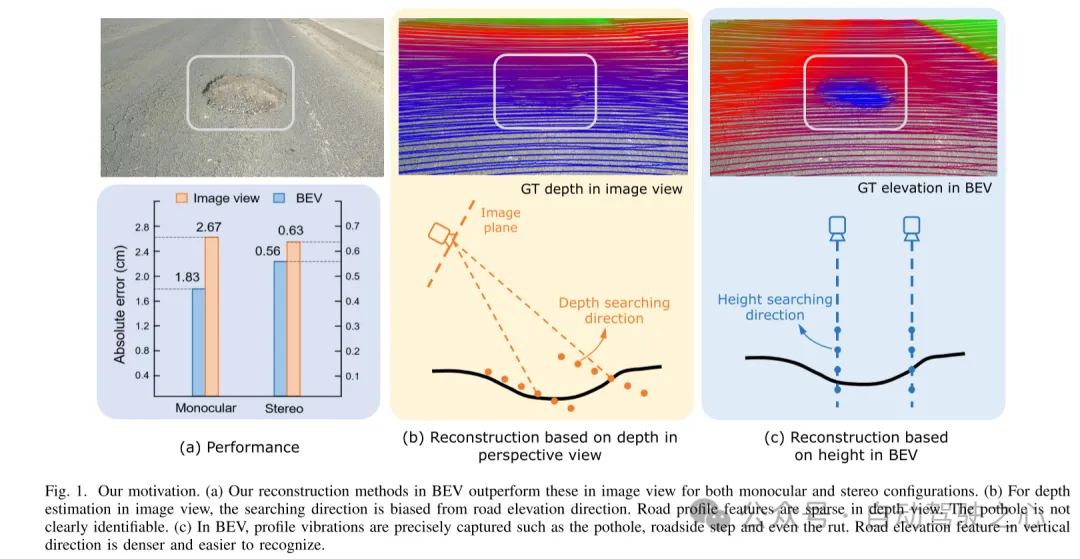

Figure 2. Coordinate representation and generation of ground-truth (GT) elevation labels. (a) Coordinates. (b) Region of interest (ROI) in image view. (c) ROI in bird's-eye view. (d) Generating GT labels in the grid.

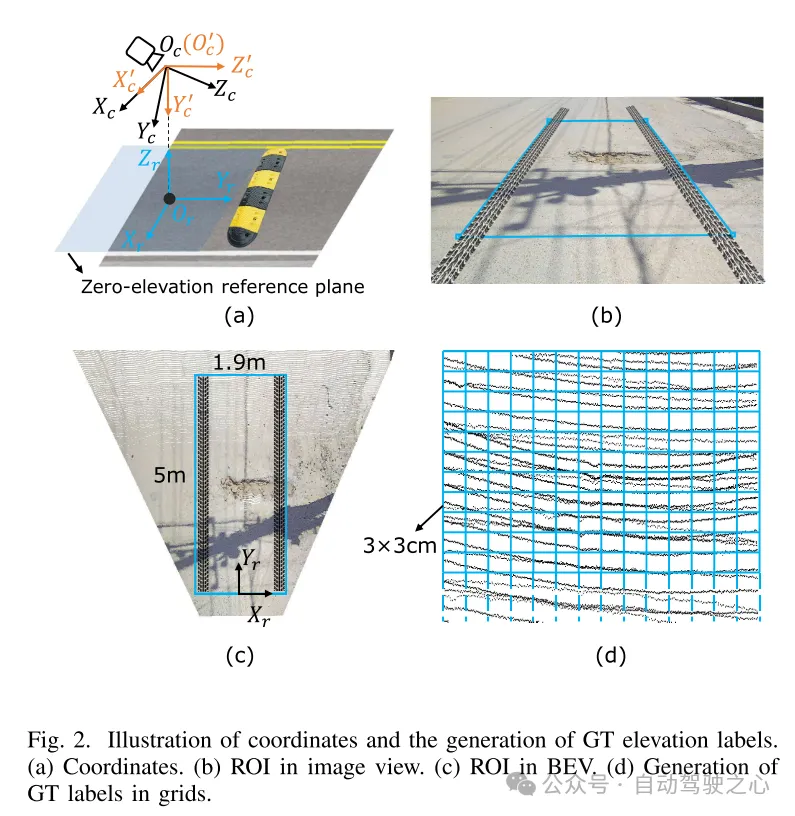

Figure 3. Example of road image and ground truth (GT) elevation map.

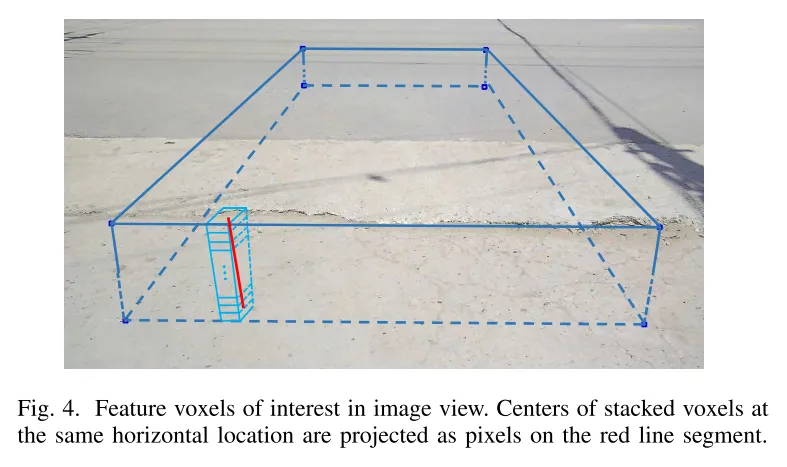

Figure 4. Feature voxels of interest in image view. The centers of stacked voxels located at the same horizontal position are projected to pixels on the red line segment.
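The projection of stacked voxel centers into the image can be illustrated with a pinhole camera model. The sketch below is an assumption-laden example rather than the paper's code: it assumes camera coordinates with x to the right, y downward, and z forward, a voxel stack aligned with the camera's y axis, and hypothetical intrinsics `fx`, `fy`, `cx`, `cy`.

```python
def project_point(point, fx, fy, cx, cy):
    """Pinhole projection of a 3D camera-frame point (x, y, z) to pixel coordinates (u, v)."""
    x, y, z = point
    if z <= 0:
        raise ValueError("point must be in front of the camera (z > 0)")
    return (fx * x / z + cx, fy * y / z + cy)


def stacked_voxel_centers(x, z, y_min, y_max, num_voxels):
    """Centers of voxels stacked vertically at one horizontal position (x, z)."""
    step = (y_max - y_min) / num_voxels
    return [(x, y_min + (i + 0.5) * step, z) for i in range(num_voxels)]


def project_stack(x, z, y_min, y_max, num_voxels, fx, fy, cx, cy):
    """Project every voxel center in the stack; each result is the pixel it would query."""
    centers = stacked_voxel_centers(x, z, y_min, y_max, num_voxels)
    return [project_point(c, fx, fy, cx, cy) for c in centers]


# Hypothetical setup: a stack 5 m ahead, 0.5 m to the right, spanning 1.0 m to 1.4 m below the camera.
pixels = project_stack(0.5, 5.0, 1.0, 1.4, 4, 1000.0, 1000.0, 640.0, 360.0)
```

Under these assumptions every center in the stack shares the same column `u` and differs only in row `v`, which is the line-segment pattern the figure describes.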

Figure 5. Architecture of RoadBEV-mono. This paper uses 3D to 2D projection to query pixel features. The elevation estimation head uses 2D convolution to extract features on the reshaped Bird's Eye View (BEV) features.

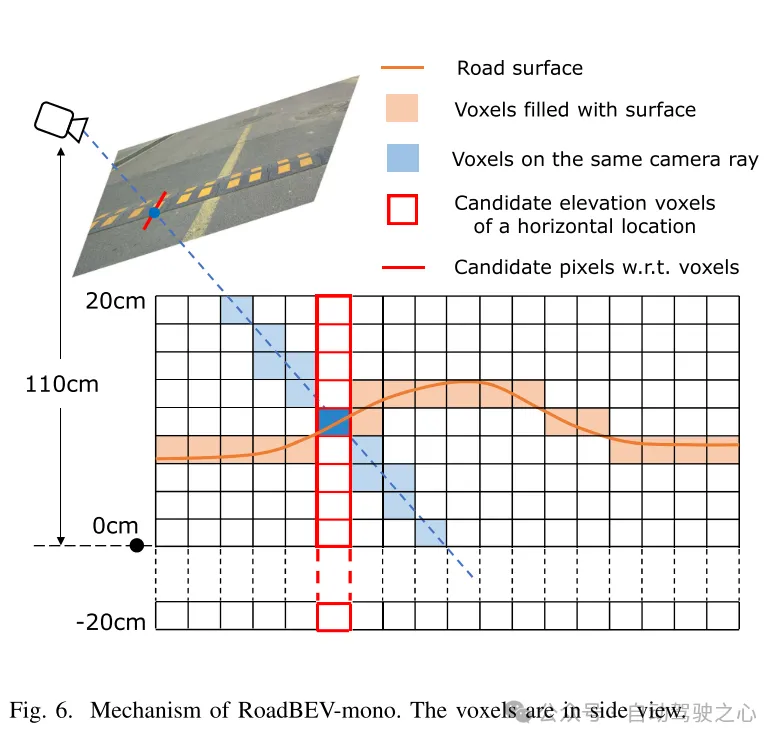

Figure 6. Mechanism of RoadBEV-mono. Voxels are shown in side view.

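Figure 7. Architecture of RoadBEV-stereo. Voxels defined in the left camera coordinate system query pixel features from the left and right feature maps. This paper constructs a difference volume in bird's-eye view (BEV) by subtracting the left and right voxel features. 3D convolution then aggregates the 4D volume in bird's-eye view.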
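A toy version of the difference volume may help make the stereo pipeline concrete. This sketch assumes a rectified stereo pair in which the right camera is shifted by `baseline` along the x axis, uses nearest-neighbor sampling for brevity, and stores feature maps as nested lists; the function names, the sampling scheme, and the tiny intrinsics are all illustrative assumptions, not details taken from the paper.

```python
def project(point, fx, fy, cx, cy, baseline=0.0):
    """Project a left-camera-frame point into the left (baseline=0) or right camera."""
    x, y, z = point
    return (fx * (x - baseline) / z + cx, fy * y / z + cy)


def sample_nearest(feature_map, u, v):
    """Nearest-neighbor lookup in feature_map[row][col] -> list of channels; zeros if outside."""
    rows, cols = len(feature_map), len(feature_map[0])
    channels = len(feature_map[0][0])
    col, row = int(round(u)), int(round(v))
    if 0 <= row < rows and 0 <= col < cols:
        return list(feature_map[row][col])
    return [0.0] * channels


def difference_volume(voxel_centers, left_map, right_map, fx, fy, cx, cy, baseline):
    """Per-voxel feature difference (left minus right) for a flat list of voxel centers."""
    volume = []
    for center in voxel_centers:
        u_l, v_l = project(center, fx, fy, cx, cy)
        u_r, v_r = project(center, fx, fy, cx, cy, baseline)
        left_feat = sample_nearest(left_map, u_l, v_l)
        right_feat = sample_nearest(right_map, u_r, v_r)
        volume.append([a - b for a, b in zip(left_feat, right_feat)])
    return volume


# Synthetic single-channel maps: the right view is the left view shifted by 2 pixels.
left_map = [[[float(c)] for c in range(8)] for _ in range(4)]
right_map = [[[float(c) + 2.0] for c in range(8)] for _ in range(4)]
centers = [(1.0, 0.0, 1.0), (1.0, 0.0, 2.0)]
volume = difference_volume(centers, left_map, right_map, 2.0, 2.0, 4.0, 2.0, 1.0)
```

In this synthetic setup the first voxel sits at the depth consistent with the 2-pixel shift, so its left and right features cancel, while the second voxel does not match and leaves a nonzero residual. A real model would reshape such per-voxel differences into a 4D volume before 3D convolution.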

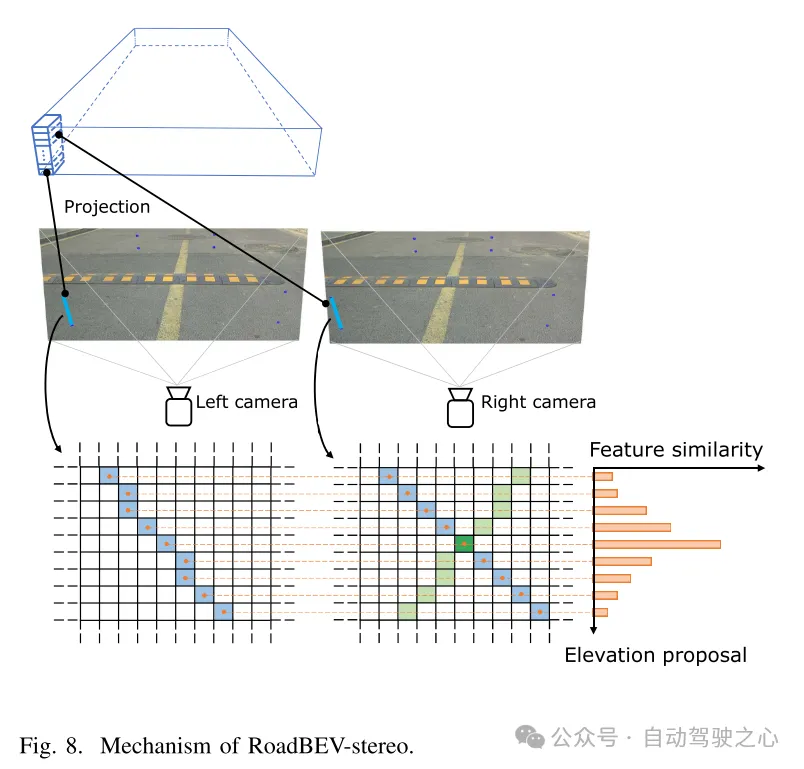

Figure 8. Mechanism of RoadBEV-stereo.

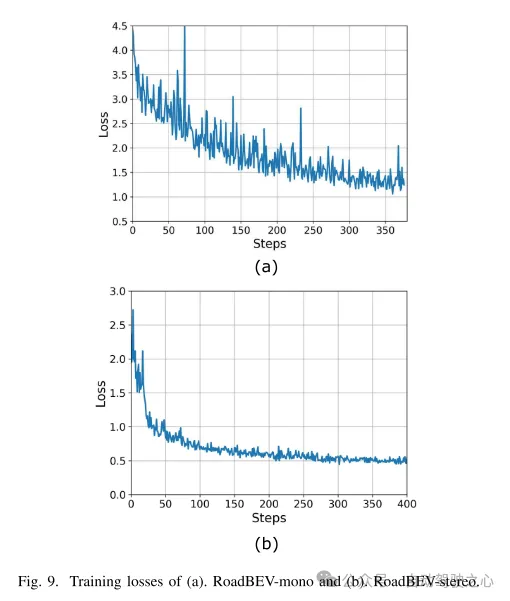

Figure 9. Training loss of (a) RoadBEV-mono and (b) RoadBEV-stereo.

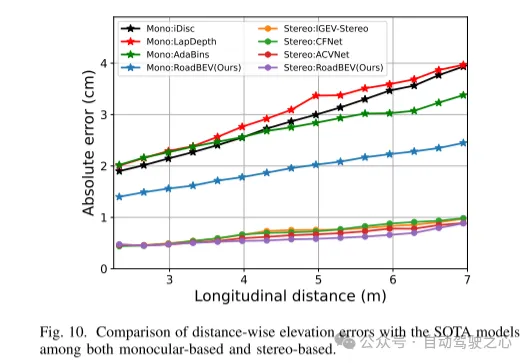

Figure 10. Comparison of elevation error along the distance direction with monocular- and stereo-based SOTA models.

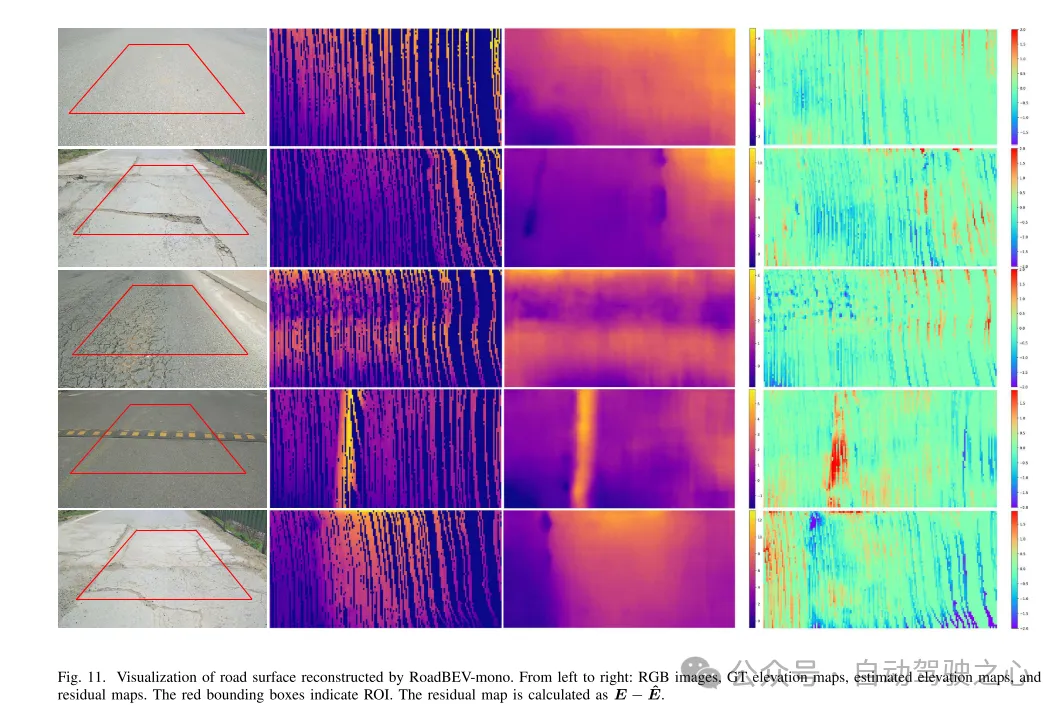

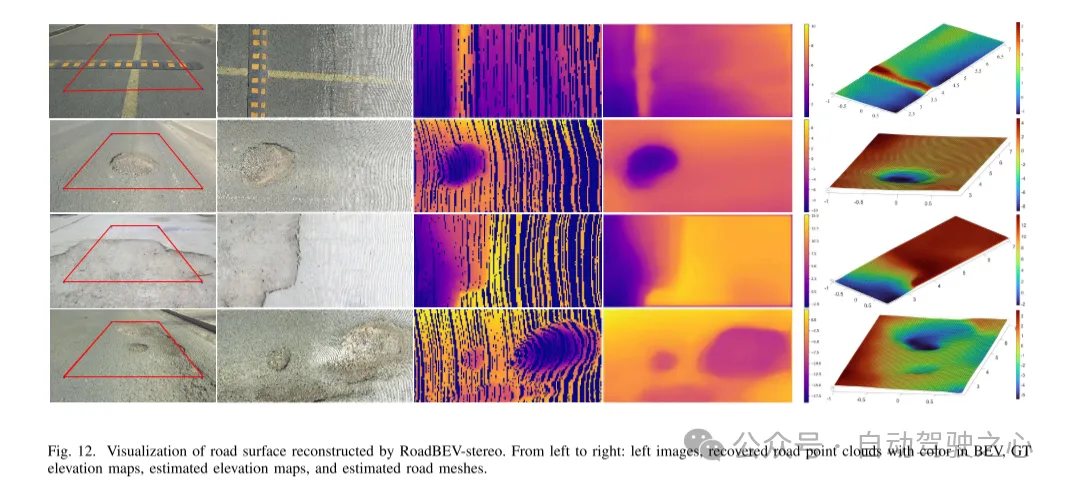

Figure 11. Visualization of road surfaces reconstructed by RoadBEV-mono.

Figure 12. Visualization of road surfaces reconstructed by RoadBEV-stereo.

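This paper reconstructs road surface elevation in bird's-eye view for the first time. It proposes and analyzes two models based on monocular and stereo images, named RoadBEV-mono and RoadBEV-stereo respectively. The paper finds that monocular estimation and stereo matching in BEV follow the same mechanisms as in the perspective view, and that they improve by narrowing the search range and mining features directly along the elevation direction. Comprehensive experiments on a real-world dataset verify the feasibility and superiority of the proposed BEV volumes, estimation heads, and parameter settings. With a monocular camera, reconstruction performance in BEV improves by 50% compared with the perspective view. Meanwhile, in BEV, performance with a stereo camera is three times that with a monocular camera. The paper provides in-depth analysis and guidance on the models. Its pioneering exploration also offers a valuable reference for further research and applications related to BEV perception, 3D reconstruction, and 3D detection.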
