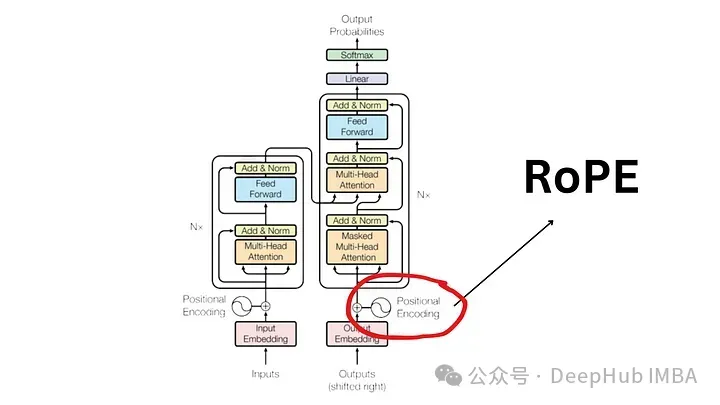

Since the "Attention Is All You Need" paper published in 2017, the Transformer architecture has been the cornerstone of the natural language processing (NLP) field. Its design has remained largely unchanged for years, with 2022 marking a major development in the field with the introduction of Rotary Position Encoding (RoPE).

Rotated position embedding is the most advanced NLP position embedding technology. Most popular large-scale language models such as Llama, Llama2, PaLM, and CodeGen already use it. In this article, we’ll take a deep dive into what rotational positional encodings are, and how they neatly blend the advantages of absolute and relative positional embeddings.

In order to understand the importance of RoPE, let’s first review why positional encoding Encoding is crucial. Transformer models, by their inherent design, do not take into account the order of input tokens.

For example, phrases like "the dog chases the pig" and "the pig chases the dogs", although they have different meanings, are considered indistinguishable because they are treated as an unordered set of tokens. . In order to maintainsequence information and its meaning, a representation is needed to integrate positional information into the model.

In order to encode the position in the sentence, another tool is required using a vector with the same dimensions, where each A vector represents a position in a sentence. For example, specify a specific vector for the second word in a sentence. Therefore, each sentence position has its unique vector. The input to the Transformer layer is then formed by combining the word embeddings with the embeddings of their corresponding positions.

There are two main ways to generate these embeddings:

Although widely used, absolute positional embedding is not without its disadvantages:

The relative position does not focus on the absolute position of the note in the sentence, but on the relationship between the note pairs. distance. This method does not add position vectors directly to the word vectors. Instead, the attention mechanism is changed to incorporate relative position information.

T5 (Text-to-Text Transfer Transformer) is a well-known model that utilizes relative position embedding. T5 introduces a subtle way of handling position information:

尽管它们在理论上很有吸引力,但相对位置编码得问题很严重

由于这些工程复杂性,位置编码未得到广泛采用,特别是在较大的语言模型中。

RoPE 代表了一种编码位置信息的新方法。传统方法中无论是绝对方法还是相对方法,都有其局限性。绝对位置编码为每个位置分配一个唯一的向量,虽然简单但不能很好地扩展并且无法有效捕获相对位置;相对位置编码关注标记之间的距离,增强模型对标记关系的理解,但使模型架构复杂化。

RoPE巧妙地结合了两者的优点。允许模型理解标记的绝对位置及其相对距离的方式对位置信息进行编码。这是通过旋转机制实现的,其中序列中的每个位置都由嵌入空间中的旋转表示。RoPE 的优雅之处在于其简单性和高效性,这使得模型能够更好地掌握语言语法和语义的细微差别。

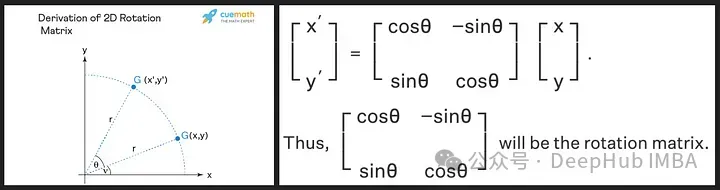

旋转矩阵源自我们在高中学到的正弦和余弦的三角性质,使用二维矩阵应该足以获得旋转矩阵的理论,如下所示!

我们看到旋转矩阵保留了原始向量的大小(或长度),如上图中的“r”所示,唯一改变的是与x轴的角度。

RoPE 引入了一个新颖的概念。它不是添加位置向量,而是对词向量应用旋转。旋转角度 (θ) 与单词在句子中的位置成正比。第一个位置的向量旋转 θ,第二个位置的向量旋转 2θ,依此类推。这种方法有几个好处:

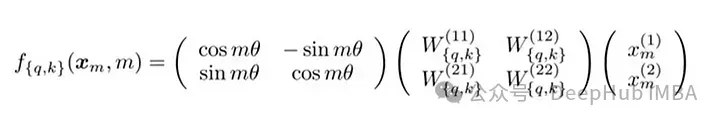

RoPE的技术实现涉及到旋转矩阵。在 2D 情况下,论文中的方程包含一个旋转矩阵,该旋转矩阵将向量旋转 Mθ 角度,其中 M 是句子中的绝对位置。这种旋转应用于 Transformer 自注意力机制中的查询向量和键向量。

对于更高维度,向量被分成 2D 块,并且每对独立旋转。这可以被想象成一个在空间中旋转的 n 维。听着这个方法好好像实现是复杂,其实不然,这在 PyTorch 等库中只需要大约十行代码就可以高效的实现。

import torch import torch.nn as nn class RotaryPositionalEmbedding(nn.Module): def __init__(self, d_model, max_seq_len): super(RotaryPositionalEmbedding, self).__init__() # Create a rotation matrix. self.rotation_matrix = torch.zeros(d_model, d_model, device=torch.device("cuda")) for i in range(d_model): for j in range(d_model): self.rotation_matrix[i, j] = torch.cos(i * j * 0.01) # Create a positional embedding matrix. self.positional_embedding = torch.zeros(max_seq_len, d_model, device=torch.device("cuda")) for i in range(max_seq_len): for j in range(d_model): self.positional_embedding[i, j] = torch.cos(i * j * 0.01) def forward(self, x): """Args:x: A tensor of shape (batch_size, seq_len, d_model). Returns:A tensor of shape (batch_size, seq_len, d_model).""" # Add the positional embedding to the input tensor. x += self.positional_embedding # Apply the rotation matrix to the input tensor. x = torch.matmul(x, self.rotation_matrix) return x

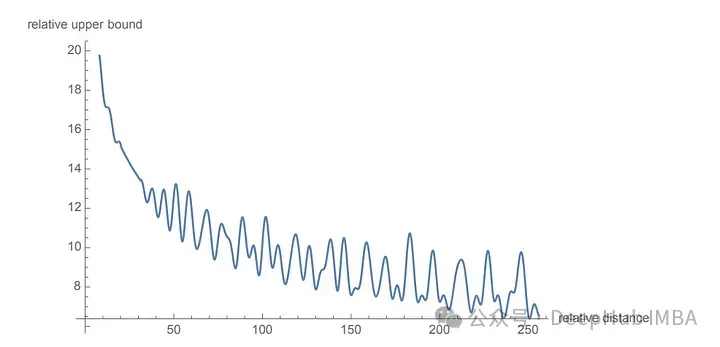

为了旋转是通过简单的向量运算而不是矩阵乘法来执行。距离较近的单词更有可能具有较高的点积,而距离较远的单词则具有较低的点积,这反映了它们在给定上下文中的相对相关性。

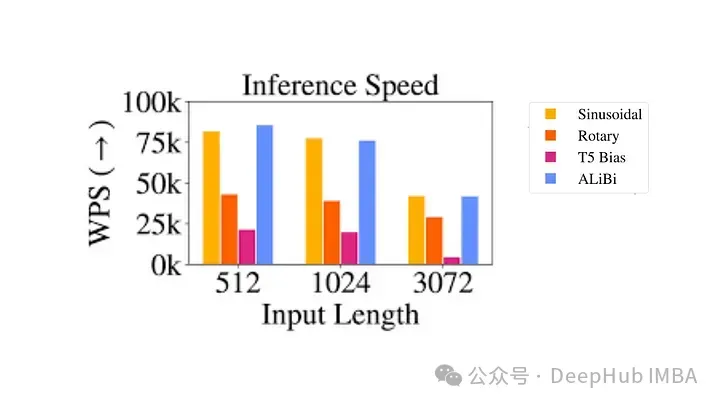

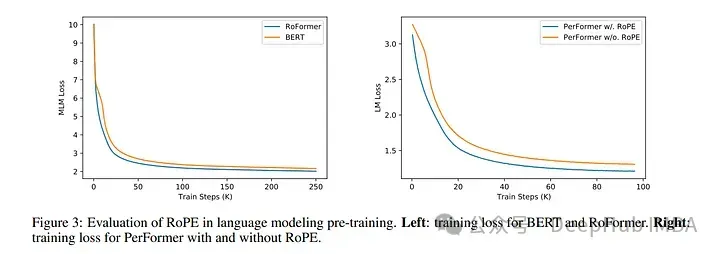

使用 RoPE 对 RoBERTa 和 Performer 等模型进行的实验表明,与正弦嵌入相比,它的训练时间更快。并且该方法在各种架构和训练设置中都很稳健。

最主要的是RoPE是可以外推的,也就是说可以直接处理任意长的问题。在最早的llamacpp项目中就有人通过线性插值RoPE扩张,在推理的时候直接通过线性插值将LLAMA的context由2k拓展到4k,并且性能没有下降,所以这也可以证明RoPE的有效性。

代码如下:

import transformers old_init = transformers.models.llama.modeling_llama.LlamaRotaryEmbedding.__init__ def ntk_scaled_init(self, dim, max_position_embeddings=2048, base=10000, device=None): #The method is just these three linesmax_position_embeddings = 16384a = 8 #Alpha valuebase = base * a ** (dim / (dim-2)) #Base change formula old_init(self, dim, max_position_embeddings, base, device) transformers.models.llama.modeling_llama.LlamaRotaryEmbedding.__init__ = ntk_scaled_init

旋转位置嵌入代表了 Transformer 架构的范式转变,提供了一种更稳健、直观和可扩展的位置信息编码方式。

RoPE不仅解决了LLM context过长之后引起的上下文无法关联问题,并且还提高了训练和推理的速度。这一进步不仅增强了当前的语言模型,还为 NLP 的未来创新奠定了基础。随着我们不断解开语言和人工智能的复杂性,像 RoPE 这样的方法将有助于构建更先进、更准确、更类人的语言处理系统。

The above is the detailed content of Detailed explanation of rotational position encoding RoPE commonly used in large language models: why is it better than absolute or relative position encoding?. For more information, please follow other related articles on the PHP Chinese website!