Google has released a new video framework:

You only needa picture of you and a recording of your speech, and you can getA lifelike video of my speech.

The video duration is variable, and the current example seen is up to 10s.

You can see that whether it is mouth shape or facial expression, it is very natural.

If the input image covers the entire upper body, it can also be matched with rich gestures:

Netizens see After finishing it, he said:

With it, we no longer need to fix our hair or put on clothes for online video conferences in the future.

Well, just take a portrait and record the speech audio (manual dog head)

This framework is calledVLOGGER.

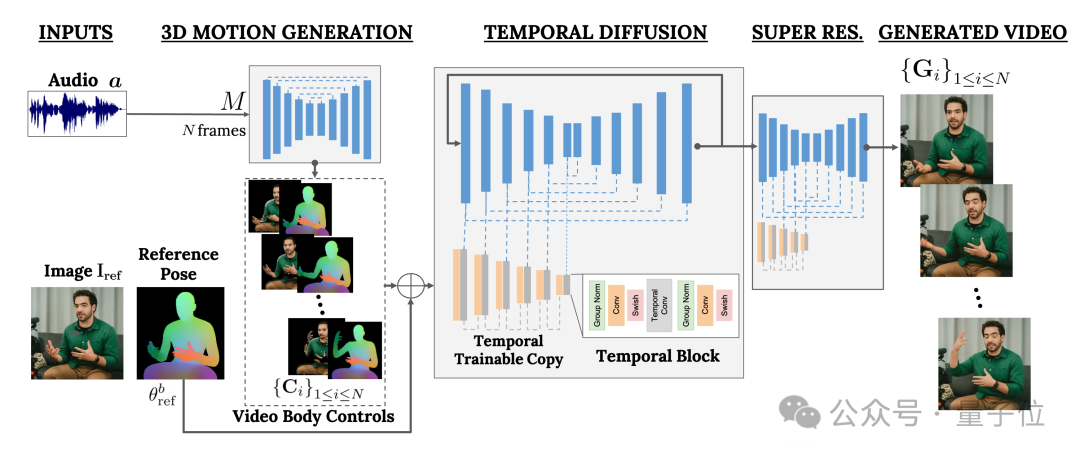

It is mainly based on the diffusion model and contains two parts:

One is the random human-to-3D-motion (human-to-3d-motion) Diffusion model.

The other is a new diffusion architecture for enhancing text-to-image models.

Among them, the former is responsible for using the audio waveform as input to generate the character's body control actions, including eyes, expressions and gestures, overall body posture, etc.

The latter is a temporal dimension image-to-image model that is used to extend the large-scale image diffusion model and use the just predicted actions to generate corresponding frames.

In order to make the results conform to a specific character image, VLOGGER also takes the pose diagram of the parameter image as input.

The training of VLOGGER is completed on a very large data set (named MENTOR) .

How big is it? It has a total length of 2,200 hours and contains a total of 800,000 character videos.

Among them, the video duration of the test set is also 120 hours long, with a total of 4,000 characters.

According to Google, the most outstanding performance of VLOGGER is its diversity:

As shown in the figure below, the darker the color of the final pixel image (red) represents The richer the action.

Compared with previous similar methods in the industry, the biggest advantage of VLOGGER is that it does not require training for each person, does not rely on face detection and cropping, and The generated video is very complete (including both face and lips, as well as body movements) and so on.

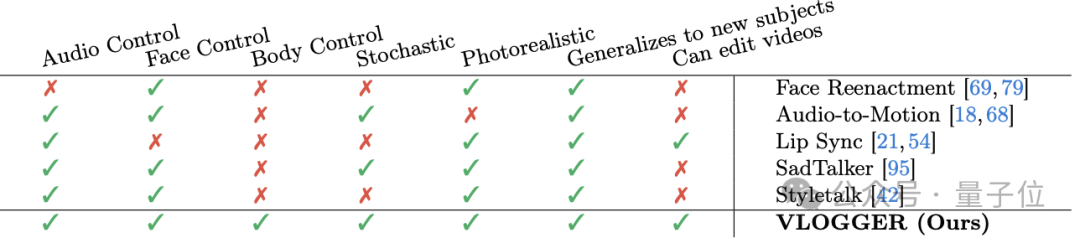

Specifically, as shown in the following table:

The Face Reenactment method cannot use audio and text to control such video generation.

Audio-to-motion can generate audio by encoding audio into 3D facial movements, but the effect it generates is not realistic enough.

Lip sync can handle videos of different themes, but it can only simulate mouth movements.

In comparison, the latter two methods SadTaker and Styletalk perform closest to Google VLOGGER, but they are also defeated by the inability to control the body and further edit the video.

Speaking of video editing, as shown in the figure below, one of the applications of the VLOGGER model is this. It can make the character shut up, close eyes, and only close the left eye with one click. Or keep your eyes open the whole time:

#Another application is video translation:

For example, change the English speech in the original video into Spanish with the same mouth shape.

Finally, according to the "old rule", Google did not release the model. Now all we can see are more effects and papers.

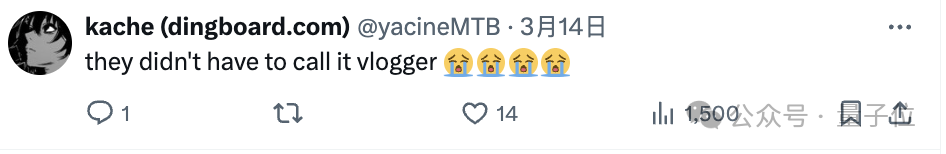

Well, there are a lot of complaints:

The image quality of the model, the mouth shape is not right, it still looks very robotic, etc.

Therefore, some people do not hesitate to leave negative reviews:

Is this the level of Google?

I’m a bit sorry for the name “VLOGGER”.

#——Compared with OpenAI’s Sora, the netizen’s statement is indeed not unreasonable. .

What do you think?

More effects:https://enriccorona.github.io/vlogger/

Full paper:https://enriccorona.github.io/vlogger/paper.pdf

The above is the detailed content of Google releases 'Vlogger” model: a single picture generates a 10-second video. For more information, please follow other related articles on the PHP Chinese website!