Robots are a technology with unlimited potential, especially with the support of intelligent technology. Recently, some large-scale models with revolutionary applications are considered to be possible intelligent brains for robots, which can help robots perceive and understand the world, and make decisions and plans. Recently, a joint team led by Yonatan Bisk of CMU and Fei Xia of Google DeepMind released a review report introducing the application and development of basic models in the field of robotics.

#Human beings have always dreamed of developing a robot that can adapt to different environments autonomously. However, realizing this dream is a long and challenging road.

In the past, robot perception systems usually used traditional deep learning methods, which required a large amount of labeled data to train supervised learning models. However, labeling large datasets through crowdsourcing is very costly.

In addition, classic supervised learning methods have certain limitations in their generalization capabilities. In order to apply these trained models to specific scenarios or tasks, careful design of domain adaptation technology is usually required, which often requires further data collection and annotation. Likewise, traditional robot planning and control methods also require accurate modeling of the dynamics of the environment, the agent itself, and other agents. These models are often built for a specific environment or task, and when conditions change, the model needs to be rebuilt. This shows that the transfer performance of classical models is also limited.

In fact, for many use cases, building effective models is either too expensive or simply impossible. Although deep (reinforcement) learning-based motion planning and control methods help alleviate these problems, they still suffer from distribution shift and reduced generalization ability.

Although there are many challenges in developing general-purpose robotic systems, the fields of natural language processing (NLP) and computer vision (CV) have made rapid progress recently, including for NLP Large language model (LLM), diffusion model for high-fidelity image generation, powerful visual model and visual language model for CV tasks such as zero-shot/few-shot generation.

The so-called "foundation model" is actually a large pre-training model (LPTM). They have powerful visual and verbal abilities. Recently, these models have also been applied in the field of robotics and are expected to give robotic systems open-world perception, task planning and even motion control capabilities. In addition to using existing vision and/or language basic models in the field of robotics, some research teams are developing basic models for robot tasks, such as action models for manipulation or motion planning models for navigation. These basic robot models demonstrate strong generalization capabilities and can adapt to different tasks and even specific solutions.

There are also researchers who directly use vision/language basic models for robot tasks, which shows the possibility of integrating different robot modules into a single unified model.

Although vision and language basic models have promising prospects in the field of robotics, and new robot basic models are also being developed, there are still many challenges in the field of robotics that are difficult to solve.

From the perspective of actual deployment, models are often unreproducible, unable to generalize to different robot forms (multi-embodied generalization) or difficult to accurately understand which behaviors in the environment is feasible (or acceptable). In addition, most research uses Transformer-based architecture, focusing on semantic perception of objects and scenes, task-level planning, and control. Other parts of the robot system are less studied, such as basic models for world dynamics or basic models that can perform symbolic reasoning. These require cross-domain generalization capabilities.

Finally, we also need more large-scale real-world data and high-fidelity simulators that support diverse robotic tasks.

This review paper summarizes the basic models used in the field of robotics, with the goal of understanding how the basic models can help solve or alleviate the core challenges in the field of robotics.

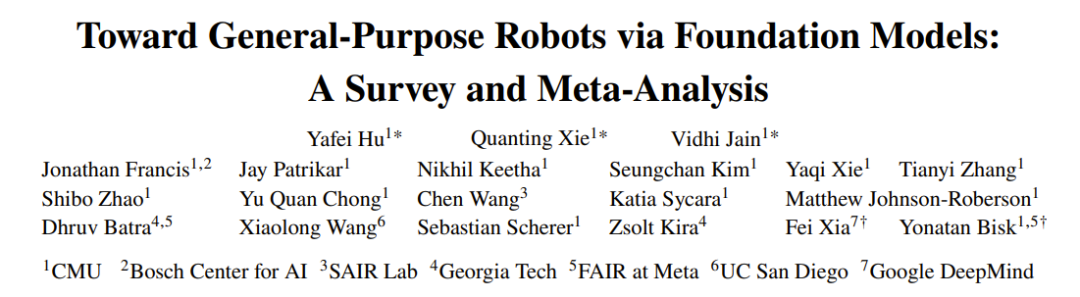

Figure 1 shows the main components of this review report.

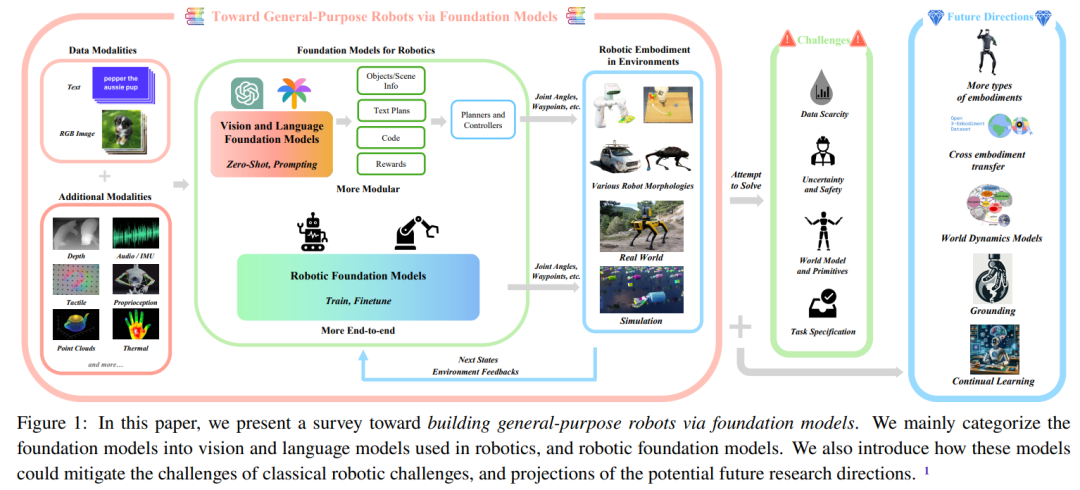

Figure 2 gives the overall structure of this review.

In order to help readers better understand the content of this review, the team first provides A section of preparatory knowledge content.

They will first introduce the basics of robotics and the best current technologies. The main focus here is on methods used in the field of robotics before the era of basic models. Here is a brief explanation, please refer to the original paper for details.

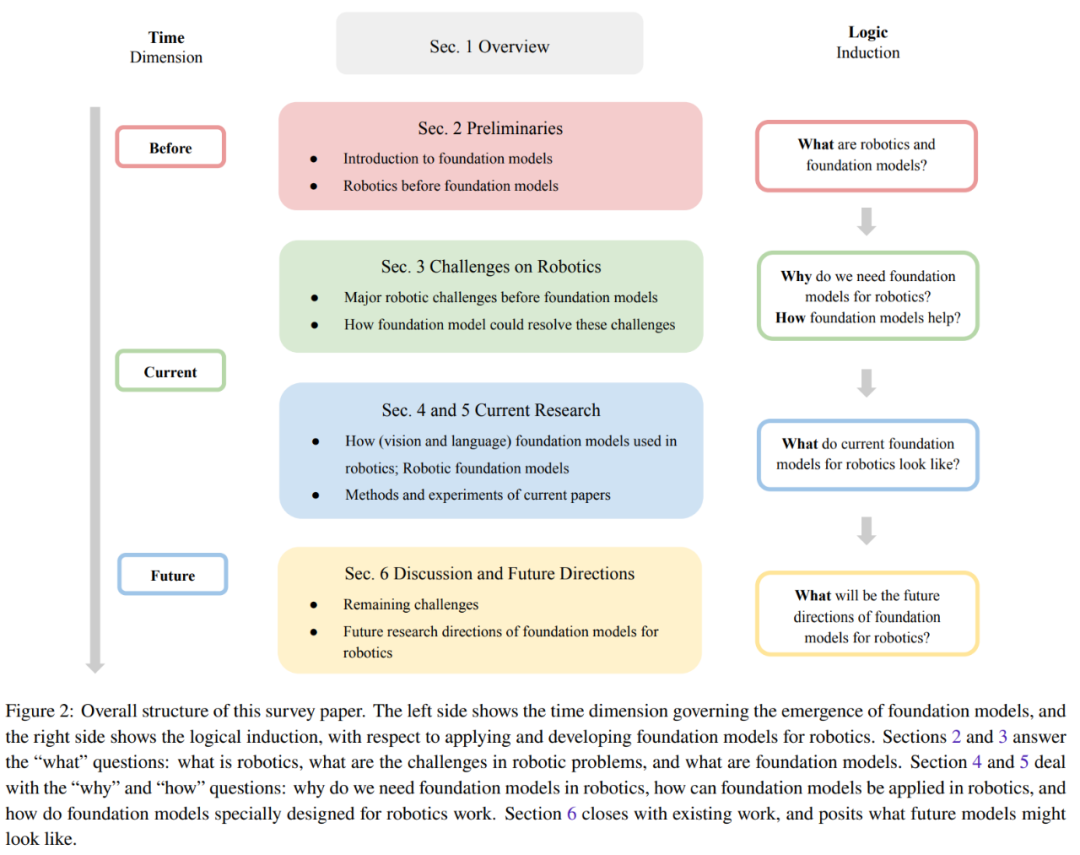

This section summarizes the five core challenges faced by different modules of a typical robotic system. Figure 3 shows the classification of these five challenges.

Robot systems often have difficulty accurately sensing and understanding its environment. They also lack the ability to generalize training results on one task to another, which further limits their usefulness in the real world. In addition, due to different robot hardware, it is also difficult to transfer the model to different forms of robots. The generalization problem can be partially solved by using the base model for robots.

The further question of generalization to different robot forms remains to be answered.

In order to develop reliable robot models, large-scale high-quality data is crucial. Efforts are already underway to collect large-scale data sets from the real world, including automated values, robot operation trajectories, and more. And collecting robot data from human demonstrations is expensive. And due to the diversity of tasks and environments, the process of collecting sufficient and extensive data in the real world will be even more complicated. Additionally, there are security concerns surrounding collecting data in the real world.

To address these challenges, many research efforts have attempted to generate synthetic data in simulated environments. These simulations can provide a highly realistic virtual world, allowing robots to learn and use their skills in nearly real-life scenarios. However, using simulated environments also has limitations, particularly in terms of the variety of objects, which makes the skills learned difficult to directly transfer to real-world situations.

In addition, in the real world, it is very difficult to collect data on a large scale, and it is even more difficult to collect the Internet-scale image/text data used to train the basic model. .

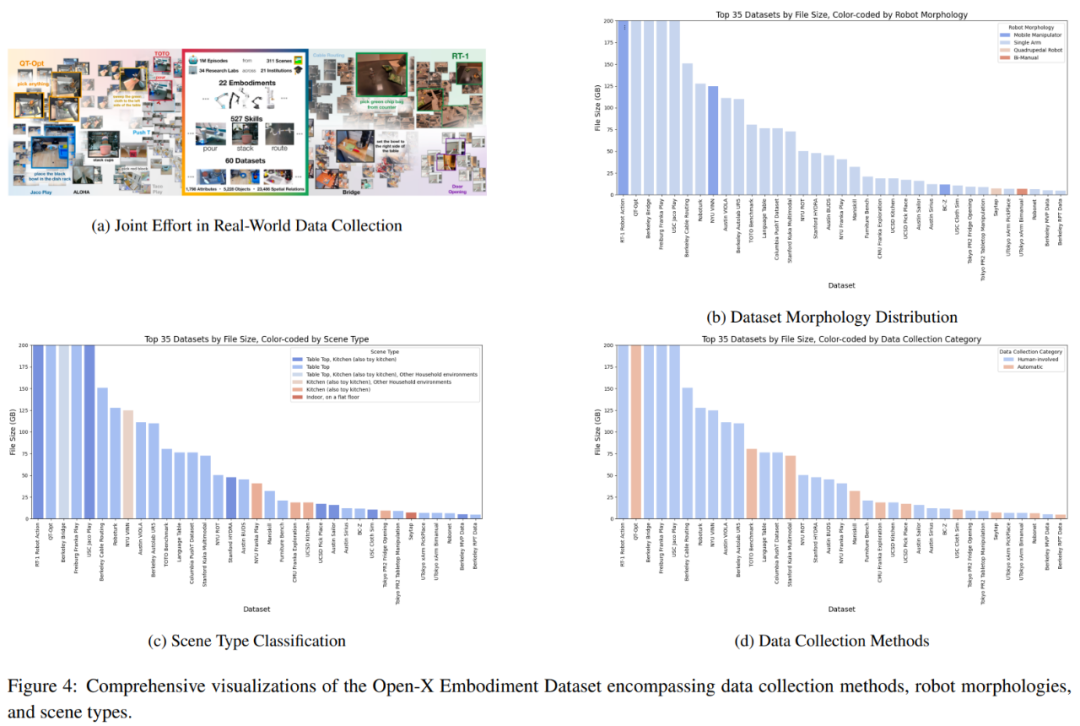

One promising approach is collaborative data collection, which brings together data from different laboratory environments and robot types, as shown in Figure 4a. However, the team took an in-depth look at the Open-X Embodiment Dataset and discovered that there were some limitations in terms of data type availability.

To achieve a general-purpose agent, a key challenge is to understand the task specifications and ground them in the robot's current understanding of the world. Typically, these task specifications are provided by the user, who only has a limited understanding of the limitations of the robot's cognitive and physical capabilities. This raises many questions, including not only what best practices can be provided for these task specifications, but also whether drafting these specifications is natural and simple enough. It is also challenging to understand and resolve ambiguities in task specifications based on the robot's understanding of its capabilities.

In order to deploy robots in the real world, a key challenge is dealing with the environment and task specifications inherent uncertainty. Depending on the source, uncertainty can be divided into epistemic uncertainty (uncertainty caused by lack of knowledge) and accidental uncertainty (noise inherent in the environment).

The cost of uncertainty quantification (UQ) may be so high that research and applications are unsustainable, and it may also prevent downstream tasks from being solved optimally. Given the massively over-parameterized nature of the underlying model, in order to achieve scalability without sacrificing model generalization performance, it is crucial to provide UQ methods that preserve the training scheme while changing the underlying architecture as little as possible. Designing robots that can provide reliable confidence estimates of their own behavior and, in turn, intelligently request clearly stated feedback remains an unsolved challenge.

Despite recent progress, ensuring that robots have the ability to learn from experience to fine-tune their strategies and stay safe in new environments remains challenging.

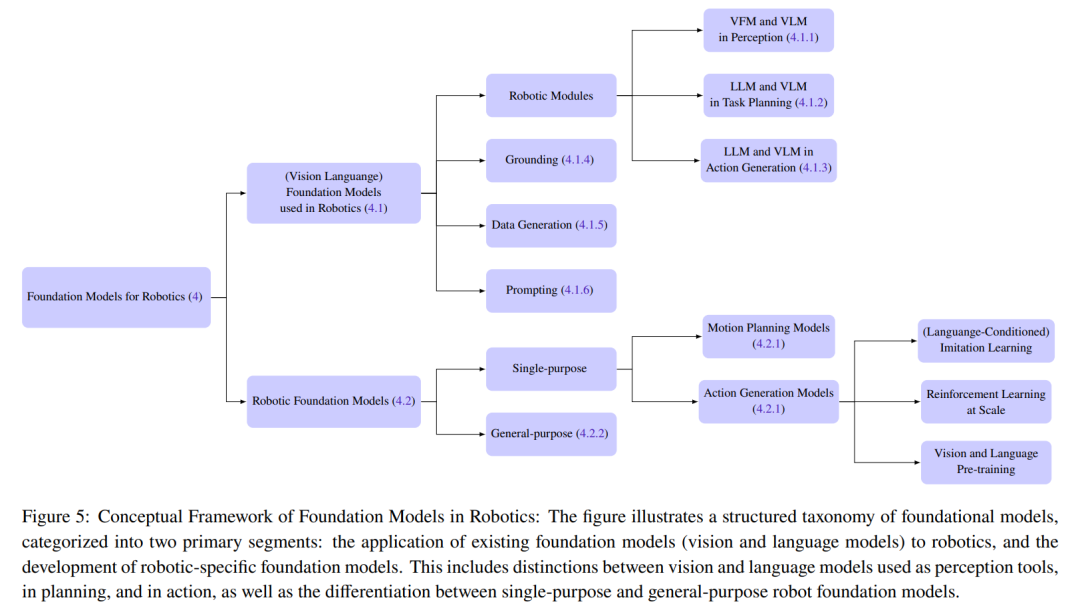

This section summarizes current research methods for base models of robots. The team divided the basic models used in the field of robotics into two major categories: basic models for robots and robot basic models (RFM).

The basic model used for robots mainly refers to using the visual and language basic models for robots in a zero-sample manner, which means that no additional fine-tuning or training is required. The robot base model may be warm-started using vision-language pre-training initialization and/or training the model directly on the robot dataset.

Figure 5 gives the classification details

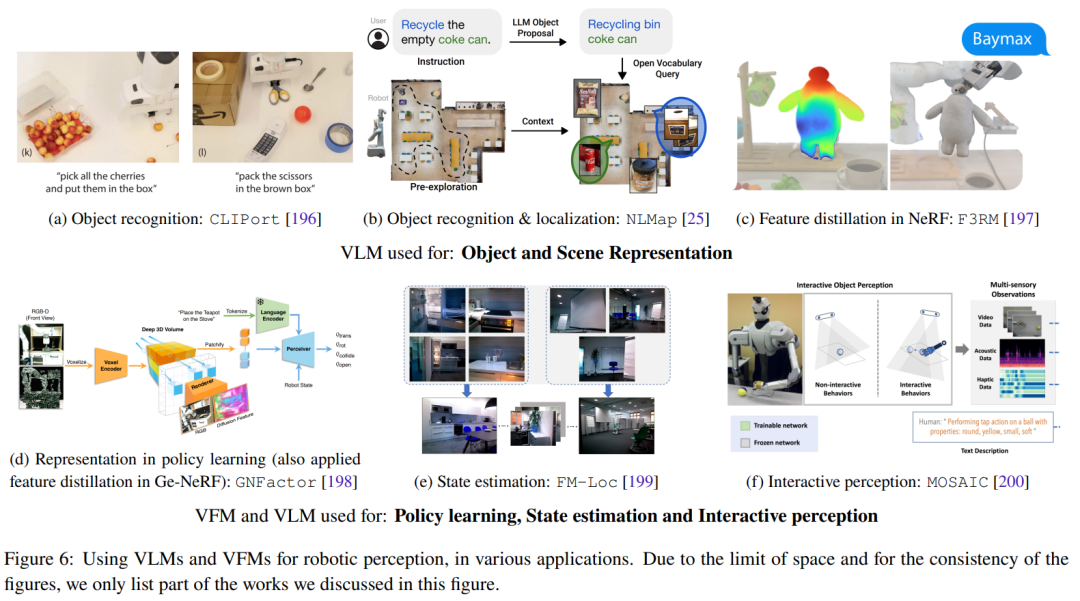

This section focuses on the zero-sample application of basic vision and language models in the field of robotics. This mainly includes deploying VLM in a zero-shot manner into robot perception applications, using the contextual learning capabilities of LLM for task-level and motion-level planning and action generation. Figure 6 shows some representative research works.

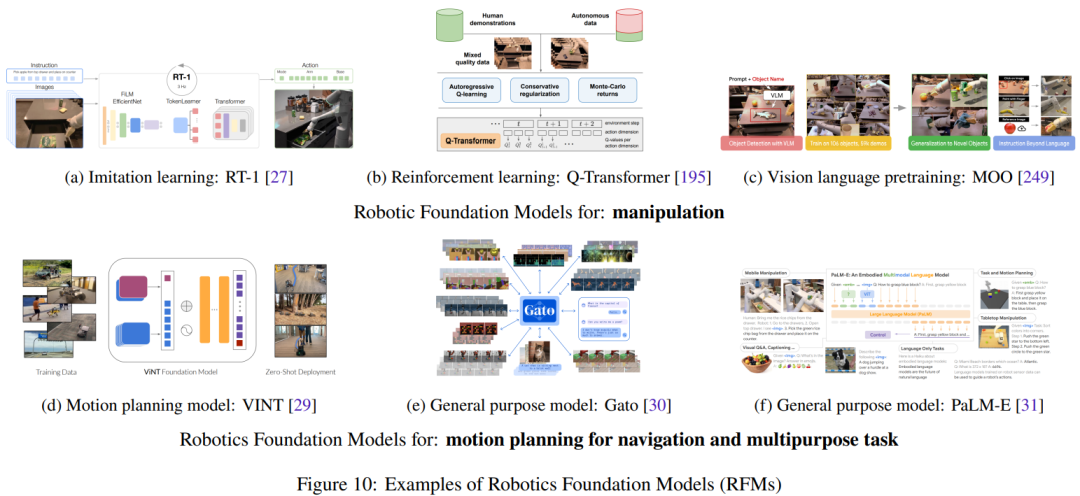

As robotics datasets containing state-action pairs from real robots grow, so does the Robot Fundamental Model (RFM) category Success becomes more and more likely. These models feature the use of robotic data to train the model to solve robotic tasks.

This section will summarize and discuss the different types of RFM. The first is an RFM that can perform a type of task in a single robot module, which is also called a single-objective robot base model. For example, an RFM can generate low-level actions to control the robot or a model that can generate higher-level motion planning.

The RFM that can perform tasks in multiple robot modules will be introduced later, that is, a universal model that can perform perception, control and even non-robotic tasks.

The five major challenges facing the field of robotics are listed above. This section describes how basic models can help address these challenges.

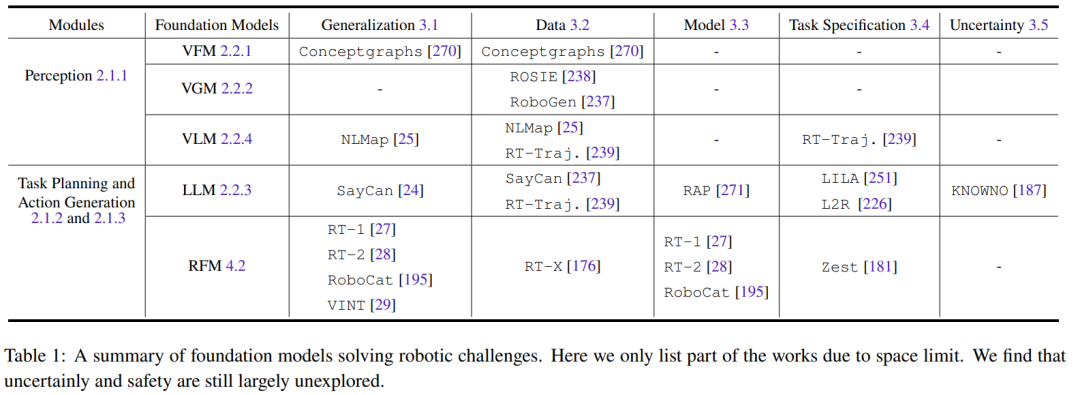

All basic models related to visual information (such as VFM, VLM and VGM) can be used in the robot’s perception module. LLM, on the other hand, is more versatile and can be used for planning and control. The Robot Basic Model (RFM) is typically used in planning and action generation modules. Table 1 summarizes the underlying models for solving different robotics challenges.

As can be seen from the table, all basic models are good at generalizing the tasks of various robot modules. LLM is particularly good at task specification. RFM, on the other hand, is good at dealing with the challenges of dynamic models since most RFMs are model-free approaches. For robot perception, generalization ability and model challenges are coupled with each other, because if the perception model already has good generalization ability, there is no need to acquire more data to perform domain adaptation or additional fine-tuning.

In addition, there is a lack of research on security challenges, which will be an important future research direction.

This section summarizes the current research results on datasets, benchmarks, and experiments.

There are limitations to relying solely on knowledge learned from language and visual datasets. As some research results show, some concepts such as friction and weight cannot be easily learned through these modalities alone.

Therefore, in order to enable robotic agents to better understand the world, the research community is not only adapting basic models from the language and vision domains, but also advancing the development of training and fine-tuning these models. A large and diverse multi-modal robot dataset.

Currently these efforts are divided into two major directions: collecting data from the real world and collecting data from the simulated world and then migrating it to the real world. Each direction has its pros and cons. The datasets collected from the real world include RoboNet, Bridge Dataset V1, Bridge-V2, Language-Table, RT-1, etc. Commonly used simulators include Habitat, AI2THOR, Mujoco, AirSim, Arrival Autonomous Racing Simulator, Issac Gym, etc.

Another major contribution of this team is to the papers mentioned in this review report The experiments were meta-analysed, which helped the authors clarify the following questions:

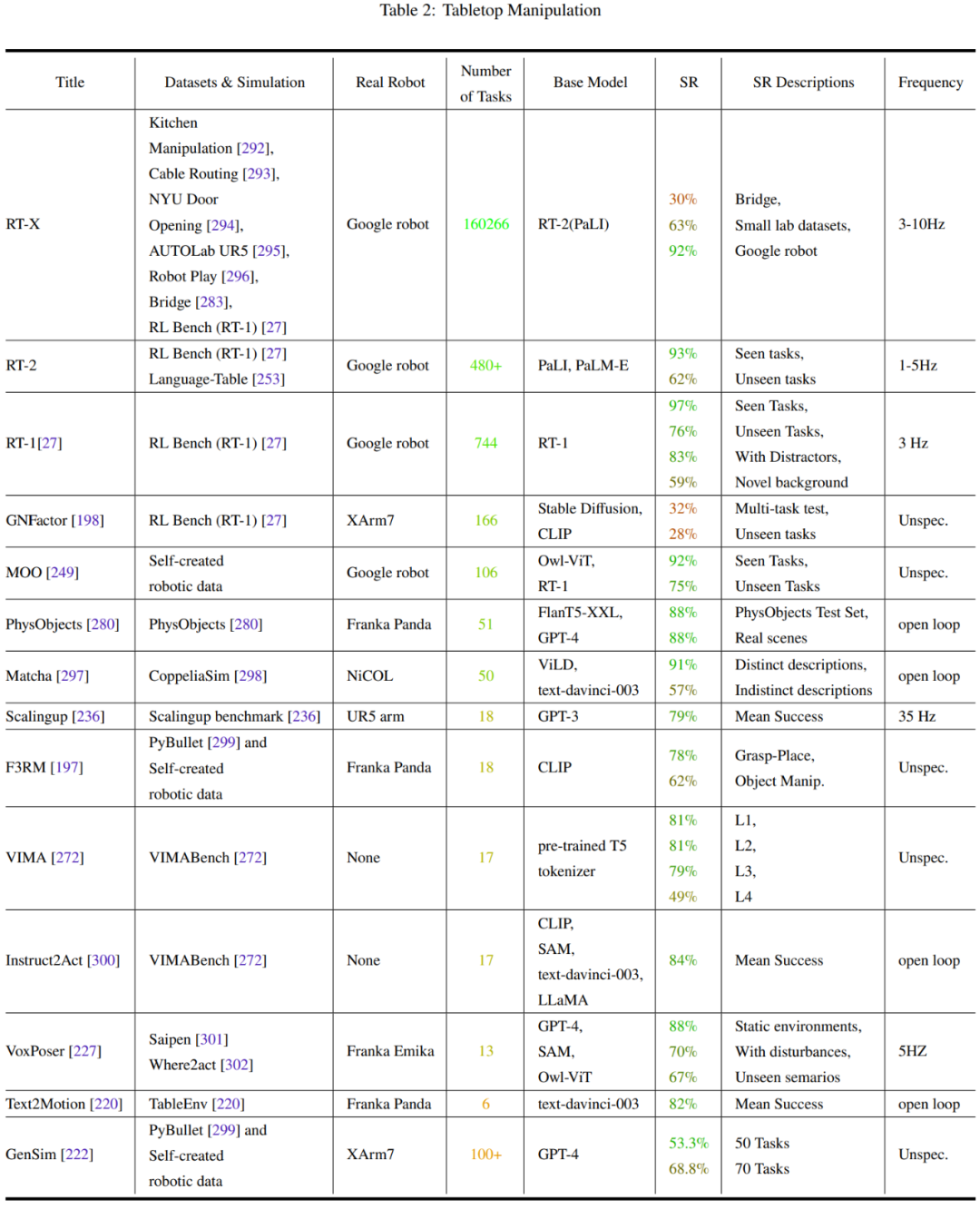

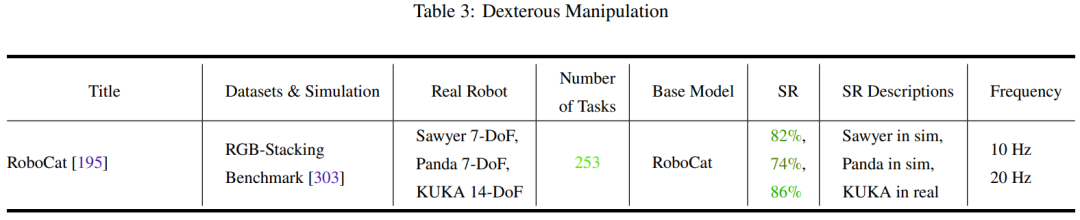

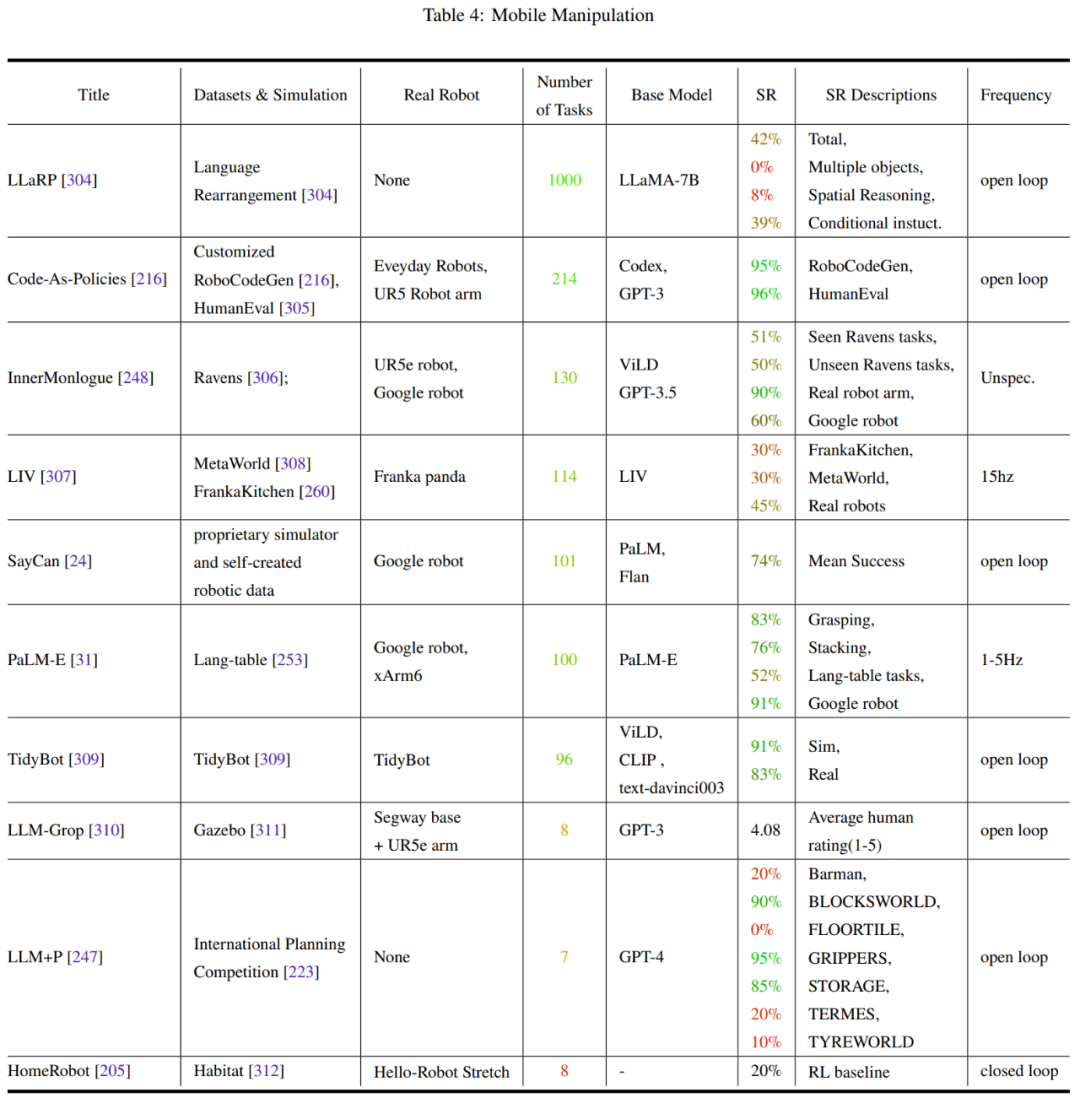

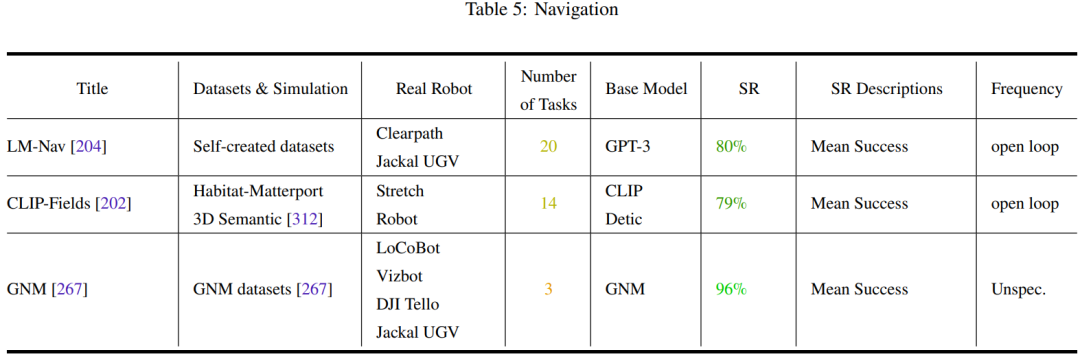

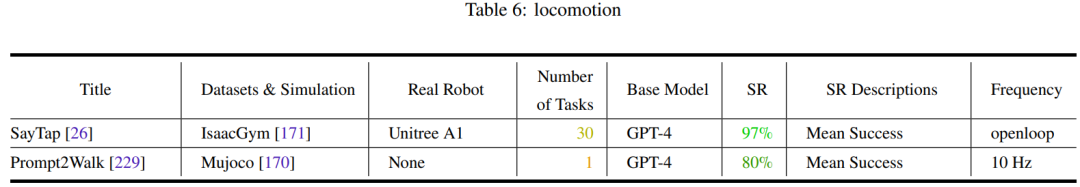

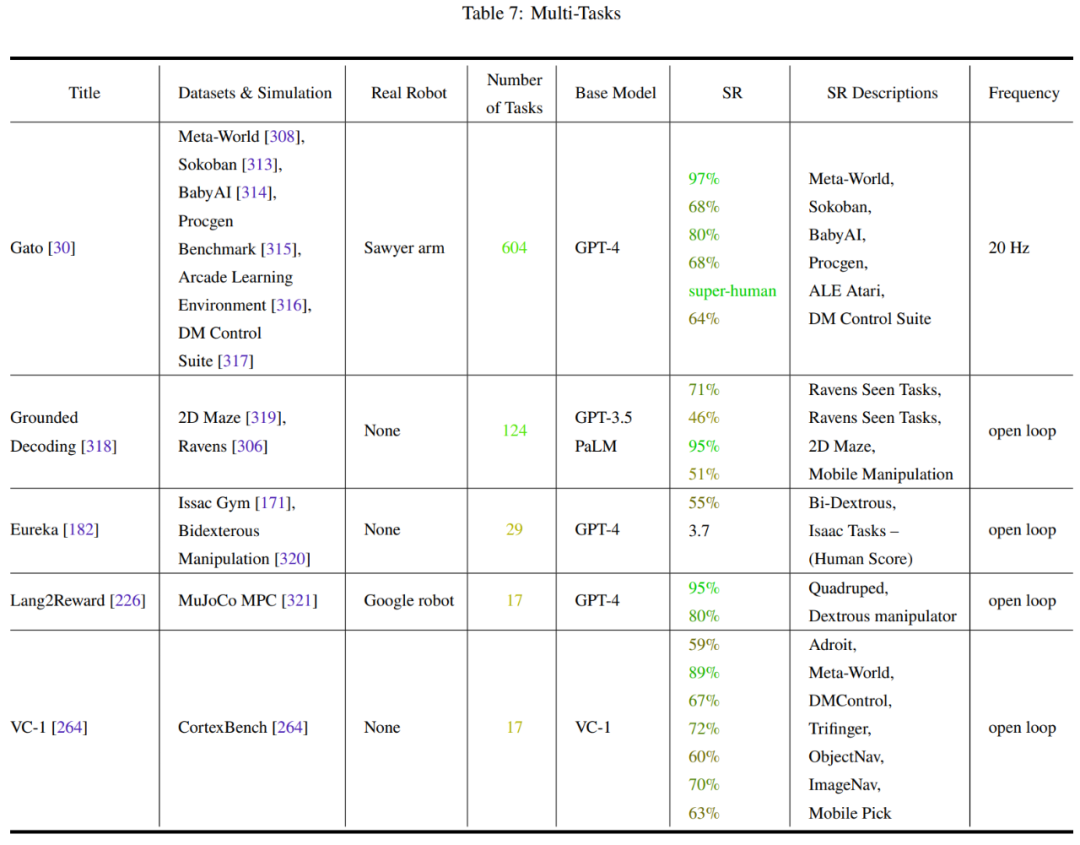

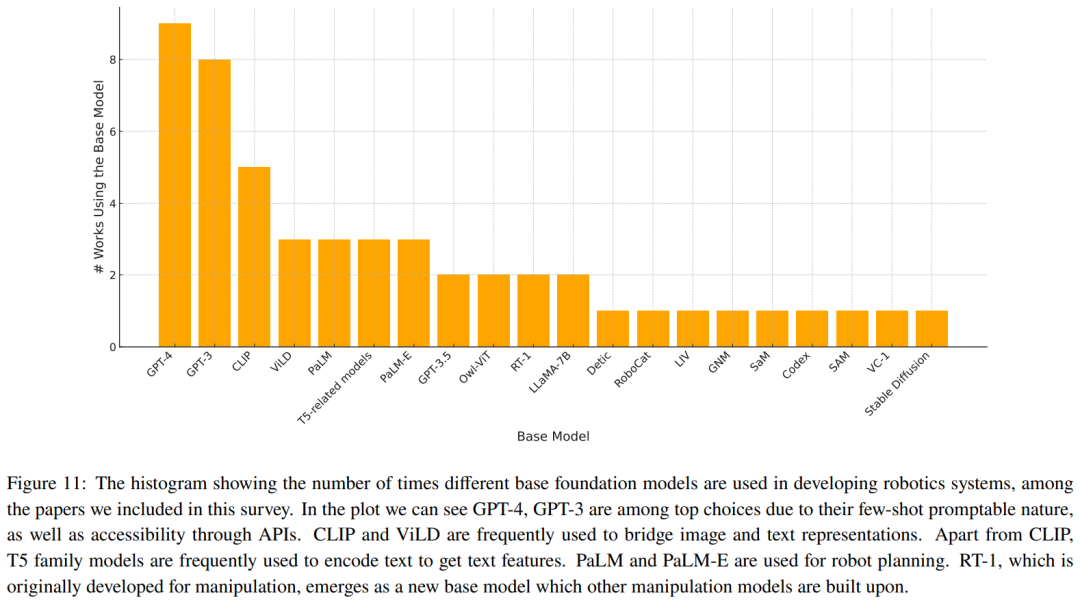

Table 2-7 and Figure 11 give the analysis results.

##The team identified some key trends:

Research Community Unbalanced attention to robot operation tasksDiscussion and future directions

The team summarized some Challenges to be solved and research directions worth discussing:

Setting a standard grounding for robot embodimentThe above is the detailed content of Robotics: How's the progress on the base model?. For more information, please follow other related articles on the PHP Chinese website!