ChatGPT (Chat Generative Pre-trained Transformer) is an AI model in the field of natural language processing (NLP), a branch of artificial intelligence. Natural language means the languages people encounter and use in daily life, such as English, Chinese, and German. Natural language processing means enabling computers to understand and correctly manipulate natural language in order to complete tasks specified by humans. Common NLP tasks include keyword extraction, text classification, and machine translation.

NLP also has a particularly difficult task: dialogue systems, commonly called chatbots. This is exactly the task ChatGPT performs.

ChatGPT and the Turing Test

For a long time, academia regarded the Turing test as an unreachable peak, which is why NLP is also called the crown jewel of artificial intelligence. What ChatGPT can do goes far beyond the scope of a chatbot: it can write articles according to user instructions, answer technical questions, solve math problems, translate between languages, play word games, and more. So, in a way, ChatGPT has taken the crown jewel.

ChatGPT’s modeling form

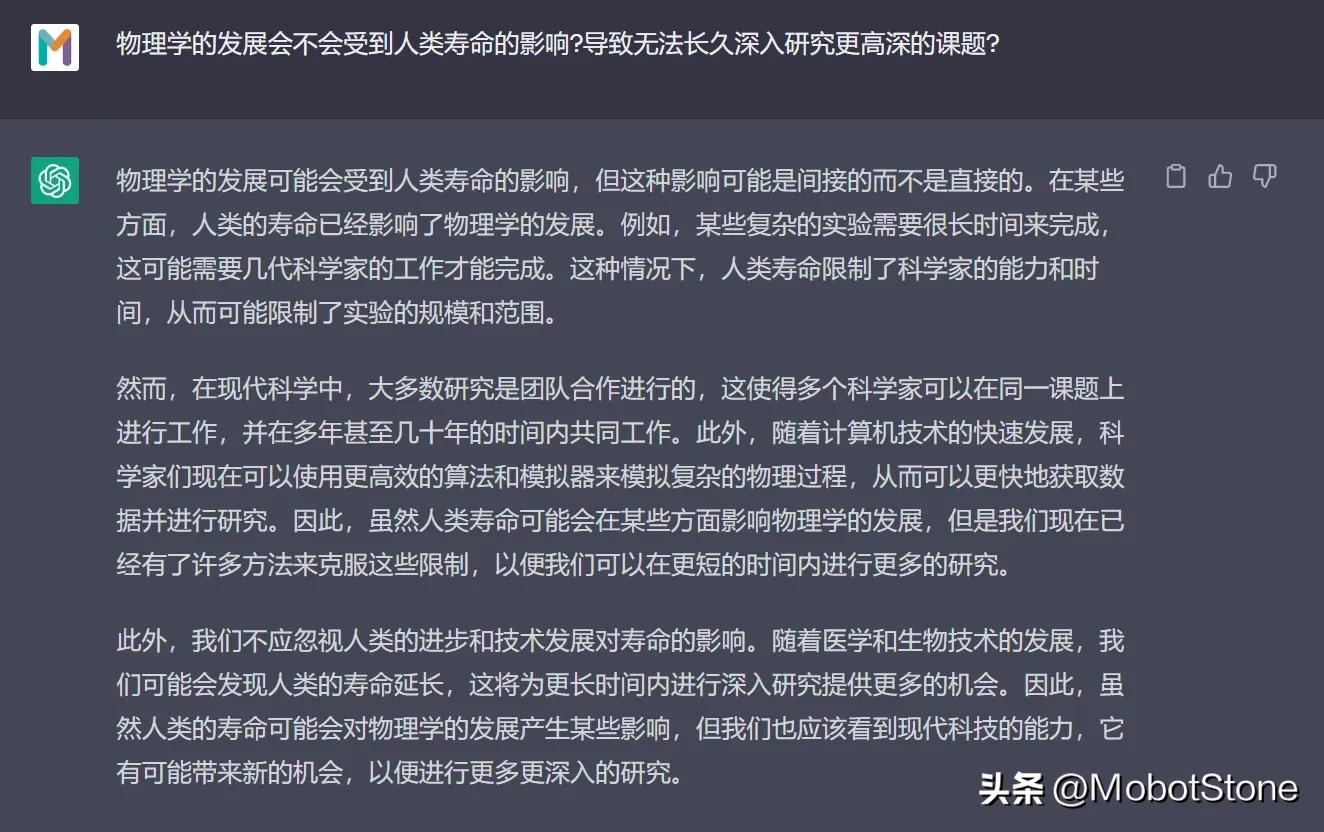

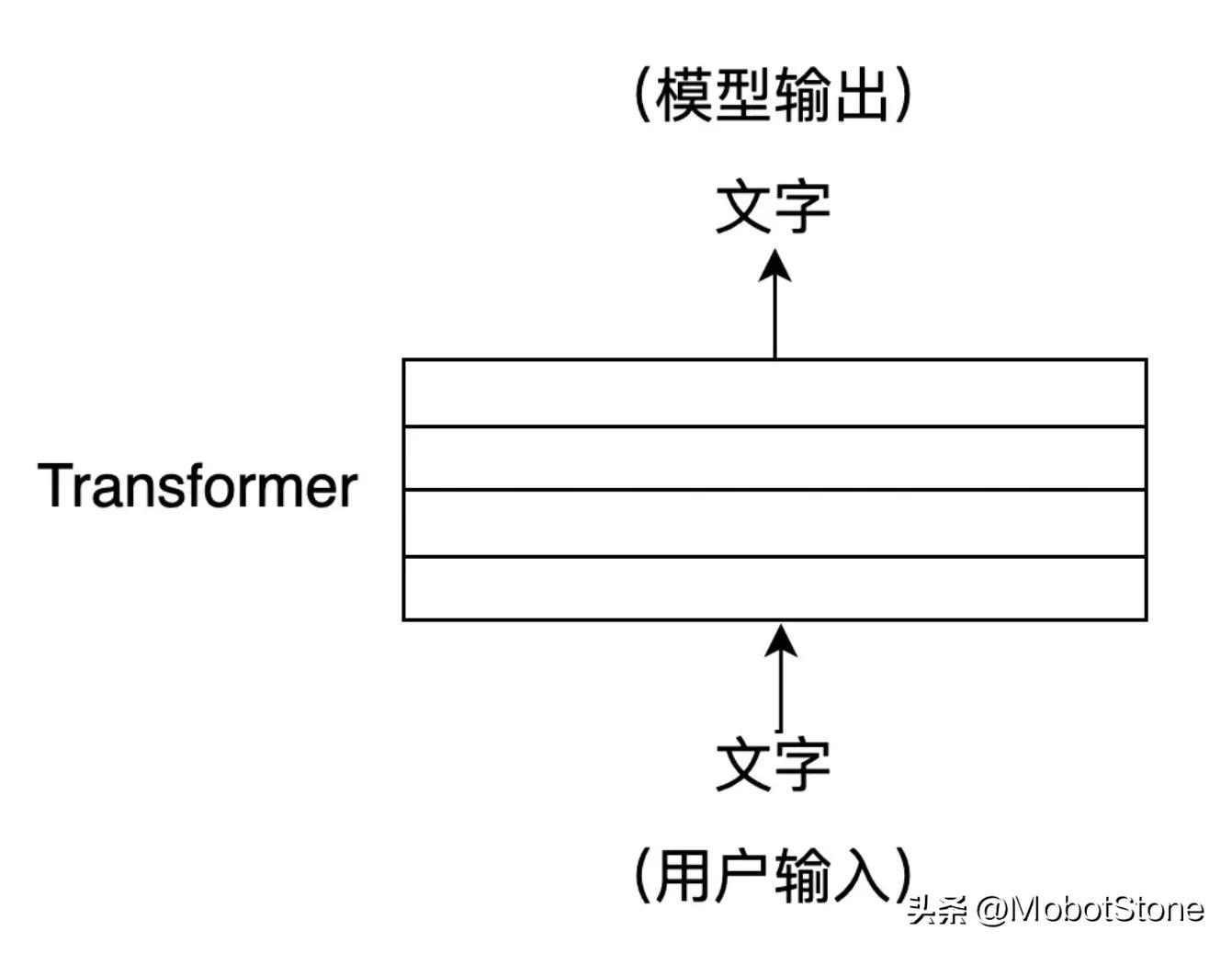

Here, the user's input and the model's output are both in the form of text. One user input together with the corresponding model output is called one round of conversation. We can abstract the ChatGPT model into the following process:

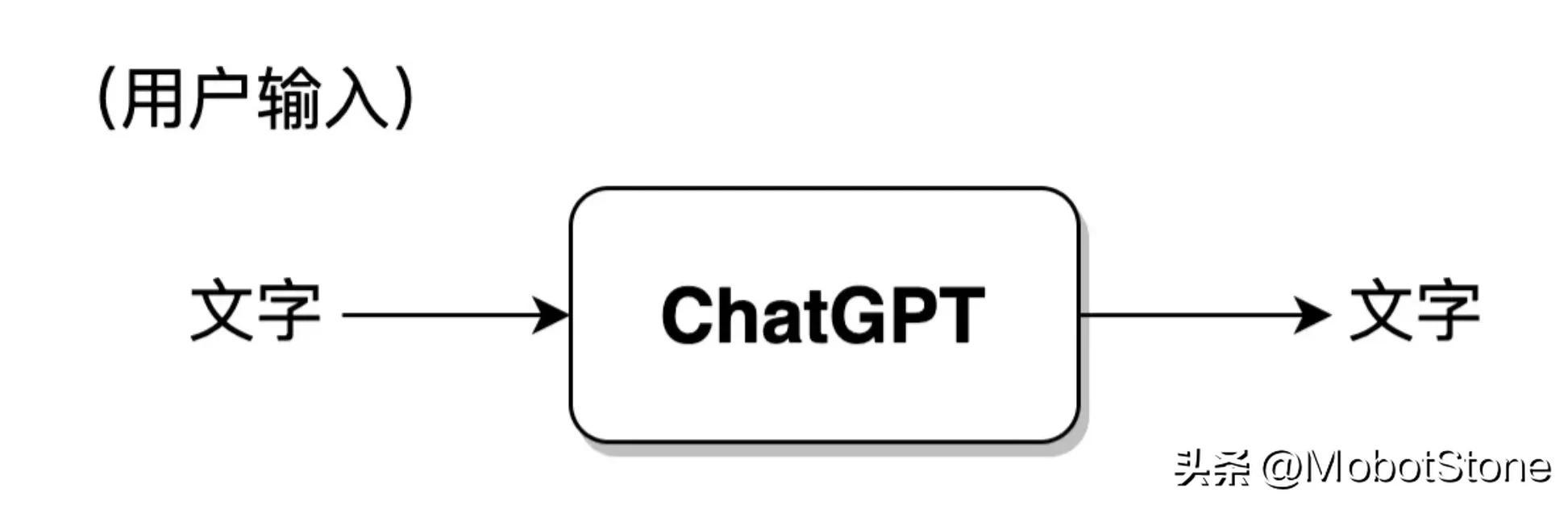

In addition, ChatGPT can answer a series of follow-up questions from the user, that is, hold a multi-round dialogue in which the rounds are related to one another. The mechanism is simple: when the user sends a second input, the system by default splices the first round's input and output onto it, so that ChatGPT can refer to the information from the previous round.

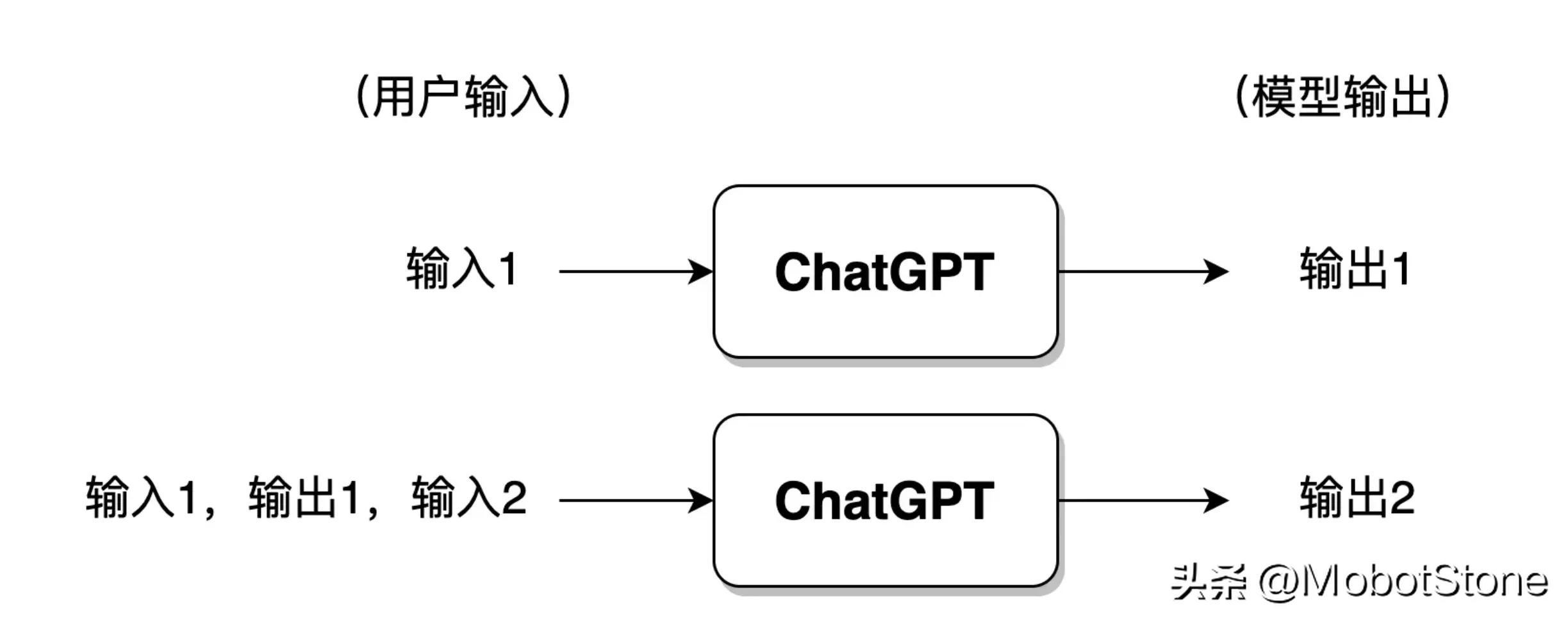
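The splicing step can be sketched in a few lines of Python. This is only an illustration of the idea described above: the function name `build_model_input` and the "User:" / "Model:" labels are assumptions made for this example, not the format ChatGPT actually uses.

```python
def build_model_input(history, new_user_input):
    """Splice earlier rounds in front of the new user input.

    history: list of (user_text, model_text) pairs, oldest first.
    The "User:" / "Model:" labels are a hypothetical format chosen
    for this sketch.
    """
    lines = []
    for user_text, model_text in history:
        lines.append(f"User: {user_text}")
        lines.append(f"Model: {model_text}")
    lines.append(f"User: {new_user_input}")
    lines.append("Model:")  # the model continues the text from here
    return "\n".join(lines)


history = [("Do you like apples or bananas?", "I like apples.")]
prompt = build_model_input(history, "Why?")
print(prompt)
```

With the first round spliced in, the second question "Why?" reaches the model together with the context it refers to.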

If the user has had too many rounds of conversation with ChatGPT, the model generally retains only the most recent rounds, and earlier conversation information is forgotten.

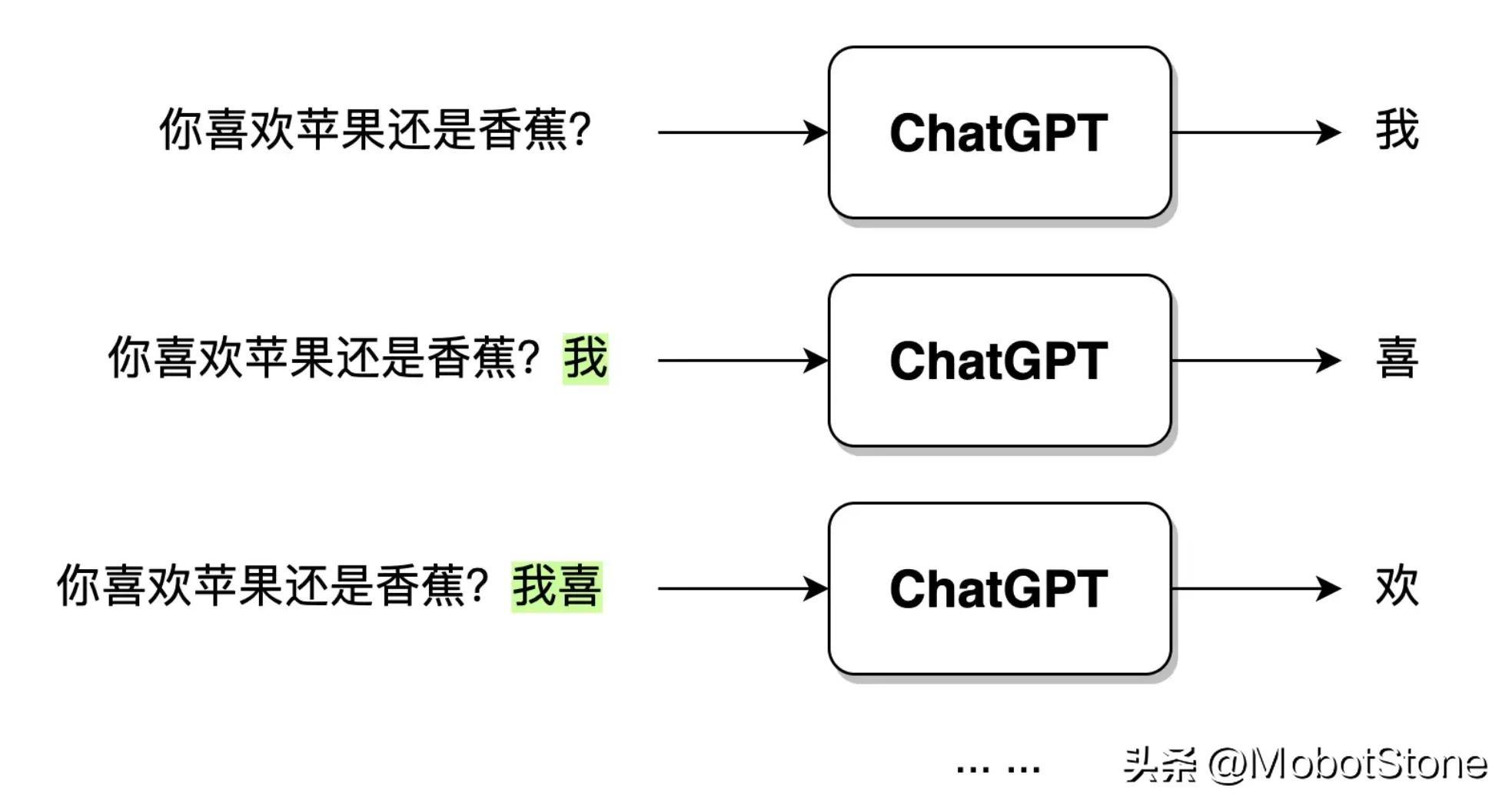
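A minimal sketch of this forgetting behaviour, assuming the limit is counted in rounds. The helper `keep_recent_rounds` and the parameter `max_rounds` are hypothetical names; real systems usually bound the length of the text rather than the number of rounds.

```python
def keep_recent_rounds(history, max_rounds):
    """Return only the most recent `max_rounds` (user, model) pairs."""
    if max_rounds <= 0:
        return []
    return history[-max_rounds:]


history = [("q1", "a1"), ("q2", "a2"), ("q3", "a3")]
recent = keep_recent_rounds(history, 2)
print(recent)  # [('q2', 'a2'), ('q3', 'a3')]
```

Anything dropped here is simply never shown to the model again, which is why it appears to have been forgotten.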

After receiving the user's question, ChatGPT does not produce the output text in one go; it generates it word by word. This word-by-word generation is what "Generative" in the name refers to, as the following example shows.

When the user enters the question "Do you like apples or bananas?", ChatGPT first generates the word "I". The model then combines the user's question with the generated word "I" to generate the next word, "like", and so on until the complete sentence "I like apples" is generated.
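The loop behind this word-by-word generation can be sketched as follows. The lookup table `NEXT_WORD` is a hypothetical stand-in for the neural network, and `"<end>"` is an assumed stop marker; the point is only that each step sees the question plus everything generated so far.

```python
# Hypothetical lookup table standing in for the neural network:
# it maps the words generated so far to the next word.
NEXT_WORD = {
    (): "I",
    ("I",): "like",
    ("I", "like"): "apples",
    ("I", "like", "apples"): "<end>",
}


def predict_next_word(question, generated):
    """Toy model: a real model would use both the question and the
    generated words; this table only looks at the generated words."""
    return NEXT_WORD[tuple(generated)]


def generate(question, max_words=10):
    generated = []
    for _ in range(max_words):
        word = predict_next_word(question, generated)
        if word == "<end>":
            break
        generated.append(word)  # the new word becomes part of the next input
    return " ".join(generated)


answer = generate("Do you like apples or bananas?")
print(answer)  # I like apples
```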

The modeling form of ChatGPT was introduced above. Now imagine you were asked to implement a ChatGPT model: what ideas and methods would you use? There are roughly two strategies: rule-based NLP and statistics-based NLP. Since ChatGPT, the field has entered the era of reinforcement learning, that is, NLP based on reinforcement learning.

Rule-based NLP refers to the use of manually written rules to process natural language. These rules are usually based on knowledge of grammar, semantics, and pragmatics and can be used to parse and generate natural language. For example, we can design a dialogue system based on the following rules:

Rule 1: When the model receives a user's question, remove the question word from the question and replace "?" with ".".

Rule 2: Replace "you" with "I" and "I" with "you".

With these rules we can build a dialogue model and start a conversation:

User: Hello.

Model: Hello.

User: Are you ChatGPT?

Model: Yes, I'm ChatGPT.

User: Do you like the Nuggets community?

Model: I love the Nuggets community.

User: Have you ever used the jionlp toolkit?

Model: I have used jionlp toolkit.
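A rough Python sketch of such a rule-based responder is given here. It is an English approximation, not the author's implementation: treating a leading "Do" as the question word is an assumption made for this example, and `reply` is a hypothetical function name.

```python
QUESTION_WORDS = {"do"}          # assumed English stand-in for the question word
SWAP = {"you": "I", "i": "you"}  # Rule 2: swap "you" and "I"


def reply(user_text):
    words = user_text.split()
    # Rule 1, first half: drop a leading question word.
    if words and words[0].lower() in QUESTION_WORDS:
        words = words[1:]
    # Rule 2: swap the pronouns word by word, keeping trailing punctuation.
    swapped = []
    for word in words:
        core = word.rstrip("?.!,")
        tail = word[len(core):]
        swapped.append(SWAP.get(core.lower(), core) + tail)
    # Rule 1, second half: turn the question mark into a full stop.
    return " ".join(swapped).replace("?", ".")


print(reply("Hello."))                              # Hello.
print(reply("Do you like the Nuggets community?"))  # I like the Nuggets community.
print(reply("Are you ChatGPT?"))                    # Are I ChatGPT.
```

The last call shows how brittle the rules are: a question phrased slightly differently already produces a wrong answer.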

The above is a very simple example of a rule-based dialogue system, and readers can easily spot its problems. What if the user's question is too complex? What if the question contains no question mark? We would have to keep writing new rules to cover such special cases, which is the obvious shortcoming of the rule-based approach.

This is how NLP was done in its early stages: building model systems from rules. At that time, this approach was generally called symbolism.

Statistics-based NLP uses machine learning algorithms to learn the regularities of natural language from large corpora; early on it was also called connectionism. This method does not require rules to be written by hand: the rules are implicit in the model, learned from the statistical characteristics of the language. In other words, in the rule-based method the rules are explicit and hand-written, while in the statistics-based method the rules are invisible, implicit in the model parameters, and obtained by training the model on data.

These models have developed rapidly in recent years, and ChatGPT is one of them. There are many other models with different forms and structures, but their basic principle is the same. They all work in two steps:

Train the model => use the trained model to do work
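The two steps can be illustrated with a very small statistical model that counts which word follows which. This is a toy sketch, far simpler than a neural network: the tiny corpus and the function names `train` and `predict_next` are assumptions for the example. Note that no rule is written by hand; the "rules" live in the learned counts.

```python
from collections import Counter, defaultdict


def train(corpus):
    """Step 1: count, for every word, which words follow it in the corpus."""
    counts = defaultdict(Counter)
    for sentence in corpus:
        words = sentence.split()
        for current, following in zip(words, words[1:]):
            counts[current][following] += 1
    return counts


def predict_next(model, word):
    """Step 2: use the trained model; return the most frequent follower."""
    if word not in model:
        return None
    return model[word].most_common(1)[0][0]


corpus = [
    "I like apples",
    "I like bananas",
    "I like apples very much",
    "you eat apples",
]
model = train(corpus)
print(predict_next(model, "like"))  # apples
print(predict_next(model, "I"))     # like
```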

ChatGPT mainly uses pre-training to carry out statistics-based model learning. In NLP, pre-training was introduced early on by the ELMo model (Embeddings from Language Models), and the approach has since been widely adopted by deep neural network models, including ChatGPT.

Its focus is to learn a language model from a large-scale raw corpus. This model does not directly learn how to solve a specific task; instead, it absorbs information ranging from grammar, morphology, and pragmatics to common sense and world knowledge into the language model. Intuitively, it is more like memorizing knowledge than applying knowledge to solve practical problems.

Pre-training has many benefits, and it has become a necessary step for almost all NLP model training. We will expand on this in subsequent chapters.

Statistics-based methods are far more popular than rule-based ones. Their biggest disadvantage, however, is black-box uncertainty: the rules are invisible, hidden in the parameters. For example, ChatGPT sometimes gives ambiguous or incomprehensible results, and we cannot tell from the output why the model gave that answer.

The ChatGPT model is statistics-based, but it also uses a new method, reinforcement learning from human feedback (RLHF), which has achieved excellent results and brought the development of NLP into a new stage.

A few years ago, AlphaGo defeated Ke Jie. This all but proves that, under suitable conditions, reinforcement learning can thoroughly beat humans and approach the limit of perfection. We are still in the era of weak artificial intelligence, but within the limited field of Go, AlphaGo is a strong artificial intelligence, and its core is reinforcement learning.

Reinforcement learning is a machine learning method that aims to let an agent (in NLP, mainly a deep neural network model, here the ChatGPT model) learn to make optimal decisions through interaction with an environment.

This method is like training a dog (the agent) to respond to a whistle (the environment) and eat (the learning goal).

When the puppy hears its owner blow the whistle, it is rewarded with food; when the owner does not blow the whistle, the puppy goes hungry. By repeatedly eating and going hungry, the puppy builds up the corresponding conditioned reflex, which amounts to completing one round of reinforcement learning.

In NLP, the environment is much more complex. The environment for an NLP model is not the real human language environment, but a manually constructed model of the language environment. This is why the emphasis is on reinforcement learning with human feedback.
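The reward-driven loop can be sketched with a toy agent. This is a deliberately simplified illustration, not how ChatGPT is trained: the two actions, the `environment` function, and the parameters `epsilon` and `learning_rate` are all assumptions made for this example.

```python
import random

ACTIONS = ["come", "stay"]


def environment(action):
    """Hypothetical environment: after the whistle, the puppy is fed
    only if it comes to its owner."""
    return 1.0 if action == "come" else 0.0


def train_agent(steps=200, epsilon=0.1, learning_rate=0.1, seed=0):
    rng = random.Random(seed)
    values = {action: 0.0 for action in ACTIONS}  # estimated reward per action
    for step in range(steps):
        if step < len(ACTIONS):
            action = ACTIONS[step]                 # try every action once
        elif rng.random() < epsilon:
            action = rng.choice(ACTIONS)           # explore occasionally
        else:
            action = max(ACTIONS, key=values.get)  # otherwise pick the best so far
        reward = environment(action)
        # Move the estimate for this action toward the observed reward.
        values[action] += learning_rate * (reward - values[action])
    return values


values = train_agent()
best_action = max(ACTIONS, key=values.get)
print(best_action)  # come
```

Nobody tells the agent which action is correct; it only receives rewards and gradually prefers the action that earns them.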

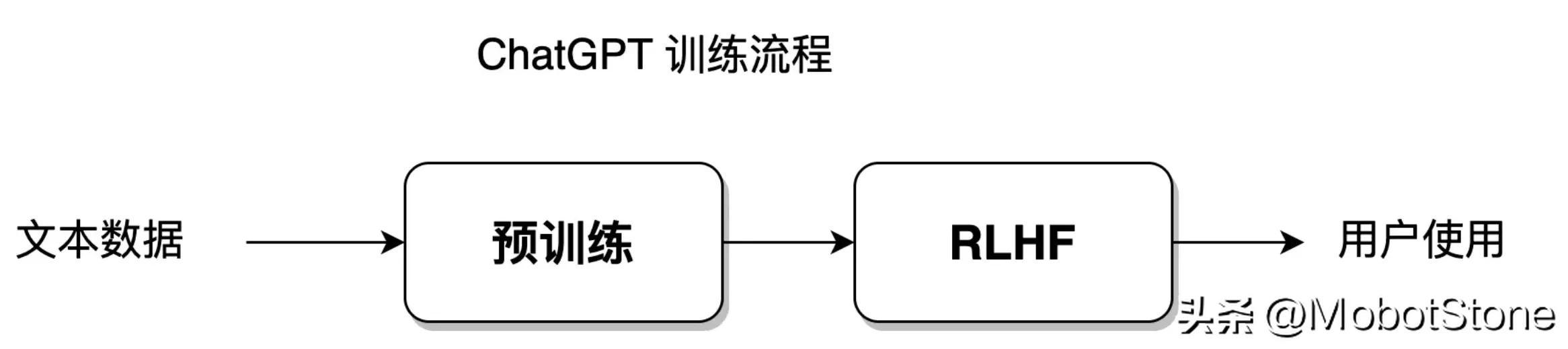

Statistics-based methods let the model fit the training data set with the greatest degree of freedom, while reinforcement learning gives the model even more freedom, letting it learn on its own and break through the limits of a fixed data set. The ChatGPT model fuses statistical learning with reinforcement learning; its training process is shown in the figure.

This training process is covered in Sections 8-11.

In fact, rule-based, statistics-based, and reinforcement-learning-based methods are not just means of processing natural language; they are ways of thinking. An algorithmic model that solves a given problem is often a fusion of all three.

If the computer is compared to a child, then natural language processing is like a person raising that child.

The rule-based approach is like parents controlling a child 100%, requiring the child to follow their instructions and rules exactly, for example by stipulating the hours of study each day and teaching the child every single question. Throughout the process, the emphasis is on hands-on instruction, with the initiative and focus resting on the parents. For NLP, the initiative and focus of the entire process lie with the programmers and researchers who write the language rules.

The statistics-based method is like parents only telling their children how to learn, but not teaching each specific question. The emphasis is on semi-guidance. For NLP, the focus of learning is on neural network models, but the initiative is still controlled by algorithm engineers.

The reinforcement-learning-based method is like parents who only set educational goals for their children, for example requiring a score of 90 on the exam, without caring how the children study; the children rely entirely on self-study. Children have a very high degree of freedom and initiative. Parents only reward or punish the final results and do not take part in the education process itself. For NLP, the focus and initiative of the entire process lie with the model itself.

The development of NLP moved steadily toward statistics-based methods, and in the end the reinforcement-learning-based method won a complete victory, marked by the arrival of ChatGPT, while rule-based methods gradually declined into an auxiliary role. From the very beginning, the development of the ChatGPT model has advanced unswervingly in the direction of letting the model learn by itself.

In the previous introduction, in order to facilitate readers’ understanding, the specific internal structure of the ChatGPT model was not mentioned.

ChatGPT is a large neural network. Internally it is a stack of Transformer layers; the Transformer is a neural network architecture. Since 2018 it has become the standard model structure in NLP, and it can be found in almost all NLP models.

If ChatGPT is a house, then Transformer is the brick that builds ChatGPT.

The core of the Transformer is the self-attention mechanism, which lets the model, while processing an input text sequence, automatically attend to the characters at other positions that are related to the character at the current position. Self-attention represents each position in the input sequence as a vector, and these vectors can all take part in the computation at the same time, which makes efficient parallel computing possible. For example:

In machine translation, when translating the English sentence "I am a good student" into Chinese, a traditional machine translation model may produce a word-for-word rendering (back-translated here as "I am a good student"), but that translation may not be accurate enough: how the English article "a" should be rendered in Chinese has to be decided from the context.

A Transformer model can produce a more accurate translation of the same sentence.

This is because the Transformer better captures relationships between words that are far apart in a sentence and so handles long-range dependencies in the text. The self-attention mechanism is introduced in Sections 5-6, and the detailed structure of the Transformer in Sections 6-7.
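As a rough preview of self-attention, here is a minimal pure-Python sketch of scaled dot-product attention. It is a simplification under stated assumptions: queries, keys, and values are all taken to be the input vectors themselves (a real Transformer first applies learned projection matrices), and the three 2-dimensional vectors are made-up example data.

```python
import math


def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    highest = max(scores)
    exps = [math.exp(s - highest) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def self_attention(vectors):
    """Each position attends to every position, including itself.

    Simplification: queries = keys = values = the input vectors.
    """
    dim = len(vectors[0])
    outputs, all_weights = [], []
    for query in vectors:
        # Similarity of the current position to every position.
        scores = [dot(query, key) / math.sqrt(dim) for key in vectors]
        weights = softmax(scores)
        # The output is a weighted mix of all position vectors.
        mixed = [sum(w * v[i] for w, v in zip(weights, vectors)) for i in range(dim)]
        outputs.append(mixed)
        all_weights.append(weights)
    return outputs, all_weights


# Three hypothetical 2-dimensional word vectors.
vectors = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
outputs, weights = self_attention(vectors)
print(weights[0])  # attention of position 0 over positions 0, 1, 2
```

Each position's output is computed independently of the others, which is why all positions can be processed in parallel.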
