In the film *Lucy*, as the heroine's brain capacity gradually develops, she acquires the following abilities:

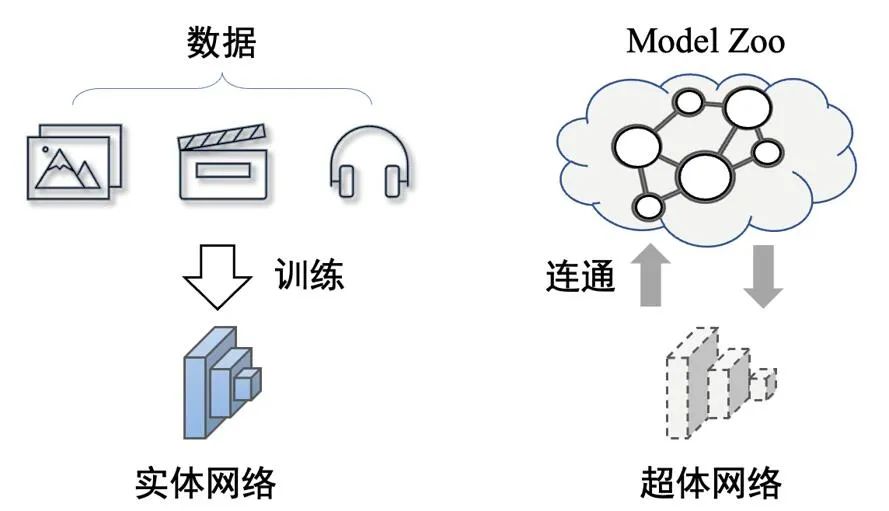

At the end of the film, the heroine gradually fades into a form of pure energy, eventually disappearing into the universe and becoming one with the universe and time. The human super-body is realized by connecting to the outside world to draw on unlimited capability. Carrying this idea over to neural networks: if a network can establish connections with all other networks, it too can realize a network super-body and, in theory, gain unbounded prediction capability.

In other words, a physical network inevitably limits how far network performance can grow. When the target network is connected to the Model Zoo, it no longer exists as a single entity; instead, it takes the super-body form of connections established between networks.

Figure: the difference between a super-body network and an entity network. The super-body network has no entity; it is a form of connectivity between networks.

This article shares the CVPR 2023 paper "Partial Network Cloning", which explores the idea of a network super-body. In this paper, the LV lab at the National University of Singapore proposes a new network cloning technique.

Paper link: https://arxiv.org/abs/2303.10597

## 01 Problem Definition

In the paper, the authors point out that using this network cloning technique to dematerialize a network brings the following advantages:

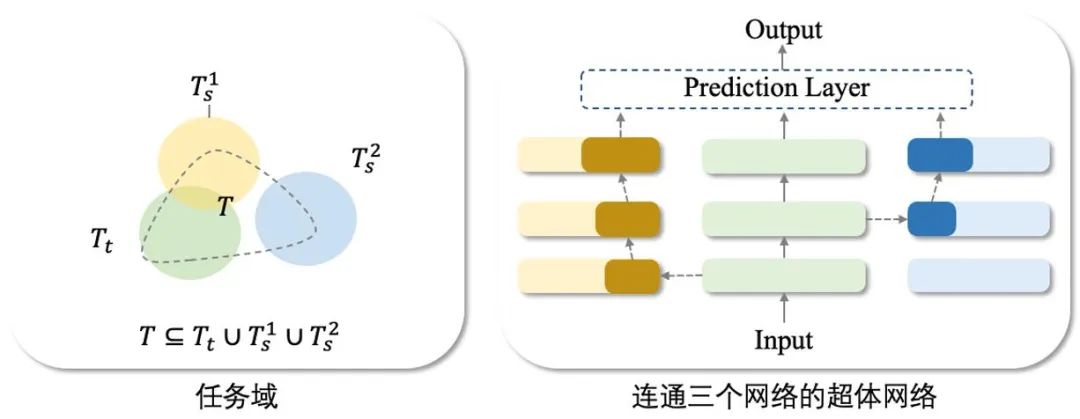

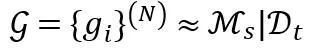

The super-body network is built on the rapidly expanding Model Zoo, which offers a large number of pre-trained models. Therefore, for any task T, we can always find one or more existing models whose tasks can be composed into the required task T (the formal statement is given in the paper).
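The composition idea above can be made concrete with a small sketch. Purely for illustration, it assumes each zoo model is described by the set of class labels it can predict (the function `select_models` and the example zoo names are hypothetical) and picks models greedily, a standard set-cover heuristic rather than anything taken from the paper:

```python
from typing import Dict, List, Set


def select_models(required: Set[str], zoo: Dict[str, Set[str]]) -> List[str]:
    """Greedily pick zoo models whose task sets together cover `required`.

    Greedy set cover: repeatedly take the model covering the most still-uncovered
    tasks (ties broken alphabetically). Raises ValueError if coverage is impossible.
    """
    uncovered = set(required)
    chosen: List[str] = []
    while uncovered:
        if not zoo:
            raise ValueError("empty zoo")
        # max() returns the first maximal element, so ties go to the earliest name.
        best = max(sorted(zoo), key=lambda name: len(zoo[name] & uncovered))
        gain = zoo[best] & uncovered
        if not gain:
            raise ValueError(f"zoo cannot cover: {sorted(uncovered)}")
        chosen.append(best)
        uncovered -= gain
    return chosen
```

With a zoo containing a digits-0-4 model, a digits-5-9 model and an animals model, requesting {"3", "7", "cat"} selects all three. Greedy selection is not guaranteed to find the smallest covering set.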

In summary, the network cloning technique proposed in the paper for building a network super-body is expressed as a formula that combines one ontology network M_t (the paper's target network) with a set of correction networks M_s (the paper's source networks); see the paper for the exact formulation.

Here M_s denotes the set of correction networks, so the network super-body takes the form of one ontology network plus one or more correction networks. Network cloning clones the needed part of each correction network and embeds it into the ontology network.

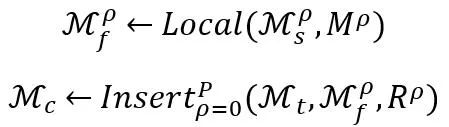

Specifically, the network cloning framework proposed in this article includes the following two technical points:

For a clone involving P correction networks, the first component is key-part localization, Local(∙). Because a correction network may contain task information unrelated to the task set T, Local(∙) locates the parts of the correction network that are relevant to T⋂T_s; the localization parameter is denoted M^ρ. Implementation details are given in Section 2.1. The second component is network module embedding, Insert(∙), which selects a suitable embedding position R^ρ for each correction network. Implementation details are given in Section 2.2.

To simplify the description of the method, we set the number of correction networks to P=1 (and therefore drop the superscript ρ); that is, we connect one ontology network and one correction network to build the required super-body network.

As mentioned above, network cloning consists of key-part localization and network module embedding. To aid understanding, we introduce an intermediate transferable module M_f: network cloning locates the key parts of the correction network to form M_f, and then embeds M_f into the ontology network M_t through soft connections. The goal of network cloning is therefore to locate and embed a transferable module that offers both transferability and local fidelity.

## 2.1 Locating key parts of the network

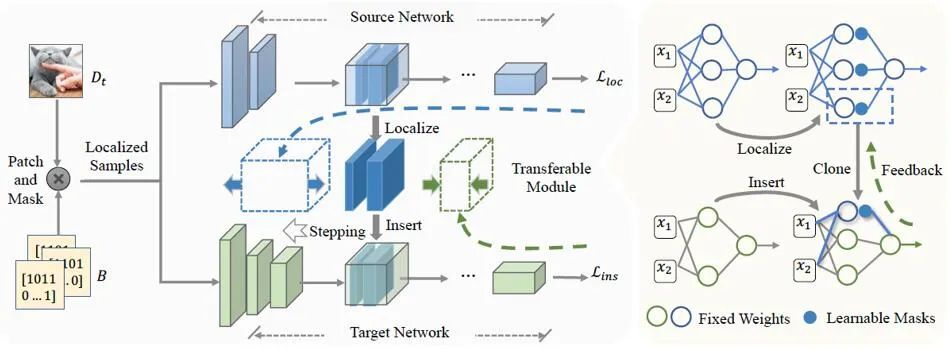

The goal of locating the key parts of the network is to learn the selection function M, defined here as a mask applied to the filters of each layer of the network. The transferable module is then the correction network with this mask applied layer by layer (see the paper for the formula).

Here the correction network M_s is represented as L layers, each consisting of its set of filters. It follows that extracting the transferable module does not modify the correction network in any way.
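As a minimal sketch of this masking step, assume a toy representation in which a network is a list of layers and each layer is a list of filters (plain lists of floats). The hypothetical `extract_module` keeps the filters whose mask entry is 1 and copies them out, so the correction network itself is only read. In a real CNN, dropping output filters of one layer would also remove the matching input channels of the next layer; that bookkeeping is omitted here.

```python
from typing import List, Sequence

# Hypothetical toy representation: a filter is a list of weights,
# a layer is a list of filters, a network is a list of layers.
Filter = List[float]
Layer = List[Filter]


def extract_module(source: Sequence[Layer], masks: Sequence[Sequence[int]]) -> List[Layer]:
    """Return the filters of `source` selected by per-layer binary masks.

    masks[l][k] == 1 keeps filter k of layer l. The source network is never
    modified: every selected filter is copied into the new module.
    """
    if len(source) != len(masks):
        raise ValueError("need exactly one mask per layer")
    module: List[Layer] = []
    for layer, mask in zip(source, masks):
        if len(layer) != len(mask):
            raise ValueError("mask length must equal the number of filters")
        module.append([list(f) for f, keep in zip(layer, mask) if keep])
    return module
```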

To obtain a suitable transferable module M_f, we locate the explicit parts of the correction network M_s that contribute most to the final prediction. Before this, because neural networks are black boxes and we only need part of the network's predictions, we use LIME to fit the correction network and model its local behavior on the required task (see the paper for details).

The local modeling result is denoted G and is defined with respect to D_t, the training set corresponding to the required partial predictions (smaller than the training set of the original network).
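LIME works by perturbing an input, querying the black box, weighting each perturbed sample by its proximity to the original, and fitting a weighted linear surrogate. The sketch below shows only that generic recipe in pure Python, over d binary interpretable features around the all-features-on input. The distance (fraction of features switched off), the exponential kernel, the small ridge term and the function names are assumptions; how the paper applies LIME to M_s on D_t is described in the paper itself.

```python
import math
import random
from typing import Callable, List, Sequence


def _solve(a: List[List[float]], b: List[float]) -> List[float]:
    """Solve the linear system a @ x = b by Gauss-Jordan elimination."""
    n = len(b)
    m = [row[:] + [b[i]] for i, row in enumerate(a)]
    for col in range(n):
        # Partial pivoting for numerical stability.
        piv = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[piv] = m[piv], m[col]
        for r in range(n):
            if r != col:
                factor = m[r][col] / m[col][col]
                for c in range(col, n + 1):
                    m[r][c] -= factor * m[col][c]
    return [m[i][n] / m[i][i] for i in range(n)]


def lime_surrogate(black_box: Callable[[Sequence[int]], float], d: int,
                   n_samples: int = 500, kernel_width: float = 0.75,
                   ridge: float = 1e-6, seed: int = 0) -> List[float]:
    """Fit a LIME-style weighted linear surrogate; returns [intercept, w_1..w_d]."""
    rng = random.Random(seed)
    # Accumulate the weighted normal equations (X^T W X) beta = X^T W y.
    xtwx = [[0.0] * (d + 1) for _ in range(d + 1)]
    xtwy = [0.0] * (d + 1)
    for _ in range(n_samples):
        z = [rng.randint(0, 1) for _ in range(d)]        # random on/off perturbation
        dist = (d - sum(z)) / d                          # fraction of features turned off
        w = math.exp(-(dist ** 2) / kernel_width ** 2)   # proximity kernel
        x = [1.0] + [float(v) for v in z]                # leading 1.0 = intercept column
        y = black_box(z)
        for i in range(d + 1):
            xtwy[i] += w * x[i] * y
            for j in range(d + 1):
                xtwx[i][j] += w * x[i] * x[j]
    for i in range(1, d + 1):
        xtwx[i][i] += ridge                              # intercept is not penalized
    return _solve(xtwx, xtwy)
```

If the black box happens to be exactly linear in the features, the surrogate recovers its coefficients; for a real network the coefficients only describe behavior near the original input.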

The selection function M can therefore be optimized with an objective function (given in the paper) under which the localized key parts are fitted to the local model G.
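The paper's exact objective is not reproduced here. As a hedged illustration of fitting the selected filters to G, the sketch below assumes each filter's effect on the output is additive (`contribs[k][i]` is filter k's contribution on probe input i, `target[i]` is G's output there) and greedily switches on filters while the mean squared error to G plus a sparsity penalty `lam * |M|` keeps decreasing. Greedy forward selection is a swapped-in simplification, not necessarily the paper's optimizer.

```python
from typing import List, Sequence


def fit_mask(contribs: Sequence[Sequence[float]], target: Sequence[float],
             lam: float = 0.01) -> List[int]:
    """Greedily choose a binary filter mask M whose output fits the local model G.

    contribs[k][i]: assumed-additive contribution of filter k on probe input i.
    target[i]:      output of the local surrogate G on probe input i.
    lam:            sparsity penalty per selected filter.
    """
    n_filters, n_probes = len(contribs), len(target)

    def objective(mask: Sequence[int]) -> float:
        # Mean squared error to G plus lam * (number of selected filters).
        err = 0.0
        for i in range(n_probes):
            pred = sum(contribs[k][i] for k in range(n_filters) if mask[k])
            err += (pred - target[i]) ** 2
        return err / n_probes + lam * sum(mask)

    mask = [0] * n_filters
    best = objective(mask)
    while True:
        trials = []
        for k in range(n_filters):
            if not mask[k]:
                trial = mask.copy()
                trial[k] = 1
                trials.append((objective(trial), k))
        if not trials:
            break
        score, k = min(trials)
        if score >= best:  # no single extra filter lowers the objective: stop
            break
        mask[k] = 1
        best = score
    return mask
```

For example, if three filters contribute x, x² and x³ and G outputs x + x³, the sketch keeps the first and third filters and leaves the second off.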

## 2.2 Network module embedding

Once the transferable module M_f has been located in the correction network, it is extracted directly from M_s with the selection function M, without modifying the weights of M_s. The next step is to decide where in the ontology network M_t to embed M_f to obtain the best cloning performance.

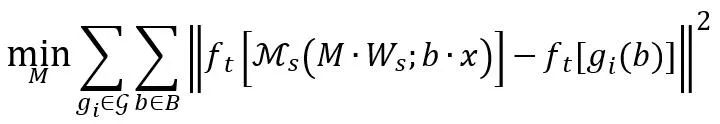

Module embedding is controlled by the position parameter R. Following most model reuse settings, network cloning keeps the first few layers of the ontology network as generic feature extractors, so the embedding process reduces to finding the best embedding position, i.e., embedding M_f at the R-th layer. This search is formulated as an optimization over R in the paper.

Please refer to the paper for a detailed explanation of the formula. In general, search-based embedding involves the following points:

- An adapter A must additionally be introduced at the embedding position, and the F_c layer must be fine-tuned again (for classification networks); however, the parameter counts of both are negligible compared with the entire Model Zoo.
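The search over positions can be sketched as an exhaustive loop. Here `evaluate` is a hypothetical callback standing in for the expensive part: attaching the frozen M_f at layer R of the frozen M_t, training only A and F_c, and returning a validation score (higher is better). Candidate positions would typically skip the first few layers kept as generic feature extractors.

```python
from typing import Callable, Dict, Iterable, Tuple


def search_embedding_position(candidates: Iterable[int],
                              evaluate: Callable[[int], float]) -> Tuple[int, Dict[int, float]]:
    """Try every candidate layer index R and return (best R, all scores).

    `evaluate(R)` is assumed to embed M_f at layer R, fit only A and F_c,
    and return a validation score; M_t and M_s stay frozen throughout.
    """
    scores: Dict[int, float] = {}
    for r in candidates:
        scores[r] = evaluate(r)
    if not scores:
        raise ValueError("no candidate positions given")
    # Dicts keep insertion order and max() keeps the first maximum,
    # so ties go to the earliest candidate tried.
    best = max(scores, key=scores.__getitem__)
    return best, scores
```

Returning all scores alongside the winner makes it easy to inspect how sensitive cloning is to the choice of R.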

The core of the proposed network cloning technique is to establish connection paths between pre-trained networks. Because it does not modify any parameters of the pre-trained networks, it can serve not only as a key technique for building a network super-body but also be applied flexibly to various practical scenarios.

Scenario 1: Network cloning makes it possible to use the Model Zoo online. When resources are limited, users can flexibly draw on an online Model Zoo without downloading the pre-trained networks locally.

Note that the cloned model is determined by the two pre-trained networks together with lightweight cloning parameters (such as the selection function M and the embedding position R; see the paper for the exact formulation), where M_t and M_s remain fixed throughout the process. Model cloning neither modifies any pre-trained model nor introduces a new model. It makes any combination of functions in the Model Zoo possible, and it also helps keep the Model Zoo ecosystem healthy, because a connection established with M and R is just a simple mask and position that is easy to undo. The proposed network cloning technique therefore supports building a sustainable online inference platform on top of a Model Zoo.
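To illustrate why such a connection is easy to undo, here is a sketch of a hypothetical online zoo in which a cloned model is stored only as a record naming M_t and M_s plus its mask M and position R (A and F_c are left out for brevity). The zoo's weights sit behind a read-only `MappingProxyType`, and a SHA-256 fingerprint checks that connecting and disconnecting clones leaves them untouched. All class and method names are illustrative.

```python
import hashlib
import json
from dataclasses import dataclass
from types import MappingProxyType
from typing import Dict, List, Mapping, Sequence, Tuple


@dataclass(frozen=True)
class CloneSpec:
    """Hypothetical record of one cloned model (A and F_c omitted)."""
    target: str               # zoo name of the ontology network M_t
    source: str               # zoo name of the correction network M_s
    mask: Tuple[int, ...]     # selection function M as a flat binary filter mask
    position: int             # embedding position R


class OnlineZoo:
    """Hypothetical zoo whose model weights are never modified by cloning."""

    def __init__(self, models: Dict[str, Sequence[float]]) -> None:
        # Store weights as tuples behind a read-only mapping.
        self._models: Mapping[str, Tuple[float, ...]] = MappingProxyType(
            {name: tuple(w) for name, w in models.items()})
        self._clones: Dict[str, CloneSpec] = {}

    def fingerprint(self) -> str:
        """SHA-256 over all zoo weights, used to check they stay unchanged."""
        blob = json.dumps({k: list(v) for k, v in self._models.items()}, sort_keys=True)
        return hashlib.sha256(blob.encode("utf-8")).hexdigest()

    def connect(self, name: str, spec: CloneSpec) -> None:
        """Register a clone; only the small (M, R) record is stored."""
        for ref in (spec.target, spec.source):
            if ref not in self._models:
                raise KeyError(f"unknown zoo model: {ref}")
        self._clones[name] = spec

    def disconnect(self, name: str) -> None:
        """Undo a clone by forgetting its record; no weights need restoring."""
        del self._clones[name]

    def clones(self) -> List[str]:
        return sorted(self._clones)
```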

Scenario 2: Networks produced by network cloning can be transmitted more efficiently. When a model has to be sent over a network, this technique can reduce transmission latency and loss.

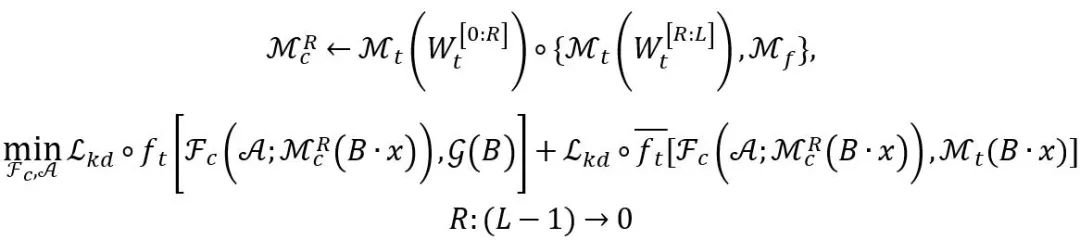

When transmitting a network, we only need to send the small set of cloning parameters (see the paper); combined with the public Model Zoo, the receiver can restore the original network. Compared with the entire cloned network, this set is very small, so transmission latency is reduced. If A and F_c still suffer some transmission loss, the receiver can easily repair them by fine-tuning on the dataset. Network cloning therefore provides a new network form for efficient transmission.
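A rough sketch of what a sender might serialize, under assumptions the paper does not specify: the mask M is sent as one bit per filter, R as a 32-bit integer, and the weights of A and F_c as float32 values. The helpers `pack_mask`, `unpack_mask` and `package_size` are hypothetical.

```python
import struct
from typing import List, Sequence


def pack_mask(mask: Sequence[int]) -> bytes:
    """Pack a binary filter mask M into bits, 8 filters per byte (LSB first)."""
    out = bytearray((len(mask) + 7) // 8)
    for i, bit in enumerate(mask):
        if bit:
            out[i // 8] |= 1 << (i % 8)
    return bytes(out)


def unpack_mask(data: bytes, n_filters: int) -> List[int]:
    """Receiver side: recover the first n_filters mask bits."""
    return [(data[i // 8] >> (i % 8)) & 1 for i in range(n_filters)]


def package_size(mask: Sequence[int], adapter_fc_params: int) -> int:
    """Bytes to send: packed mask + R as int32 + float32 weights of A and F_c."""
    r_bytes = struct.calcsize("<i")                            # 4 bytes for R
    weight_bytes = adapter_fc_params * struct.calcsize("<f")  # 4 bytes per float32
    return len(pack_mask(mask)) + r_bytes + weight_bytes
```

For instance, a 512-filter mask packs into 64 bytes, so with 1,000 parameters in A and F_c the package is 64 + 4 + 4,000 = 4,068 bytes, whereas sending a network with 10 million float32 parameters would take 40 MB.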

We conducted experiments on classification tasks. To evaluate how well the transferable module represents local behavior, we introduce a conditional similarity index (its formula is given in the paper), where Sim_cos(∙) denotes cosine similarity.
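The conditional similarity index itself is defined in the paper. The sketch below shows only its building block Sim_cos and, as an assumption about how a sub-dataset-by-sub-dataset matrix could be assembled, a pairwise matrix over one mean feature vector per sub-dataset, plus the mean of its off-diagonal entries (lower means the sub-datasets are better separated). Function names are hypothetical.

```python
import math
from typing import List, Sequence


def cosine(u: Sequence[float], v: Sequence[float]) -> float:
    """Sim_cos(u, v) = <u, v> / (|u| |v|)."""
    dot = sum(a * b for a, b in zip(u, v))
    nu = math.sqrt(sum(a * a for a in u))
    nv = math.sqrt(sum(b * b for b in v))
    if nu == 0 or nv == 0:
        raise ValueError("cosine similarity is undefined for zero vectors")
    return dot / (nu * nv)


def similarity_matrix(features: Sequence[Sequence[float]]) -> List[List[float]]:
    """Pairwise Sim_cos between (assumed) mean feature vectors of sub-datasets."""
    return [[cosine(a, b) for b in features] for a in features]


def mean_off_diagonal(matrix: Sequence[Sequence[float]]) -> float:
    """Average cross-sub-dataset similarity; needs at least a 2x2 matrix."""
    n = len(matrix)
    vals = [matrix[i][j] for i in range(n) for j in range(n) if i != j]
    return sum(vals) / len(vals)
```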

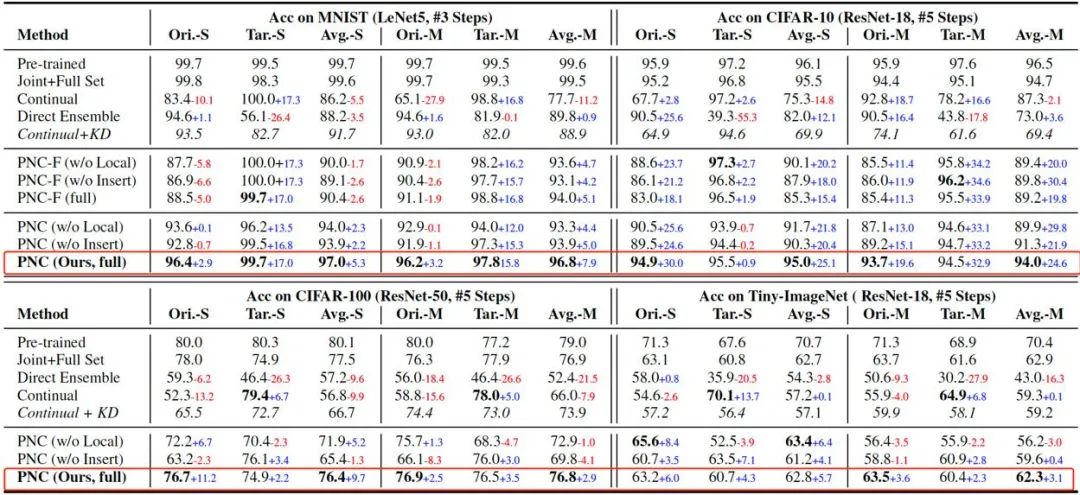

The table above reports results on MNIST, CIFAR-10, CIFAR-100 and Tiny-ImageNet. The model obtained by network cloning (PNC) shows the most significant performance improvement, whereas fine-tuning the entire network (PNC-F) does not improve performance; instead, it increases the model's bias.

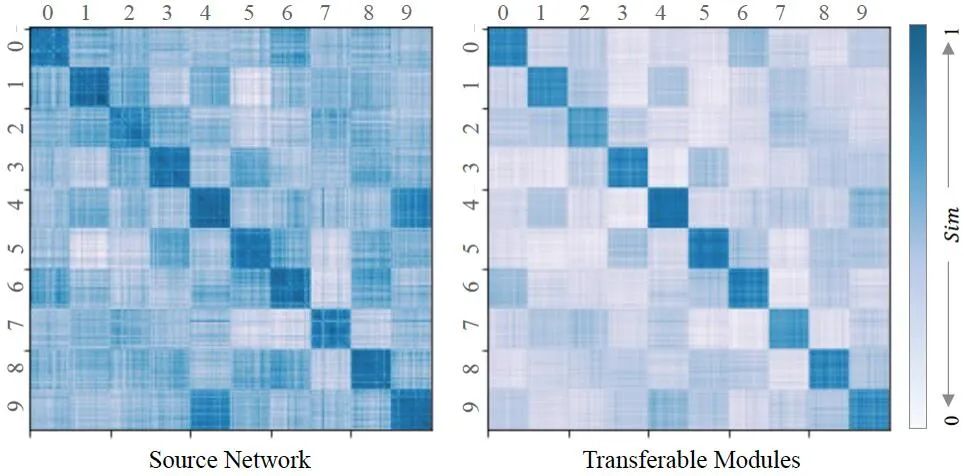

Beyond this, we evaluated the quality of the transferable modules, as shown above. The left plot shows that the features learned from the different sub-datasets are all correlated to some degree, which underlines the importance of extracting and localizing local features from the correction network. For the transferable modules, we computed the similarity Sim(∙). The right plot shows that a transferable module is highly similar to the sub-dataset to be cloned, while its relationship with the remaining sub-datasets is weakened (the off-diagonal areas are lighter than in the matrix plot of the source network). We therefore conclude that the transferable module successfully reproduces the local behavior on the task set to be cloned, which supports the correctness of the localization strategy.

This paper studies a new knowledge transfer task called Partial Network Cloning (PNC), which clones a parameter module from a correction network and embeds it into the ontology network. Unlike previous knowledge transfer setups, which rely on updating network parameters, our approach keeps the parameters of all pre-trained models unchanged. The core of PNC is to simultaneously locate the key parts of the network and embed the transferable module; the two steps reinforce each other.

We demonstrate outstanding results for our method on accuracy and transferability metrics across multiple datasets.
