Large language models (LLMs) have demonstrated excellent performance on a variety of complex tasks through in-context learning, without requiring task-specific training or fine-tuning. Recent advances in prompting and decoding have also made it practical for LLMs to solve complex reasoning tasks.

However, LLMs may store outdated, incomplete, or incorrect knowledge, so external knowledge sources (such as Wikipedia) are crucial for deploying LLMs successfully in real-world applications. Previous work has applied external knowledge to smaller language models (LMs) such as T5, BERT, and RoBERTa, but these methods often require additional training or fine-tuning, which is costly and impractical for LLMs.

Based on this, researchers from the University of Rochester, Tencent AI Lab, and the University of Pennsylvania jointly proposed a post-processing method called Rethinking with Retrieval (RR) to leverage external knowledge in LLMs.

Paper address: https://arxiv.org/pdf/2301.00303v1.pdf

The idea of this research is to first use chain-of-thought (CoT) prompting to generate a diverse set of reasoning paths, similar to the method of Wang et al. (2022). The study then uses each reasoning step in these paths to retrieve relevant external knowledge, allowing the RR method to provide more plausible explanations and more accurate predictions.

This study uses GPT-3 175B and several common external knowledge sources (Wikipedia, Wikidata, WordNet, and ConceptNet) to evaluate the effectiveness of the RR method on three complex reasoning tasks: commonsense reasoning, temporal reasoning, and tabular reasoning. Experimental results show that RR consistently outperforms other methods on these three tasks without additional training or fine-tuning, indicating that RR is effective at leveraging external knowledge to improve LLM performance.

Rethinking with Retrieval

In practice, even when an LLM accurately captures the elements needed to answer a question, it sometimes still produces an incorrect result. This suggests that there are problems in the way LLMs store and retrieve knowledge.

The general idea of the RR method is as follows. Given an input question Q, RR first uses chain-of-thought prompting to generate a diverse set of reasoning paths R_1, R_2, ..., R_N, where each reasoning path R_i consists of an explanation E_i followed by a prediction P_i. RR then retrieves relevant knowledge K_1, ..., K_M from an appropriate knowledge base KB to support the explanations in each reasoning path, and selects the prediction that best fits that knowledge.

Chain-of-thought (CoT) prompting. Unlike standard prompting, CoT prompting includes demonstrations of step-by-step reasoning in the prompt, so that the model generates a series of short sentences that capture the reasoning process.

For example, given the input question "Did Aristotle use a laptop?", CoT prompting aims to generate a complete reasoning path such as "Aristotle died in 322 BC. The first laptop was invented in 1980. Therefore, Aristotle did not use a laptop. So the answer is no." instead of simply outputting "No".
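The prompt format described above can be sketched as follows. This is a minimal illustration, not the prompt used in the paper: the `Demo` class, the `build_cot_prompt` function, and the `Q:`/`A:` layout are all assumptions made for this example. Only the two questions and the Aristotle reasoning come from the article.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Demo:
    """One worked demonstration shown to the model (hypothetical structure)."""
    question: str
    reasoning: str  # step-by-step explanation
    answer: str     # short final answer, e.g. "yes" or "no"


def build_cot_prompt(demos: List[Demo], question: str) -> str:
    """Join worked demonstrations, then leave the new question open for the model."""
    parts = [
        f"Q: {d.question}\nA: {d.reasoning} So the answer is {d.answer}."
        for d in demos
    ]
    parts.append(f"Q: {question}\nA:")
    return "\n\n".join(parts)


demos = [
    Demo(
        question="Did Aristotle use a laptop?",
        reasoning=(
            "Aristotle died in 322 BC. The first laptop was invented in 1980. "
            "Therefore, Aristotle did not use a laptop."
        ),
        answer="no",
    )
]

prompt = build_cot_prompt(
    demos,
    "Will Albany, Georgia reach 100,000 residents before Albany, New York?",
)
print(prompt)
```

Because each demonstration spells out its reasoning before the answer, the model is nudged to continue the final `A:` with reasoning steps of its own rather than a bare "yes" or "no".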

Sampling diverse reasoning paths. Similar to Wang et al. (2022), this study samples a diverse set of reasoning paths R_1, R_2, ..., R_N instead of considering only the single greedy path as in Wei et al. (2022). For the question "Did Aristotle use a laptop?", possible reasoning paths include:

(R_1) Aristotle died in 2000. The first laptop was invented in 1980. So Aristotle used a laptop. So the answer to this question is yes.

(R_2) Aristotle died in 322 BC. The first laptop was invented in 2000. Therefore, Aristotle did not use a laptop. So the answer is no.
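Once several reasoning paths have been sampled, each one has to be split into its explanation E_i and its prediction P_i. The sketch below shows one way to do that for the two paths above. The `ReasoningPath` type, the function names, and the answer-matching regular expression are assumptions made for this example, and the sampled texts are passed in as plain strings rather than obtained from a model.

```python
import re
from typing import List, NamedTuple, Optional


class ReasoningPath(NamedTuple):
    explanation: List[str]     # individual reasoning steps (E_i)
    prediction: Optional[str]  # final answer (P_i), or None if none was found


# Assumed answer pattern: matches both "So the answer is no." and
# "So the answer to this question is yes."
_ANSWER = re.compile(
    r"So the answer(?: to this question)? is (\w+)\.?\s*$", re.IGNORECASE
)


def split_steps(text: str) -> List[str]:
    """Naive sentence split on whitespace that follows a period."""
    return [s.strip() for s in re.split(r"(?<=\.)\s+", text.strip()) if s.strip()]


def parse_path(text: str) -> ReasoningPath:
    """Separate the reasoning steps from the trailing answer sentence."""
    text = text.strip()
    match = _ANSWER.search(text)
    if match is None:
        return ReasoningPath(split_steps(text), None)
    return ReasoningPath(split_steps(text[: match.start()]), match.group(1).lower())


samples = [
    "Aristotle died in 2000. The first laptop was invented in 1980. "
    "So Aristotle used a laptop. So the answer to this question is yes.",
    "Aristotle died in 322 BC. The first laptop was invented in 2000. "
    "Therefore, Aristotle did not use a laptop. So the answer is no.",
]

paths = [parse_path(s) for s in samples]
for p in paths:
    print(p.prediction, p.explanation)
```

Keeping the steps as separate sentences matters for the next stage, because each step is used as its own retrieval query.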

Knowledge retrieval. Different knowledge bases can be used for different tasks. For example, to answer the question "Did Aristotle use a laptop?", Wikipedia can serve as the external knowledge base KB. Information retrieval techniques can then retrieve relevant knowledge K_1, ..., K_M from Wikipedia based on the decomposed reasoning steps. Ideally, the following two paragraphs would be retrieved from Wikipedia for this question:

(K_1) Aristotle (384 BC to 322 BC) was a Greek philosopher and polymath in the classical period of ancient Greece

(K_2) The first laptop computer, the Epson HX-20, was invented in 1980...
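To make the retrieval step concrete, the sketch below matches each reasoning step against a small passage list. It uses a toy Jaccard token-overlap score as a stand-in for a real retriever. This is an assumption chosen for brevity and is not the retrieval method used in the paper. The `tokenize` and `retrieve` names are hypothetical.

```python
import re
from typing import List, Set, Tuple


def tokenize(text: str) -> Set[str]:
    """Lowercase the text and keep alphanumeric tokens."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(step: str, passages: List[str], top_k: int = 1) -> List[Tuple[float, str]]:
    """Rank passages by Jaccard token overlap with one reasoning step."""
    query = tokenize(step)
    scored = []
    for passage in passages:
        tokens = tokenize(passage)
        union = query | tokens
        score = len(query & tokens) / len(union) if union else 0.0
        scored.append((score, passage))
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return scored[:top_k]


# The two passages quoted above, standing in for a Wikipedia index.
passages = [
    "Aristotle (384 BC to 322 BC) was a Greek philosopher and polymath "
    "in the classical period of ancient Greece",
    "The first laptop computer, the Epson HX-20, was invented in 1980...",
]

steps = ["Aristotle died in 2000.", "The first laptop was invented in 1980."]
for step in steps:
    score, passage = retrieve(step, passages)[0]
    print(f"{step!r} -> {passage[:40]!r}")
```

Note that the first step retrieves the Aristotle passage even though the step itself is factually wrong. Retrieval only finds relevant knowledge. Checking the step against that knowledge happens in the later scoring stage.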

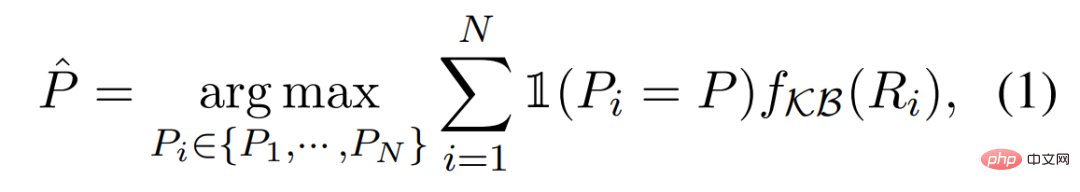

Faithful inference. The confidence of each reasoning path R_i can be estimated with a function f_KB(R_i), which is based on the relevant knowledge K_1, ..., K_M retrieved from the knowledge base KB. The final prediction is then selected according to these confidence scores.
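One plausible reading of this step is a confidence-weighted vote: each path R_i adds f_KB(R_i) to the tally of its own prediction P_i, and the prediction with the largest total is returned. This reading is an assumption, not the paper's exact formula. The scoring function in the sketch below is a deliberately crude stand-in for f_KB (the share of numbers in an explanation that also appear in the retrieved knowledge), and all function names are hypothetical. The third path reuses the fully correct reasoning from the CoT example earlier in the article.

```python
import re
from collections import defaultdict
from typing import Callable, Dict, List, Tuple

ScoredInput = Tuple[str, str]  # (explanation E_i, prediction P_i)


def make_toy_f_kb(knowledge: List[str]) -> Callable[[str], float]:
    """Build a toy confidence function from retrieved passages (assumption)."""
    known_numbers = set(re.findall(r"\d+", " ".join(knowledge)))

    def f_kb(explanation: str) -> float:
        numbers = re.findall(r"\d+", explanation)
        if not numbers:
            return 0.0
        # Share of the numbers in the explanation that the knowledge confirms.
        return sum(n in known_numbers for n in numbers) / len(numbers)

    return f_kb


def faithful_inference(paths: List[ScoredInput], f_kb: Callable[[str], float]) -> str:
    """Return the prediction with the highest summed confidence."""
    totals: Dict[str, float] = defaultdict(float)
    for explanation, prediction in paths:
        totals[prediction] += f_kb(explanation)
    return max(totals, key=totals.get)


knowledge = [
    "Aristotle (384 BC to 322 BC) was a Greek philosopher and polymath "
    "in the classical period of ancient Greece",
    "The first laptop computer, the Epson HX-20, was invented in 1980...",
]

paths = [
    ("Aristotle died in 2000. The first laptop was invented in 1980. "
     "So Aristotle used a laptop.", "yes"),
    ("Aristotle died in 322 BC. The first laptop was invented in 2000. "
     "Therefore, Aristotle did not use a laptop.", "no"),
    ("Aristotle died in 322 BC. The first laptop was invented in 1980. "
     "Therefore, Aristotle did not use a laptop.", "no"),
]

f_kb = make_toy_f_kb(knowledge)
best = faithful_inference(paths, f_kb)
print(best)
```

On this toy input the first two paths each get half of their dates confirmed and the third gets both confirmed, so the vote selects "no". A realistic f_KB would need to judge whether the retrieved text supports or contradicts each step, not just match numbers.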

The study then evaluates RR on three complex reasoning tasks: commonsense reasoning, temporal reasoning, and tabular reasoning.

Experimental settings. In all experiments, the study uses GPT-3 text-davinci-002 unless otherwise stated. The maximum number of tokens generated per completion is set to 256. For zero-shot, few-shot, and chain-of-thought prompting, the temperature is fixed at 0.

Results. As shown in Table 1, the proposed method, RR, consistently outperforms all baselines on all three reasoning tasks without additional training or fine-tuning. These results highlight the effectiveness of RR in leveraging external knowledge to improve LLM performance.

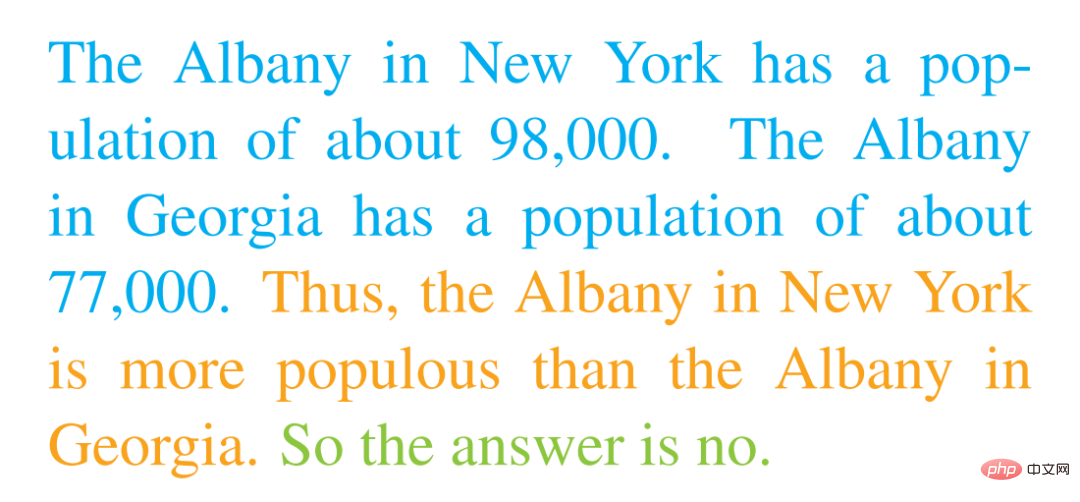

The study also analyzes GPT-3 with CoT prompting on the StrategyQA dataset. After carefully examining the output of GPT-3, the study observed that GPT-3 can provide reasonable explanations and correct predictions for many questions. For example, when given the question "Will Albany, Georgia reach 100,000 residents before Albany, New York?", GPT-3 produced the following output:

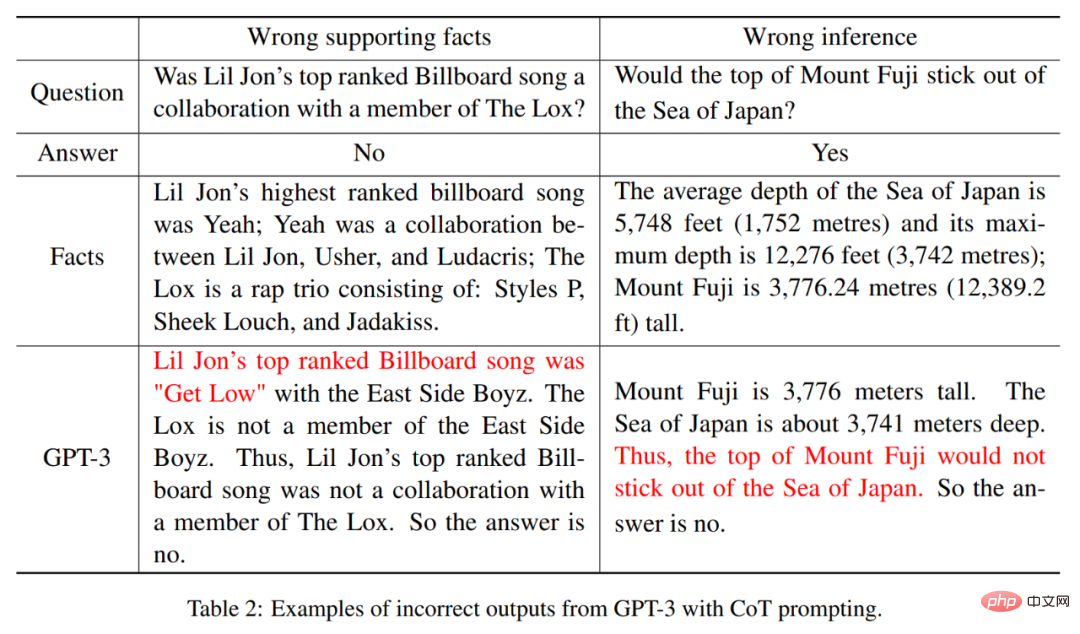

Overall, the quality of the answers is very high. However, the study also observed that GPT-3 may occasionally provide incorrect supporting facts for its explanations or reason incorrectly toward its predictions, although it is generally able to identify appropriate perspectives.

Wrong supporting facts. As shown in Table 2, GPT-3 provides an incorrect supporting fact about Lil Jon's highest-charting song on the Billboard chart, stating that it is "Get Low" instead of the correct answer, "Yeah". Additionally, GPT-3 incorrectly reasoned that the summit of Mount Fuji cannot be higher than the Sea of Japan, when the correct answer is that it is.

Please refer to the original paper for more technical details.
