How to improve the speed of PyTorch "Alchemy"?

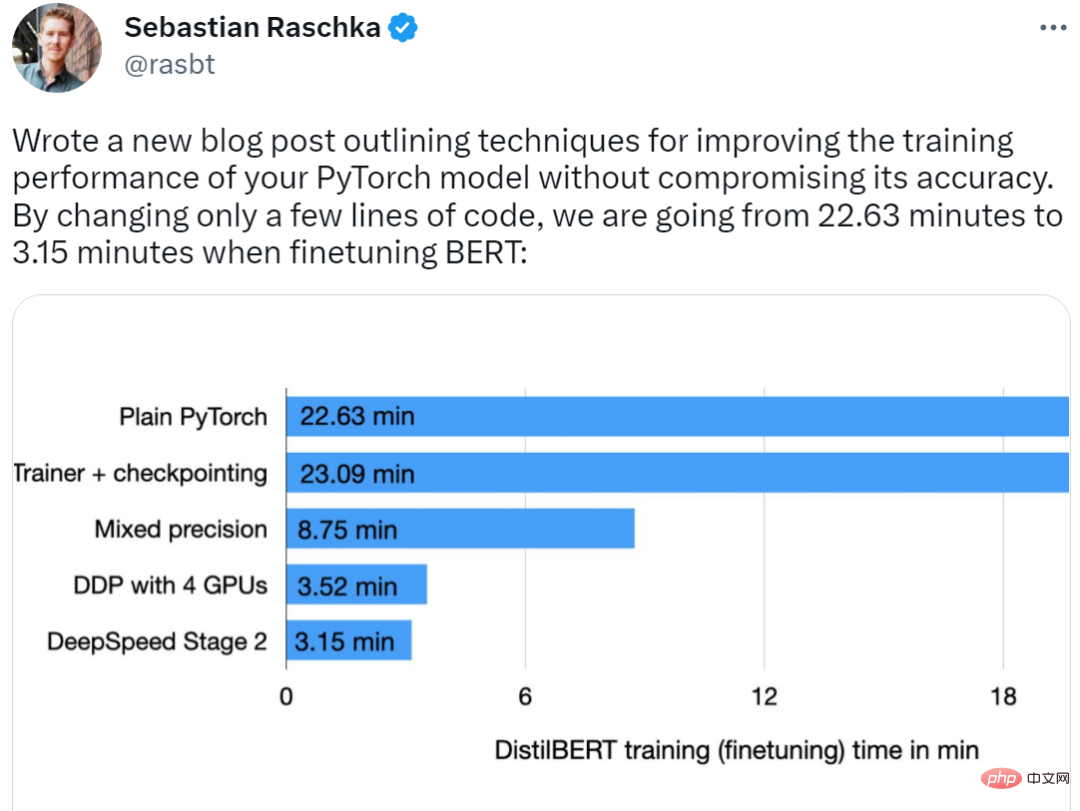

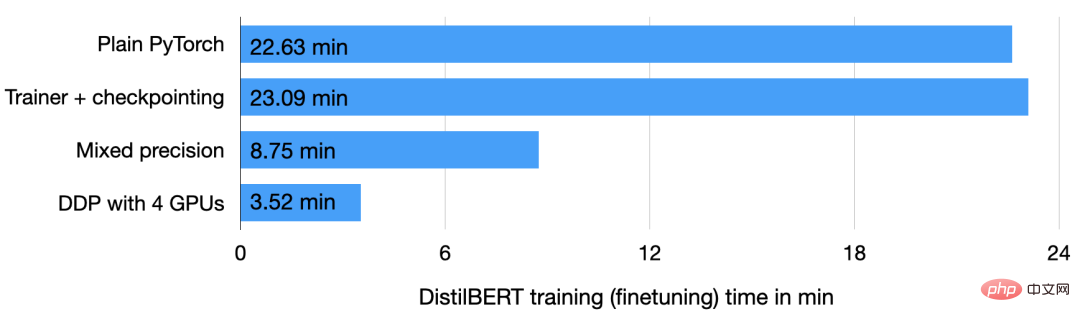

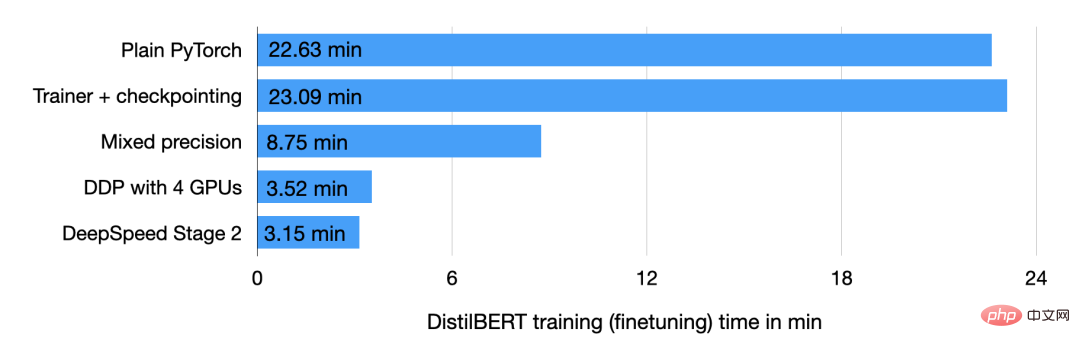

Recently, the well-known machine learning and AI researcher Sebastian Raschka shared his tricks. According to him, changing only a few lines of code cut the time needed to fine-tune BERT from 22.63 minutes to 3.15 minutes without affecting the model's accuracy, a training speedup of roughly 7x.

The author even states that if you have 8 GPUs available, the entire training process takes only 2 minutes, an 11.5x speedup.

Let’s take a look at how he achieved it.

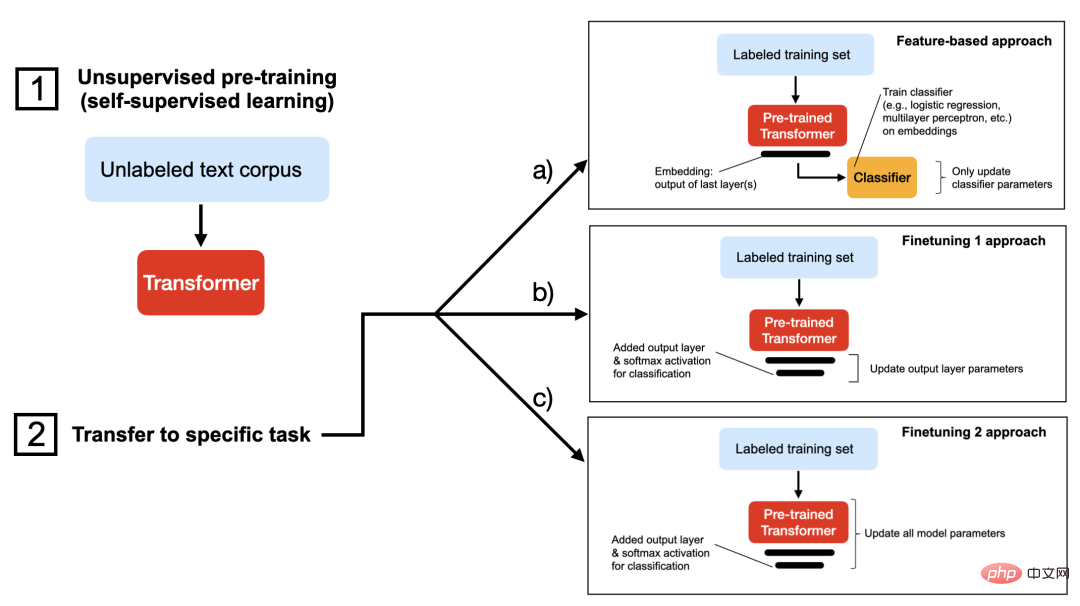

Make PyTorch model training faster

The first piece is the model. The author uses the DistilBERT model for this study. It is a streamlined version of BERT that is 40% smaller, with almost no loss in performance. The second piece is the dataset. The training data is the IMDB Large Movie Review dataset, which contains a total of 50,000 movie reviews. The author uses method c in the figure below to predict the sentiment of the movie reviews in the dataset.

With the basic task laid out, next comes the PyTorch training process. To help readers understand the task, the author first walks through a warm-up exercise: how to train the DistilBERT model on the IMDB movie review dataset. If you want to run the code yourself, you can set up a virtual environment with the relevant Python libraries, as shown below:

The versions of the relevant software are as follows:

Skipping the tedious data-loading details, you only need to know that the article splits the dataset into 35,000 training examples, 5,000 validation examples, and 10,000 test examples. The required code is as follows:

Screenshot of part of the code
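The split code itself is not reproduced in this article. As a minimal sketch, assuming a simple seeded shuffle of example indices (the helper name `split_indices` and the seed are illustrative and not taken from the original code), a 35,000 / 5,000 / 10,000 split of the 50,000 reviews could look like this:

```python
import random


def split_indices(n_total=50_000, n_train=35_000, n_val=5_000, seed=123):
    """Shuffle example indices and cut them into train/val/test parts.

    Hypothetical helper for illustration: the sizes come from the article,
    the seed and the function name are assumptions.
    """
    indices = list(range(n_total))
    random.Random(seed).shuffle(indices)  # seeded so the split is reproducible
    train = indices[:n_train]
    val = indices[n_train:n_train + n_val]
    test = indices[n_train + n_val:]  # the remaining 10,000 examples
    return train, val, test


train_idx, val_idx, test_idx = split_indices()
print(len(train_idx), len(val_idx), len(test_idx))
```

Fixing the seed keeps the three subsets identical across runs, which matters when comparing training times and accuracies between code variants.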

Full code address:

https://github.com/rasbt/faster-pytorch-blog/blob/main/1_pytorch-distilbert.py

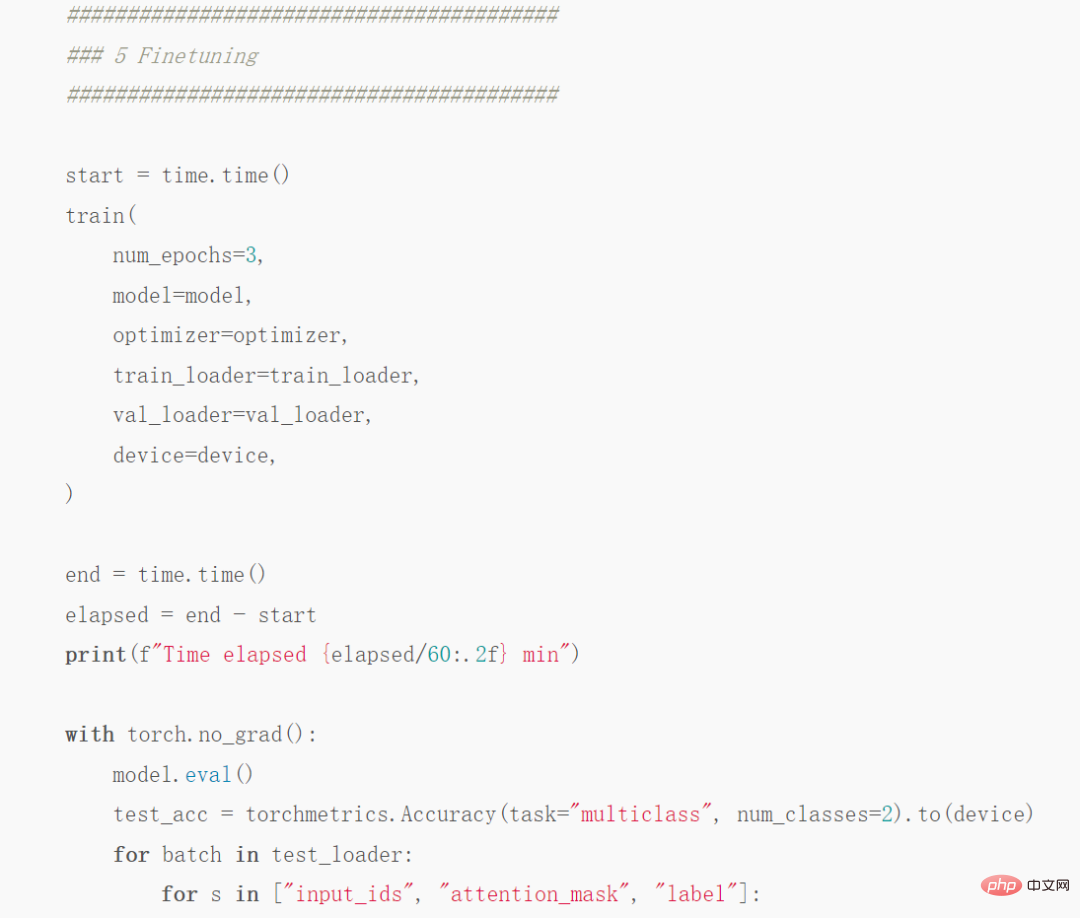

Running the code on an A100 GPU then gives the following results:

Partial results screenshot

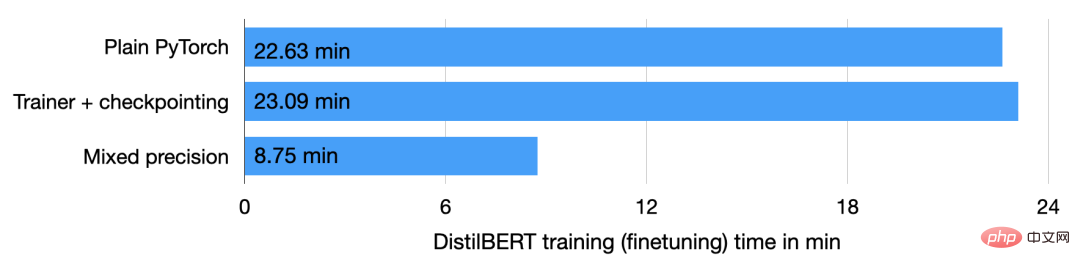

As the results above show, the model begins to overfit slightly from epoch 2 to epoch 3: the validation accuracy drops from 92.89% to 92.09%. After fine-tuning the model for 22.63 minutes, the final test accuracy is 91.43%.

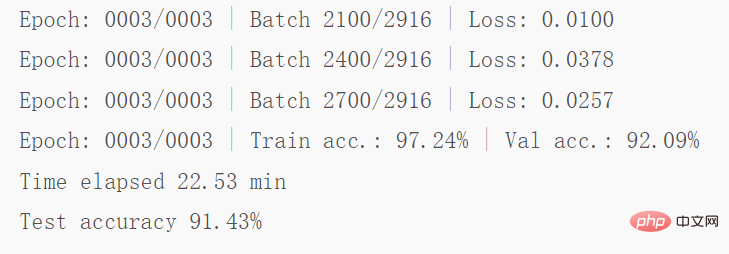

Use the Trainer class

The next step is to improve the code above. The main improvement is to wrap the PyTorch model in a LightningModule so that the Trainer class from Lightning can be used. Some code screenshots are as follows:

Full code address: https://github.com/rasbt/faster-pytorch-blog/blob/main/2_pytorch-with-trainer.py

The code above creates a LightningModule, which defines how to perform training, validation, and testing. Compared with the earlier code, the main change is in Part 5 (i.e., "5 Finetuning"), the part that fine-tunes the model. Unlike before, the fine-tuning part wraps the PyTorch model in the LightningModel class and uses the Trainer class to fit the model.

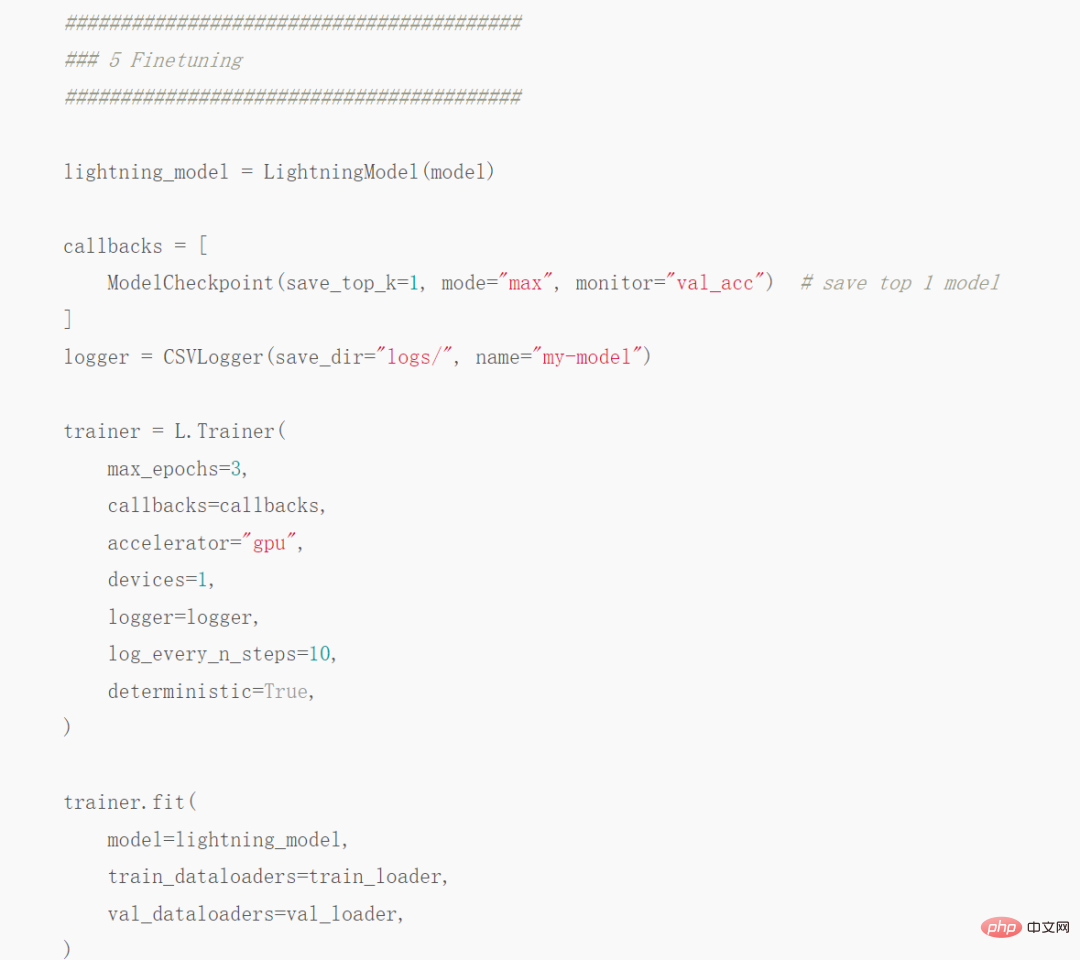

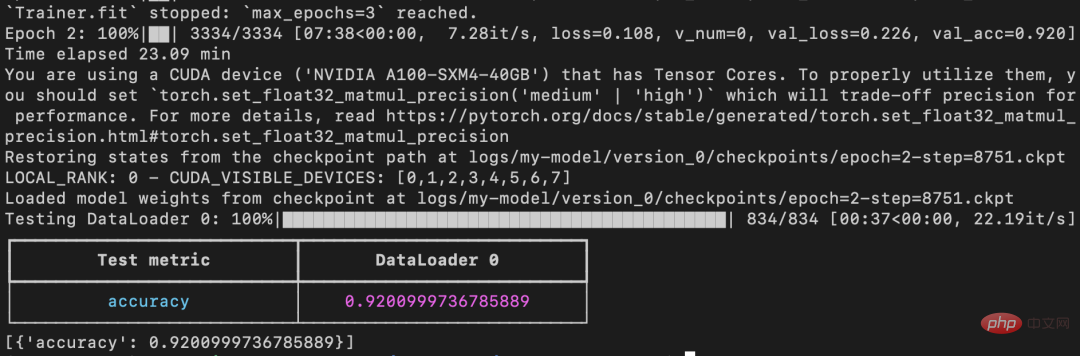

The previous code showed the validation accuracy dropping from epoch 2 to epoch 3, but the improved code uses ModelCheckpoint to load the best model. On the same machine, the model reaches 92% test accuracy in 23.09 minutes.

Note that if checkpointing is disabled and PyTorch is allowed to run in non-deterministic mode, this run ends up with the same running time as plain PyTorch (22.63 minutes instead of 23.09 minutes).
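The selection rule behind "load the best model" can be sketched in plain Python. This only illustrates the idea using the two validation accuracies quoted above; in Lightning the same rule is typically expressed with a callback such as `ModelCheckpoint(monitor="val_acc", mode="max", save_top_k=1)`, where the metric name `val_acc` is an assumption about what the module logs.

```python
# Validation accuracies (in percent) reported in the article for epochs 2 and 3.
val_acc_by_epoch = {2: 92.89, 3: 92.09}


def best_epoch(val_acc):
    """Return the epoch whose validation accuracy is highest."""
    return max(val_acc, key=val_acc.get)


chosen = best_epoch(val_acc_by_epoch)
print(chosen)
```

Evaluating the checkpoint from the chosen epoch, rather than the weights from the last epoch, is what protects the test result from the slight overfitting seen at the end of training.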

Automatic mixed precision training

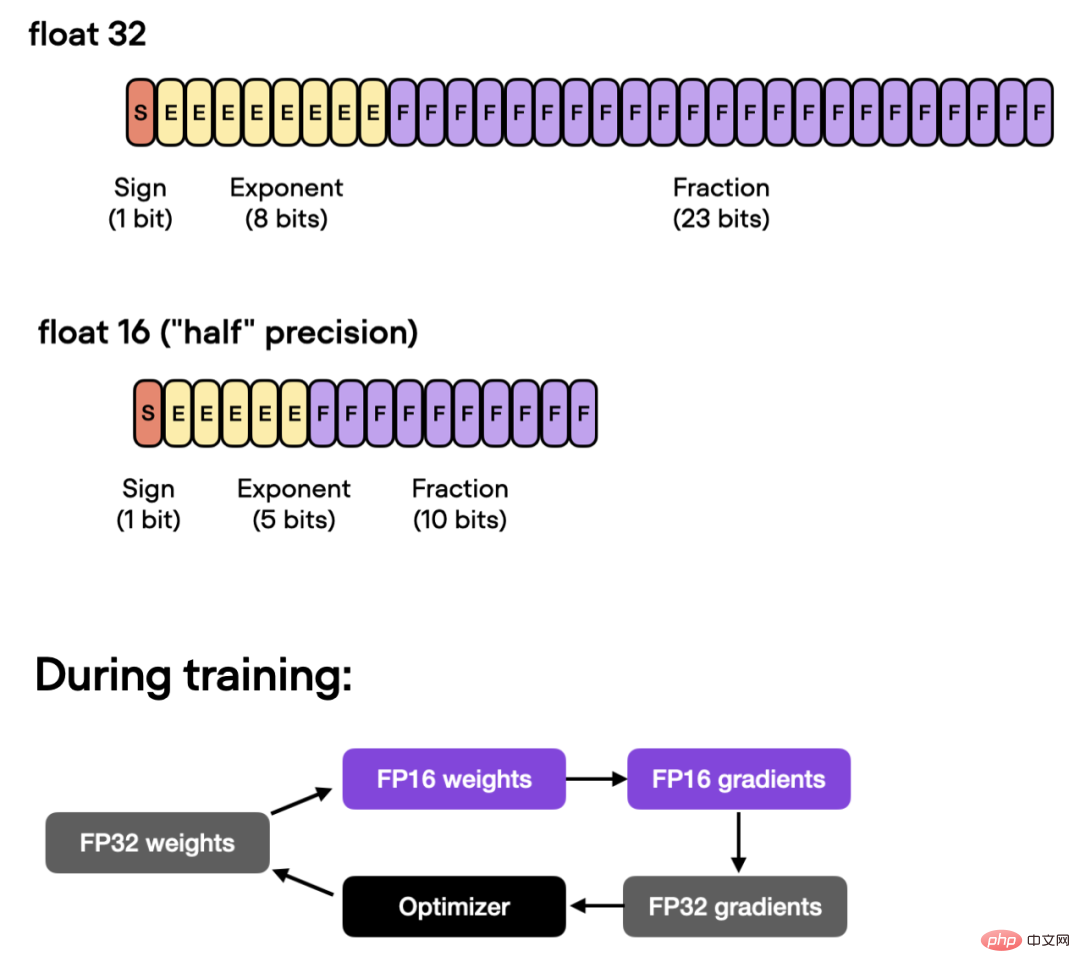

Furthermore, if the GPU supports mixed-precision training, you can enable it to improve computational efficiency. The author uses automatic mixed-precision training, which switches between 32-bit and 16-bit floating point without sacrificing accuracy.

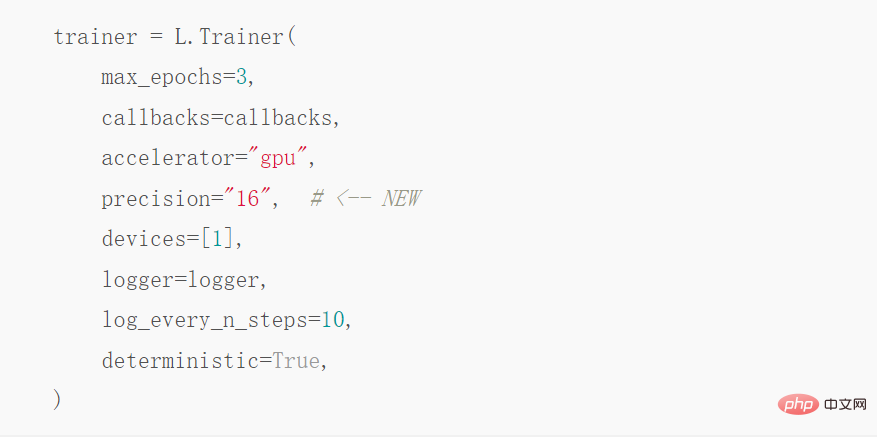

With this optimization, automatic mixed-precision training is enabled through the Trainer class.

This change reduces the training time from 23.09 minutes to 8.75 minutes, which is almost 3 times faster (about 2.6x). The accuracy on the test set is 92.2%, a slight improvement over the previous 92.0%.
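To make the mechanism concrete, here is a minimal sketch of automatic mixed precision using `torch.autocast` directly. It is not the author's Trainer configuration: the article's runs use 16-bit floats on a GPU, whereas this sketch assumes CPU execution with `bfloat16` so that it runs without a GPU. In Lightning the equivalent switch is the Trainer's `precision` argument (for example `precision="16-mixed"` in Lightning 2.x; the accepted strings depend on the installed version).

```python
import torch
from torch import nn

layer = nn.Linear(8, 4)  # parameters are created in float32
x = torch.randn(2, 8)    # float32 input

# Inside the autocast region, eligible ops such as the linear layer run in
# the lower-precision dtype, while the stored weights remain float32.
with torch.autocast(device_type="cpu", dtype=torch.bfloat16):
    y = layer(x)

print(layer.weight.dtype, y.dtype)
```

Keeping the master weights in 32-bit while doing the heavy matrix multiplications in 16-bit is what lets the run get faster without the accuracy loss that a fully 16-bit model might suffer.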

Use torch.compile static graphs

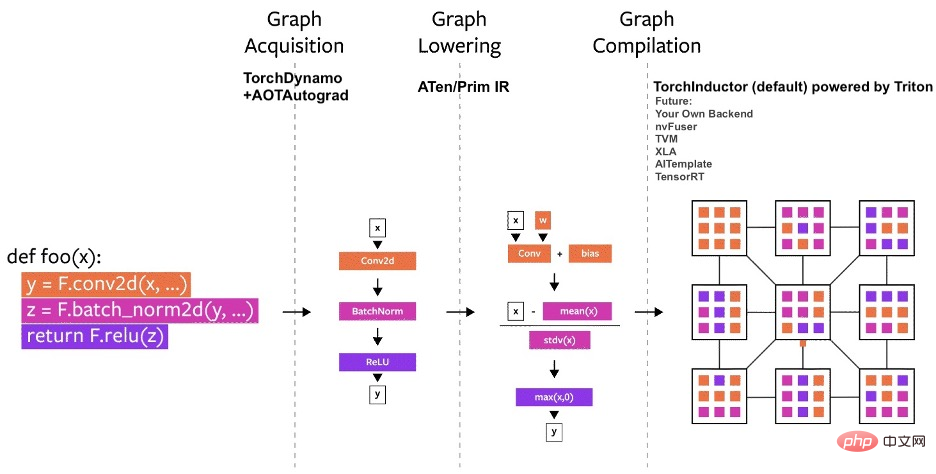

The recent PyTorch 2.0 announcement shows that the PyTorch team has introduced a new torch.compile function. This function can speed up PyTorch code execution by generating optimized static graphs instead of running PyTorch code with dynamic graphs.

Since PyTorch 2.0 has not yet been officially released, you must first install torchtriton and update to the latest version of PyTorch to use this feature.

Then modify the code by adding this line:
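The original line is not reproduced here. As a minimal sketch of how `torch.compile` wraps a model, assuming PyTorch 2.x on a supported platform and a small stand-in network instead of DistilBERT: the `backend="eager"` argument is used only so the sketch runs without a compiler toolchain; leaving it out selects the default backend, which is the one that generates optimized code.

```python
import torch
from torch import nn

# Small stand-in network; the article compiles the DistilBERT model instead.
model = nn.Sequential(nn.Linear(8, 16), nn.ReLU(), nn.Linear(16, 2))

# torch.compile returns a wrapped module; the graph is captured lazily on
# the first call. backend="eager" skips code generation (an assumption made
# here for portability), so this shows the API shape rather than a speedup.
compiled_model = torch.compile(model, backend="eager")

x = torch.randn(4, 8)
with torch.no_grad():
    eager_out = model(x)
    compiled_out = compiled_model(x)
```

The compiled module is a drop-in replacement for the original: it shares the same parameters and is called the same way, so the rest of the training code does not need to change.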

Distributed data parallelism on 4 GPUs

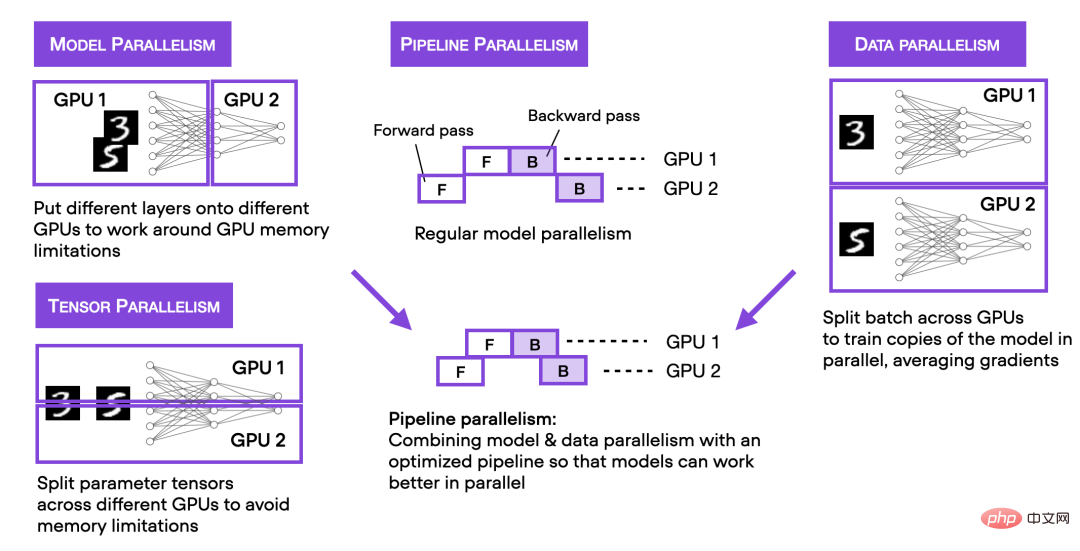

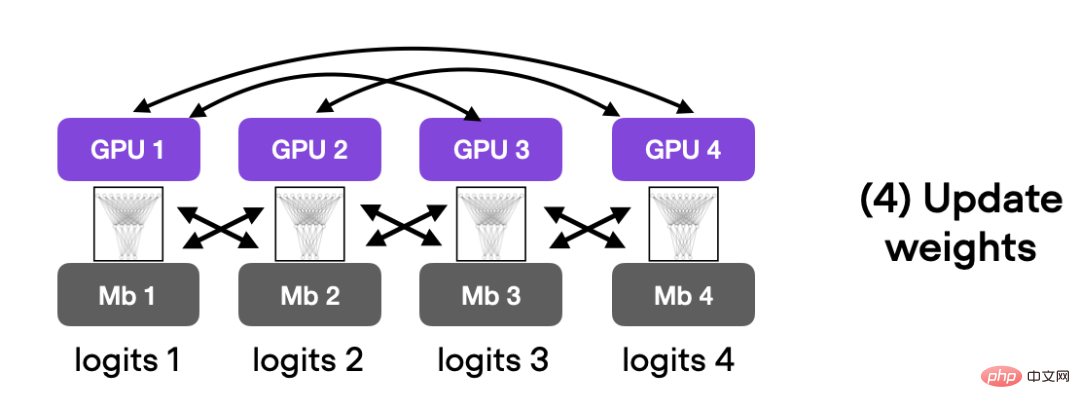

The sections above describe accelerating the code on a single GPU with mixed-precision training. Next come multi-GPU training strategies. The figure below summarizes several different multi-GPU training techniques.

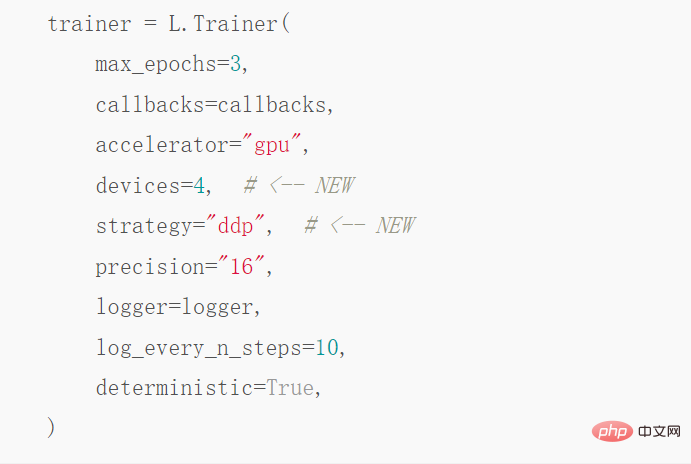

Distributed data parallelism can be achieved with DistributedDataParallel; with the Trainer, it takes only a one-line change.

After this optimization, the code runs in 3.52 minutes on 4 A100 GPUs and reaches 93.1% test accuracy.
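The core idea of data parallelism is that each GPU holds a full copy of the model and processes a different slice of the data. The sketch below illustrates only that sharding step with PyTorch's `DistributedSampler`, using a stand-in dataset of 35,000 items; it does not launch any processes or GPUs. With Lightning, the Trainer sets this up automatically when created with arguments such as `strategy="ddp"` and `devices=4` (the exact arguments used by the author are assumed here, not quoted).

```python
import torch
from torch.utils.data import TensorDataset
from torch.utils.data.distributed import DistributedSampler

NUM_GPUS = 4
# Stand-in for the 35,000 training examples: each item is just its own index.
dataset = TensorDataset(torch.arange(35_000))

# Passing num_replicas and rank explicitly means no process group is needed.
shards = [
    list(DistributedSampler(dataset, num_replicas=NUM_GPUS, rank=rank, shuffle=False))
    for rank in range(NUM_GPUS)
]

print([len(shard) for shard in shards])
```

Each rank sees a quarter of the training set per epoch, so an epoch takes roughly a quarter of the steps; the gradients are then averaged across the GPUs so that all model copies stay in sync.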

DeepSpeed

Finally, the author explores the results of using the deep learning optimization library DeepSpeed and the multi-GPU strategy in Trainer. First you must install the DeepSpeed library:

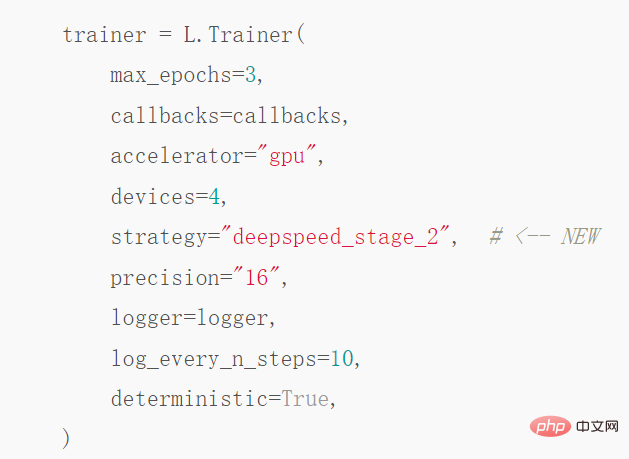

Then you only need to change one line of code to enable the library:
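The changed line is not reproduced here. As a hedged sketch of what a one-line switch looks like, the helper below builds Trainer keyword arguments for two strategies and checks that they differ in a single entry. The helper name `trainer_kwargs`, the epoch count, the device count, and the specific `"deepspeed_stage_2"` alias are assumptions for illustration; the strings are strategy names that Lightning accepts, but the author's exact settings may differ.

```python
def trainer_kwargs(strategy, devices=4):
    """Build keyword arguments for a Lightning Trainer (hypothetical helper)."""
    return {
        "max_epochs": 3,      # assumed: the article reports three epochs
        "accelerator": "gpu",
        "devices": devices,   # assumed: same 4 GPUs as the DDP run
        "strategy": strategy,
    }


ddp_kwargs = trainer_kwargs("ddp")
deepspeed_kwargs = trainer_kwargs("deepspeed_stage_2")

# The two configurations differ in exactly one entry.
changed = {key for key in ddp_kwargs if ddp_kwargs[key] != deepspeed_kwargs[key]}
print(changed)

# On a multi-GPU machine with DeepSpeed installed, one would then create the
# trainer with: lightning.Trainer(**deepspeed_kwargs)
```

Because the strategy is just a Trainer argument, the LightningModule and the data loaders stay untouched when switching between multi-GPU backends.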

With this change, training takes 3.15 minutes and reaches a test accuracy of 92.6%. PyTorch also has its own alternative to DeepSpeed: fully sharded DataParallel (FSDP), enabled with strategy="fsdp", which takes 3.62 minutes to complete.

That is the author's approach to speeding up PyTorch model training. Interested readers can follow the original blog and try it themselves.
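To put the reported timings side by side, the short calculation below derives each speedup relative to the plain PyTorch baseline. The run times are the ones quoted in this article; the labels are shorthand chosen here.

```python
# Run times in minutes, as reported in the article.
runtimes = {
    "plain PyTorch": 22.63,
    "Trainer": 23.09,
    "Trainer + mixed precision": 8.75,
    "4 GPUs, DDP": 3.52,
    "DeepSpeed": 3.15,
    "FSDP": 3.62,
}

baseline = runtimes["plain PyTorch"]
speedups = {name: baseline / minutes for name, minutes in runtimes.items()}

for name, factor in speedups.items():
    print(f"{name}: {factor:.2f}x")
```

The DeepSpeed run comes out at a little over 7x the baseline, which matches the headline figure at the top of the article.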
