Deep learning owes much of its success to its ability to solve large-scale non-convex optimization problems with relative ease. Although non-convex optimization is NP-hard in general, simple algorithms, usually variants of stochastic gradient descent (SGD), have proven surprisingly effective at fitting large neural networks in practice.

In the paper "Git Re-Basin: Merging Models modulo Permutation Symmetries", researchers from the University of Washington study the unreasonable effectiveness of SGD on the high-dimensional non-convex optimization problems of deep learning. They were motivated by three questions:

1. Why does SGD perform so well at optimizing the high-dimensional non-convex loss landscapes of deep learning, while its robustness drops significantly in other non-convex optimization settings, such as policy learning, trajectory optimization, and recommender systems?

2. Where are the local minima? Why does the loss decrease smoothly and monotonically when linearly interpolating between the initial weights and the final trained weights?

3. Why do two independently trained models, with different random initializations and data batching orders, achieve nearly identical performance? Moreover, why do their training loss curves look so similar?

Paper: https://arxiv.org/pdf/2209.04836.pdf

The paper argues that model training exhibits some kind of invariance, which is why different training runs end up with nearly identical performance.

Where does this invariance come from? Brea et al. (2019) noted that the hidden units of a neural network have permutation symmetry. Simply put, we can swap any two units in a hidden layer, together with their incoming and outgoing weights, and the function the network computes stays exactly the same. Entezari et al. (2021) conjectured that these permutation symmetries might allow us to linearly connect points in weight space without increasing the loss.
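The permutation symmetry is easy to check numerically. The sketch below uses a toy one-hidden-layer MLP written in plain Python; the layer sizes, random seed, and the particular permutation are arbitrary choices for illustration, not anything taken from the paper. It reorders the hidden units by permuting the rows of the first weight matrix and its bias together with the matching columns of the second weight matrix: the weights change, but the outputs agree up to floating-point rounding.

```python
import random


def relu(v):
    return [max(0.0, x) for x in v]


def mlp_forward(params, x):
    """One-hidden-layer MLP: y = W2 @ relu(W1 @ x + b1) + b2 (plain lists)."""
    hidden = relu([sum(w * xi for w, xi in zip(row, x)) + b
                   for row, b in zip(params["W1"], params["b1"])])
    return [sum(w * hi for w, hi in zip(row, hidden)) + b
            for row, b in zip(params["W2"], params["b2"])]


def permute_hidden(params, perm):
    """Reorder hidden units so that new unit i is old unit perm[i].

    Rows of W1 and entries of b1 (incoming side) and columns of W2
    (outgoing side) are permuted together; b2 is unaffected.
    """
    return {
        "W1": [params["W1"][p] for p in perm],
        "b1": [params["b1"][p] for p in perm],
        "W2": [[row[p] for p in perm] for row in params["W2"]],
        "b2": list(params["b2"]),
    }


random.seed(0)
d, h, o = 4, 5, 2  # toy input, hidden and output sizes
params = {
    "W1": [[random.gauss(0, 1) for _ in range(d)] for _ in range(h)],
    "b1": [random.gauss(0, 1) for _ in range(h)],
    "W2": [[random.gauss(0, 1) for _ in range(h)] for _ in range(o)],
    "b2": [random.gauss(0, 1) for _ in range(o)],
}
permuted = permute_hidden(params, [3, 0, 4, 1, 2])

x = [random.gauss(0, 1) for _ in range(d)]
y1, y2 = mlp_forward(params, x), mlp_forward(permuted, x)
print(max(abs(a - b) for a, b in zip(y1, y2)))  # ~0: same function, different weights
```

In a deeper network the same bookkeeping applies layer by layer: permuting the units of one hidden layer touches the rows of that layer's weight matrix and bias and the columns of the next layer's weight matrix.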

The following example, given by one of the paper's authors, illustrates the main idea.

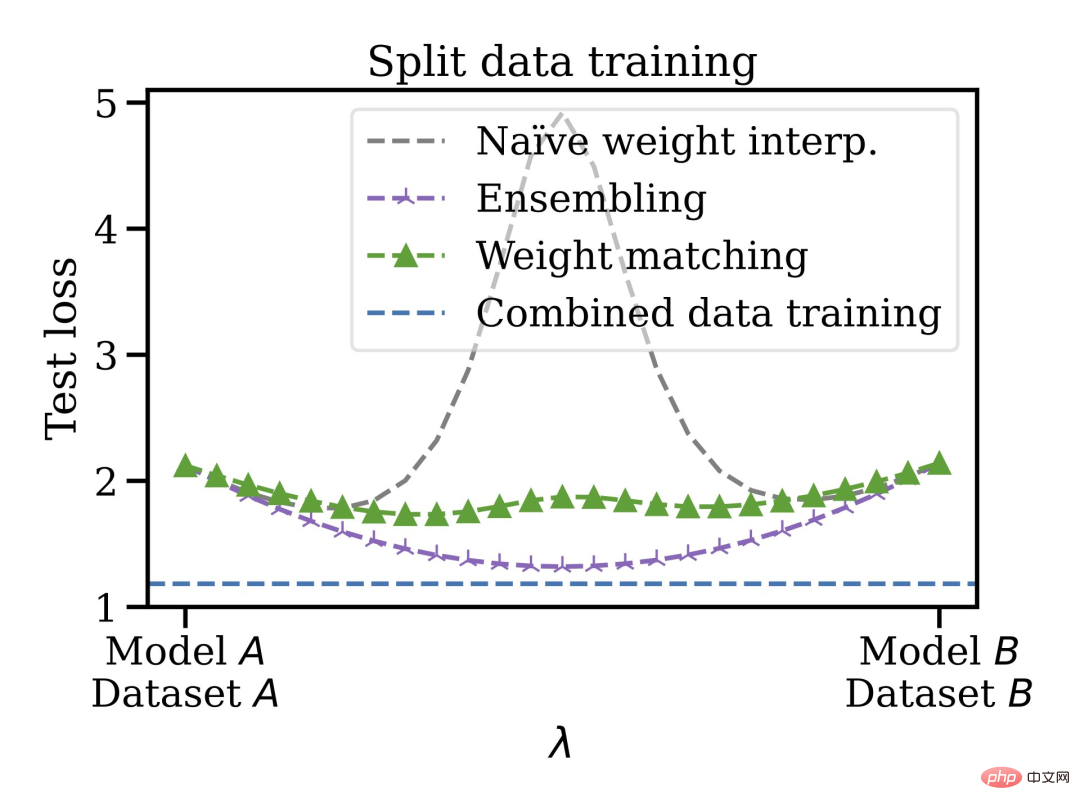

Suppose you trained model A and a friend trained model B, possibly on different training data. That doesn't matter: with Git Re-Basin, the method proposed in the paper, you can merge models A and B in weight space without hurting the loss.

The authors state that Git Re-Basin can be applied to any neural network (NN), and they demonstrate for the first time that a zero-barrier linear connection is possible between two independently trained models (ResNets) without any pre-training.
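To make "zero-barrier" concrete: one common way to quantify a loss barrier (an assumption here; the paper's exact normalization may differ) is to walk the straight line between the two weight vectors and record the largest amount by which the loss rises above the straight line joining the two endpoint losses. The sketch below treats flat Python lists as weight vectors, evaluates the path on a grid of 11 interpolation points (so it only approximates the maximum), and uses two toy loss functions, `bowl` and `double_well`, invented purely for illustration.

```python
def interpolate(theta_a, theta_b, lam):
    """The point (1 - lam) * theta_a + lam * theta_b on the segment between two weight vectors."""
    return [(1.0 - lam) * a + lam * b for a, b in zip(theta_a, theta_b)]


def loss_barrier(loss_fn, theta_a, theta_b, steps=11):
    """Max over a lam grid of loss(interpolated weights) minus the straight line
    between the two endpoint losses. A value near 0 means 'zero barrier'."""
    loss_a, loss_b = loss_fn(theta_a), loss_fn(theta_b)
    barrier = float("-inf")
    for i in range(steps):
        lam = i / (steps - 1)
        chord = (1.0 - lam) * loss_a + lam * loss_b
        barrier = max(barrier, loss_fn(interpolate(theta_a, theta_b, lam)) - chord)
    return barrier


def bowl(theta):  # convex toy loss: a single basin
    return sum(t * t for t in theta)


def double_well(theta):  # non-convex toy loss: minima at -1 and +1
    return (theta[0] ** 2 - 1.0) ** 2


print(loss_barrier(bowl, [1.0], [-1.0]))         # 0.0: no bump along the path
print(loss_barrier(double_well, [-1.0], [1.0]))  # 1.0: the hump at theta = 0
```

Two solutions in separate basins, like the double-well minima, show a positive barrier; Git Re-Basin's claim is that after permuting one network's units, two independently trained ResNets behave more like the single-basin case.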

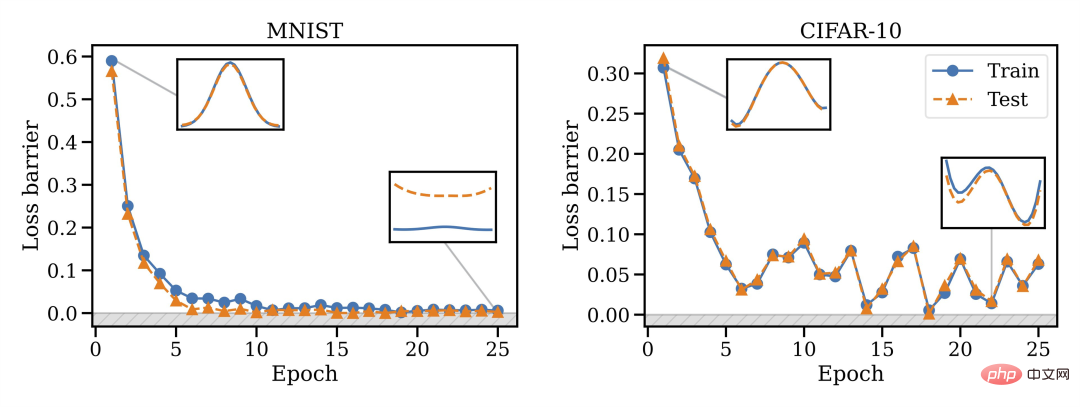

They found that mergeability is a property of SGD training: merging does not work at initialization, but a phase transition occurs, so merging becomes possible as training progresses.

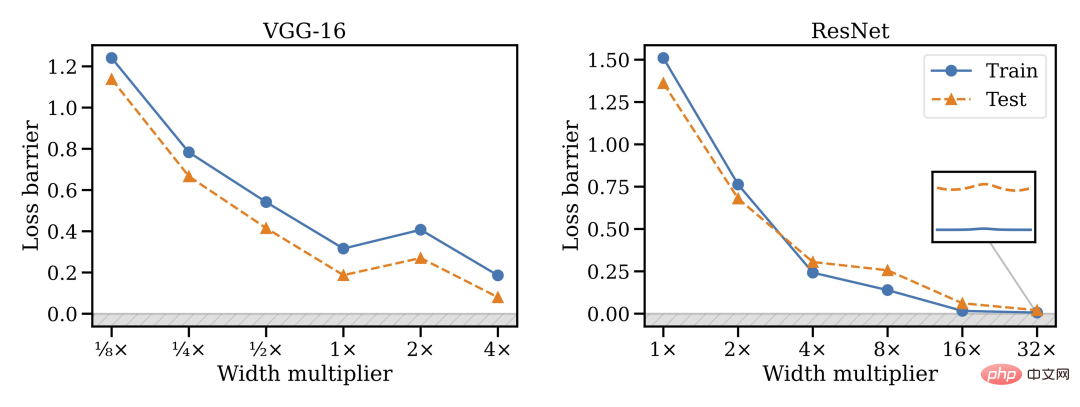

They also found that mergeability is closely tied to model width: wider is better.

Not all architectures merge equally well, either: VGG appears to be harder to merge than ResNets.

The merging method has another advantage: you can train models on disjoint and biased datasets and then merge them in weight space. For example, suppose you have some data in the US and some in the EU, and for some reason the two cannot be pooled. You can train separate models first, then merge their weights, and the merged model generalizes to the combined dataset.
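Once model B's hidden units have been permuted to line up with model A's, the merge itself can be as simple as an element-wise weighted average of corresponding parameters. The sketch below assumes parameters stored as dicts of nested Python lists; `merge_params`, `us_model`, and `eu_model` are hypothetical names, and the default `lam = 0.5` (a plain midpoint average) is an illustrative choice rather than the paper's prescription.

```python
def merge_params(params_a, params_b, lam=0.5):
    """Return (1 - lam) * A + lam * B, applied element-wise to nested lists of floats.

    Assumes params_b was already permuted into params_a's unit ordering;
    averaging unaligned weights generally does not preserve the loss.
    """
    def mix(a, b):
        if isinstance(a, list):
            return [mix(x, y) for x, y in zip(a, b)]
        return (1.0 - lam) * a + lam * b

    return {name: mix(params_a[name], params_b[name]) for name in params_a}


# Hypothetical toy weights standing in for a US-trained and an EU-trained model
us_model = {"W": [[1.0, 2.0]], "b": [0.0]}
eu_model = {"W": [[3.0, 4.0]], "b": [2.0]}
merged = merge_params(us_model, eu_model)
print(merged)  # {'W': [[2.0, 3.0]], 'b': [1.0]}
```

Only the weights cross the border in this workflow; the raw US and EU data never need to be mixed.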

Trained models can therefore be combined without pre-training or fine-tuning. The author says he is interested in where linear mode connectivity and model patching go next, with possible applications in federated learning, distributed training, and deep learning optimization.

Finally, the authors note that the weight matching algorithm in Section 3.2 takes only about 10 seconds to run, which saves a great deal of time. Section 3 of the paper describes three methods for matching the units of model A with those of model B; readers who want the details of the matching algorithms can consult the original paper.
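For a single hidden layer, the idea behind weight matching can be sketched as a linear assignment problem: pair each hidden unit of model A with the unit of model B whose weights overlap with it most (largest inner product), with every unit used exactly once. This is a simplified illustration, not the paper's implementation: it handles a one-hidden-layer MLP only, includes each unit's bias in its vector (an assumption), and solves the assignment by brute force, which is feasible only for a handful of units. In deeper networks the permutations of neighboring layers are coupled, which this toy ignores.

```python
from itertools import permutations


def unit_vector(params, j):
    """Everything attached to hidden unit j: incoming weights, bias, outgoing weights."""
    return params["W1"][j] + [params["b1"][j]] + [row[j] for row in params["W2"]]


def match_hidden_units(params_a, params_b):
    """Find perm maximizing sum_i <unit i of A, unit perm[i] of B>.

    This is a linear assignment problem. Brute force over all h! permutations
    is only feasible for tiny h; practical code would use the Hungarian
    algorithm (e.g. scipy.optimize.linear_sum_assignment) instead.
    """
    h = len(params_a["b1"])
    ua = [unit_vector(params_a, i) for i in range(h)]
    ub = [unit_vector(params_b, j) for j in range(h)]
    score = [[sum(x * y for x, y in zip(ua[i], ub[j])) for j in range(h)] for i in range(h)]
    best = max(permutations(range(h)), key=lambda p: sum(score[i][p[i]] for i in range(h)))
    return list(best)


def permute_hidden(params, perm):
    """New hidden unit i is old unit perm[i] (W1 rows, b1 entries, W2 columns)."""
    return {
        "W1": [params["W1"][p] for p in perm],
        "b1": [params["b1"][p] for p in perm],
        "W2": [[row[p] for p in perm] for row in params["W2"]],
        "b2": list(params["b2"]),
    }


# Toy check: model B is model A with its hidden units shuffled.
model_a = {
    "W1": [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]],
    "b1": [0.5, -0.5, 0.0],
    "W2": [[1.0, -1.0, 2.0]],
    "b2": [0.1],
}
model_b = permute_hidden(model_a, [2, 0, 1])
perm = match_hidden_units(model_a, model_b)
aligned = permute_hidden(model_b, perm)
print(perm, aligned == model_a)  # [1, 2, 0] True
```

With real, independently trained models the match is never exact; the hope is that the aligned model B lands in the same basin as model A, so that averaging the two (or interpolating between them) keeps the loss low.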

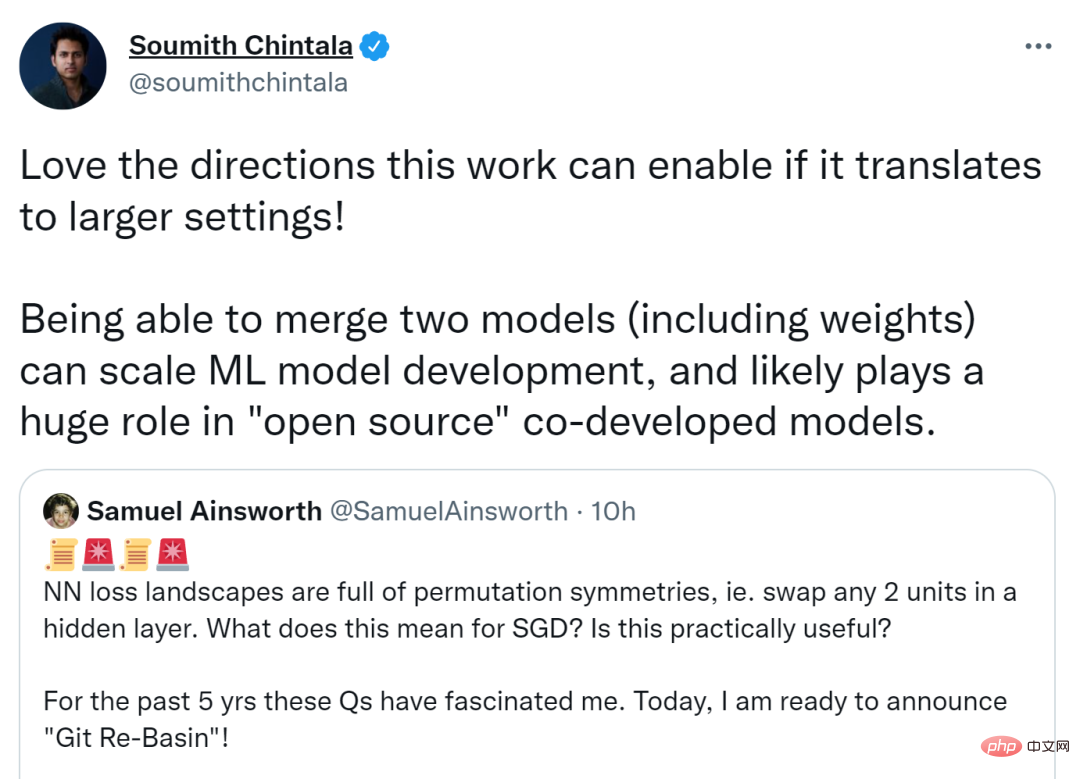

The paper sparked lively discussion on Twitter. Soumith Chintala, co-founder of PyTorch, said that if this research can be scaled up to larger settings, the directions it opens up would be very promising: merging two models (including their weights) could broaden how ML models are developed and might play a huge role in the open-source co-development of models.

Others suggested that if permutation invariance captures most of these equivalences so efficiently, it could inspire theoretical research on neural networks.

The paper's first author, Dr. Samuel Ainsworth of the University of Washington, also answered some questions from Twitter users.

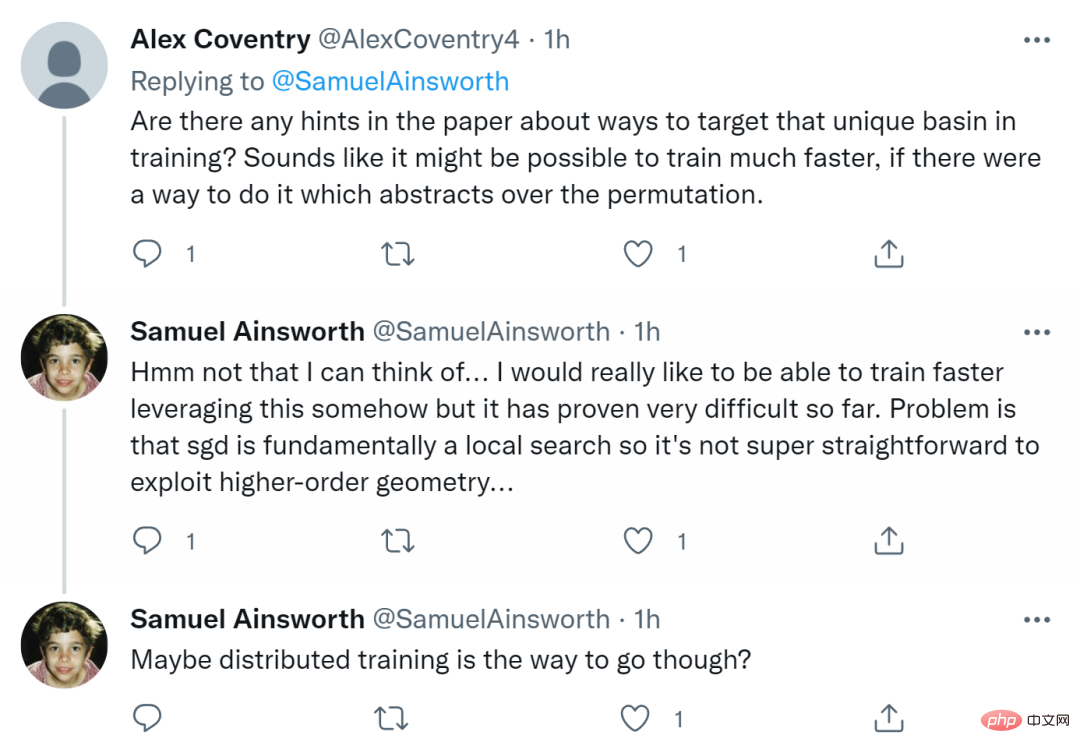

One user asked: "Does the paper offer any hints on targeting a unique basin during training? If there were a way to abstract away the permutations, training might be much faster."

Ainsworth replied that he had not thought about this. He would very much like to find some way to train faster, but so far that has proven very difficult. The problem is that SGD is essentially a local search, so exploiting higher-order geometry is not easy. Perhaps distributed training is the way forward.

Others asked whether the method applies to RNNs and Transformers. Ainsworth said it should work in principle, but he has not experimented with it yet. Time will tell.

Finally, someone asked: "This seems very important for making distributed training a reality. Don't DDPMs (denoising diffusion probabilistic models) use ResNet residual blocks?"

Ainsworth replied that although he is not very familiar with DDPMs himself, applying the method to distributed training would be very exciting.
