LLMs are really not good at time series prediction. They don't even use their reasoning ability.

Can language models really be used for time series prediction? According to Betteridge's Law of Headlines (any news headline ending with a question mark can be answered with "no"), the answer should be no. That seems to be the case here: powerful as LLMs are, they do not handle time series data well.

A time series, as the name suggests, is a sequence of data points arranged in the order in which they occurred.

Time series analysis is critical in many fields, including disease spread prediction, retail analytics, healthcare, and finance. Many researchers have recently been studying how to use large language models (LLMs) to classify, predict, and detect anomalies in time series. These papers assume that language models, which are good at handling sequential dependencies in text, can also generalize to sequential dependencies in time series data. The assumption is not surprising: language models are currently the most popular models in machine learning.

So, how much do language models actually help with traditional time series tasks?

Recently, a team from the University of Virginia and the University of Washington tried to answer this question and arrived at a simple but important claim: for time series prediction tasks, common methods that use language models perform close to or worse than basic ablation methods, yet they require orders of magnitude more computation.

Paper title: Are Language Models Actually Useful for Time Series Forecasting?

Paper address: https://arxiv.org/pdf/2406.16964

The team reached these findings through a large number of ablation studies, which revealed a "worrying trend" in current time series forecasting research.

But the team also said: "Our goal is not to imply that language models can never be used for time series." In fact, some recent studies have shown good potential in the interaction between language and time series for tasks such as time series reasoning and social understanding.

Instead, their goal is to highlight this surprising finding: for existing time series tasks, existing methods make little use of the innate reasoning capabilities of pre-trained language models.

Experimental setup

The team took three state-of-the-art time series prediction methods and proposed three ablations of the LLM component: w/o LLM, LLM2Attn, and LLM2Trsf.

To evaluate the effectiveness of LLM on time series forecasting tasks, they tested these methods on 8 standard datasets.

Reference Methods for Language Models and Time Series

They experimented with three recent methods that use LLMs for time series forecasting; see Table 2. These methods use GPT-2 or LLaMA as the base model, with different alignment and fine-tuning strategies.

OneFitsAll: The OneFitsAll method (sometimes also called GPT4TS) first applies instance normalization and patching to the input time series, then feeds the result to a linear layer to obtain the input representation for the language model. During training, the multi-head attention and feed-forward layers of the language model are frozen, while the position embeddings and layer normalization are optimized. A final layer converts the last hidden state of the language model into the prediction.

Time-LLM: When using Time-LLM, the input time series is tokenized by the patching technique, and multi-head attention aligns it with the low-dimensional representation of the word embedding. The output of this alignment process is then fed to a frozen pre-trained language model along with an embedding of descriptive statistical features. The output representation of this language model is then flattened and passed through a linear layer, resulting in predictions.

LLaTA: LLaTA embeds the input time series by treating each channel as a token. One half of the architecture is the "text branch", which uses cross-attention to align the time series representation with the low-dimensional representation of the language model's word embeddings. This representation is then passed to a frozen pre-trained language model, resulting in a "textual prediction". At the same time, the "temporal branch" of the architecture learns a low-rank adapter for the pre-trained language model based on the input time series, thereby obtaining a "temporal prediction" that is used for inference. The model contains an additional loss term that takes into account the similarity between these representations.
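The instance normalization and patching steps mentioned above for OneFitsAll (patching is also used by Time-LLM) can be illustrated with a short sketch. This is a minimal illustration in plain Python, not code from any of the three methods: the function names, the `eps` constant, and the patch length and stride are assumptions chosen for the example.

```python
import statistics

def instance_normalize(series, eps=1e-5):
    """Rescale one series to zero mean and roughly unit variance."""
    mean = statistics.fmean(series)
    std = statistics.pstdev(series)
    return [(x - mean) / (std + eps) for x in series]

def make_patches(series, patch_len, stride):
    """Slice a series into fixed-length, possibly overlapping windows."""
    last_start = len(series) - patch_len
    return [series[i:i + patch_len] for i in range(0, last_start + 1, stride)]

series = [float(x) for x in range(1, 13)]   # toy input: 1.0 .. 12.0
normalized = instance_normalize(series)
patches = make_patches(normalized, patch_len=4, stride=2)  # hypothetical sizes
print(len(patches), len(patches[0]))        # 5 patches of 4 time steps each
```

Each patch then plays the role of one input token; in the methods above a linear layer would project it to the model dimension.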

Ablation methods proposed by the team

For LLM-based predictors, in order to isolate the impact of LLM, the team proposed three ablation methods: removing the LLM component or replacing it with a simple module.

Specifically, for each of the above three methods, they made the following three modifications:

w/o LLM, see Figure 1b. Remove the language model completely and pass the input tokens directly to the final layer of the reference method.

LLM2Attn, see Figure 1c. Replace the language model with a single randomly initialized multi-head attention layer.

LLM2Trsf, see Figure 1d. Replace the language model with a single randomly initialized Transformer module.

In these ablations, the rest of the predictor is kept unchanged (and trainable). For example, as shown in Figure 1b, after the LLM is removed, the input encoding is passed directly to the output mapping. As shown in Figures 1c and 1d, when the LLM is replaced with attention or a Transformer, the replacement is trained together with the remaining structure of the original method.
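To make the ablations concrete, the sketch below wires a forecaster as encoder output, swappable backbone, and linear output head. It is an illustrative forward pass only (no training) in plain Python. All names, sizes, and the initialization scale are assumptions, and the attention stand-in is single-head for brevity, whereas LLM2Attn as described uses multi-head attention. A Transformer block for LLM2Trsf would additionally wrap the attention with residual connections, normalization, and a feed-forward sublayer, which is omitted here.

```python
import math
import random

def identity(tokens):
    """'w/o LLM': pass the encoded tokens straight to the output head."""
    return tokens

def random_matrix(rows, cols, rng):
    return [[rng.gauss(0.0, 0.1) for _ in range(cols)] for _ in range(rows)]

def matmul(a, b):
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]

def make_single_head_attention(dim, rng):
    """Simplified LLM2Attn stand-in: one randomly initialized attention layer."""
    wq, wk, wv = (random_matrix(dim, dim, rng) for _ in range(3))

    def attention(tokens):
        q, k, v = matmul(tokens, wq), matmul(tokens, wk), matmul(tokens, wv)
        out = []
        for qi in q:
            scores = [sum(a * b for a, b in zip(qi, kj)) / math.sqrt(dim) for kj in k]
            peak = max(scores)                      # subtract max for a stable softmax
            weights = [math.exp(s - peak) for s in scores]
            total = sum(weights)
            out.append([sum(w / total * vj[d] for w, vj in zip(weights, v))
                        for d in range(dim)])
        return out

    return attention

def forecast(tokens, backbone, head):
    """Encoded tokens -> swappable backbone -> flatten -> linear output head."""
    hidden = backbone(tokens)
    flat = [[x for tok in hidden for x in tok]]
    return matmul(flat, head)[0]

rng = random.Random(0)
dim, n_tokens, horizon = 4, 3, 2                    # hypothetical toy sizes
tokens = random_matrix(n_tokens, dim, rng)          # stands in for encoded patches
head = random_matrix(n_tokens * dim, horizon, rng)

pred_wo_llm = forecast(tokens, identity, head)
pred_attn = forecast(tokens, make_single_head_attention(dim, rng), head)
print(len(pred_wo_llm), len(pred_attn))
```

The point of the design is that only the `backbone` argument changes between variants, so any difference in accuracy can be attributed to the component that replaced the LLM.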

Datasets and Evaluation Metrics

Benchmark datasets. The evaluation uses the following real-world datasets: ETT (which contains 4 subsets: ETTm1, ETTm2, ETTh1, ETTh2), Illness, Weather, Traffic, and Electricity. Table 1 gives the statistics of these datasets. Exchange Rate, Covid Deaths, Taxi (30 min), NN5 (Daily), and FRED-MD are also included.

Evaluation metrics. The metrics reported in this study are the mean absolute error (MAE) and mean squared error (MSE) between the predicted and true time series values.
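For reference, the two metrics can be computed as below. This is a small sketch with made-up values; the function names and the toy numbers are illustrative and do not come from the paper.

```python
def mae(y_true, y_pred):
    """Mean absolute error: average of |true - predicted|."""
    return sum(abs(t - p) for t, p in zip(y_true, y_pred)) / len(y_true)

def mse(y_true, y_pred):
    """Mean squared error: average of (true - predicted) squared."""
    return sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true)

y_true = [1.0, 2.0, 3.0, 4.0]
y_pred = [1.5, 2.0, 2.0, 6.0]
print(mae(y_true, y_pred))  # 0.875
print(mse(y_true, y_pred))  # 1.3125
```

MSE penalizes the single large miss (4.0 vs 6.0) much more heavily than MAE does, which is why the two are usually reported together.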

Results

Specifically, the team explored the following research questions (RQ):

(RQ1) Can pre-trained language models help improve prediction performance?

(RQ2) Are LLM-based methods worth the computational cost they consume?

(RQ3) Does language model pre-training help the performance of prediction tasks?

(RQ4) Can LLMs characterize sequential dependencies in time series?

(RQ5) Do LLMs help with few-shot learning?

(RQ6) Where does the performance come from?

Do pre-trained language models help improve prediction performance? (RQ1)

Experimental results show that pre-trained LLMs are not yet very useful for time series forecasting tasks.

Overall, as shown in Table 3, across 13 datasets (the 8 benchmarks plus the 5 additional ones) and 2 metrics, the ablations outperform Time-LLM in 26/26 cases, LLaTA in 22/26 cases, and OneFitsAll in 19/26 cases.

In conclusion, it is difficult to say that LLM can be effectively used for time series forecasting.

Are LLM-based methods worth the computational cost they consume? (RQ2)

Here, the computational cost of these methods is weighed against their nominal performance. The language models in the reference methods use hundreds of millions or even billions of parameters to perform time series prediction. Even when these parameters are frozen, they still incur significant computational overhead during training and inference.

For example, Time-LLM has 6642 M parameters and takes 3003 minutes to complete training on the Weather data set, while the ablation method only has 0.245 M parameters and the average training time is only 2.17 minutes. Table 4 gives information on training other methods on the ETTh1 and Weather datasets.
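The gap implied by these figures can be worked out directly. The snippet below only divides the numbers quoted above; the variable names are illustrative.

```python
import math

llm_params_m, ablation_params_m = 6642.0, 0.245   # millions of parameters
llm_minutes, ablation_minutes = 3003.0, 2.17      # training time, in minutes

param_ratio = llm_params_m / ablation_params_m
time_ratio = llm_minutes / ablation_minutes

print(f"{param_ratio:,.0f}x parameters, {time_ratio:,.0f}x training time")
# 27,110x parameters, 1,384x training time
print(f"{math.log10(param_ratio):.1f} and {math.log10(time_ratio):.1f} orders of magnitude")
```

On these numbers the LLM-based method is roughly four orders of magnitude larger and three orders of magnitude slower to train than the ablation.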

As for inference time, the time per example is estimated by dividing by the maximum batch size. On average, Time-LLM, OneFitsAll, and LLaTA take 28.2, 2.3, and 1.2 times more inference time than the modified models.

Figure 3 gives some examples where the green markers (ablation methods) are generally lower than the red markers (LLM) and concentrated on the left side, which illustrates that they are less computationally expensive but have better prediction performance.

In short, for time series prediction tasks, the computational cost of LLMs does not bring a corresponding improvement in performance.

Does language model pre-training help the performance of prediction tasks? (RQ3)

The evaluation results show that, for time series forecasting tasks, pre-training on large datasets is not really necessary. To test whether the knowledge learned during pre-training brings a meaningful improvement in prediction performance, the team applied different combinations of pre-training and fine-tuning to LLaTA on time series data.

Pre-training + fine-tuning (Pre+FT): This is the original method, which is to fine-tune a pre-trained language model on time series data. For LLaTA here, the approach is to freeze the base language model and learn a low-rank adapter (LoRA).

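Random initialization + fine-tuning (woPre+FT): Does the textual knowledge obtained from pre-training help time series forecasting? Here, the weights of the language model are randomly initialized (which erases the effect of pre-training), and the LLM is then trained from scratch on the fine-tuning dataset.

Pre-training + no fine-tuning (Pre+woFT): How much does fine-tuning on time series data improve prediction performance? Here, the language model is frozen and no LoRA is learned. This reflects how the language model itself performs on time series.

Random initialization + no fine-tuning (woPre+woFT): Clearly, this amounts to randomly projecting the input time series to a prediction. The result serves as a baseline for comparison with the other methods.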

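The overall results are shown in Table 5. Across the 8 datasets, by the MAE and MSE metrics, "pre-training + fine-tuning" performed best 3 times, while "random initialization + fine-tuning" performed best 8 times. This shows that language knowledge is of limited help for time series forecasting. However, "pre-training + no fine-tuning" and the baseline "random initialization + no fine-tuning" were best 5 and 0 times respectively, which shows that language knowledge does not help much with the fine-tuning process either.

In short, the textual knowledge obtained from pre-training is of limited help for time series forecasting.

Can LLMs characterize sequential dependencies in time series? (RQ4)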

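Most LLM-based time series forecasting methods fine-tune the positional encodings, which helps the model understand the position of time steps in the sequence. The team expected that, for a time series model with good positional representations, shuffling the input positions would cause prediction performance to drop sharply. They experimented with three ways of perturbing the time series data: randomly shuffling the entire sequence (sf-all), randomly shuffling only the first half of the sequence (sf-half), and swapping the first and second halves of the sequence (ex-half). The results are shown in Table 6.

Input shuffling affects the LLM-based methods and their ablations to about the same degree. This suggests that LLMs have no outstanding ability to represent sequential dependencies in time series.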
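The three perturbations (sf-all, sf-half, ex-half) can be sketched as follows. This is an illustration in plain Python that assumes a series is a flat list of time steps; the function names mirror the labels above, but the code is not the authors' implementation.

```python
import random

def sf_all(series, rng):
    """Randomly shuffle the whole sequence."""
    out = list(series)
    rng.shuffle(out)
    return out

def sf_half(series, rng):
    """Randomly shuffle only the first half; keep the second half in order."""
    mid = len(series) // 2
    first = list(series[:mid])
    rng.shuffle(first)
    return first + list(series[mid:])

def ex_half(series):
    """Swap the first and second halves without reordering inside them."""
    mid = len(series) // 2
    return list(series[mid:]) + list(series[:mid])

rng = random.Random(0)          # fixed seed so the toy run is repeatable
series = list(range(8))
print(sf_all(series, rng))
print(sf_half(series, rng))
print(ex_half(series))          # [4, 5, 6, 7, 0, 1, 2, 3]
```

A model that truly relies on temporal order should degrade under all three, and most under `sf_all`; comparing the size of that drop between an LLM-based model and its ablation is the test described above.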

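Do LLMs help with few-shot learning? (RQ5)

The evaluation results show that LLMs add little in few-shot learning settings.

For this evaluation, the team took 10% of each dataset and retrained the models and their ablations. Specifically, the model evaluated here is LLaMA (Time-LLM). The results are shown in Table 7.

As can be seen, performance with and without the LLM is about the same: each performs better in 8 cases. The team also ran a similar experiment with LLaTA, a GPT-2-based method. The results are shown in Table 8, where the ablations even outperform the LLM in the few-shot setting.

Where does the performance come from? (RQ6)

This section evaluates the encoding techniques commonly used in LLM time series models. It turns out that combining patching with a single attention layer is a simple yet effective choice.

The earlier results showed that simple ablations of LLM-based methods do not degrade their performance. To understand why, the team studied some encoding techniques commonly used in LLM time series tasks, such as patching and decomposition. A basic Transformer module can also be used to assist encoding.

They found that a structure combining patching and attention outperforms most other encoding methods on small datasets (fewer than 1 million timestamps) and is even comparable to the LLM methods.

Its detailed structure is shown in Figure 4: instance normalization is applied to the time series, followed by patching and projection. A single attention layer is then applied across the patches for feature learning. For larger datasets such as Traffic (about 15 million) and Electricity (about 8 million), encoding with a single-layer linear model that uses a basic Transformer performs better. In these methods, a final single linear layer projects the time series embedding to produce the prediction.

In short, patching is very important for encoding. In addition, basic attention and Transformer modules also contribute effectively to encoding.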

The above is the detailed content of LLM is really not good for time series prediction. It doesn't even use its reasoning ability.. For more information, please follow other related articles on the PHP Chinese website!

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

AI Hentai Generator

Generate AI Hentai for free.

Hot Article

Hot Tools

Notepad++7.3.1

Easy-to-use and free code editor

SublimeText3 Chinese version

Chinese version, very easy to use

Zend Studio 13.0.1

Powerful PHP integrated development environment

Dreamweaver CS6

Visual web development tools

SublimeText3 Mac version

God-level code editing software (SublimeText3)

Hot Topics

1378

1378

52

52

The author of ControlNet has another hit! The whole process of generating a painting from a picture, earning 1.4k stars in two days

Jul 17, 2024 am 01:56 AM

The author of ControlNet has another hit! The whole process of generating a painting from a picture, earning 1.4k stars in two days

Jul 17, 2024 am 01:56 AM

It is also a Tusheng video, but PaintsUndo has taken a different route. ControlNet author LvminZhang started to live again! This time I aim at the field of painting. The new project PaintsUndo has received 1.4kstar (still rising crazily) not long after it was launched. Project address: https://github.com/lllyasviel/Paints-UNDO Through this project, the user inputs a static image, and PaintsUndo can automatically help you generate a video of the entire painting process, from line draft to finished product. follow. During the drawing process, the line changes are amazing. The final video result is very similar to the original image: Let’s take a look at a complete drawing.

Topping the list of open source AI software engineers, UIUC's agent-less solution easily solves SWE-bench real programming problems

Jul 17, 2024 pm 10:02 PM

Topping the list of open source AI software engineers, UIUC's agent-less solution easily solves SWE-bench real programming problems

Jul 17, 2024 pm 10:02 PM

The AIxiv column is a column where this site publishes academic and technical content. In the past few years, the AIxiv column of this site has received more than 2,000 reports, covering top laboratories from major universities and companies around the world, effectively promoting academic exchanges and dissemination. If you have excellent work that you want to share, please feel free to contribute or contact us for reporting. Submission email: liyazhou@jiqizhixin.com; zhaoyunfeng@jiqizhixin.com The authors of this paper are all from the team of teacher Zhang Lingming at the University of Illinois at Urbana-Champaign (UIUC), including: Steven Code repair; Deng Yinlin, fourth-year doctoral student, researcher

From RLHF to DPO to TDPO, large model alignment algorithms are already 'token-level'

Jun 24, 2024 pm 03:04 PM

From RLHF to DPO to TDPO, large model alignment algorithms are already 'token-level'

Jun 24, 2024 pm 03:04 PM

The AIxiv column is a column where this site publishes academic and technical content. In the past few years, the AIxiv column of this site has received more than 2,000 reports, covering top laboratories from major universities and companies around the world, effectively promoting academic exchanges and dissemination. If you have excellent work that you want to share, please feel free to contribute or contact us for reporting. Submission email: liyazhou@jiqizhixin.com; zhaoyunfeng@jiqizhixin.com In the development process of artificial intelligence, the control and guidance of large language models (LLM) has always been one of the core challenges, aiming to ensure that these models are both powerful and safe serve human society. Early efforts focused on reinforcement learning methods through human feedback (RL

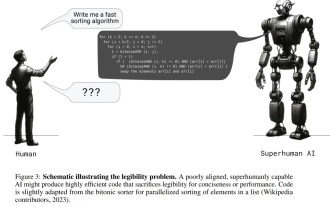

Posthumous work of the OpenAI Super Alignment Team: Two large models play a game, and the output becomes more understandable

Jul 19, 2024 am 01:29 AM

Posthumous work of the OpenAI Super Alignment Team: Two large models play a game, and the output becomes more understandable

Jul 19, 2024 am 01:29 AM

If the answer given by the AI model is incomprehensible at all, would you dare to use it? As machine learning systems are used in more important areas, it becomes increasingly important to demonstrate why we can trust their output, and when not to trust them. One possible way to gain trust in the output of a complex system is to require the system to produce an interpretation of its output that is readable to a human or another trusted system, that is, fully understandable to the point that any possible errors can be found. For example, to build trust in the judicial system, we require courts to provide clear and readable written opinions that explain and support their decisions. For large language models, we can also adopt a similar approach. However, when taking this approach, ensure that the language model generates

arXiv papers can be posted as 'barrage', Stanford alphaXiv discussion platform is online, LeCun likes it

Aug 01, 2024 pm 05:18 PM

arXiv papers can be posted as 'barrage', Stanford alphaXiv discussion platform is online, LeCun likes it

Aug 01, 2024 pm 05:18 PM

cheers! What is it like when a paper discussion is down to words? Recently, students at Stanford University created alphaXiv, an open discussion forum for arXiv papers that allows questions and comments to be posted directly on any arXiv paper. Website link: https://alphaxiv.org/ In fact, there is no need to visit this website specifically. Just change arXiv in any URL to alphaXiv to directly open the corresponding paper on the alphaXiv forum: you can accurately locate the paragraphs in the paper, Sentence: In the discussion area on the right, users can post questions to ask the author about the ideas and details of the paper. For example, they can also comment on the content of the paper, such as: "Given to

Axiomatic training allows LLM to learn causal reasoning: the 67 million parameter model is comparable to the trillion parameter level GPT-4

Jul 17, 2024 am 10:14 AM

Axiomatic training allows LLM to learn causal reasoning: the 67 million parameter model is comparable to the trillion parameter level GPT-4

Jul 17, 2024 am 10:14 AM

Show the causal chain to LLM and it learns the axioms. AI is already helping mathematicians and scientists conduct research. For example, the famous mathematician Terence Tao has repeatedly shared his research and exploration experience with the help of AI tools such as GPT. For AI to compete in these fields, strong and reliable causal reasoning capabilities are essential. The research to be introduced in this article found that a Transformer model trained on the demonstration of the causal transitivity axiom on small graphs can generalize to the transitive axiom on large graphs. In other words, if the Transformer learns to perform simple causal reasoning, it may be used for more complex causal reasoning. The axiomatic training framework proposed by the team is a new paradigm for learning causal reasoning based on passive data, with only demonstrations

A significant breakthrough in the Riemann Hypothesis! Tao Zhexuan strongly recommends new papers from MIT and Oxford, and the 37-year-old Fields Medal winner participated

Aug 05, 2024 pm 03:32 PM

A significant breakthrough in the Riemann Hypothesis! Tao Zhexuan strongly recommends new papers from MIT and Oxford, and the 37-year-old Fields Medal winner participated

Aug 05, 2024 pm 03:32 PM

Recently, the Riemann Hypothesis, known as one of the seven major problems of the millennium, has achieved a new breakthrough. The Riemann Hypothesis is a very important unsolved problem in mathematics, related to the precise properties of the distribution of prime numbers (primes are those numbers that are only divisible by 1 and themselves, and they play a fundamental role in number theory). In today's mathematical literature, there are more than a thousand mathematical propositions based on the establishment of the Riemann Hypothesis (or its generalized form). In other words, once the Riemann Hypothesis and its generalized form are proven, these more than a thousand propositions will be established as theorems, which will have a profound impact on the field of mathematics; and if the Riemann Hypothesis is proven wrong, then among these propositions part of it will also lose its effectiveness. New breakthrough comes from MIT mathematics professor Larry Guth and Oxford University

The first Mamba-based MLLM is here! Model weights, training code, etc. have all been open source

Jul 17, 2024 am 02:46 AM

The first Mamba-based MLLM is here! Model weights, training code, etc. have all been open source

Jul 17, 2024 am 02:46 AM

The AIxiv column is a column where this site publishes academic and technical content. In the past few years, the AIxiv column of this site has received more than 2,000 reports, covering top laboratories from major universities and companies around the world, effectively promoting academic exchanges and dissemination. If you have excellent work that you want to share, please feel free to contribute or contact us for reporting. Submission email: liyazhou@jiqizhixin.com; zhaoyunfeng@jiqizhixin.com. Introduction In recent years, the application of multimodal large language models (MLLM) in various fields has achieved remarkable success. However, as the basic model for many downstream tasks, current MLLM consists of the well-known Transformer network, which